Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI coding tools can speed up code generation 3-5x, but they create PR review bottlenecks: review times run 91% longer because of validation overhead.

- Natural language rules written in plain English automate code-standard enforcement and fix issues proactively instead of only suggesting changes.

- Gitar auto-applies fixes, analyzes CI failures, and consolidates feedback into a single comment, outperforming suggestion engines like CodeRabbit.

- Ten copy-paste rules cover security (secret detection, SQL injection), maintainability (async patterns, dead code), and quality (error handling, tests).

- Teams can implement these rules through a simple 7-step GitHub Actions integration with Gitar, which offers a 14-day free trial.

The Problem: AI Coding’s 2026 PR Bottleneck Drowning Teams

Engineering teams face an unprecedented review bottleneck from AI-generated code. 95% of developers spend at least some effort reviewing, testing, and correcting AI output, with 59% rating that effort as moderate or substantial. Despite a 35% average productivity boost from AI coding tools, teams report stagnant sprint velocities because review work keeps piling up.

This bottleneck stems from the sheer volume of AI-generated code that still needs human validation. The flood of AI-generated PRs creates cascading problems: notification spam from suggestion engines, YAML configuration complexity in CI pipelines, and fixes that developers still have to apply by hand.

These inefficiencies compound when teams pay for tools that do not solve the core problem. Tools like CodeRabbit and Greptile charge $15-30 per developer monthly for suggestions that leave the actual work to humans. When you factor in time spent implementing suggestions and managing CI failures, a 20-developer team can lose $1 million annually in productivity.

Logic and correctness issues are 75% more common in AI-generated PRs. Yet only 48% of developers always check their AI-assisted code before committing. Teams need autonomous enforcement that fixes problems directly instead of more suggestion streams that add to the noise.

The Solution: Natural Language Rules for Autonomous AI Code Reviews

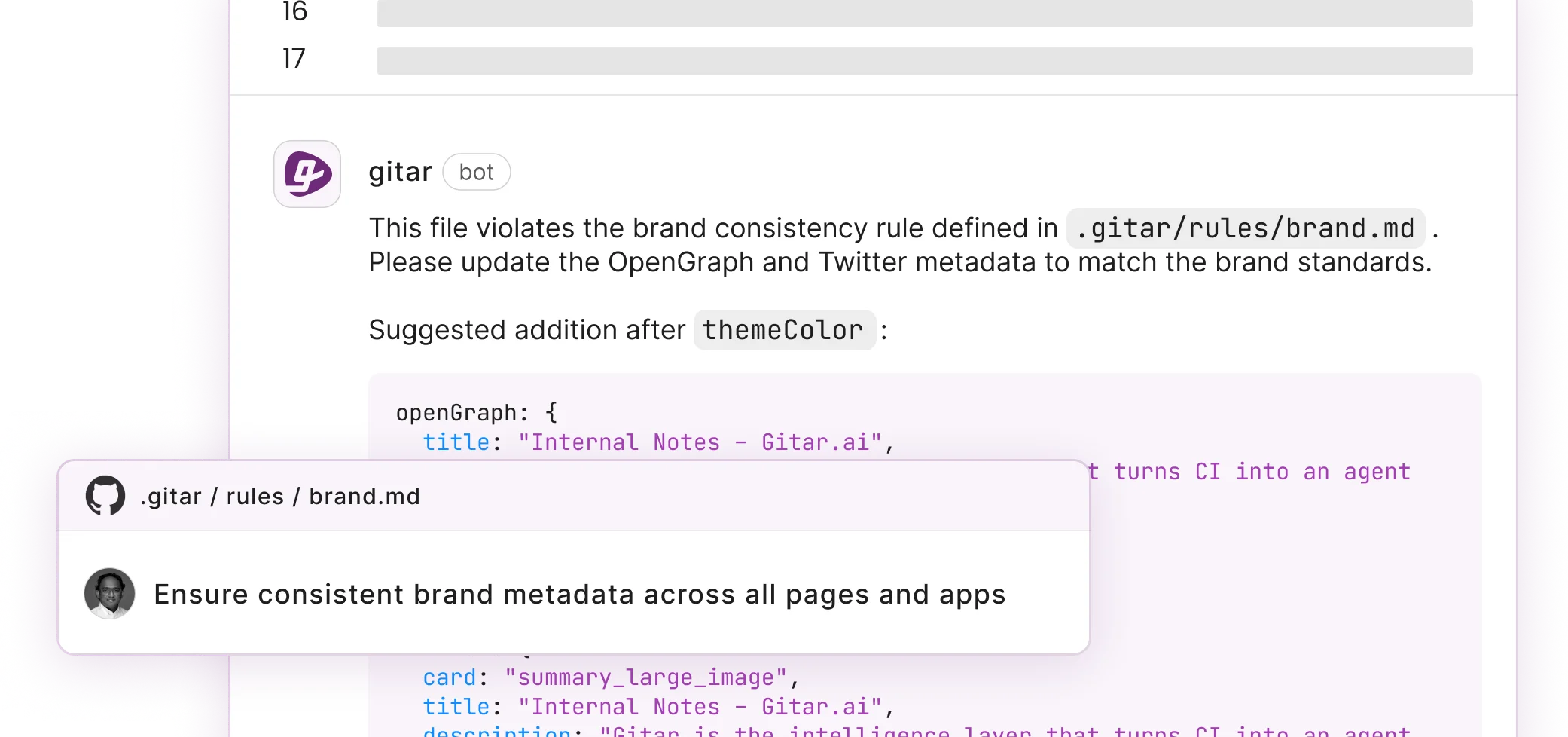

Natural language rules turn code review into proactive automation that enforces standards consistently. Teams replace complex YAML configurations or custom scripts with policies written in plain English Markdown files. On October 2, 2025, Gitar introduced repository rules: natural language Markdown files stored in .gitar/rules that trigger automated actions, such as adding a comment or label when a PR upgrades a package. For full details, see the Gitar documentation.

These rules scale because they stay readable for both humans and AI systems. Engineering leaders can define standards without deep DevOps expertise. Over time, the same rules evolve into richer workflows that cover more of the development lifecycle.

Unlike suggestion engines that stop at comments, natural language rules in Gitar trigger autonomous fixes that are validated in CI before they are committed. The system enforces policies, applies changes, and confirms green builds so developers can focus on higher-level work.

Start your free Gitar trial to see natural language rules enforce standards automatically.

Gitar’s Healing Engine: How It Actually Fixes Code

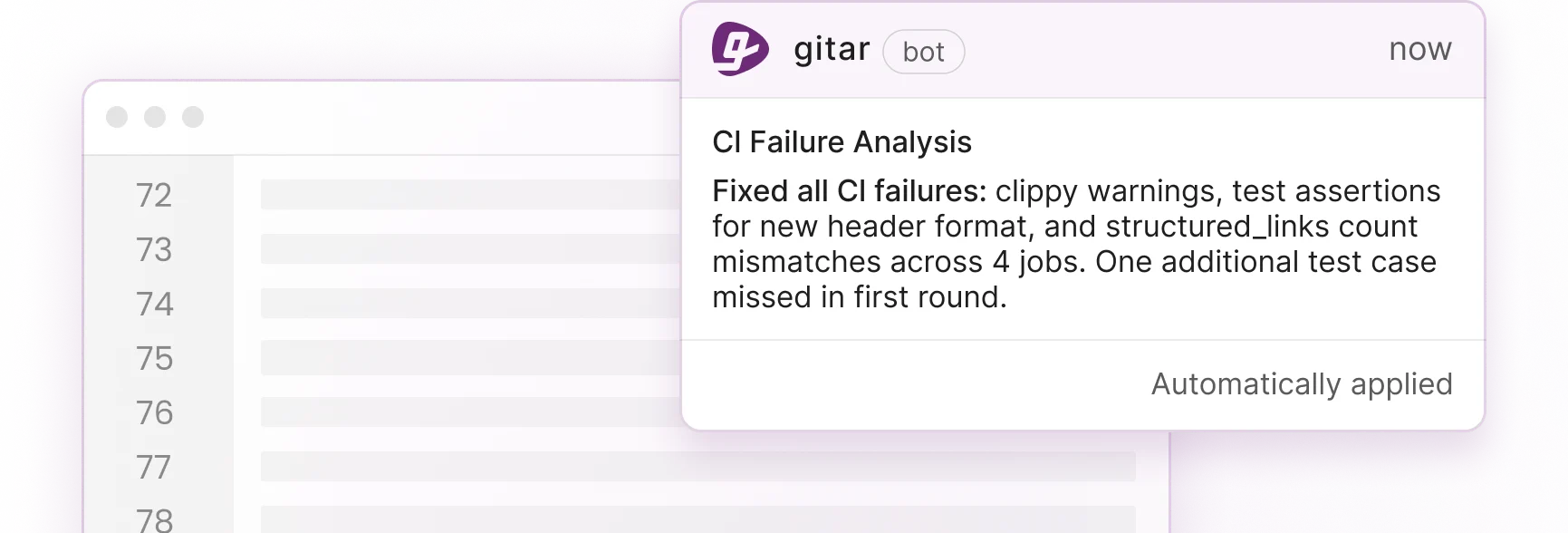

Gitar differentiates itself by applying fixes automatically instead of only suggesting them. On October 2, 2025, the platform added CI failure analysis, which analyzes failures automatically, reports its findings in the dashboard comment, and updates that comment as new commits arrive. For more on CI failure analysis, refer to the Gitar documentation. When CI breaks, Gitar analyzes the root cause, generates a fix with full codebase context, validates it, and commits it automatically.
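The analyze, fix, validate, commit cycle described above can be pictured as a bounded retry loop. This is a minimal sketch, not Gitar's implementation: `analyze`, `propose_fix`, `run_ci`, and `commit` are hypothetical callables standing in for the real components, and the three-attempt limit is an assumed parameter. The key property it illustrates is that nothing is committed unless a CI run on the patch has passed.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class CiResult:
    passed: bool
    log: str

def heal(
    failure_log: str,
    analyze: Callable[[str], str],         # hypothetical: failure log -> root-cause summary
    propose_fix: Callable[[str], str],     # hypothetical: root cause -> patch
    run_ci: Callable[[str], CiResult],     # hypothetical: patch -> CI result
    commit: Callable[[str], None],         # hypothetical: commits a validated patch
    max_attempts: int = 3,                 # assumed retry budget
) -> Optional[str]:
    """Try to turn a red build green; commit only a patch whose CI run passed."""
    log = failure_log
    for _ in range(max_attempts):
        cause = analyze(log)
        patch = propose_fix(cause)
        result = run_ci(patch)
        if result.passed:
            commit(patch)                  # only validated fixes reach the branch
            return patch
        log = result.log                   # feed the new failure back into analysis
    return None                            # give up and leave the failure for a human
```

Returning `None` after the budget is spent keeps the loop from burning CI minutes indefinitely on a failure it cannot fix.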

The following comparison shows how Gitar’s autonomous approach differs from traditional suggestion engines:

| Capability | CodeRabbit/Greptile | Gitar |
| --- | --- | --- |
| PR summaries | Yes | Yes (concise, single comment) |
| Inline suggestions | Yes | Yes, plus auto-apply |
| CI failure analysis/auto-fix | No | Yes (validates green builds) |
| Natural language rules | Limited | Full (.gitar/rules/*.md) |

The platform consolidates all findings, including CI analysis, review feedback, and rule evaluations, into a single dashboard comment that updates in place. This approach eliminates notification spam and keeps attention on the most relevant signals. Teams report that Gitar’s summaries stay more concise than those of competing tools because the system prioritizes signal over noise.
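Updating one comment in place, rather than posting a new comment per finding, comes down to an upsert keyed on a marker. The sketch below models a PR's comments as an in-memory dict from comment id to body; the hidden HTML marker string and the `Finding` fields are assumptions for illustration, not Gitar's actual format. A real integration would do the same lookup and edit through the code host's API.

```python
from collections import defaultdict
from dataclasses import dataclass

MARKER = "<!-- review-dashboard -->"  # hypothetical hidden marker identifying the dashboard comment

@dataclass
class Finding:
    source: str   # e.g. "CI", "Review", "Rules"
    message: str

def render(findings: list[Finding]) -> str:
    """Group findings by source into one comment body."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for f in findings:
        grouped[f.source].append(f.message)
    lines = [MARKER]
    for source in sorted(grouped):
        lines.append(f"**{source}**")
        lines.extend(f"- {msg}" for msg in grouped[source])
    return "\n".join(lines)

def upsert_dashboard(comments: dict[int, str], findings: list[Finding]) -> int:
    """Edit the existing dashboard comment if present, otherwise create it; return its id."""
    body = render(findings)
    for comment_id, text in comments.items():
        if text.startswith(MARKER):
            comments[comment_id] = body   # update in place: no new notification
            return comment_id
    new_id = max(comments, default=0) + 1
    comments[new_id] = body
    return new_id
```

Each run replaces the whole body, so resolved findings disappear instead of lingering in older comments.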

10 Natural Language Rules for AI Code Reviews: Copy-Paste Ready

Teams can drop these production-tested natural language rules directly into the .gitar/rules/ directory. Each rule targets a specific risk area and defines a clear trigger and action.

Security Rules for High-Risk Changes

1. Authentication Code Security Review

```markdown
---
title: "Security Review for Auth Changes"
when: "PRs modifying authentication or encryption code"
actions: "Assign security team and add security-review label"
---
```

This rule routes sensitive code changes to security specialists and flags them for enhanced scrutiny. Gitar carries out the specified actions, such as assigning reviewers and applying the label.

2. Secret Detection and Remediation

```markdown
---
title: "Secret Scanning"
when: "New commits contain potential secrets or API keys"
actions: "Block merge and create security incident ticket"
---
```

This rule prevents accidental secret commits by scanning new commits for patterns such as API keys, tokens, and passwords. When it finds a match, it blocks the merge and opens a security incident ticket so the exposure can be handled immediately.
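A deterministic pre-filter for this rule can be a handful of regular expressions run over the added lines of a diff. The patterns below are illustrative assumptions: the `AKIA` prefix of AWS access key IDs and the `ghp_` prefix of GitHub personal access tokens are well-known formats, while the generic assignment pattern is a rough heuristic that will produce both false positives and false negatives. Production scanners use much larger pattern sets plus entropy checks.

```python
import re

# Illustrative patterns only; production scanners use far larger sets plus entropy checks.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    # Heuristic: a credential-like name assigned a quoted literal of 8+ characters.
    "generic_assignment": re.compile(
        r"(?i)(api[_-]?key|secret|token|password)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}

def scan_diff(diff: str) -> list[tuple[int, str]]:
    """Return (diff line number, pattern name) for each added line that matches."""
    hits = []
    for lineno, line in enumerate(diff.splitlines(), start=1):
        # Inspect only added lines, skipping the '+++ b/file' header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

Scanning only `+` lines keeps removed credentials from re-triggering the rule, although a secret that was already committed still needs rotating.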

3. SQL Injection Prevention

```markdown
---
title: "SQL Injection Check"
when: "Database queries use string concatenation"
actions: "Flag for parameterized query conversion and auto-fix where possible"
---
```

This rule identifies dangerous SQL construction patterns and converts them to parameterized queries when the fix is deterministic.
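The difference between the flagged pattern and its fix is easiest to see side by side. This sketch uses Python's built-in `sqlite3` module, whose `?` placeholders bind values separately from the SQL text; other database drivers use different placeholder styles (for example `%s`). The `users` table is hypothetical.

```python
import sqlite3

def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Flagged: user input is concatenated into the SQL text.
    return conn.execute("SELECT id FROM users WHERE name = '" + name + "'").fetchall()

def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Fixed: the driver binds `name` as a value, never as SQL.
    return conn.execute("SELECT id FROM users WHERE name = ?", (name,)).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(find_user_unsafe(conn, payload))  # [(1,), (2,)]: every row leaks
print(find_user_safe(conn, payload))    # []: the payload is treated as a literal name
```

The conversion is deterministic because the query shape stays the same; only the interpolated values move into the parameter tuple.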

Maintainability Rules for Cleaner Code

4. Async/Await Enforcement

```markdown
---
title: "Modern Async Patterns"
when: "New code uses callbacks instead of async/await"
actions: "Convert to async/await pattern and update tests"
---
```

This rule modernizes asynchronous code patterns automatically, which improves readability and error handling.
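The rule does not name a target language; in JavaScript it would mean replacing Node-style callbacks with promises and async/await. To keep one language across these examples, the sketch shows the same before/after shape in Python, where a callback-based helper becomes a coroutine. `fetch_price` and its price table are hypothetical.

```python
import asyncio
from typing import Callable, Optional

PRICES = {"widget": 4, "gadget": 6}  # hypothetical data source, prices in cents

# Before: the result or error is delivered through a callback.
def fetch_price_cb(item: str, callback: Callable[[Optional[Exception], Optional[int]], None]) -> None:
    if item in PRICES:
        callback(None, PRICES[item])
    else:
        callback(KeyError(item), None)

# After: a coroutine returns the value or raises, so callers use plain try/except.
async def fetch_price(item: str) -> int:
    await asyncio.sleep(0)  # stand-in for real I/O
    if item not in PRICES:
        raise KeyError(item)
    return PRICES[item]

async def total(items: list[str]) -> int:
    # Concurrent lookups compose with gather instead of nested callbacks.
    prices = await asyncio.gather(*(fetch_price(i) for i in items))
    return sum(prices)

print(asyncio.run(total(["widget", "gadget"])))  # 10
```

Errors now propagate as exceptions instead of an `err` argument every callback must remember to check, which is the error-handling improvement the rule describes.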

5. Function Length Limits

```markdown
---
title: "Function Size Control"
when: "Functions exceed 50 lines"
actions: "Suggest extraction opportunities and create refactoring PR"
---
```

This rule supports the single responsibility principle by flagging overly long functions, a common sign that one function is doing several jobs, and proposing smaller functions to extract.
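A deterministic version of the 50-line trigger can be computed from the syntax tree. This sketch uses Python's standard `ast` module (`end_lineno` requires Python 3.8+) and counts physical lines from the `def` line to the last line of the body, including blank lines and comments inside it. That is one of several reasonable definitions of function length, so treat it as an assumption.

```python
import ast

MAX_LINES = 50  # threshold taken from the rule above

def long_functions(source: str, max_lines: int = MAX_LINES) -> list[tuple[str, int]]:
    """Return (function name, line count) for every function longer than max_lines."""
    tree = ast.parse(source)
    offenders = []
    for node in ast.walk(tree):
        # Covers nested functions and methods as well as top-level defs.
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_lines:
                offenders.append((node.name, length))
    return offenders
```

This only detects the trigger; the rule's "create refactoring PR" action is where the agent's judgment about what to extract comes in.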

6. Magic Number Elimination

```markdown
---
title: "Named Constants"
when: "Code contains magic numbers or hardcoded values"
actions: "Extract to named constants with descriptive names"
---
```

This rule identifies magic numbers and hardcoded values and replaces them with descriptively named constants, so the intent behind each value is explicit.
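A before/after pair shows what the extraction looks like. The values (a 14-day trial and a 20% discount) and the function names are hypothetical; behavior is unchanged, only the intent becomes visible.

```python
from datetime import date, timedelta

# Before: the reader must guess what 14 and 0.2 mean.
def trial_end_before(start: date) -> date:
    return start + timedelta(days=14)

def discounted_before(price: float) -> float:
    return price * (1 - 0.2)

# After: named constants state the intent and give each value one place to change.
TRIAL_LENGTH_DAYS = 14       # hypothetical business rule
ANNUAL_PLAN_DISCOUNT = 0.2   # hypothetical business rule

def trial_end(start: date) -> date:
    return start + timedelta(days=TRIAL_LENGTH_DAYS)

def discounted(price: float) -> float:
    return price * (1 - ANNUAL_PLAN_DISCOUNT)
```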

7. Dead Code Removal

```markdown
---
title: "Dead Code Cleanup"
when: "Unused functions, classes, or commented code detected"
actions: "Remove dead code and update documentation"
---
```

This rule removes unused code automatically while version control preserves history for future reference.

Logic and Quality Rules for AI-Generated Code

8. Error Handling Enforcement

```markdown
---
title: "Error Handling Check"
when: "Functions lack proper error handling"
actions: "Add explicit error handling and logging"
---
```

This rule strengthens reliability by detecting unhandled exceptions and adding appropriate try-catch blocks or result types.
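Here is one way "explicit error handling and logging" might look after the rule runs, sketched with Python's standard `logging` module. `load_port`, `ConfigError`, and the JSON config format are hypothetical. The pattern is that each expected failure is caught by type, logged with context, and re-raised as a clear domain error rather than swallowed.

```python
import json
import logging

logger = logging.getLogger(__name__)

class ConfigError(Exception):
    """Raised when the service configuration is missing or unusable."""

def load_port(path: str) -> int:
    # Before the rule: `int(json.load(open(path))["port"])`, where any failure
    # escapes as an unexplained crash and the file is never closed explicitly.
    try:
        with open(path, encoding="utf-8") as f:
            config = json.load(f)
        return int(config["port"])
    except FileNotFoundError:
        logger.error("config file not found: %s", path)
        raise ConfigError(f"missing config file {path}") from None
    except (KeyError, TypeError, ValueError) as exc:  # json.JSONDecodeError is a ValueError
        logger.error("invalid config in %s: %r", path, exc)
        raise ConfigError(f"invalid config in {path}") from exc
```

Chaining with `from exc` keeps the original traceback available for debugging while callers only need to handle `ConfigError`.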

9. Test Coverage for AI Code

```markdown
---
title: "AI Code Test Requirements"
when: "AI-generated code lacks corresponding tests"
actions: "Generate comprehensive test suite covering edge cases"
---
```

This rule addresses the higher logic error rate in AI-generated code by creating test coverage for boundary conditions and failure modes.
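To illustrate the boundary-focused tests this rule asks for, here is a small hypothetical function, `paginate`, with tests aimed at its edges: empty input, an exact multiple of the page size, the last partial page, and invalid arguments. The tests use the standard `unittest` module and can be run with `python -m unittest`.

```python
import unittest

def paginate(items: list, page: int, size: int) -> list:
    """Return the 1-indexed `page` of `items`, `size` items per page."""
    if size <= 0:
        raise ValueError("size must be positive")
    if page < 1:
        raise ValueError("page must be >= 1")
    start = (page - 1) * size
    return items[start:start + size]

class PaginateEdgeCases(unittest.TestCase):
    def test_empty_input(self):
        self.assertEqual(paginate([], 1, 10), [])

    def test_exact_multiple_has_no_extra_page(self):
        self.assertEqual(paginate(list(range(10)), 2, 5), [5, 6, 7, 8, 9])
        self.assertEqual(paginate(list(range(10)), 3, 5), [])

    def test_last_partial_page(self):
        self.assertEqual(paginate(list(range(7)), 2, 5), [5, 6])

    def test_invalid_arguments(self):
        with self.assertRaises(ValueError):
            paginate([1], 0, 5)
        with self.assertRaises(ValueError):
            paginate([1], 1, 0)
```

Off-by-one errors in slicing and page arithmetic are a typical example of the logic bugs that happy-path tests miss.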

10. Documentation Synchronization

```markdown
---
title: "Documentation Updates"
when: "Code changes affect public APIs without documentation updates"
actions: "Update relevant documentation and add usage examples"
---
```

This rule keeps documentation current by detecting API changes and updating the corresponding documentation files.
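The trigger condition, a public API changed with no documentation change, can be approximated from the list of files a PR touches. The globs below (`src/*/api/*.py` and `openapi.yaml` for public API, `docs/*` and `README.md` for documentation) describe a hypothetical repository layout. A natural language rule can reason about the actual diff instead of relying on paths like this.

```python
from fnmatch import fnmatch

# Hypothetical repository layout; adjust the globs to your project.
PUBLIC_API_GLOBS = ["src/*/api/*.py", "openapi.yaml"]
DOC_GLOBS = ["docs/*", "README.md"]

def matches_any(path: str, globs: list[str]) -> bool:
    # Note: fnmatch's `*` also matches `/`, so "docs/*" covers nested doc folders.
    return any(fnmatch(path, g) for g in globs)

def needs_doc_update(changed_files: list[str]) -> bool:
    """True when a public API file changed but no documentation file did."""
    api_changed = any(matches_any(p, PUBLIC_API_GLOBS) for p in changed_files)
    docs_changed = any(matches_any(p, DOC_GLOBS) for p in changed_files)
    return api_changed and not docs_changed
```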

Implementing Rules in CI: 7-Step GitHub Actions + GitLab Tutorial

Teams can integrate these rules into CI with a short setup that starts in GitHub and extends to GitLab.

1. Install Gitar App

Add the Gitar GitHub App or GitLab integration to your repository through your platform’s marketplace.

2. Start 14-Day Trial

Activate your Team Plan trial for full access to auto-fix capabilities and rule execution.

3. Create Rules Directory

Create a .gitar/rules/ directory in your repository root to organize natural language rule files.
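Because rule files are plain Markdown, a team may want a cheap CI lint that catches malformed files before Gitar reads them. This sketch assumes the three-field frontmatter used by the rules in this article (`title`, `when`, and `actions` between `---` lines) and parses it with simple string handling rather than a YAML library. Gitar's actual schema may accept additional fields or stricter syntax, so the required-field set is an assumption.

```python
from pathlib import Path

REQUIRED_FIELDS = {"title", "when", "actions"}  # assumed from the example rules

def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse simple `key: "value"` lines between the opening and closing `---`."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing opening ---")
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")   # split on the first colon only
        if sep:
            fields[key.strip()] = value.strip().strip('"')
    raise ValueError("missing closing ---")

def lint_rules(rules_dir: str = ".gitar/rules") -> list[str]:
    """Return one error message per rule file that is malformed or missing fields."""
    errors = []
    for path in sorted(Path(rules_dir).glob("*.md")):
        try:
            fields = parse_frontmatter(path.read_text(encoding="utf-8"))
        except ValueError as exc:
            errors.append(f"{path.name}: {exc}")
            continue
        missing = REQUIRED_FIELDS - set(fields)
        if missing:
            errors.append(f"{path.name}: missing {', '.join(sorted(missing))}")
    return errors
```

A CI job could fail when `lint_rules()` returns a non-empty list.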

4. Add Your First Rule

Create a simple rule file to test the integration:

```markdown
---
title: "PR Description Enhancement"
when: "PR description is shorter than 50 characters"
actions: "Request more detailed description with context"
---
```
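The condition in this starter rule is simple enough to express deterministically, which gives a baseline for checking that the rule behaves as expected on a sample PR. `needs_more_detail` is a hypothetical helper. The article does not say how the 50 characters are counted, so this sketch assumes surrounding whitespace and leftover PR-template HTML comments should not count.

```python
import re
from typing import Optional

MIN_DESCRIPTION_CHARS = 50  # threshold from the rule above

# Assumption: HTML comments left over from a PR template are not real description text.
TEMPLATE_COMMENT = re.compile(r"<!--.*?-->", re.DOTALL)

def needs_more_detail(description: Optional[str]) -> bool:
    """True when the PR description is missing or shorter than the threshold."""
    text = TEMPLATE_COMMENT.sub("", description or "").strip()
    return len(text) < MIN_DESCRIPTION_CHARS
```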

5. Test with Sample PR

Open a test PR with a minimal description and confirm that Gitar evaluates the rule and responds.

6. Enable Auto-Commit

Configure auto-commit for trusted fix types after validating fixes in suggestion mode.

7. Integrate Notifications

Connect Slack or Jira for cross-platform workflow automation and team notifications.

Effective rollouts start in suggestion mode to build trust, use rate limits to control costs, and rely on kill switch phrases to halt builds on critical failures.
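These three controls can be combined into one gate that decides whether a proposed fix is committed, only suggested, or stopped entirely. Everything here is a hypothetical sketch: the fix-type names, the hourly limit, and the `SECURITY-HALT` kill switch phrase are placeholders, not Gitar settings.

```python
import time
from collections import deque
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class RolloutPolicy:
    trusted_fix_types: set[str]                                # fix types allowed to auto-commit
    max_auto_commits_per_hour: int = 5                         # rate limit to bound cost
    kill_switch_phrases: tuple[str, ...] = ("SECURITY-HALT",)  # hypothetical phrase
    _recent: deque = field(default_factory=deque, init=False, repr=False)

    def decide(self, fix_type: str, ci_log: str, now: Optional[float] = None) -> str:
        """Return 'halt', 'suggest', or 'commit' for one proposed fix."""
        now = time.time() if now is None else now
        if any(phrase in ci_log for phrase in self.kill_switch_phrases):
            return "halt"                      # critical failure: no automation at all
        if fix_type not in self.trusted_fix_types:
            return "suggest"                   # untrusted fix types stay in suggestion mode
        while self._recent and now - self._recent[0] >= 3600:
            self._recent.popleft()             # forget auto-commits older than one hour
        if len(self._recent) >= self.max_auto_commits_per_hour:
            return "suggest"                   # over the limit: fall back to suggesting
        self._recent.append(now)
        return "commit"
```

Starting with an empty `trusted_fix_types` set makes everything a suggestion, which matches the advice to begin in suggestion mode and widen the set as trust grows.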

Get started with Gitar’s GitHub and GitLab integration to automate rule execution.

From Rules to Full Workflows: Gitar’s Platform Vision

Natural language rules form the base layer of a broader development intelligence platform. Gitar’s platform uses repository rules so teams can express complex workflows and policies as prompts, enabling the agent to reason about context, apply fixes, and automate workflows that previously required complex YAML or scripts.

These repository rules extend beyond simple code checks to cover complete development workflows. Teams gain automated workflow execution, deep analytics for CI failure patterns, and integrations that maintain context across development tools. This approach replaces complex YAML configuration with CI workflows described in natural language.

FAQ

How does Gitar differ from CodeRabbit and other suggestion engines?

Gitar operates as a healing engine that automatically applies fixes and validates them against CI, while CodeRabbit and similar tools only provide suggestions that require manual implementation. Gitar keeps builds green by testing fixes before committing them, whereas suggestion engines leave validation to developers. The platform also consolidates all feedback into a single dashboard comment instead of scattered inline suggestions.

Can I configure different trust levels for automated fixes?

Yes, Gitar supports fully configurable automation levels. You can start in suggestion mode where you approve every fix and build confidence in the system. After that, you can enable auto-commit for specific failure types that feel safe. You stay in control of how aggressive automation becomes.

What are the most effective natural language rules for security?

The most effective security rules focus on authentication code review, secret detection, SQL injection prevention, and dependency vulnerability scanning. Rules should specify clear triggers such as “PRs modifying authentication code” and concrete actions such as “assign security team and scan for secrets.” Rules work best when they stay specific enough to avoid false positives while broad enough to catch real vulnerabilities.

What common pitfalls should I avoid when implementing CI integration?

Common pitfalls include enabling auto-commit too early without building trust, defining overly broad rules that create noise, and skipping rate limiting for cost control. Start with read-only operations, use draft PRs for initial testing, and establish clear kill switch phrases for critical failures. Monitor token usage and set spending caps to prevent unexpected costs from extensive rule execution.

How do natural language rules handle complex repository contexts?

Natural language rules handle complex contexts by referencing multiple factors at once, including file paths, commit messages, PR descriptions, and linked tickets. Unlike rigid YAML configurations, these rules can reason about intent and context. For example, a rule can distinguish between intentional test vulnerabilities and real security issues by checking file paths and commit messages for context clues.
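A natural language rule lets the agent weigh these clues itself. To make the example concrete, here is a deterministic approximation: a finding is downgraded to a note only when it sits in a test or fixture path and its commit message declares an intentional test case; otherwise it blocks. The path prefixes and the `intentional-vuln` tag are hypothetical conventions, and requiring both clues is a design choice so that a stray tag in production code still blocks.

```python
from dataclasses import dataclass

TEST_PATH_PREFIXES = ("tests/", "test/", "fixtures/")  # hypothetical layout
INTENT_TAGS = ("intentional-vuln", "test vector")      # hypothetical commit-message tags

@dataclass
class ScanFinding:
    path: str
    commit_message: str
    kind: str  # e.g. "sql_injection"

def triage(f: ScanFinding) -> str:
    """Return 'note' for likely intentional test cases, 'block' for likely real issues."""
    in_test_code = f.path.startswith(TEST_PATH_PREFIXES)
    declared = any(tag in f.commit_message.lower() for tag in INTENT_TAGS)
    return "note" if in_test_code and declared else "block"
```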

Conclusion: Automate AI Code Reviews Today

Natural language rules shift AI code review from suggestion engines that create work to healing engines that complete it. By defining policies in plain English and enabling autonomous fixes, teams remove the manual toil that bottlenecks modern development workflows. The ten copy-paste examples here cover security, maintainability, and logic enforcement, which are the areas where AI-generated code needs the most oversight.

GitHub Actions and GitLab CI integrations ensure these rules run automatically without complex configuration. As teams generate code 3-5x faster with AI tools, natural language rules provide the enforcement layer that keeps builds green and quality high.

Ready for green builds? Install Gitar now to automate AI code reviews and eliminate PR bottlenecks.