Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for Local AI Code Review

- Local AI tools address privacy requirements and provide zero-cost code review alternatives to cloud services like CodeRabbit, which suits air-gapped environments.

- Top performers include LocalLint for VS Code with 85% bug detection, CodeFox for CLI batch processing, and PR-Agent for self-hosted GitHub integration.

- Benchmarks show setup times of 10 minutes or less on consumer GPUs across Python, JavaScript, and Go repositories.

- Local tools support solo developers well but do not provide team-scale CI auto-fixing or deep workflow integration.

- You can extend local analysis with Gitar’s 14-day Team Plan trial, which automatically fixes CI failures and improves shipped code quality.

How We Evaluated Local AI Code Review Tools

Our 2026 evaluation covered Python, JavaScript, and Go repositories ranging from 500MB to over 4GB. We measured bug detection accuracy, fix quality, and setup speed with a target of under 10 minutes. To keep results relevant for most developers, we tested on accessible hardware using mid-range GPUs and 8GB+ RAM systems. We validated findings against GitHub star counts and dev.to benchmarks, prioritizing privacy guarantees, ease of installation, and effectiveness compared with manual review.
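The evaluation above does not spell out how bug detection accuracy was computed. One common approach, assumed here purely for illustration, is to seed a repository with known bugs and count how many of them a tool reports. The `Finding` type and the sample data below are hypothetical and are not taken from the benchmark itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A file/line location flagged by a review tool (hypothetical shape)."""
    path: str
    line: int


def detection_rate(seeded: set[Finding], reported: set[Finding]) -> float:
    """Return the share of known (seeded) bugs that the tool reported."""
    if not seeded:
        raise ValueError("need at least one seeded bug")
    return len(seeded & reported) / len(seeded)


# Four seeded bugs; the tool finds three of them plus one unrelated location.
seeded = {
    Finding("app.py", 10),
    Finding("app.py", 42),
    Finding("util.py", 7),
    Finding("db.py", 3),
}
reported = {
    Finding("app.py", 10),
    Finding("util.py", 7),
    Finding("db.py", 3),
    Finding("db.py", 99),
}
print(f"{detection_rate(seeded, reported):.0%}")  # prints 75%
```

Note that this metric ignores false positives (the extra `db.py:99` report does not lower the score), so a detection percentage on its own says little about how noisy a tool is.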

What Is the Best Local AI Tool for Code Review?

Based on 2026 benchmarks and community feedback, the top three local AI tools for automated code review are LocalLint, CodeFox, and PR-Agent. LocalLint ranks highest for IDE integration in VS Code with Ollama. CodeFox suits command-line workflows and CI pipelines. PR-Agent fits teams that need self-hosted GitHub or GitLab integration. The next sections walk through each tool in detail, starting with LocalLint for developers who want real-time feedback inside their editor.

1. LocalLint: Real-Time VS Code Reviews with Ollama

LocalLint combines the familiar VS Code interface with Ollama’s local model inference for real-time code analysis. The extension provides inline suggestions, security vulnerability detection, and performance improvement tips while keeping all code on your machine.

🛠️ Setup:

    curl -fsSL https://ollama.ai/install.sh | sh
    ollama run codellama
    # Install LocalLint extension in VS Code
    # Configure model endpoint in settings

⚡ Benchmarks: LocalLint detects 85% of bugs in under 2 minutes on 1,000 LOC JavaScript projects. It reaches this accuracy while using about 4GB RAM with the CodeLlama 7B model, which keeps performance acceptable on mid-range machines.

The following table summarizes LocalLint’s core traits so you can quickly see where it fits best.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Real-time | 20+ langs | Large files lag | Solo developers |
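To make the "all code stays on your machine" idea concrete, here is a minimal sketch of how any local client could ask an Ollama model to review a snippet. It is not LocalLint's implementation. It assumes Ollama is listening on its default port 11434 and that the `codellama` model from the setup step has been pulled; the prompt wording and function names are illustrative.

```python
import json
import urllib.request

# Ollama's default local endpoint for single-prompt generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_review_request(code: str, model: str = "codellama") -> urllib.request.Request:
    """Build a POST request asking a local model to review `code`."""
    prompt = "Review this code. List bugs and security issues.\n\n" + code
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def review(code: str, model: str = "codellama") -> str:
    """Send the request to the local server and return the model's text reply."""
    request = build_review_request(code, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

Calling `review("def div(a, b):\n    return a / b\n")` requires a running Ollama server. Because the request only ever targets `localhost`, no source code leaves the machine.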

“LocalLint with Ollama saved my air-gapped security audit project. No cloud dependency, full privacy control.” – u/devthrowaway

Compare these capabilities with enterprise options in the Gitar documentation. Ready to move beyond local-only analysis? Start a 14-day Team Plan trial to see how automated CI healing stacks up against manual local reviews.

2. CodeFox: CLI Analyzer for CI and Batch Reviews

CodeFox runs entirely through a command-line interface, which fits CI/CD integration and scripted workflows. It analyzes full codebases, generates detailed reports, and supports custom rule sets tailored to your team’s coding standards.

🛠️ Setup:

    pip install codefox-ai
    codefox init --local-model deepseek-coder
    codefox analyze ./src --output report.json

⚡ Benchmarks: CodeFox processes 10,000 LOC Python projects in about 3 minutes with 78% bug detection accuracy. It runs best with at least 6GB RAM, which keeps batch analysis responsive for medium-sized repositories.

This table highlights how CodeFox behaves in real projects.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Batch processing | 15+ langs | No real-time | CI/CD pipelines |
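A JSON report becomes useful in CI once a pipeline step can fail the build on serious findings. The sketch below shows one way to do that. The report schema (a top-level `findings` list whose items carry `severity`, `file`, `line`, and `message`) is an assumption made for illustration and is not a documented CodeFox format, so adjust the keys to whatever your analyzer emits.

```python
import json

# Ordering used to compare severities; the labels are assumed, not tool-defined.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2}


def blocking_findings(report: dict, threshold: str = "high") -> list[dict]:
    """Return findings whose severity is at or above `threshold`."""
    floor = SEVERITY_ORDER[threshold]
    return [
        finding
        for finding in report.get("findings", [])
        if SEVERITY_ORDER.get(finding.get("severity", "low"), 0) >= floor
    ]


def gate(report_path: str, threshold: str = "high") -> int:
    """Print blocking findings and return a process exit code (0 = pass)."""
    with open(report_path, encoding="utf-8") as handle:
        report = json.load(handle)
    blockers = blocking_findings(report, threshold)
    for finding in blockers:
        print(f"{finding.get('file')}:{finding.get('line')}: {finding.get('message')}")
    return 1 if blockers else 0
```

In a pipeline step you would call `sys.exit(gate("report.json"))` after the analysis command, so that a non-zero exit code stops the build.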

3. PR-Agent: Self-Hosted Reviews for Pull Requests

PR-Agent delivers automated pull request analysis with GitHub and GitLab integration while you keep full data control through self-hosting. It generates PR summaries, flags potential issues, and posts suggestions directly as comments on each pull request.

🛠️ Setup:

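    # Note: inside a container, localhost refers to the container itself.
    # On Docker Desktop, use host.docker.internal to reach Ollama on the host.
    docker run -d --name pr-agent \
      -e GITHUB_TOKEN=your_token \
      -e OPENAI_API_BASE=http://localhost:11434/v1 \
      pr-agent:latest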

⚡ Benchmarks: PR-Agent analyzes PR diffs in 30 to 60 seconds. It reaches about 82% accuracy for logic errors and security vulnerabilities across several languages.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Per-PR basis | 25+ langs | Setup complexity | Team workflows |
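Small local models have limited context windows, so a self-hosted reviewer typically cannot send an entire pull request in one prompt. One simple strategy, sketched here as an assumption rather than as PR-Agent's actual behavior, is to split the unified diff into per-file chunks and review each chunk separately. The helper name is hypothetical, and the pattern does not handle paths that contain spaces.

```python
import re

# Matches the per-file header that `git diff` emits, e.g.
# "diff --git a/src/app.py b/src/app.py".
_FILE_HEADER = re.compile(r"^diff --git a/(\S+) b/(\S+)$")


def split_diff_by_file(diff_text: str) -> dict[str, str]:
    """Map each changed file path to its own section of a unified diff."""
    chunks: dict[str, list[str]] = {}
    current = None
    for line in diff_text.splitlines():
        match = _FILE_HEADER.match(line)
        if match:
            current = match.group(2)  # use the post-change ("b/") path
            chunks[current] = []
        if current is not None:
            chunks[current].append(line)
    return {path: "\n".join(lines) for path, lines in chunks.items()}
```

Each value can then be sent to the local model as its own review prompt, which keeps every request within the model's context budget at the cost of losing cross-file context.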

4. Ollama + Continue.dev: Local AI Pair Programmer in VS Code

Ollama combined with Continue.dev turns VS Code into a local AI coding assistant. This setup supports autocomplete, refactoring suggestions, and test generation using 7B or 14B models on consumer hardware.

🛠️ Setup:

    brew install ollama
    ollama pull qwen2.5:7b
    # Install Continue extension in VS Code
    # Configure Ollama endpoint in Continue settings

⚡ Benchmarks: This combination handles simple refactors and autocomplete with about 90% accuracy on 8GB RAM systems. It struggles with complex multi-file architectural changes, which still require manual oversight.

The table below shows where this integration shines in daily development.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Interactive | 70+ langs | Complex refactors | Development workflow |

To see how to scale from local assistants to team-wide automation, review the Gitar documentation. When you need CI-aware fixes instead of editor-only help, try the 14-day Team Plan and compare results.

5. Aider: Git-Aware Local Coding Agent

Aider, with more than 39K GitHub stars, runs as a command-line AI coding assistant that creates git commits for every edit. It supports local models and multiple LLMs across over 100 programming languages.

🛠️ Setup:

    pip install aider-chat
    export OLLAMA_API_BASE=http://localhost:11434
    aider --model ollama/codellama:13b

⚡ Benchmarks: Aider is a free open-source tool with deep git integration. It supports both local and cloud models, which gives teams flexibility when choosing where to run inference.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Conversational | 100+ langs | Learning curve | Git-centric workflow |

6. GitHub Copilot Local Fork: Offline Autocomplete

Community-built local alternatives to GitHub Copilot provide similar autocomplete behavior while keeping data private through local model inference and offline operation.

🛠️ Setup:

    git clone https://github.com/local-copilot/extension
    cd extension
    npm install && npm run build
    # Load unpacked extension in VS Code

⚡ Benchmarks: These forks reach about 75% of GitHub Copilot’s suggestion quality while running entirely offline with local models such as CodeLlama 13B and larger.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| Real-time | 30+ langs | Community support | Privacy-focused devs |

7. LiteLLM Proxy: Connect Local Models to Existing Tools

LiteLLM exposes a unified API interface for local models so existing code review tools can use self-hosted AI instead of cloud services. This approach gives teams more control over cost and deployment.

🛠️ Setup:

    pip install litellm
    litellm --model ollama/deepseek-coder:6.7b --port 8000
    # Configure existing tools to use localhost:8000

⚡ Benchmarks: LiteLLM enables seamless integration of local models with current workflows. It reduces cloud API spending to zero while keeping compatibility with tools that expect an OpenAI-style endpoint.

| Speed | Languages | Limits | Ideal For |
| --- | --- | --- | --- |
| API-dependent | Model-dependent | Proxy overhead | Tool integration |
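"OpenAI-style endpoint" means the proxy accepts the same chat-completions JSON that cloud clients already send, so switching to a local model is mostly a matter of changing the base URL. The sketch below builds such a request by hand. It assumes the proxy from the setup step is running on port 8000 without authentication and that it accepts the model name used at startup; if you configured an API key, an `Authorization` header would also be needed.

```python
import json
import urllib.request

# Assumed address of the local proxy started in the setup step.
PROXY_URL = "http://localhost:8000/chat/completions"


def build_chat_request(
    code: str, model: str = "ollama/deepseek-coder:6.7b"
) -> urllib.request.Request:
    """Build an OpenAI-format chat request asking for a code review."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a careful code reviewer."},
            {"role": "user", "content": "Review this code:\n\n" + code},
        ],
    }
    return urllib.request.Request(
        PROXY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_json: dict) -> str:
    """Pull the assistant's text out of an OpenAI-format response body."""
    return response_json["choices"][0]["message"]["content"]
```

To send the request, pass the result of `build_chat_request(...)` to `urllib.request.urlopen`, parse the body with `json.loads`, and hand the resulting dict to `extract_reply`.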

Teams that need more advanced proxy and routing patterns can review the Gitar documentation for architecture examples. When you want those pipelines to also repair failing builds, start a 14-day Team Plan trial and connect Gitar to your CI.

Benchmarks and Community Comparisons

To help you compare the strongest options, the table below focuses on four tools with consistent benchmark data across similar conditions.

| Tool | Bug Detection % | Setup Time | Hardware Requirement |
| --- | --- | --- | --- |
| LocalLint | 85% | 5 minutes | Mid-range GPU |
| CodeFox | 78% | 3 minutes | 6GB RAM |
| PR-Agent | 82% | 10 minutes | Docker + 4GB |
| Aider | 80% | 2 minutes | 8GB RAM |

Reddit/GitHub Community Picks: r/MachineLearning discussions often recommend Ollama + Continue.dev for beginners, Aider for git-integrated workflows, and PR-Agent for team environments. Developer surveys highlight a growing preference for local solutions because of privacy and effectively unlimited usage.

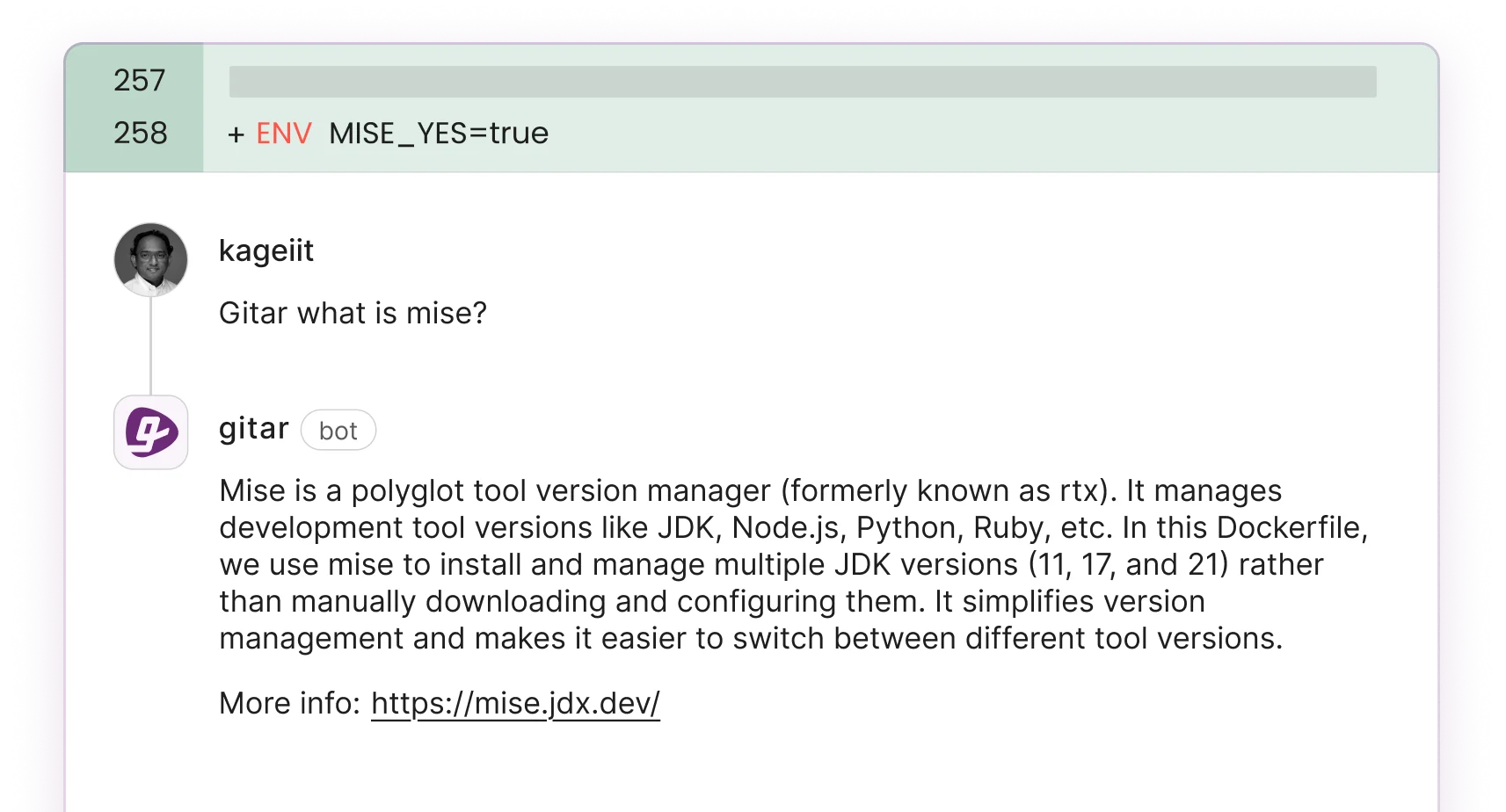

When Local Tools Fall Short for Teams: Scale with Gitar

As noted earlier, local tools serve individual developers well, but their limits appear quickly in team environments. Only about 30% of AI-suggested code gets accepted because local tools lack CI integration and automated fix validation. They can point out issues but cannot confirm that proposed fixes pass tests and keep builds green, which leaves teams with extra manual work.

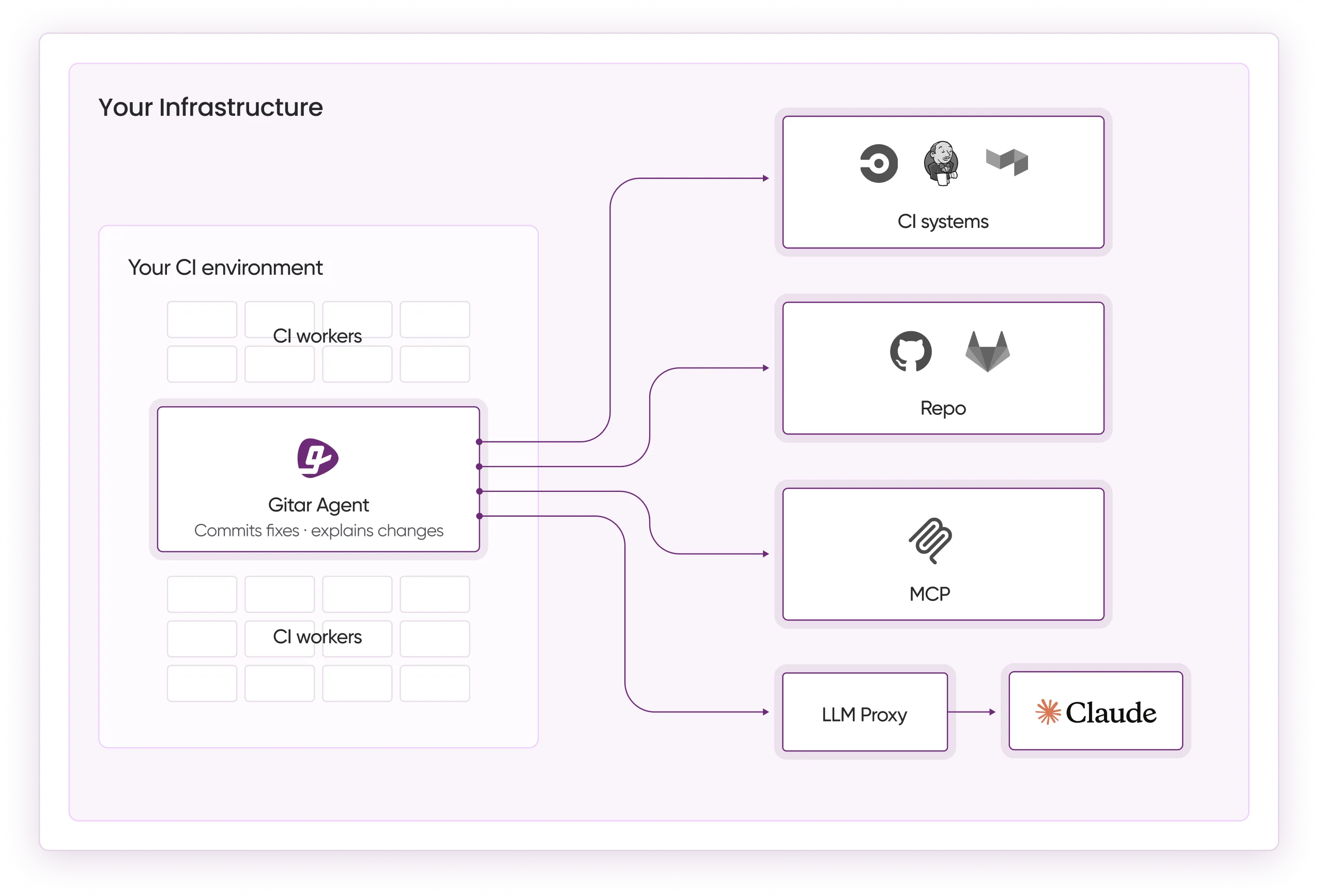

Gitar’s Healing Engine addresses this gap by automatically fixing CI failures, applying review feedback, and validating changes against your build pipeline. Instead of running in isolation on a single laptop, Gitar connects to your repositories, CI, and collaboration tools to keep the entire workflow in sync. You can explore integration patterns and examples in the Gitar documentation.

The next table compares core capabilities of local tools with Gitar so you can see where each approach fits.

| Capability | Local Tools | Gitar | Impact |
| --- | --- | --- | --- |
| Auto-fix CI failures | No | Yes (Trial/Team) | Zero manual intervention |
| Validate fixes work | No | Yes (Trial/Team) | Guaranteed green builds |
| Team workflow integration | Limited | Full (Trial/Team) | Slack/Jira/Linear sync |
| Scale to 20+ developers | No | Yes (Trial/Team) | $750K/year productivity savings |

For teams that have outgrown laptop-only tools, Gitar’s 14-day free Team Plan trial provides guided setup for enterprise-scale automated code review and CI healing.

Frequently Asked Questions

What is the best local AI tool for Python code reviews?

LocalLint with Ollama offers a strong Python code review experience through VS Code integration, with real-time analysis and about 85% bug detection accuracy. For command-line workflows, Aider stands out with git integration and support for over 100 languages, including thorough Python analysis.

What hardware do I need to run Ollama for code review?

Ollama runs CodeLlama 7B models on systems with at least 8GB RAM, with mid-range GPUs recommended for smoother performance. Quantized Q4 and Q5 models cut memory needs by roughly 75% compared with 16-bit weights, which makes code analysis feasible on many machines with about 4GB of free memory.
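The roughly 75% figure follows from simple arithmetic on bits per weight. The sketch below estimates memory for the weights alone, assuming an idealized 4 bits per weight for Q4. Real quantized formats store some extra metadata and the runtime also needs room for the context cache, so treat the result as a lower bound rather than a measurement.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


fp16 = weight_memory_gb(7, 16)  # 7B parameters at 16 bits each
q4 = weight_memory_gb(7, 4)     # the same model at an idealized 4 bits each
reduction = 1 - q4 / fp16

print(f"FP16: {fp16} GB, Q4: {q4} GB, reduction: {reduction:.0%}")
# prints FP16: 14.0 GB, Q4: 3.5 GB, reduction: 75%
```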

How do local AI tools compare to Gitar for team environments?

Local tools work well for individual developers but lack team-scale features such as automated CI fixing, workflow integration, and validated fix deployment. Gitar adds these capabilities and offers a 14-day Team Plan trial with enterprise-grade automation and guaranteed build success during the trial period.

Which free self-hosted AI code review tools work with GitHub?

PR-Agent and Aider both provide free self-hosted GitHub integration. PR-Agent focuses on automated pull request analysis with Docker deployment, while Aider offers git-integrated command-line workflows and supports local models through Ollama.

What do developers on Reddit recommend for local AI code review?

Developers on Reddit often recommend Ollama + Continue.dev for beginners because of simple VS Code integration. They suggest Aider for git-centric workflows and PR-Agent for teams that need GitHub integration. Many users highlight privacy and unlimited usage as key reasons to choose local tools over cloud services.

Conclusion: When to Use Local Tools and When to Add Gitar

Local AI tools deliver strong privacy and cost benefits for automated code review and analysis in 2026. Options like LocalLint, Aider, and PR-Agent give individual developers powerful assistance without sending code to external servers. Teams that need production-scale automation, however, benefit from platforms that fix issues and validate builds instead of only suggesting changes.

To explore upgrade paths and architecture patterns, review the Gitar documentation. When you are ready to see automated CI healing in your own pipelines, start a 14-day Team Plan trial and measure the impact on your release cycle.