Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for 10–50 Developer Teams

- Paid AI coding assistants serve teams better than free tools through deeper project context, stronger security, CI/CD integrations, and autonomous fixes instead of basic autocomplete.
- Free tools like Codeium and Gemini impose usage limits and lack team-scale features, which creates PR review overload and more CI failures.
- Paid options such as GitHub Copilot Business and Gitar add enterprise security (SOC2, IP indemnity) and handle large monorepos reliably.
- Gitar reduces CI and review time from 1 hour to about 15 minutes per developer, which can unlock up to $750K in annual savings for a 20-person team.
- Teams dealing with PR floods and CI bottlenecks should evaluate Gitar’s 14-day Team Plan trial to measure whether autonomous fixes remove their specific blockers.

Decision Point for 10–50 Dev Teams: Free or Paid AI Coding Assistants

Engineering teams now face a critical choice as AI-generated code floods review pipelines. This flood creates a quality gap: NxCode Team’s March 2026 ranking shows Claude Code achieving 80.8% on SWE-bench Verified, while free alternatives cap teams at basic autocomplete that cannot keep pace. To navigate this gap, evaluate tools based on project context in large codebases, security and compliance features, per-user limits and scalability, CI/CD pipeline integrations, PR noise reduction capabilities, and autonomous fixes versus suggestion-only workflows.

The following comparison shows how leading tools stack up on pricing, usage limits, and benchmark performance for team deployments:

| Tool | Free Tier | Paid Tier | SWE-bench Score |
|---|---|---|---|
| GitHub Copilot | 2,000 completions/month | $19-39/user/month | ~70% |
| Codeium | Unlimited autocomplete | $12/user/month | ~65% |
| Gitar | 14-day Team Plan trial | Custom pricing | Auto-fix focused |
| Claude Code | 40 messages/day | $20-200/month | 80.8% |

Run each tool against your own PR flood scenarios and measure fix time, build success rates, and notification noise. Start your 14-day Gitar trial to see how autonomous fixes behave on your actual branches and pipelines.

These evaluation criteria become concrete when you compare specific capability gaps between free and paid tools.

Free vs Paid AI for Teams: Capability Gaps in 2026

Augment Code’s April 2026 testing on a 450,000-file monorepo reveals critical gaps in free tools for team environments. Teams should examine how free alternatives handle architectural reasoning, multi-file accuracy, and security posture compared to paid solutions.

This capability breakdown highlights where free tools fall short across four dimensions that matter most for teams:

| Capability | Free Tools | Paid Tools | Winner |
|---|---|---|---|
| Context Window | Limited (8K-32K tokens) | 1M+ tokens | Paid |
| Security Features | Basic/None | SOC2, IP indemnity | Paid |
| CI Integration | None | Auto-fix, healing | Paid |
| Team Collaboration | Individual focus | Shared context, analytics | Paid |

Veracode’s 2025 GenAI Code Security Report found 45% of AI-generated code contains vulnerabilities, which exposes teams that rely on tools without enterprise security controls.

Top Free AI Coding Assistants for Teams in 2026

Free AI coding assistants work well for individual autocomplete but fall short for team-wide workflows. As established earlier, these tools help a single developer type faster yet fail team requirements around security, CI context, and collaboration. For example, the Gemini Code Assist free edition enforces a limit of 1,000 requests per user per day, while Codeium offers unlimited autocomplete but no CI awareness.

The table below summarizes strengths and team limitations for leading free options:

| Tool | Strengths | Team Limitations |
|---|---|---|
| Codeium | Unlimited autocomplete | No CI integration, hallucinations |
| Tabnine | Local deployment | Team scalability challenges |
| Aider | Git integration | Terminal-based workflow |

Free tools suit solo developers but fail teams that need security, compliance, and autonomous CI fixes. Checkmarx reports AI coding tools introduce insecure logic and exposed secrets faster than manual review processes can handle, which raises the stakes for any team-scale rollout.

These security gaps, along with the scalability limits above, push many teams toward paid solutions with enterprise-grade controls.

Best Paid AI Coding Assistants for Teams in 2026

Paid AI coding assistants address team bottlenecks through enterprise features, security controls, and autonomous fixes. GitHub Copilot Business at $19 per user per month provides IP indemnification, while CodeRabbit at $480-600 per month for a 20-person team offers suggestions with some automation.

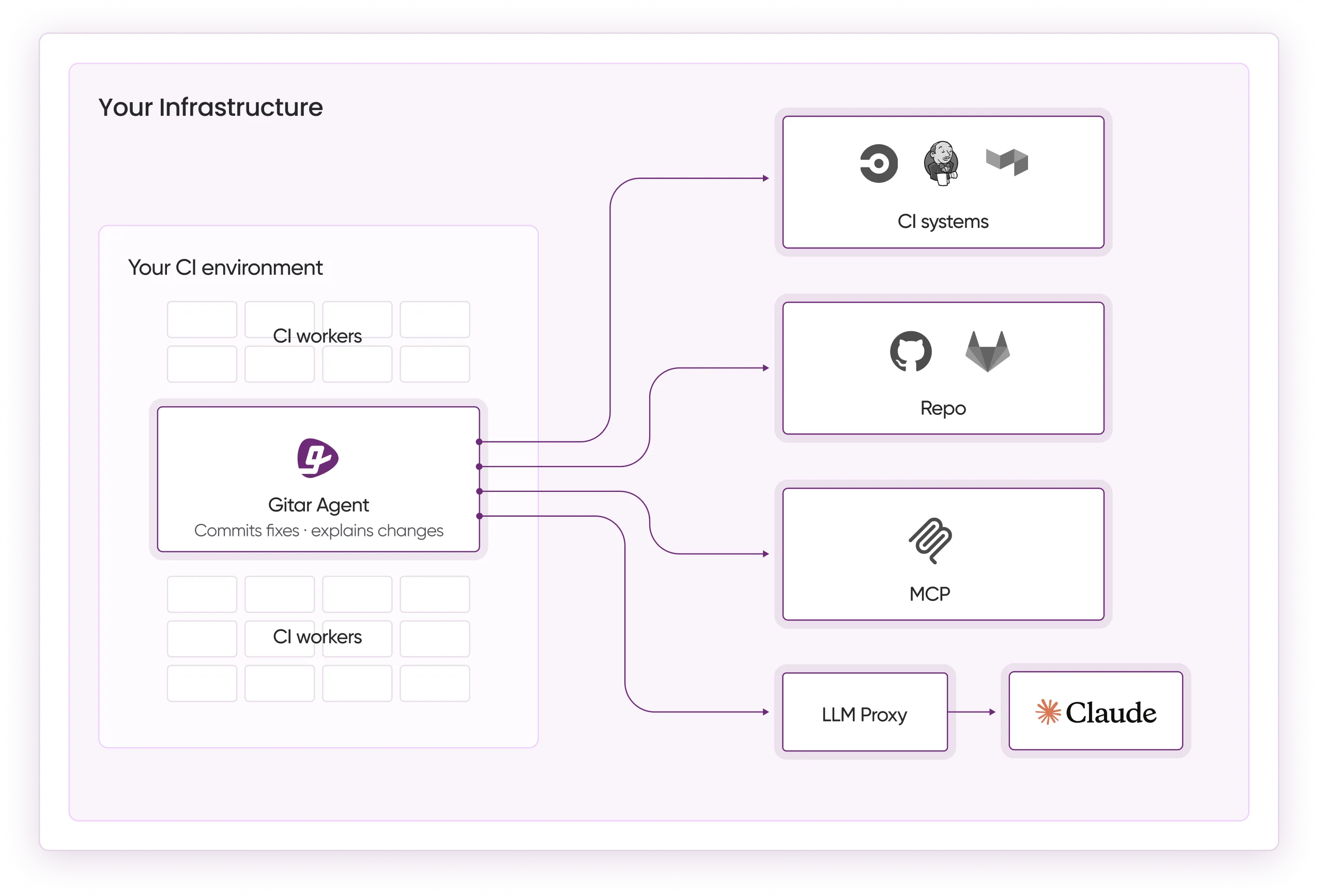

Gitar stands out as the top paid option with its healing engine, which fixes code autonomously rather than only suggesting changes. As noted in the comparison above, Gitar offers a 14-day Team Plan trial: its recent per-seat licensing for Team-tier organizations includes that trial with no seat limits, auto-apply fixes, and CI failure analysis, so teams can evaluate the autonomous approach on their own code. See the Gitar documentation for implementation details.

The following table compares monthly costs and CI capabilities for popular paid assistants at a 20-developer scale:

| Tool | Monthly Cost (20 devs) | Auto-Fix | CI Integration |
|---|---|---|---|
| GitHub Copilot Business | $380 | Yes (Autofix) | Robust |
| CodeRabbit | $480-600 | No | Limited |
| Gitar | 14-day trial | Yes | Full |

Gitar’s single dashboard comment consolidates all findings, while many competitors flood PRs with notification spam. Experience Gitar’s healing engine firsthand with a full-featured 14-day trial that includes auto-fix and CI analysis.

Why Free Tools Fail Teams: Post-Copilot Bottlenecks in 2026

The AI coding revolution created new bottlenecks: developers generate code three to five times faster, but review capacity has not scaled. A DX survey found 93% of developers use AI coding assistants, yet productivity gains remain capped at 10%.

Free tools often worsen these bottlenecks. They hallucinate in large repositories, rely on per-user limits that do not scale, and lack CI context. CloudGeometry attributes AI hallucinations to missing architectural context, which can break database sharding logic or ignore legacy authentication. These issues compound the review overload and CI failures that teams already face.

The table below connects common bottlenecks with how free tools behave and how paid solutions respond:

| Bottleneck | Free Tool Impact | Paid Solution |
|---|---|---|
| PR Review Overload | 91% time increase | Auto-fix, single comments |
| CI Failures | Manual debugging | Autonomous healing |
| Security Risks | No compliance | SOC2, enterprise controls |

Gitar’s CI agent maintains context from PR open to merge and works continuously to keep CI green. Benchmark your repo’s green build time before and after implementation to quantify the impact.
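
One way to run that before-and-after benchmark is to export per-PR CI statistics for a window on each side of the rollout and compare build success rate and median time to green. The sketch below is a minimal illustration under stated assumptions: the `PullRequestStats` record and its fields are hypothetical, the sample numbers are made up for demonstration, and nothing here calls a Gitar or CI vendor API.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class PullRequestStats:
    """Hypothetical per-PR record exported from your CI system."""
    ci_runs: int                       # total CI runs triggered on the PR
    green_runs: int                    # runs that passed
    minutes_to_green: Optional[float]  # PR open to first passing build; None if never green


def build_success_rate(prs: list[PullRequestStats]) -> float:
    """Fraction of all CI runs that passed across the given PRs."""
    total_runs = sum(pr.ci_runs for pr in prs)
    if total_runs == 0:
        return 0.0
    return sum(pr.green_runs for pr in prs) / total_runs


def median_minutes_to_green(prs: list[PullRequestStats]) -> Optional[float]:
    """Median time to first green build, ignoring PRs that never went green."""
    times = [pr.minutes_to_green for pr in prs if pr.minutes_to_green is not None]
    return median(times) if times else None


if __name__ == "__main__":
    # Illustrative sample data only; replace with your own exported records.
    before = [
        PullRequestStats(ci_runs=4, green_runs=1, minutes_to_green=120.0),
        PullRequestStats(ci_runs=3, green_runs=1, minutes_to_green=90.0),
        PullRequestStats(ci_runs=2, green_runs=0, minutes_to_green=None),
    ]
    after = [
        PullRequestStats(ci_runs=2, green_runs=1, minutes_to_green=40.0),
        PullRequestStats(ci_runs=1, green_runs=1, minutes_to_green=25.0),
        PullRequestStats(ci_runs=2, green_runs=1, minutes_to_green=55.0),
    ]
    for label, window in (("before", before), ("after", after)):
        rate = build_success_rate(window)
        print(f"{label}: success rate {rate:.0%}, "
              f"median minutes to green {median_minutes_to_green(window)}")
```

Comparing the same two metrics over equal-length windows keeps the benchmark honest: a tool that raises the success rate but lengthens time to green has not necessarily relieved the bottleneck.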

Once you identify which capabilities your team needs to relieve these bottlenecks, the next consideration becomes total cost.

AI Coding Price Comparison 2026: Copilot vs Codeium and Others

Price comparison for team deployments reveals large differences between tools that appear similar at the individual level. GitHub Copilot Business costs $11,400 annually for 50 developers at $19 per user per month, which sets a benchmark for enterprise budgets.

The table below outlines annual costs and security positioning for popular options at 20- and 50-developer scales:

| Tool | 20 Developers | 50 Developers | Security Features |
|---|---|---|---|
| Codeium | $2,880/year | $7,200/year | Basic |
| GitHub Copilot | $4,560/year | $11,400/year | IP indemnity |
| Cursor Teams | $9,600/year | $24,000/year | Per-user allocation |

Gitar achieved SOC 2 Type 2 certification for enterprise security and offers trials that let teams prove ROI before committing to a contract.
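
The annual figures in the pricing table follow from flat per-seat monthly prices multiplied by seat count and 12 months. The sketch below reproduces that arithmetic. The $12 (Codeium) and $19 (GitHub Copilot Business) monthly prices are the ones quoted in this article; the $40 figure for Cursor Teams is an assumption inferred from the table's totals, and the function names are illustrative.

```python
from __future__ import annotations

# Per-user monthly prices in USD. The Codeium and Copilot Business prices are
# quoted in this article; the Cursor Teams price is an assumption inferred
# from the table ($9,600 / 20 seats / 12 months).
MONTHLY_PRICE_PER_SEAT = {
    "Codeium": 12,
    "GitHub Copilot Business": 19,
    "Cursor Teams": 40,
}


def annual_cost(price_per_seat: int, seats: int) -> int:
    """Yearly cost for a team on flat per-seat monthly pricing."""
    return price_per_seat * seats * 12


def cost_table(seat_counts: tuple[int, ...] = (20, 50)) -> dict[str, dict[int, int]]:
    """Build {tool: {seats: annual cost}} for each team size."""
    return {
        tool: {seats: annual_cost(price, seats) for seats in seat_counts}
        for tool, price in MONTHLY_PRICE_PER_SEAT.items()
    }


if __name__ == "__main__":
    for tool, costs in cost_table().items():
        row = ", ".join(f"{seats} devs: ${cost:,}/year" for seats, cost in costs.items())
        print(f"{tool}: {row}")
```

Flat per-seat math ignores volume discounts, usage-based overages, and custom enterprise quotes, so treat the output as a budgeting baseline rather than a vendor quote.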

After you understand pricing, you can estimate whether autonomous fixes deliver enough productivity to justify a paid rollout.

Team ROI Calculator for Autonomous Fixes in 2026

Teams can calculate the productivity impact of autonomous fixes compared to manual suggestion workflows. Consider a 20-developer team that spends 1 hour each day on CI and review issues.

| Metric | Before Gitar | After Gitar | Savings |
|---|---|---|---|
| Daily CI/Review Time | 1 hour/dev | 15 min/dev | 15 hours/day team-wide |
| Annual Productivity Cost | $1M | $250K | $750K/year |
| Context Switching | Multiple/day | Near-zero | Focus time |

Platform engineers report cutting YAML configuration toil while still maintaining green builds through autonomous healing.
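
The ROI table above can be reproduced with simple arithmetic, which also makes it easy to substitute your own team's numbers. The sketch below assumes 250 working days per year and a fully loaded cost of $200 per developer hour. Neither figure is stated in this article; they are assumptions chosen because together they match the $1M and $250K rows. The `TeamProfile` type and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamProfile:
    developers: int
    hourly_cost_usd: float      # assumption: fully loaded cost per developer hour
    working_days_per_year: int  # assumption: not stated in the article


def daily_team_hours(team: TeamProfile, minutes_per_dev_per_day: float) -> float:
    """Total hours per day the whole team spends on CI and review issues."""
    return team.developers * minutes_per_dev_per_day / 60


def annual_ci_review_cost(team: TeamProfile, minutes_per_dev_per_day: float) -> float:
    """Yearly cost of the time spent on CI and review issues."""
    hours = daily_team_hours(team, minutes_per_dev_per_day)
    return hours * team.working_days_per_year * team.hourly_cost_usd


def annual_savings(team: TeamProfile, before_minutes: float, after_minutes: float) -> float:
    """Yearly cost difference between the before and after scenarios."""
    return (annual_ci_review_cost(team, before_minutes)
            - annual_ci_review_cost(team, after_minutes))


if __name__ == "__main__":
    team = TeamProfile(developers=20, hourly_cost_usd=200.0, working_days_per_year=250)
    print(f"Before: ${annual_ci_review_cost(team, 60):,.0f}/year")
    print(f"After:  ${annual_ci_review_cost(team, 15):,.0f}/year")
    print(f"Saved:  ${annual_savings(team, 60, 15):,.0f}/year")
    print(f"Hours recovered per day: {daily_team_hours(team, 60 - 15):.0f}")
```

The result is highly sensitive to the hourly cost assumption: at $100 per hour the same time reduction yields half the savings, so use your own loaded rate before presenting the number to finance.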

Decision Matrix: Match AI Coding Assistants to Team Needs

Teams should evaluate tools against their specific bottlenecks rather than picking by brand alone. If PR review floods and CI failures dominate your metrics, start with Gitar’s 14-day trial to experience autonomous fixes. For basic autocomplete needs, free tools can suffice. For enterprise security and compliance, paid solutions become mandatory.

| Team Need | Recommended Tool | Key Benefit |
|---|---|---|
| PR Review Overload | Gitar Trial | Auto-fix, single comments |
| Basic Autocomplete | Codeium | Zero cost |
| Enterprise Security | GitHub Copilot Business | IP indemnity |

If PR review overload is your primary bottleneck, try Gitar’s 14-day trial and measure the velocity impact of autonomous fixes on your own repos.

Conclusion: Move Beyond Free Limits for Team-Scale Velocity in 2026

Free AI coding assistants provide only basic autocomplete, while paid solutions address real team bottlenecks through autonomous fixes, enterprise security, and CI integration. Gitar’s healing engine outperforms suggestion-only competitors by fixing code directly instead of leaving comment threads. The 14-day Team Plan trial lets teams prove value with measurable velocity improvements and more consistent green builds.

Prove the ROI with Gitar’s 14-day Team Plan trial and track your improvements in green build rates, review time, and developer focus.

Frequently Asked Questions

What is the main difference between free and paid AI coding assistants for teams?

Free AI coding assistants provide basic autocomplete and suggestions but lack team-specific features like enterprise security, higher usage limits, CI integration, and autonomous fixes. Paid tools add team collaboration features, security compliance such as SOC2 and IP indemnity, larger context windows, and healing engines that fix code instead of only suggesting changes. For teams of 10-50 developers, paid tools address bottlenecks like PR review overload and CI failures that free tools cannot handle.

How do usage limits in free AI tools impact team productivity?

Free AI coding tools impose usage restrictions that slow team workflows. Gemini Code Assist limits users to 1,000 requests daily, Claude’s free tier allows only 40 messages per day, and GitHub Copilot’s free tier caps at 2,000 completions monthly. These limits force teams to ration AI assistance during critical development phases, create bottlenecks when multiple developers hit quotas at once, and block consistent adoption across the team. Paid tools remove these constraints with unlimited or much higher usage allowances.

Why do free AI coding tools struggle with large team repositories?

Free AI coding tools struggle in large team repositories because they use limited context windows, lack architectural understanding, and cannot maintain state across multiple files and dependencies. They often hallucinate in complex codebases, miss important security patterns, and fail to respect team-specific conventions or legacy constraints. Paid tools provide 1M+ token context windows, persistent memory systems, and architectural reasoning that allow them to work effectively in enterprise-scale repositories with thousands of files.

What security risks do free AI coding assistants pose for teams?

Free AI coding assistants introduce security risks such as exposure of sensitive training data, insecure code suggestions with patterns like SQL injection, missing compliance controls, and potential data leakage through cloud processing. They usually lack enterprise security features like SOC2 certification, IP indemnification, and audit trails. Veracode’s 2025 GenAI Code Security Report found that 45% of AI-generated code contains vulnerabilities, and free tools rarely provide the security scanning, compliance reporting, or enterprise-grade data protection that teams require.

How does Gitar’s healing engine differ from suggestion-based AI tools?

Gitar’s healing engine autonomously fixes code issues instead of only suggesting changes. When CI fails, Gitar analyzes failure logs, generates validated fixes, commits them automatically, and targets green builds. Suggestion-only tools leave comments that still require manual implementation, validation, and re-testing. Gitar’s approach removes that manual loop, reduces context switching, and delivers measurable productivity gains by resolving lint errors, test failures, and build breaks while keeping feedback in a single dashboard comment instead of scattered notification spam.