Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for Your Team

- By 2026, 84% of developers use AI coding tools, which increases PR volume by 91% and forces teams to formalize AI review rules.

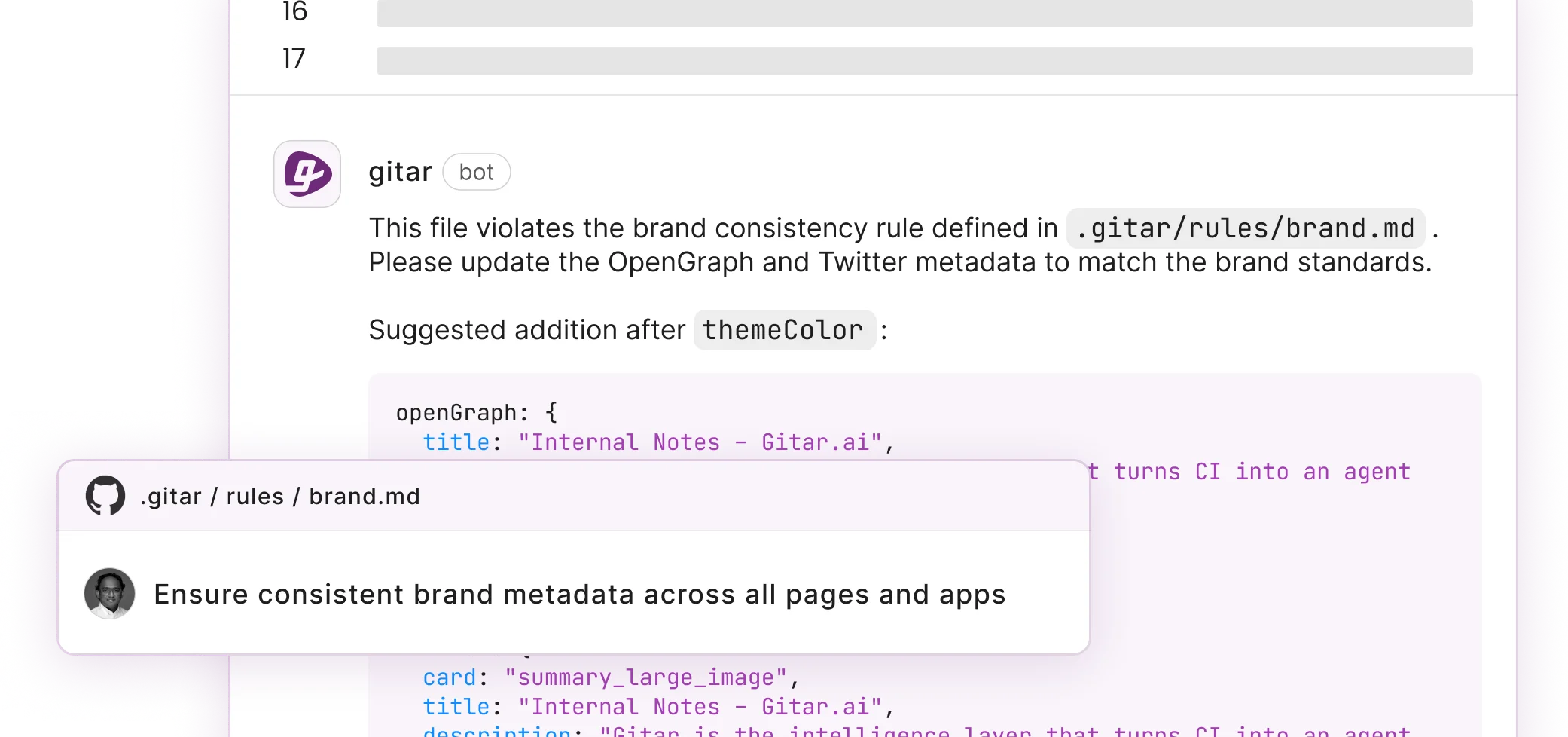

- Gitar reads natural language rules from .gitar/rules/*.md files and uses them to apply and validate fixes, instead of only suggesting changes.

- The 7-step setup covers installation, rules directory creation, markdown rule authoring, auto-fix testing, feedback loops, CI configuration, and analytics review.

- Teams see higher ROI from auto-applied, CI-validated fixes and consolidated comments than from suggestion-only tools that still require manual work.

- Start a 14-day Team Plan trial to apply validated auto-fixes across unlimited repositories and modernize your PR review workflow.

AI coding tools now touch almost every pull request, so teams need clear rules that keep reviews fast and reliable. Gitar gives you repository-level control through simple markdown rules that apply fixes, validate them in CI, and keep builds green. The 7-step workflow below shows how to install Gitar, define rules, connect CI, and use analytics to refine results over time. Gitar’s auto-applied fixes and CI validation deliver measurable savings for growing teams, especially when PR volume spikes. You can try the full platform with a 14-day Team Plan trial and see the impact on your own review process.

Repository-Level Rule Files and How They Differ by Tool

AI code review tools use different configuration approaches, from traditional YAML files to modern natural language specifications. The key differentiator is whether the tool merely suggests fixes or actually implements them with validation against your CI pipeline. The table below highlights how Gitar’s auto-fix capability and broad platform coverage compare to tools that only provide suggestions. For details on Gitar’s configuration, see the Gitar documentation.

| Tool | Config Type | Auto-Fix | Platforms |
|---|---|---|---|
| Gitar | .gitar/rules/*.md | Yes | GitHub, GitLab, CircleCI, Buildkite |
| GitHub Copilot | .github/copilot-instructions.md | No | GitHub only |
| Bito | .bito.yaml | Suggestions only | Limited |
| CodeRabbit | YAML configuration | No | GitHub primary |

Gitar’s natural language rules remove YAML complexity while still supporting the major platforms shown in the table.

Step-by-Step: Customize AI Code Review Rules in 7 Steps

This 7-step guide walks you from installation to a live rule set that applies fixes and integrates with your CI/CD pipeline. Use it as a practical checklist while you configure your first repository and expand to more advanced rules later.

1. Install Gitar Integration

Install the Gitar GitHub App or GitLab integration and start your 14-day Team Plan trial. After installation, Gitar begins posting consolidated dashboard comments on new pull requests so you see a single, evolving summary instead of scattered notes.

2. Create Rules Directory

Create a .gitar/rules/ directory in your repository root. Store all custom review rules in this folder as markdown files so configuration travels with your codebase.

3. Write Natural Language Rules

Define each rule as a markdown file whose header describes when the rule applies and what actions to take. Here is a security-focused example:

```
---
title: "Security Review"
when: "PRs modifying authentication or encryption code"
actions: "Assign security team and add label"
---
```

Keep early rules narrow and focused so you can see how they behave before expanding coverage.
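The header in the example above is YAML-style front matter. To show how such a file is structured, here is a minimal Python sketch that splits a rule file into its header fields and its markdown body. It assumes the simple key: "value" format shown above and uses only the standard library; it is not Gitar's parser, and both the helper name parse_rule and the sample body sentence are hypothetical.

```python
import re

# Front matter: a block delimited by "---" lines at the very top of the file.
FRONT_MATTER = re.compile(r"\A---\s*\n(.*?)\n---\s*\n?(.*)\Z", re.DOTALL)
# Simple key: "value" pairs only (an assumption; full YAML is much richer).
FIELD = re.compile(r'^\s*(\w+)\s*:\s*"(.*)"\s*$')


def parse_rule(text: str) -> tuple[dict[str, str], str]:
    """Split a rule file into header fields and markdown body (hypothetical helper)."""
    match = FRONT_MATTER.match(text)
    if match is None:
        # No header: treat the whole file as free-form instructions.
        return {}, text.strip()
    header, body = match.groups()
    fields = {}
    for line in header.splitlines():
        m = FIELD.match(line)
        if m:
            fields[m.group(1)] = m.group(2)
    return fields, body.strip()


rule_text = '''---
title: "Security Review"
when: "PRs modifying authentication or encryption code"
actions: "Assign security team and add label"
---
Flag any change that weakens password hashing or token validation.
'''

fields, body = parse_rule(rule_text)
print(fields["title"])
print(body)
```

Keeping the trigger and actions in a small header while leaving the body as prose is what lets the rule stay readable to humans and still be machine-checkable.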

4. Test Auto-Fix Output

Create a sample PR with intentional issues to observe Gitar’s auto-fix behavior. The system analyzes failures, generates corrections, validates fixes against CI, and commits working solutions as new changes on the branch.
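The analyze, fix, validate, commit sequence works as a gate: a candidate fix is committed only if CI passes with it applied. The sketch below is a simplified, hypothetical model of that control flow, not Gitar's engine; propose_fix, run_ci, the Fix type, and the retry limit are stand-ins introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Fix:
    description: str
    patch: str  # a diff in a real system; a plain string in this sketch


def heal(
    failure: str,
    propose_fix: Callable[[str, int], Optional[Fix]],
    run_ci: Callable[[Fix], bool],
    max_attempts: int = 3,
) -> Optional[Fix]:
    """Return the first candidate fix that passes CI, or None (hypothetical model)."""
    for attempt in range(max_attempts):
        candidate = propose_fix(failure, attempt)
        if candidate is None:
            break  # nothing more to try
        if run_ci(candidate):
            return candidate  # only a validated fix would be committed
    return None  # leave the PR for a human rather than commit an unverified change


# Toy stand-ins: the first candidate fails CI, the second passes.
candidates = [Fix("rename variable", "patch-a"), Fix("add missing import", "patch-b")]


def propose(failure: str, attempt: int) -> Optional[Fix]:
    return candidates[attempt] if attempt < len(candidates) else None


def ci(fix: Fix) -> bool:
    return fix.patch == "patch-b"


result = heal("NameError: name 'os' is not defined", propose, ci)
print(result.description if result else "no validated fix")
```

Returning None instead of the last attempt is the important design choice: an unvalidated change never reaches the branch.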

5. Implement Feedback Loops

Post comments such as "@gitar refactor to async/await" to request specific changes and refine how rules act in practice. For example, in Python:

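```python
# Before: synchronous database call that interpolates user input into SQL
def get_user(user_id):
    return db.query(f"SELECT * FROM users WHERE id = {user_id}")


# After: Gitar refactors the function to async/await and switches to a
# parameterized query, which also closes the SQL injection hole.
# (db stands for your application's database client.)
async def get_user(user_id):
    return await db.query("SELECT * FROM users WHERE id = ?", user_id)
```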

These targeted prompts help the system learn the patterns your team prefers.

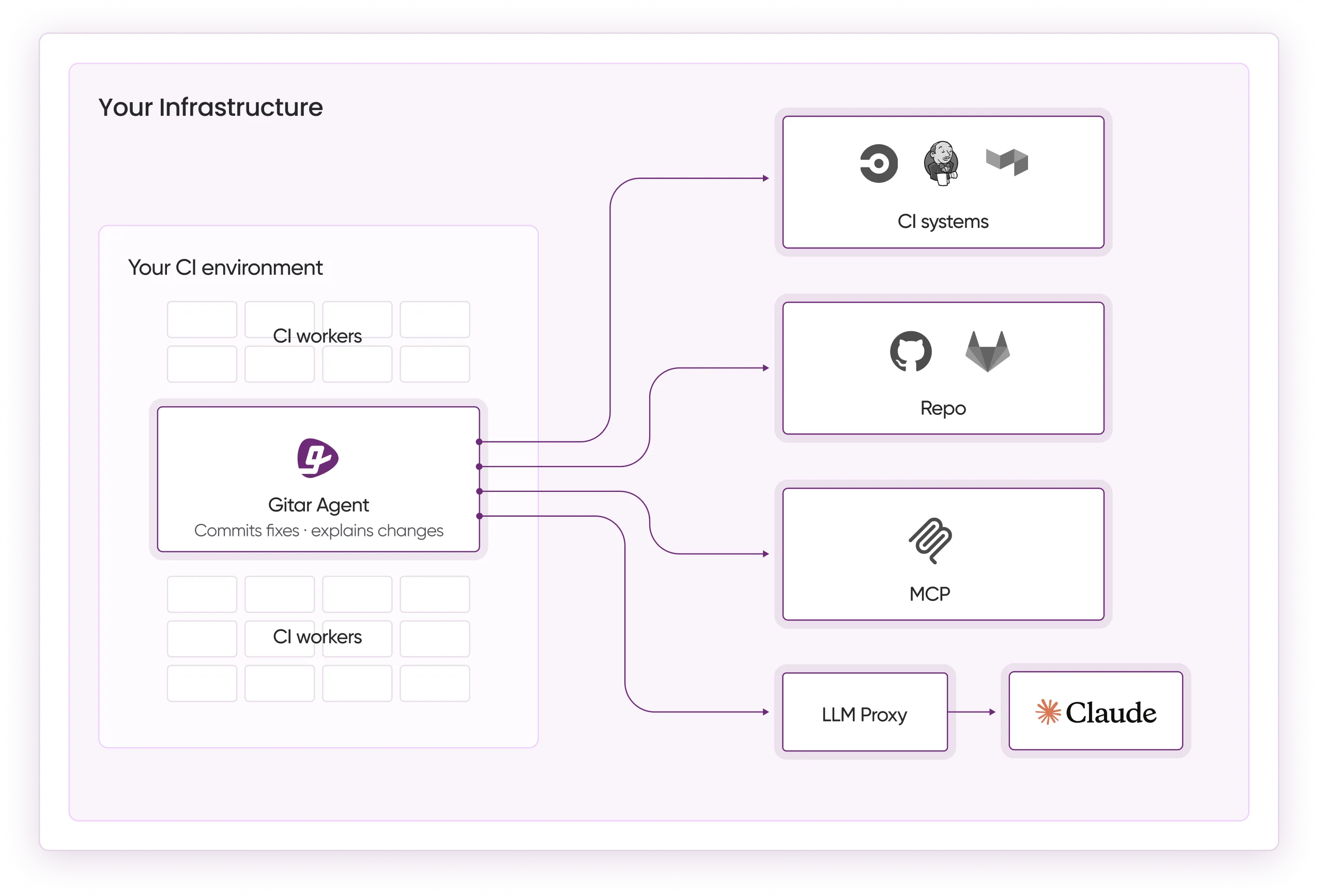

6. Configure CI Integration

Enable CI blocking for critical failures that must never reach production. Allow auto-fixes for routine issues such as linting errors, flaky or simple test failures, and straightforward dependency updates that CI can safely validate.
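One way to make this split explicit is a small routing table that maps failure categories to either blocking the merge or attempting an auto-fix. The category names and the default-to-block choice below are assumptions for illustration; they are not Gitar configuration keys.

```python
from enum import Enum


class Action(Enum):
    BLOCK = "block"        # stop the merge and require a human
    AUTO_FIX = "auto_fix"  # let the fixer try, then re-run CI


# Hypothetical policy: routine, CI-verifiable failures are auto-fixed.
POLICY = {
    "lint": Action.AUTO_FIX,
    "flaky_test": Action.AUTO_FIX,
    "simple_test_failure": Action.AUTO_FIX,
    "dependency_update": Action.AUTO_FIX,
    "security_vulnerability": Action.BLOCK,
    "migration_failure": Action.BLOCK,
}


def route(category: str) -> Action:
    # Unknown categories fall back to BLOCK so nothing critical slips through.
    return POLICY.get(category, Action.BLOCK)


for category in ["lint", "security_vulnerability", "something_new"]:
    print(category, "->", route(category).value)
```

Defaulting to BLOCK is the conservative choice: a new failure type has to be reviewed before it is trusted to auto-fix.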

7. Monitor and Iterate

Use Gitar’s analytics dashboard to track rule effectiveness, CI failure patterns, and team productivity improvements. Refine rules based on real usage so you reduce noise, cut review time, and keep false positives under control.
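To make "keep false positives under control" measurable, you can compute a per-rule false-positive rate from developer feedback and flag rules that exceed a threshold. This sketch assumes each finding has been labeled as accepted or dismissed and treats a dismissal as a false positive; the record format and rule names are hypothetical, and the 0.10 default mirrors the 10% threshold mentioned under Common Pitfalls.

```python
from collections import defaultdict

# Hypothetical feedback: (rule name, whether developers dismissed the finding).
# Treating a dismissal as a false positive is a simplifying assumption.
feedback = (
    [("security-review", False)] * 2
    + [("security-review", True)]
    + [("python-async", False)] * 9
    + [("python-async", True)]
)


def false_positive_rates(records):
    """Share of each rule's findings that developers dismissed."""
    totals = defaultdict(int)
    dismissed = defaultdict(int)
    for rule, was_dismissed in records:
        totals[rule] += 1
        dismissed[rule] += int(was_dismissed)
    return {rule: dismissed[rule] / totals[rule] for rule in totals}


def noisy_rules(records, threshold=0.10):
    """Rules whose false-positive rate is strictly above the threshold."""
    rates = false_positive_rates(records)
    return sorted(rule for rule, rate in rates.items() if rate > threshold)


print(noisy_rules(feedback))  # ['security-review']
```

Here python-async sits exactly at 10% and is not flagged, while security-review (one dismissal in three) is the rule to narrow first.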

Now that you have a working rule set, you can compare this workflow with other AI review tools and see how architecture affects day-to-day results.

Gitar vs. Competitors: Impact on Workflow and ROI

The fundamental difference between Gitar and traditional AI code review tools lies in the healing engine architecture that validates and applies fixes rather than merely suggesting them. The comparison below shows how this architecture translates into concrete capabilities and ROI that suggestion-only tools cannot match.

| Capability | CodeRabbit/Greptile/Copilot | Gitar |
|---|---|---|
| Auto-apply fixes | No | Yes |
| CI validation | No | Yes |
| Natural language rules | Limited YAML | Full .gitar/rules/*.md |
| ROI (20-dev team) | $450-900/mo for suggestions only | $750K annual savings |
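Savings figures like these depend heavily on assumptions about time saved and labor cost. A back-of-envelope calculator makes those assumptions explicit so you can plug in your own team's numbers. Every input below is a hypothetical placeholder, not the data behind the table's figure, and the linear model is a deliberate simplification.

```python
def annual_savings(
    developers: int,
    hours_saved_per_dev_per_week: float,
    loaded_hourly_cost: float,
    working_weeks_per_year: int = 48,
) -> float:
    """Estimated yearly value of engineering time saved (simple linear model)."""
    return developers * hours_saved_per_dev_per_week * loaded_hourly_cost * working_weeks_per_year


def annual_net(savings: float, monthly_tool_cost: float) -> float:
    """Subtract a year of tool subscription cost from the gross savings."""
    return savings - 12 * monthly_tool_cost


# Hypothetical inputs for a 20-developer team; replace with your own measurements.
savings = annual_savings(developers=20, hours_saved_per_dev_per_week=2, loaded_hourly_cost=100)
print(f"gross savings: ${savings:,.0f}")
print(f"net of a $900/mo tool: ${annual_net(savings, 900):,.0f}")
```

The hours-saved input dominates the result, so it is worth measuring (for example, from PR cycle times before and after rollout) rather than guessing.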

While competitors scatter inline comments across diffs, Gitar consolidates all findings into a single updating comment that reduces notification spam and keeps progress easy to follow.
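The single-comment model is essentially an upsert: find the bot's existing comment by a hidden marker and edit it, and only post a new comment if none exists. The sketch below models this with an in-memory list; the marker string and function names are hypothetical, and a real integration would call the hosting platform's comment APIs instead.

```python
from dataclasses import dataclass, field
from itertools import count

MARKER = "<!-- review-summary -->"  # hypothetical hidden marker for the bot's comment
_ids = count(1)


@dataclass
class Comment:
    body: str
    id: int = field(default_factory=lambda: next(_ids))


def upsert_summary(comments: list[Comment], summary: str) -> Comment:
    """Update the existing summary comment in place, or create it if missing."""
    body = f"{MARKER}\n{summary}"
    for comment in comments:
        if comment.body.startswith(MARKER):
            comment.body = body  # edit instead of adding another notification
            return comment
    new = Comment(body)
    comments.append(new)
    return new


pr_comments: list[Comment] = [Comment("Looks good to me")]
first = upsert_summary(pr_comments, "2 issues found, 0 fixed")
second = upsert_summary(pr_comments, "2 issues found, 2 fixed and validated in CI")
print(len(pr_comments), first.id == second.id)  # 2 True
```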

Start your free trial to automatically fix broken builds and ship higher-quality software faster.

Best Practices and Pitfalls When Defining Rules

Teams that treat AI rules as part of their engineering standards see better outcomes and less review noise. Use the practices below as a rollout plan and watch for the common mistakes that often derail early experiments.

Best Practices:

- Start with simple rules and gradually increase complexity as team confidence builds. This staged approach lets you observe behavior before adding advanced logic.

- Once basic rules work reliably, use severity levels to prioritize critical security and correctness issues over style preferences so engineers focus on high-impact problems first.

- Enable suggestion mode initially to review AI-generated fixes, then transition to auto-commit for trusted fix types after you validate their accuracy.

- Throughout this rollout, focus on structured JSON output with evidence, severity, and confidence scores so you can measure rule quality objectively.
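Structured output is what makes rule quality measurable. Below is a minimal sketch of a finding record with evidence, severity, and confidence, filtered by confidence, sorted so critical issues come first, and serialized to JSON. The field names, severity scale, and 0.5 cutoff are assumptions, not a Gitar output schema.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical severity scale: lower number = more urgent.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3, "style": 4}


@dataclass
class Finding:
    rule: str
    severity: str
    confidence: float  # 0.0 to 1.0
    evidence: str      # the code or log excerpt that triggered the finding
    file: str
    line: int


def to_report(findings: list[Finding], min_confidence: float = 0.5) -> str:
    """Drop low-confidence findings, order by severity, and emit JSON."""
    kept = [f for f in findings if f.confidence >= min_confidence]
    kept.sort(key=lambda f: (SEVERITY_ORDER[f.severity], -f.confidence))
    return json.dumps([asdict(f) for f in kept], indent=2)


findings = [
    Finding("naming-style", "style", 0.9, "def GetUser(id):", "app/users.py", 12),
    Finding("security-review", "critical", 0.8, "hashlib.md5(password)", "app/auth.py", 40),
    Finding("python-async", "medium", 0.3, "requests.get(url)", "app/client.py", 7),
]
print(to_report(findings))
```

Sorting on severity before confidence keeps a moderately confident security finding above a highly confident style nit, matching the prioritization advice above.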

Common Pitfalls:

- Over-specification that creates false positives above the recommended 10% threshold and erodes trust in the system.

- Notification spam from chatty AI tools that fragment attention across multiple comments on every PR.

- Lack of CI context that produces suggestions which fail validation once tests and checks run.

- Generic rules that ignore team-specific patterns, frameworks, and architectural decisions.

Gitar addresses these pitfalls through its single-comment model, CI-validated fixes, and natural language rules that adapt to team preferences without complex configuration.

Advanced Feedback Loops and Multi-Platform Rollouts

Mature teams extend Gitar beyond basic rule checks and use it as a feedback layer across repositories and platforms. This approach turns AI review into a continuous learning system that reflects how your organization actually ships code.

Use @gitar commands for real-time adjustments:

- @gitar refactor this function to use dependency injection

- @gitar add error handling for network timeouts

- @gitar optimize this database query for performance
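A comment-driven workflow has to pull commands out of free-form PR comments. This regex-based sketch extracts each @gitar instruction from a comment body; the one-command-per-line convention and the helper name extract_commands are assumptions for illustration, not documented Gitar behavior.

```python
import re

# One command per line: "@gitar" followed by the instruction text.
COMMAND = re.compile(r"^\s*@gitar\s+(.+?)\s*$", re.MULTILINE | re.IGNORECASE)


def extract_commands(comment_body: str) -> list[str]:
    """Return the instruction text of every @gitar line (hypothetical helper)."""
    return COMMAND.findall(comment_body)


body = """Thanks for the PR!
@gitar refactor this function to use dependency injection
@gitar add error handling for network timeouts
Ping me when CI is green."""

print(extract_commands(body))
```

Anchoring the match at the start of a line keeps ordinary prose that happens to mention the bot from being treated as a command.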

The multi-platform setup described earlier ensures consistent rule behavior across all supported environments. Agentic coding trends for 2026 highlight hierarchical memory systems that keep context per line, per PR, and per organization, which helps AI agents learn team patterns over time.
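Hierarchical memory can be pictured as scoped lookups: a preference recorded for a specific line overrides one for the PR, which overrides the organization default. This is a simplified model of the concept under that assumption, not a description of how any particular agent stores context; the scope contents and keys are hypothetical.

```python
from collections import ChainMap

# Hypothetical preferences at three scopes.
org_memory = {"error_style": "exceptions", "async_style": "asyncio", "max_line_length": 100}
pr_memory = {"max_line_length": 120}           # e.g. this PR touches generated code
line_memory = {"error_style": "result_tuple"}  # a reviewer's note on one specific line

# ChainMap searches its maps left to right, so the most specific scope wins.
context = ChainMap(line_memory, pr_memory, org_memory)

print(context["error_style"])      # result_tuple (line scope)
print(context["max_line_length"])  # 120 (PR scope)
print(context["async_style"])      # asyncio (org scope)
```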

Enterprise deployments run agents inside your CI pipeline with access to secrets, caches, and environment-specific configuration. This setup ensures that proposed fixes match real production conditions instead of passing only in isolated test environments.

Frequently Asked Questions

Can I customize AI code review rules for GitHub Copilot?

GitHub Copilot supports basic customization through copilot-instructions.md files, but these only provide suggestions without auto-fix capabilities. Gitar integrates with GitHub while offering richer natural language rule definition and automatic fix implementation that Copilot cannot match. You can run both tools together, with Gitar handling the review and fix automation that Copilot leaves to developers.

How do .gitar rules automatically fix code issues?

Gitar’s healing engine analyzes CI failures and review feedback, generates fixes with full codebase context, validates solutions against your actual CI pipeline, and commits working code directly to your PR. Unlike suggestion-only tools, every fix is tested to confirm that it resolves the issue without introducing new problems. The system maintains a single updating comment that tracks all changes and resolutions.

What does the 14-day free trial include?

The Team Plan trial provides unlimited access to all Gitar features including auto-fix capabilities, custom rule creation, CI integration across major platforms, PR summaries, security scanning, and the analytics dashboard. There are no seat limits during the trial period, so your entire team can experience the full platform before making a commitment.

Can I create Python and GitHub-specific rules?

Yes, Gitar supports language-specific and platform-specific rules. For Python, you can create rules that enforce async and await patterns, proper exception handling, or PEP 8 compliance. GitHub-specific rules can trigger based on branch protection, required reviewers, or label assignments. The natural language format keeps these customizations accessible without YAML expertise.
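To make a Python rule like "proper exception handling" concrete, here is what one such check might look like if written by hand with the standard-library ast module: it flags bare except: clauses, which also catch KeyboardInterrupt and SystemExit. This illustrates the kind of check a natural language rule could describe; it is not how Gitar evaluates rules.

```python
import ast


def find_bare_excepts(source: str) -> list[int]:
    """Return line numbers of except clauses that name no exception type."""
    tree = ast.parse(source)
    return [
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.ExceptHandler) and node.type is None
    ]


sample = """
def load(path):
    try:
        return open(path).read()
    except:
        return None

def parse(text):
    try:
        return int(text)
    except ValueError:
        return 0
"""

print(find_bare_excepts(sample))  # [5]
```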

Does Gitar work with multiple platforms simultaneously?

Gitar provides native support across the platforms shown in the comparison table, with consistent rule application in every environment. You define rules once and have them enforced regardless of which service hosts your repositories or runs your CI pipelines. This unified approach removes the need for separate tools and configurations per platform.
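Defining rules once across platforms implies a normalization layer: each host's webhook payload is mapped to a common pull-request shape before rules run. The sketch uses two real field layouts, GitHub's pull_request event (pull_request.number, head.ref, base.ref) and GitLab's merge request event (object_attributes.iid, source_branch, target_branch); the PullRequest type, the example rule, and the trimmed payloads are hypothetical simplifications.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    platform: str
    repo: str
    number: int
    title: str
    source_branch: str
    target_branch: str


def from_github(payload: dict) -> PullRequest:
    pr = payload["pull_request"]
    return PullRequest("github", payload["repository"]["full_name"], pr["number"],
                       pr["title"], pr["head"]["ref"], pr["base"]["ref"])


def from_gitlab(payload: dict) -> PullRequest:
    mr = payload["object_attributes"]
    return PullRequest("gitlab", payload["project"]["path_with_namespace"], mr["iid"],
                       mr["title"], mr["source_branch"], mr["target_branch"])


def touches_release(pr: PullRequest) -> bool:
    # A rule written once against the common shape works for both hosts.
    return pr.target_branch.startswith("release/")


gh = from_github({"repository": {"full_name": "acme/api"},
                  "pull_request": {"number": 42, "title": "Fix auth",
                                   "head": {"ref": "fix-auth"}, "base": {"ref": "release/1.2"}}})
gl = from_gitlab({"project": {"path_with_namespace": "acme/web"},
                  "object_attributes": {"iid": 7, "title": "Bump deps",
                                        "source_branch": "deps", "target_branch": "main"}})
print(touches_release(gh), touches_release(gl))  # True False
```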

Conclusion: From Suggestions to Autonomous Fixes

Custom AI code review rules shift development workflows from suggestion-heavy reviews to autonomous fix implementation. Traditional tools often charge premium prices for basic commentary, while Gitar’s natural language rules and healing engine deliver validated fixes that keep builds green.

The move from static YAML files to dynamic, feedback-driven AI rules signals the next phase of development automation. Teams that adopt platforms like Gitar reduce PR cycle times, remove repetitive manual fixes, and enforce consistent code quality across repositories.

Begin your 14-day trial to experience validated fixes and autonomous build healing that suggestion engines cannot provide.