Key Takeaways

- AI coding tools increased PR volume by 98% but review times rose 91%, shifting bottlenecks to validation and CI processes.

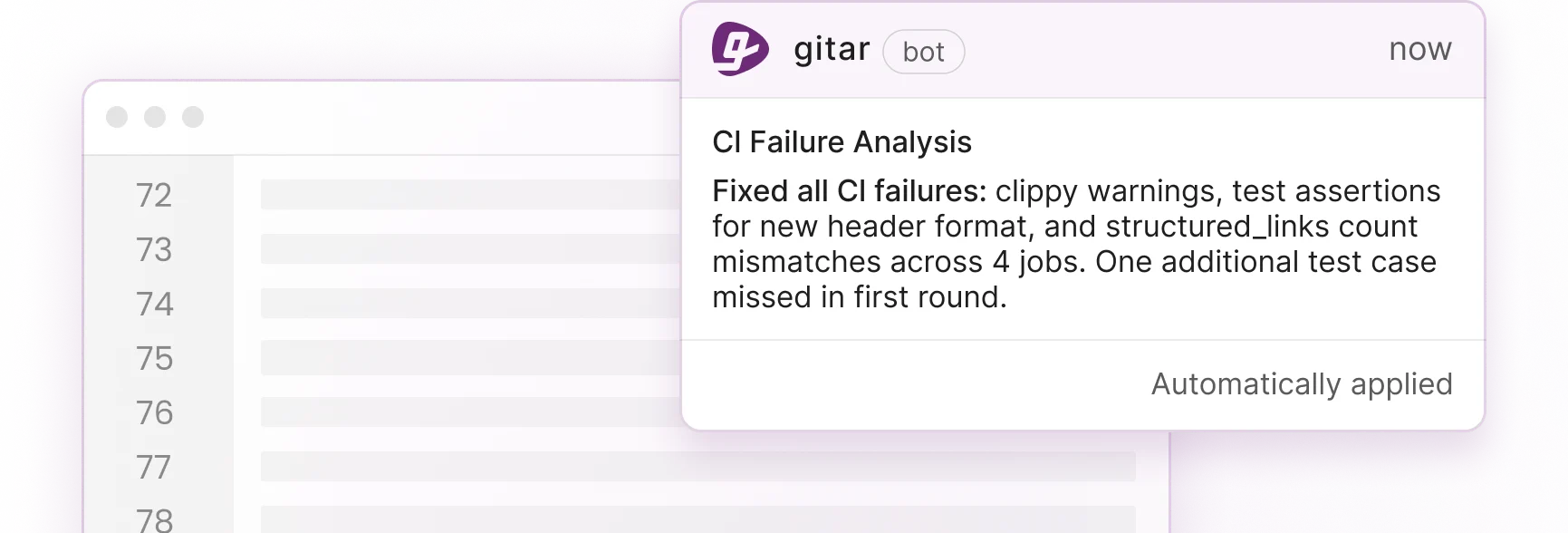

- Gitar’s healing engine auto-fixes CI failures and applies reviewer feedback, guaranteeing green builds, unlike suggestion-only tools.

- Start AI code review in suggestion mode, enforce small PRs under 400 lines, and maintain human-in-the-loop for critical code.

- Automate CI resolution, use natural language rules, and track metrics like cycle time and bug rates to measure ROI, which industry benchmarks put at 200-400%.

- Implement these practices with Gitar’s 14-day team trial to eliminate post-Copilot bottlenecks and ship faster.

The Solution: Gitar’s Healing Engine That Guarantees Green Builds

Gitar turns AI code review from suggestion into automation. When CI fails or reviewers leave feedback, Gitar does not just flag problems; it fixes them automatically. The platform analyzes failure logs, generates validated fixes, and commits working solutions directly to your PRs. See Gitar documentation for details.

| Capability | CodeRabbit/Greptile | Gitar (14-Day Team Trial) |
|---|---|---|
| PR summaries | Yes | Yes |
| Inline suggestions | Yes | Yes |
| Auto-apply fixes | No | Yes |
| CI auto-fix | No | Yes |
| Guarantee green builds | No | Yes |

For a 20-developer team spending 1 hour daily on CI and review issues, Gitar cuts this to 15 minutes per developer. That shift saves about $750,000 annually in productivity costs. Install Gitar now, automatically fix broken builds, and start shipping higher quality software faster.
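The arithmetic behind that figure can be sketched as follows. The team size and the drop from 1 hour to 15 minutes come from the text; the 250 working days per year and the $200 per hour loaded cost are assumptions chosen here because they reproduce the $750,000 figure, and the article does not state them.

```python
def annual_savings(developers, hours_before, hours_after, working_days, hourly_cost):
    """Yearly productivity savings in dollars from reduced daily CI/review time."""
    hours_saved_per_day = (hours_before - hours_after) * developers
    return hours_saved_per_day * working_days * hourly_cost

# 20 developers, 1 hour -> 15 minutes per day (from the text).
# ASSUMPTIONS: 250 working days per year, $200/hour loaded cost.
savings = annual_savings(20, 1.0, 0.25, 250, 200)
print(f"${savings:,.0f}")  # $750,000
```

Substitute your own loaded cost and working-day count; at $100 per hour the same inputs give half the savings.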

10 Best Practices for Implementing AI Code Review in Teams

1. Run a Pilot in Suggestion-Only Mode First

Start AI code review in suggestion-only mode to build team trust. Configure your AI tool to analyze PRs and provide recommendations without applying changes automatically. This setup lets developers compare AI suggestions with their own judgment and learn how the tool behaves. Gitar’s configurable trust levels help teams increase automation gradually as confidence grows. See Gitar documentation for configuration details. Track suggestion acceptance rates; healthy adoption shows rates above 15%.

2. Keep Humans in the Loop for Every AI Change

Require humans to read, review, and understand all AI-generated code before submitting it for peer review. Design feedback loops where developers can challenge AI findings and correct them. Gitar automatically implements approved feedback while humans stay responsible for critical decisions. For business-critical code sections, set a confidence threshold so that any AI change scoring below 90% always triggers extra human review.
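One way to read the threshold rule above is as a small routing check. This is a minimal sketch, not Gitar's API: the `AIChange` type, the path patterns, and the assumption that the AI tool reports a confidence score between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical path patterns for business-critical code; adjust per repository.
CRITICAL_PATTERNS = ["src/auth/*", "src/payments/*"]
CONFIDENCE_THRESHOLD = 0.90  # the 90% figure from the text

@dataclass
class AIChange:
    path: str
    confidence: float  # assumed 0.0-1.0 score reported by the AI tool

def needs_extra_review(change: AIChange) -> bool:
    """True when a change touches critical code and scores below the threshold."""
    is_critical = any(fnmatch(change.path, p) for p in CRITICAL_PATTERNS)
    return is_critical and change.confidence < CONFIDENCE_THRESHOLD

print(needs_extra_review(AIChange("src/auth/login.py", 0.82)))  # True
```

This only decides when to add review on top of the normal process; every change still gets the baseline human read described above.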

3. Enforce Small, Focused Pull Requests

Set strict PR size limits to counter AI-driven PR bloat. AI-assisted PRs are 18% larger on average, which makes them harder to review well. Add automated checks that flag PRs exceeding 400 lines of code. Smaller PRs lower cognitive load, improve review quality, and speed up feedback cycles. Configure branch protection rules that require justification for larger changes.
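A size check like this can be sketched in a few lines. The example below parses the tab-separated output of `git diff --numstat` and counts added plus deleted lines; treating "lines of code" as added plus deleted is an assumption, and the sample input is made up for illustration.

```python
MAX_CHANGED_LINES = 400  # limit from the text

def changed_lines(numstat: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue  # skip blank or malformed lines
        added, deleted = parts[0], parts[1]
        if added == "-" or deleted == "-":
            continue  # binary files report "-" instead of counts
        total += int(added) + int(deleted)
    return total

def exceeds_limit(numstat: str, limit: int = MAX_CHANGED_LINES) -> bool:
    """True when the diff is larger than the allowed PR size."""
    return changed_lines(numstat) > limit

# Hypothetical diff: two source files and one binary asset.
sample = "120\t30\tsrc/app.py\n-\t-\tassets/logo.png\n200\t75\tsrc/api.py\n"
print(changed_lines(sample), exceeds_limit(sample))  # 425 True
```

In CI, the input would come from running `git diff --numstat` against the PR's base branch, with the job failing or adding a label when `exceeds_limit` returns true.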

4. Automate CI Failure Resolution, Not Just Detection

Automated CI failure fixes deliver the biggest impact. When lint errors, test failures, or build breaks appear, advanced AI systems should analyze root causes and generate working fixes. Gitar’s healing engine performs this analysis automatically, validates fixes against your full CI pipeline, and commits solutions that guarantee green builds. This approach removes the manual grind of reading CI logs and hand-writing fixes.

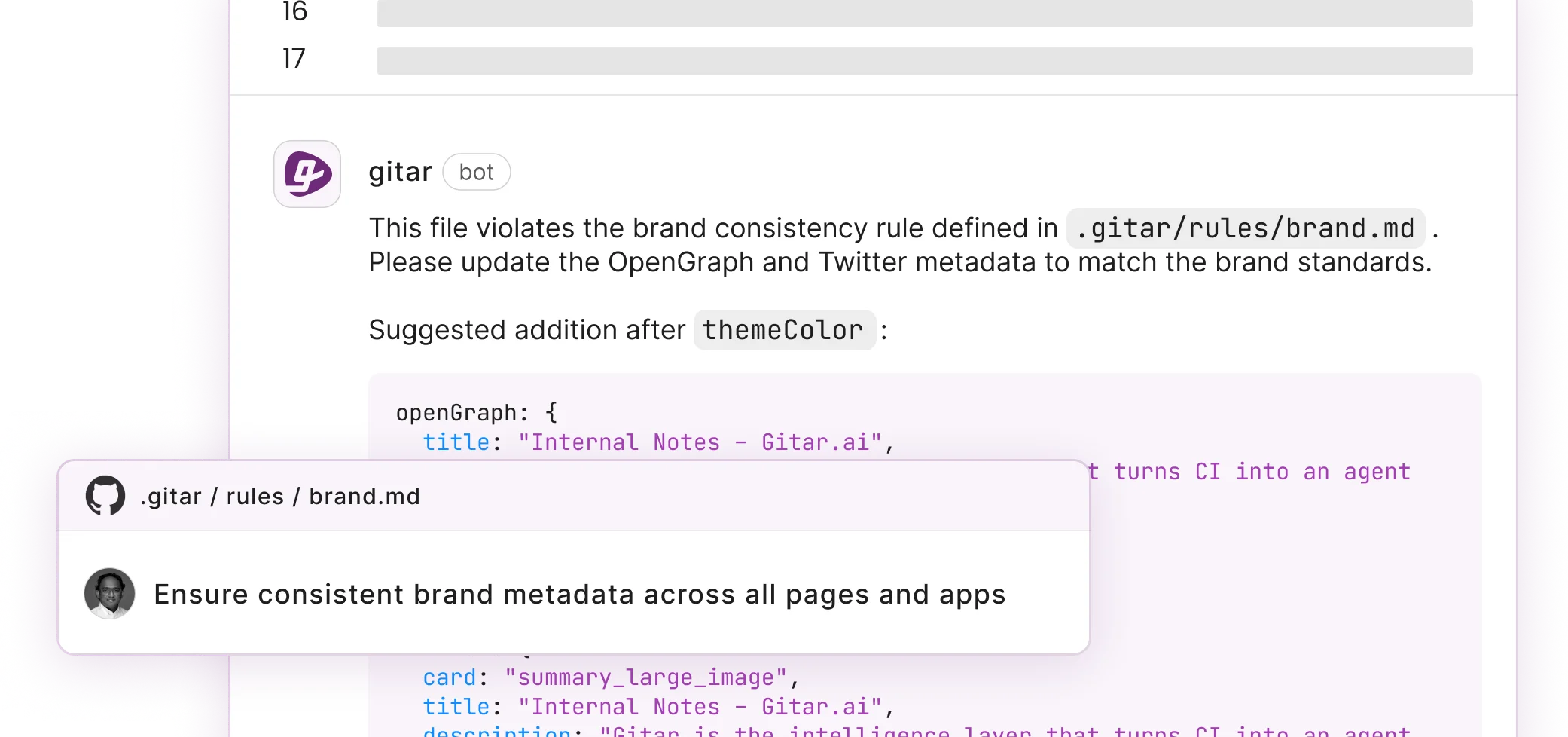

5. Set Clear AI Scope and Guardrails

Define explicit policies for where and how AI can change code. Treat AI-generated code as untrusted by default, with extra scrutiny on authentication, authorization, and state management. Create repository-specific rules using natural language policies. Gitar lets teams define rules in .gitar/rules/*.md files without complex YAML, so governance stays accessible to every contributor. See Gitar documentation for rule configuration.

6. Build Continuous Feedback Loops into AI Reviews

Track override rates, error rates, and time spent on verification to maintain exception logs and retrain models. Monitor how often developers reject AI suggestions and look for patterns in those rejections. Gitar provides analytics dashboards that surface AI performance metrics and highlight weak spots. Use this data to tune AI sensitivity and cut false positives that create alert fatigue.
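As a rough illustration of the tracking described above, the sketch below computes an override rate and groups rejections by category. The record shape (`category`, `accepted`) and the sample log are hypothetical; a real tool would export its own format.

```python
from collections import Counter

def override_rate(outcomes):
    """Fraction of AI suggestions that developers rejected."""
    if not outcomes:
        return 0.0
    rejected = sum(1 for o in outcomes if not o["accepted"])
    return rejected / len(outcomes)

def rejections_by_category(outcomes):
    """Count rejected suggestions per category, most frequent first."""
    counts = Counter(o["category"] for o in outcomes if not o["accepted"])
    return counts.most_common()

# Hypothetical suggestion log for illustration only.
log = [
    {"category": "style", "accepted": False},
    {"category": "style", "accepted": False},
    {"category": "security", "accepted": True},
    {"category": "naming", "accepted": False},
]
print(override_rate(log))                 # 0.75
print(rejections_by_category(log)[0])     # ('style', 2)
```

A category that dominates the rejection list is a likely candidate for a sensitivity change or a rule rewrite.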

7. Consolidate AI Feedback into Structured Comments

Reduce notification spam by grouping AI feedback into single, updating comments. Traditional tools scatter inline comments across diffs and flood developer inboxes. Gitar uses a dashboard-style comment that updates in place and shows all findings in one location, including CI analysis, review feedback, and rule evaluations. When issues are resolved, they collapse automatically so the interface stays clean and developers keep a single source of truth.

8. Watch for Alert Fatigue and AI Bias

AI reviewing AI-generated code creates confirmation bias, with logic and correctness issues 75% more common in AI PRs. Counter this pattern by using multiple AI models that can disagree and flag risky areas for humans. Require AI to show its work and analyze the entire codebase context, not just individual changes. This practice improves transparency and helps reviewers trust or reject suggestions faster.
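The multi-model idea can be sketched as a disagreement filter: any finding on which the models' verdicts differ is routed to a human. The model names, verdict labels, and finding IDs below are hypothetical placeholders.

```python
def disputed_findings(verdicts):
    """Return IDs of findings on which the models' verdicts differ."""
    return sorted(
        finding_id
        for finding_id, by_model in verdicts.items()
        if len(set(by_model.values())) > 1
    )

# Hypothetical verdicts from two independent review models.
verdicts = {
    "auth.py:42": {"model_a": "flag", "model_b": "approve"},
    "utils.py:10": {"model_a": "approve", "model_b": "approve"},
}
print(disputed_findings(verdicts))  # ['auth.py:42']
```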

9. Scale Workflows with Natural Language Rules

Replace fragile, YAML-heavy CI configuration with natural language rules that more teammates can manage. Traditional CI setups demand YAML expertise and dedicated DevOps support. Instead, let teams write rules like, “When PRs modify authentication code, assign security team and add security-review label.” This approach opens CI customization to more engineers and removes the bottleneck of specialized workflow knowledge.

10. Measure AI Code Review ROI with Hard Numbers

Track cycle time before and after adoption, code review turnaround time, bug rates, and deployment frequency. Industry benchmarks show mid-market companies reach 200-400% ROI with 8-15 month payback periods. Gitar’s dashboard exposes time savings, velocity gains, and productivity improvements so teams can quantify the impact of AI code review.
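A before-and-after comparison of cycle time can be as simple as the sketch below. It compares medians rather than means so that one unusually slow PR does not dominate; the sample cycle times are invented for illustration.

```python
from statistics import median

def percent_change(before, after):
    """Relative change in the median, as a percentage (negative means faster)."""
    base = median(before)
    return (median(after) - base) / base * 100

# Hypothetical PR cycle times in hours, before and after adoption.
before_hours = [30, 40, 26, 55, 44]
after_hours = [20, 30, 18, 41, 33]
print(f"{percent_change(before_hours, after_hours):.1f}%")  # -25.0%
```

The same function works for review turnaround time or any other duration metric, as long as both samples cover comparable periods and teams.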

Overcoming Copilot Bias and Confirmation Issues

AI models that review their own generated code create systematic blind spots. At companies with high AI adoption, 9.5% of PRs were bug fixes, versus 7.5% at low-adoption companies, which suggests that more defects slip through faster review cycles. Reduce this risk with diverse validation approaches and consistent human oversight for architectural and high-impact decisions.

Human-in-the-Loop Workflows with Auto-Fixes

Avoid making humans the bottleneck in the innermost loops. Use agents in the outer loops, with humans directing improvements to the evaluation harnesses. Configure AI systems to handle routine fixes automatically while routing complex or ambiguous changes to human reviewers. This hybrid model keeps quality high while still capturing the speed gains of automation.

Frequently Asked Questions

How should teams handle AI-generated code reviews?

Treat all AI-generated code as untrusted and require human verification before merging. Use tiered review processes so critical systems like authentication and payment processing receive extra scrutiny. Prefer AI tools that provide concrete evidence for their suggestions instead of vague explanations. Keep clear accountability chains where humans remain responsible for all merged code. Configure your AI review system to highlight high-risk areas and require manual approval for changes that touch security or core business logic.

What are the most effective best practices for AI code review based on community feedback?

Developer communities recommend starting in suggestion-only mode to build trust, then enabling automation for low-risk changes. Successful teams shrink PR sizes to offset AI-driven volume increases and rely on consolidated feedback systems to avoid notification fatigue. They also define clear governance policies for different code areas. The most powerful practice is choosing tools that fix issues automatically instead of only pointing them out, because that shift addresses the real scaling bottleneck.

How can teams integrate AI code review without compromising existing quality standards?

Keep current human review requirements and add AI as an extra analysis layer. Configure AI tools to enforce your coding standards and style guides, and use them to catch issues that humans might miss under heavy load. Build feedback loops where developers correct AI mistakes so the system learns your team’s expectations. Start with non-critical repositories to validate the AI’s behavior before expanding into production systems.

Should teams trust automated commits from AI code review tools?

Begin with manual approval for every AI-suggested change so you can evaluate accuracy and build confidence. After trust grows, allow automatic commits for low-risk categories such as formatting fixes, dependency updates, or simple lint corrections. Keep an easy way to revert automated changes and always require human approval for modifications that affect business logic, security, or architecture. Expand automation scope gradually based on demonstrated reliability.
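The staged policy above can be expressed as an allowlist check. This is a sketch under stated assumptions: the category and area labels are hypothetical names for the groups the answer mentions, and `automation_enabled` stands in for whatever switch a team uses to end its manual-approval phase.

```python
# Low-risk categories named in the text as candidates for automatic commits.
AUTO_COMMIT_CATEGORIES = {"formatting", "dependency-update", "lint"}
# Areas the text says always need human approval.
HUMAN_ONLY_AREAS = {"business-logic", "security", "architecture"}

def decide(category: str, areas: set[str], automation_enabled: bool) -> str:
    """Return 'auto-commit' or 'human-approval' for a proposed AI fix."""
    if not automation_enabled:
        return "human-approval"  # pilot phase: approve everything manually
    if areas & HUMAN_ONLY_AREAS:
        return "human-approval"  # sensitive areas never auto-commit
    if category in AUTO_COMMIT_CATEGORIES:
        return "auto-commit"
    return "human-approval"  # default to the safe path

print(decide("lint", set(), True))          # auto-commit
print(decide("lint", {"security"}, True))   # human-approval
```

Defaulting to human approval means a new or unrecognized category never auto-commits until someone adds it to the allowlist deliberately.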

How does Gitar compare to suggestion-only tools like CodeRabbit and Greptile?

Gitar delivers a full automation platform instead of only comments that still need manual work. Suggestion-only tools like CodeRabbit and Greptile charge $15-30 per developer while leaving implementation to humans. Gitar analyzes problems, generates fixes, validates them against your CI pipeline, and commits working solutions automatically. This approach removes the manual toil of interpreting suggestions and applying changes, which creates real productivity gains instead of minor assistance.

What ROI can a 20-developer team expect from implementing AI code review?

Teams often see meaningful time savings within the first month. A 20-developer team that spends one hour daily on CI and review issues can cut that to 15 minutes per developer with effective AI automation. That change represents roughly $750,000 in annual productivity savings. Tools that provide real automation, not just suggestions, unlock this level of ROI because they remove the remaining manual effort.

Conclusion: Reclaim Engineering Velocity with AI Code Review

The AI coding wave moved the bottleneck from writing code to reviewing and validating it. Teams that apply these 10 best practices can scale review capacity without lowering quality. Start with pilot programs, keep humans in the loop, enforce small PRs, automate CI fixes, and measure ROI with concrete metrics.

The crucial decision is choosing tools that solve problems instead of only identifying them. Suggestion engines keep teams stuck in manual implementation cycles. Autonomous platforms like Gitar’s healing engine deliver real productivity gains by fixing issues automatically and guaranteeing green builds.