Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- GitHub PR review times surged 91% in 2026 despite AI coding tools, shifting bottlenecks to validation and merging.

- Gitar leads with a 5-minute setup, a 14-day unlimited Team Plan trial, and auto-fix capabilities that repair failing CI builds automatically.

- Most “free” tools, such as CodeRabbit and PR-Agent, still cost teams $300-900 per month in paid seats or LLM API usage, and they only provide suggestions, not fixes.

- Self-hosted options require weeks of configuration, GPU infrastructure, and ongoing maintenance, often exceeding managed solution costs.

- Teams can reduce manual work and ship faster by trying Gitar’s healing engine in a 14-day Team Plan trial.

Top 3 AI PR Review Tools at a Glance

The leading solutions take very different approaches to automated PR review. The table below highlights the critical difference: CodeRabbit OSS and PR-Agent stop at suggestions, while Gitar provides auto-fixing with CI validation during its trial and has the fastest setup of the three.

| Tool | Setup Time | Real Cost/PR | Auto-Fix CI? |
|---|---|---|---|
| Gitar | 5 minutes | $0 (14-day trial) | Yes (Trial) |
| CodeRabbit OSS | 15-30 minutes | $0.008-0.015 | No |
| PR-Agent | Weeks of configuration | $0.01-0.02 | No |

1. Gitar – Healing Engine That Fixes CI and Review Feedback

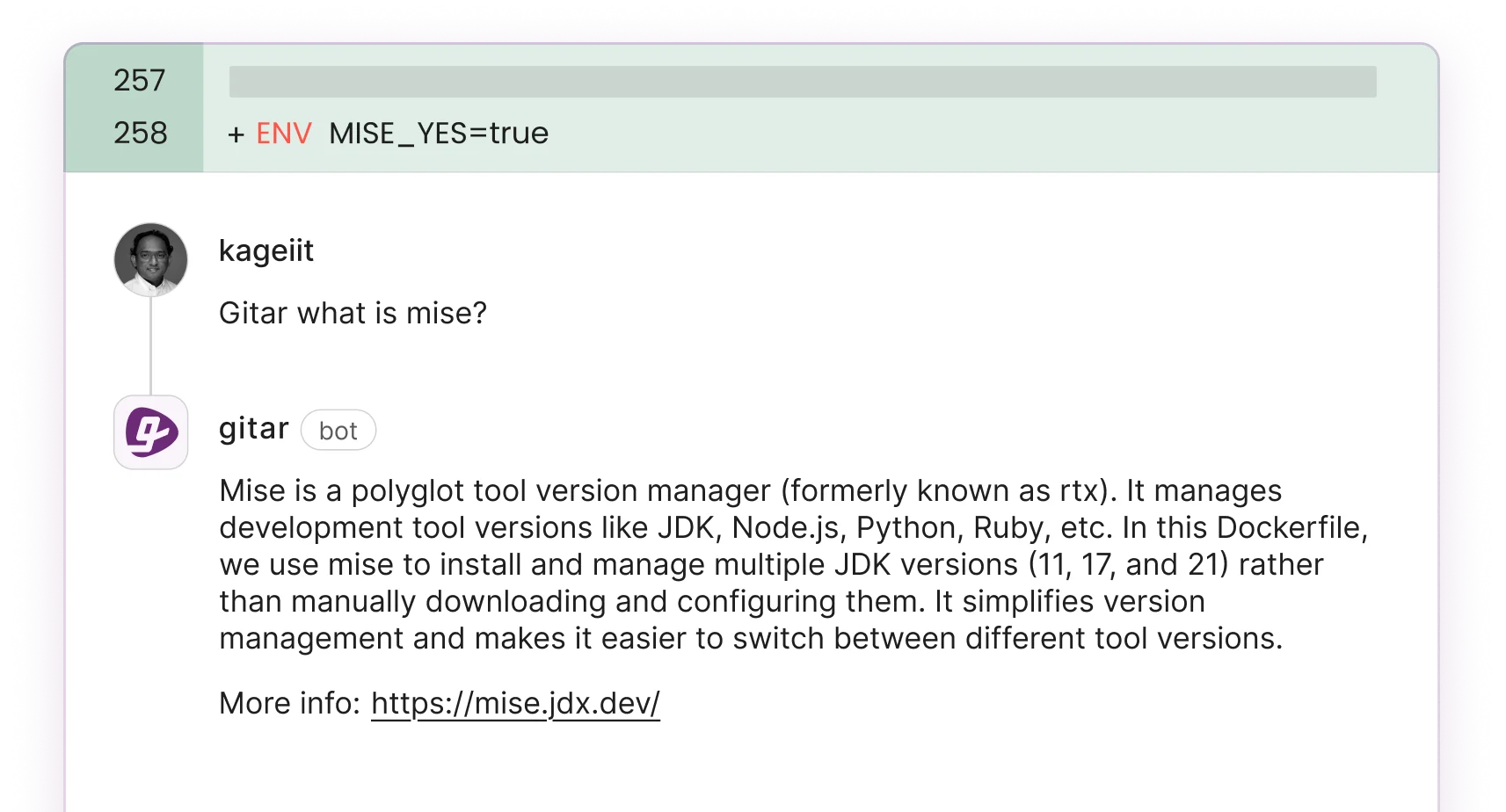

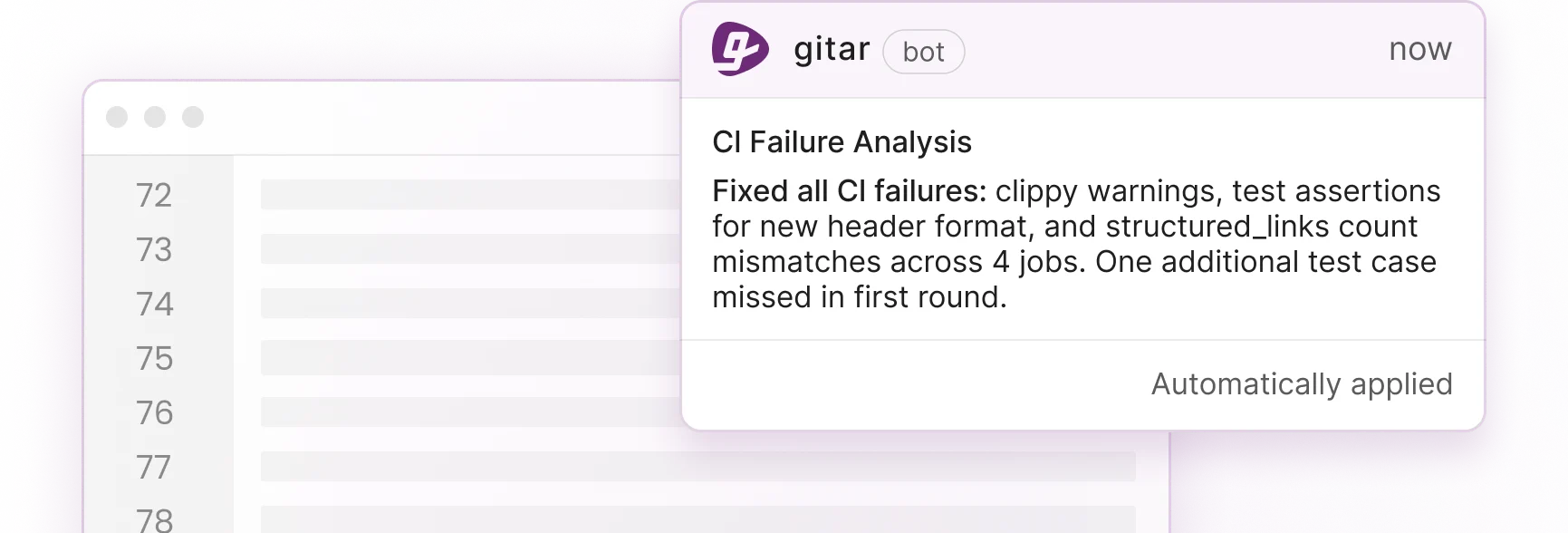

Gitar stands apart by offering a 14-day Team Plan trial with unlimited access to its healing engine. It is the only tool in this list that, during the trial, automatically fixes CI failures and implements review feedback rather than just suggesting changes. When lint errors, test failures, or build breaks occur, Gitar’s CI agent, which retains full context from PR opening to merge, keeps CI green by finding the root cause, fixing it, and verifying the result. See the Gitar documentation for setup details.

Setup requires installing the GitHub App and enabling the trial, which matches the 5-minute setup time shown earlier. Unlike competitors that flood PRs with dozens of inline comments, Gitar consolidates all information in one living “Dashboard” comment that stays updated in real time, appears only when meaningful, and moves down the activity timeline as changes are made.

Key features include automatic CI failure resolution, review feedback implementation via @gitar mentions, natural language workflow rules, and deep analytics. The platform integrates with GitHub, GitLab, Jira, Slack, and all major CI systems. Recent updates added configurable PR merge blocking based on review severity and inline code comments with verdict badges.
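Merge blocking based on review severity amounts to a threshold check over review findings. The Python sketch below illustrates that general idea only: `Severity`, `Finding`, `should_block_merge`, and `blocking_findings` are hypothetical names, and this is not Gitar’s configuration format or implementation.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity scale; IntEnum ordering lets us compare with >=."""
    INFO = 0
    MINOR = 1
    MAJOR = 2
    CRITICAL = 3


@dataclass(frozen=True)
class Finding:
    """One review finding attached to a pull request (illustrative shape)."""
    path: str
    message: str
    severity: Severity


def should_block_merge(findings: list[Finding], threshold: Severity) -> bool:
    """Block the merge if any finding is at or above the configured threshold."""
    return any(f.severity >= threshold for f in findings)


def blocking_findings(findings: list[Finding], threshold: Severity) -> list[Finding]:
    """Return only the findings that trigger the block, most severe first."""
    hits = [f for f in findings if f.severity >= threshold]
    return sorted(hits, key=lambda f: f.severity, reverse=True)


if __name__ == "__main__":
    review = [
        Finding("api/auth.py", "Token compared with == instead of a constant-time check", Severity.CRITICAL),
        Finding("web/app.ts", "Unused import", Severity.MINOR),
    ]
    print(should_block_merge(review, Severity.MAJOR))      # True
    print(should_block_merge(review[1:], Severity.MAJOR))  # False
```

Raising the threshold to `CRITICAL` lets minor and major findings through while still stopping the riskiest changes, which is one way a team might phase in blocking gradually.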

Pros: True automation with auto-fixes during trial, single clean comment interface, comprehensive 14-day trial, CI healing capabilities, cross-platform support

Cons: Trial limited to 14 days

Ideal for: Teams seeking genuine automation that reduces manual work rather than adding more comments

2. CodeRabbit OSS – Deep Analysis with Comment Clutter

CodeRabbit offers a free tier for open-source projects and personal use, providing detailed PR walkthroughs using large language models combined with 40+ code analyzers. In AIMultiple’s 2026 evaluation of 309 pull requests, CodeRabbit achieved 4/5 ratings on correctness and actionability, though it scored only 1/5 on completeness.

The tool integrates with GitHub, GitLab, Bitbucket, and Azure DevOps through cloud or self-hosted deployment. Setup involves connecting repositories and configuring review preferences, typically completed in 15-30 minutes. CodeRabbit provides a conversational chat interface in PR comments for follow-up questions and is highly configurable, including nitpickiness levels and custom rules.

Pros: Detailed analysis, conversational interface, multi-platform support, learning from team feedback

Cons: Many comments clutter the GitHub timeline, paid plans start at $12-30 per user monthly for team use, suggestions only

Ideal for: Open-source projects and individual developers comfortable with comment-heavy interfaces

3. ai-codereviewer – Minimal GitHub Action for Basic Checks

For teams seeking even simpler setup than CodeRabbit at the cost of fewer features, ai-codereviewer provides a minimalist approach through GitHub Actions integration. This open-source tool focuses on essential code review functionality without extensive configuration overhead. Setup involves adding a workflow file to your repository’s .github/workflows directory.

The tool analyzes code changes and provides feedback through PR comments, supporting OpenAI and compatible API providers. While straightforward to implement, it requires API keys and incurs per-request costs similar to other suggestion-based tools.

Pros: Simple GitHub Actions integration, minimal configuration, lightweight footprint

Cons: Basic feature set, API costs accumulate, suggestions only, limited customization

Ideal for: Small teams wanting basic AI review without complex setup

4. PR-Agent – Self-Hosted Control with Heavy Setup

CodiumAI’s PR-Agent offers comprehensive self-hosted deployment for complete data control. With 9,800 GitHub stars and 188 contributors, this Apache 2.0 licensed tool supports flexible LLM model selection and GitHub Actions integration. AugmentCode’s 2026 evaluation highlights data sovereignty benefits for regulated industries, though it notes GPU requirements for performant local models and configuration complexity that exceeds non-AI alternatives.

Setup involves configuring deployment infrastructure, selecting AI models, and establishing API connections. Each component requires specialized expertise: infrastructure teams must provision GPU resources, ML engineers must evaluate and tune models, and DevOps must integrate with existing CI/CD pipelines. According to documentation, this multi-team coordination typically takes 6-13 weeks, including infrastructure provisioning and integration. Self-hosting adds infrastructure costs, ongoing maintenance, and regular model updates, but it enables full customization and avoids vendor lock-in.

Pros: Complete data sovereignty, flexible model selection, active development community, customizable deployment

Cons: Configuration complexity, GPU infrastructure requirements, ongoing LLM API costs, exposure to prompt-injection attacks

Ideal for: Organizations requiring data sovereignty with technical resources for self-hosting

5. GitHub Copilot Code Review – Fast but Shallow Feedback

GitHub Copilot added PR review capabilities in late 2025, analyzing pull requests in under 30 seconds with zero additional setup for existing subscribers. Its reviews are comparatively shallow, lacking project context from Jira tickets or Slack discussions, and the feature requires an existing GitHub Copilot subscription.

GitHub Copilot’s free tier limits users to 50 chat requests and 2,000 completions per month; once the quota is exhausted, only basic features remain. The analysis focuses on style and obvious bugs rather than architectural concerns, and its comments can be noisy without careful configuration.

Pros: Zero setup for Copilot users, fast analysis, integrated GitHub experience

Cons: Surface-level reviews, monthly quotas, requires Copilot subscription, suggestions only

Ideal for: Existing Copilot subscribers seeking basic automated feedback

If Copilot’s surface-level reviews and monthly quotas feel limiting, see how Gitar’s healing engine works in your repository by installing the GitHub App in under 5 minutes.

6. SonarQube Community Edition – Proven Static Analysis

SonarQube Community Edition provides static code analysis with GitHub integration through pull request decoration. While not AI-powered in the modern sense, it offers code quality checks, security vulnerability detection, and technical debt analysis.

Setup requires deploying SonarQube server infrastructure and configuring project analysis. The tool excels at detecting code smells, security hotspots, and maintainability issues but lacks the contextual understanding of AI-powered alternatives.

Pros: Comprehensive static analysis, security focus, established enterprise adoption, no API costs

Cons: Infrastructure overhead, not AI-powered, limited contextual understanding, suggestions only

Ideal for: Teams prioritizing security and code quality metrics over AI-powered insights

7. Emerging Local AI Solutions – High-Privacy Experiments

Beyond established tools, several experimental approaches attempt to eliminate API costs entirely through local model deployment, though at significant complexity cost. These solutions use local AI models through Ollama, local GPU deployment, or edge computing approaches and aim to maintain strict privacy.

Examples include custom implementations using Code Llama, StarCoder, or other open-source models. Setup complexity varies dramatically based on chosen architecture, often requiring days of configuration and ongoing maintenance.
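A minimal version of such a custom setup can be sketched in Python against Ollama’s local REST API (`POST /api/generate`). This is an illustration under stated assumptions, not any tool’s actual implementation: it assumes an Ollama server on its default port 11434, a Code Llama model already pulled under the name `codellama`, and `origin/main` as the base branch. The helper names are hypothetical, and posting the review back to the PR is left out.

```python
import json
import subprocess
import urllib.request

# Assumptions: Ollama runs locally on its default port and `ollama pull codellama` was done.
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "codellama"


def build_review_request(diff: str, model: str = MODEL) -> dict:
    """Build the JSON body for Ollama's /api/generate endpoint."""
    prompt = (
        "You are a code reviewer. List bugs, risky changes, and missing tests "
        "in the following unified diff. Be concise.\n\n" + diff
    )
    # stream=False asks Ollama for one JSON object instead of a stream of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def review_diff(diff: str, url: str = OLLAMA_URL) -> str:
    """Send the diff to the local model and return the generated review text."""
    body = json.dumps(build_review_request(diff)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    # Local models can be slow on modest hardware, hence the generous timeout.
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.loads(resp.read())["response"]


def current_branch_diff(base: str = "origin/main") -> str:
    """Diff of the current branch against its merge base with `base` (assumed name)."""
    return subprocess.run(
        ["git", "diff", f"{base}...HEAD"], capture_output=True, text=True, check=True
    ).stdout
```

Usage from inside a checked-out repository: `print(review_diff(current_branch_diff()))`. Even this small sketch hints at the maintenance surface: prompt design, model choice, timeouts for large diffs, and GPU capacity all become the team’s responsibility.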

Pros: Complete privacy, no API costs, customizable models, learning opportunities

Cons: Experimental stability, high technical requirements, significant setup time, limited support

Ideal for: Technical teams with specific privacy requirements and resources for experimentation

Side-by-Side Cost and Trial Comparison

The table below expands the initial comparison to show how trial limitations and real-world costs scale for a typical 30-developer team, revealing the hidden expenses behind “free” tools.

| Tool | Setup Time | Trial / Free Access | Auto-Fix CI? | Platforms | Real Cost (30-dev team, monthly) |
|---|---|---|---|---|---|
| Gitar | 5 min | 14 days, unlimited | Yes (Trial) | GitHub/GitLab | $0 during trial |
| CodeRabbit OSS | 15-30 min | OSS only | No | Multi-platform | $360-900 |
| PR-Agent | Weeks | Unlimited (OSS) | No | GitHub primary | $300-600 |
| GitHub Copilot | 0 min | 50 requests/mo | No | GitHub only | $570 |
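The seat-based figures in this table follow from simple multiplication. The Python sketch below reproduces the CodeRabbit range from the $12-30 per-user pricing quoted in its section and shows the per-seat price implied by the Copilot figure. It covers subscription seats only; usage-based LLM API spend, such as PR-Agent’s, depends on review volume and the chosen provider and is not modeled here.

```python
def monthly_seat_cost(developers: int, price_per_seat: float) -> float:
    """Monthly cost of a per-user subscription."""
    return developers * price_per_seat


def monthly_seat_cost_range(developers: int, low: float, high: float) -> tuple[float, float]:
    """Cost range when the per-seat price depends on the plan tier."""
    return monthly_seat_cost(developers, low), monthly_seat_cost(developers, high)


TEAM_SIZE = 30

# CodeRabbit paid plans: $12-30 per user per month.
print(monthly_seat_cost_range(TEAM_SIZE, 12, 30))  # (360, 900)

# Per-seat price implied by the table's $570/mo Copilot figure.
print(570 / TEAM_SIZE)  # 19.0
```

Because seat pricing scales linearly, doubling the team to 60 developers doubles these bills, which is why the “free tier” framing matters less as a team grows.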

Hidden Costs and Tradeoffs Behind “Free” Tools

The “free” AI PR review landscape conceals significant operational expenses. Open-source tools like PR-Agent incur ongoing LLM API costs when using external providers, plus potential GPU infrastructure requirements for local deployment. Teams frequently discover monthly bills of $450-900 after initial “free” trials end.

Most tools operate as suggestion engines: they identify issues but leave the fixes to developers. Because developers must stop their current work to apply these suggestions, this approach adds context switching rather than reducing it. More critically, when a suggestion addresses a CI failure, the manual cycle (read the suggestion, write a fix, push the change, wait for CI, and repeat if the fix is wrong) perpetuates rather than resolves the core bottleneck of getting code through CI pipelines. For solo developers, open-source options provide reasonable value. For teams seeking genuine productivity gains, the ROI calculation favors platforms that actually reduce manual work rather than generating more comments to process.
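The cost of that manual cycle compounds with every failed attempt. As a rough model, and purely an assumption for illustration, treat each manual fix as an independent attempt that passes CI with probability p; the number of attempts then follows a geometric distribution with mean 1/p. The Python sketch below applies that model. The input values are hypothetical, not measurements, and the model ignores context-switching overhead entirely.

```python
def expected_attempts(p_success: float) -> float:
    """Expected tries until the first passing fix, assuming independent attempts
    that each pass CI with probability p_success (geometric distribution)."""
    if not 0 < p_success <= 1:
        raise ValueError("p_success must be in (0, 1]")
    return 1 / p_success


def expected_cycle_minutes(fix_minutes: float, ci_minutes: float, p_success: float) -> float:
    """Expected wall-clock time for the read-fix-push-wait loop."""
    return (fix_minutes + ci_minutes) * expected_attempts(p_success)


if __name__ == "__main__":
    # Hypothetical inputs: 10 min to read and fix, a 15 min CI run, 50% first-try pass rate.
    print(expected_cycle_minutes(10, 15, 0.5))  # 50.0
```

Under this model, halving the pass rate doubles the expected time, and a slow CI pipeline is paid for again on every retry.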

Frequently Asked Questions

What’s the best AI PR reviewer with free trial access for teams?

Gitar’s 14-day Team Plan trial provides the most comprehensive free access, including unlimited users, auto-fix capabilities, and full platform features during the trial. Unlike competitors that limit free tiers to open-source projects or individual use, Gitar’s trial enables teams to evaluate true automation without restrictions.

How do CodeRabbit’s free and paid tiers compare?

CodeRabbit’s free tier restricts usage to open-source projects and personal repositories. Paid plans, starting at $12-30 per user per month, unlock team features, private repository access, and advanced analytics. The free tier provides full functionality within its scope, but commercial team use requires a paid plan, which becomes expensive as the team grows.

Can I run AI code review locally without API costs?

Teams can run AI code review locally through self-hosted solutions like PR-Agent with local models or custom implementations using Ollama. However, these approaches require significant technical expertise, GPU infrastructure, and ongoing maintenance. The total cost of ownership often exceeds managed solutions when factoring in engineering time and hardware requirements.
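That total-cost-of-ownership comparison can be made concrete with a back-of-the-envelope model. The Python sketch below is a hypothetical illustration: every input (engineer rate, maintenance hours, GPU spend, managed fee) is an assumption to replace with your own numbers, and only the 6-week setup value echoes the lower bound quoted in the PR-Agent section. `SelfHostedInputs` and the functions are illustrative names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SelfHostedInputs:
    """Hypothetical cost inputs for a self-hosted AI reviewer (replace with your own)."""
    setup_weeks: float                  # engineering time to stand the system up
    maintenance_hours_per_month: float  # model updates, prompt tuning, upgrades
    engineer_hourly_rate: float         # fully loaded cost per engineering hour
    gpu_cost_per_month: float           # cloud GPU rental or amortized hardware


def self_hosted_first_year(c: SelfHostedInputs, hours_per_week: float = 40) -> float:
    """One-time setup labor plus 12 months of maintenance labor and GPU spend."""
    # Assumes a single engineer works on setup full time.
    setup = c.setup_weeks * hours_per_week * c.engineer_hourly_rate
    monthly = c.maintenance_hours_per_month * c.engineer_hourly_rate + c.gpu_cost_per_month
    return setup + 12 * monthly


def managed_first_year(monthly_fee: float) -> float:
    """Twelve months of a managed subscription with no infrastructure of its own."""
    return 12 * monthly_fee


# All numbers below are illustrative assumptions, not benchmarks.
inputs = SelfHostedInputs(
    setup_weeks=6,
    maintenance_hours_per_month=10,
    engineer_hourly_rate=100,
    gpu_cost_per_month=500,
)
print(self_hosted_first_year(inputs))  # 42000
print(managed_first_year(900))         # 10800
```

With these assumed inputs, setup labor alone ($24,000) exceeds a full year of a $900/month managed plan; with different rates or an existing GPU fleet the balance can shift, so the model is only as good as its inputs.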

How do I integrate AI PR review with existing CI pipelines?

Most tools integrate through GitHub Actions, webhooks, or direct API connections. Gitar provides the deepest CI integration, automatically analyzing failures and implementing fixes within your existing pipeline. Other tools typically require manual workflow configuration and do not interact with CI beyond basic status reporting.

Is automated code fixing safe for production repositories?

Gitar’s auto-fix capabilities include validation against your CI environment before committing changes, which ensures fixes actually resolve issues. The system supports configurable approval workflows, allowing teams to start in suggestion mode and gradually enable automation for trusted fix types. All changes are auditable and reversible through standard Git workflows.

Conclusion and Next Steps

The AI PR review landscape divides between suggestion engines that add comments and healing engines that actually fix code. Suggestion engines like CodeRabbit OSS and PR-Agent provide valuable analysis but perpetuate the bottleneck by requiring manual implementation, so developers still write the fix, push it, and wait for CI to validate. Healing engines like Gitar eliminate this manual cycle by automatically resolving CI failures and implementing review feedback, guaranteeing green builds rather than just identifying problems.

For teams drowning in PR backlogs despite AI coding adoption, the answer is not more comments but genuine automation that reduces manual work. Ready to move beyond suggestion engines? Install Gitar and let your CI heal itself.