Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for Hybrid Code Review in 2026

- AI tools increase code generation 3-5x, while review time jumps 91% due to larger PRs and more defects, costing a 20-developer team roughly $1M in lost productivity each year.

- Manual reviews provide context and mentorship but cannot keep up with AI-driven code volume, while automation delivers speed yet struggles with accuracy and false positives.

- Hybrid workflows win by automating routine issues such as formatting and common security patterns and reserving manual review for architecture and business logic.

- Gitar’s healing engine auto-fixes CI failures with validation and direct PR commits, unlike suggestion-only tools, cutting productivity losses by roughly 75%.

- Implement hybrid workflows today and start healing builds automatically with Gitar’s 14-day trial so your team can ship higher-quality software faster.

The 2026 Reality: AI Coding Boom, Slower Reviews

Current data shows a clear bottleneck in review capacity. Greptile’s internal engineering velocity data shows the median pull request (PR) size grew 33% from March to November 2025, increasing from 57 to 76 lines changed per PR. At the same time, lines of code output per developer rose 76%, from 4,450 to 7,839 lines.

This surge in code volume drives more defects and more review work. Jellyfish data shows that in engineering teams with high AI adoption, 9.5% of PRs were bug fixes, compared with 7.5% in low-adoption teams. Quality issues stack up as well: an industry analysis of 470 pull requests found that AI-generated code contained 1.7x more defects than human-written code.

The human impact is large and persistent. An analysis of METR’s 2025 randomized controlled trial shows that AI workflows increase the reviewer’s burden, because verifying plausible-looking but error-prone AI-generated code takes longer than writing or reviewing code manually. For a 20-developer team spending 1 hour per day per developer on CI and review issues, that overhead represents roughly $1 million in annual productivity loss.
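
The $1 million estimate can be reproduced with simple arithmetic. The sketch below assumes 250 working days per year and a fully loaded cost of $200 per developer-hour. Neither number comes from the sources cited above; both are illustrative assumptions chosen to show how the estimate scales with team size and time lost.

```python
def annual_review_overhead(developers, hours_per_day,
                           working_days=250, hourly_cost=200.0):
    """Estimate the yearly cost of time spent on CI and review issues.

    working_days and hourly_cost are illustrative assumptions,
    not figures taken from the article's sources.
    """
    return developers * hours_per_day * working_days * hourly_cost


# The article's example: 20 developers losing 1 hour per day each.
team_cost = annual_review_overhead(developers=20, hours_per_day=1)
print(f"Estimated annual overhead: ${team_cost:,.0f}")
# Estimated annual overhead: $1,000,000
```

Substituting your own team size, working days, and loaded hourly cost gives a comparable estimate for your organization.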

Manual Code Review: Where It Shines and Where It Breaks

Manual code review delivers the most value when teams need human judgment and context. Google’s analysis of nine million code reviews identifies knowledge transfer, not defect detection, as the primary source of code-review ROI. Human reviewers understand business logic and architecture and can mentor junior developers through complex changes.

Manual review also hits hard limits as volume grows. Microsoft and Google research shows that traditional code reviews catch around 60-65% of issues when done consistently. AI-generated code multiplies the workload and pushes these processes past their natural capacity. The following table shows how these constraints appear across four critical dimensions.

| Aspect | Strengths | Limitations | Impact on Teams |
|---|---|---|---|
| Speed | Thorough analysis | 1 hour/day/dev average | 91% review time increase |
| Context | Business logic understanding | Timezone delays | 24-48 hour review cycles |
| Quality | Mentorship and knowledge transfer | Reviewer fatigue | Declining effectiveness |
| Scalability | Handles complex decisions | Cannot match AI code volume | Bottleneck formation |

Automation in Code Review: Speed, Scale, and False Positives

Automated code review tools such as CodeRabbit and Greptile aim to absorb the volume spike with AI-powered analysis. These suggestion engines scan large PRs quickly and flag common issues like formatting violations, security patterns, and basic logic errors.

Automation still struggles with accuracy and trust. In the c-CRAB benchmark, individual automated code review tools reach pass rates of 20.1% to 32.1% on tests derived from human PR reviews, compared to 100% for human reviewers. False positives remain common, and developers ignore up to 40% of alerts when tools generate too many incorrect warnings.

| Capability | Automation Strength | Limitation | False Positive Rate |
|---|---|---|---|
| Speed | Instant analysis | No fix validation | 20-40% ignored alerts |
| Consistency | Uniform standards | Context blindness | 22% average FP rate |
| Coverage | Every line scanned | Misses nuanced issues | Varies by tool |
| Scalability | Handles volume | Suggestion-focused output | Manual implementation required |

The core limitation is clear. Most tools stop at suggestions and comments, even when they offer some one-click apply features. They rarely provide fully autonomous implementation, validation, and direct commits to PRs, so developers still need to oversee and verify many changes.

Automated vs Manual: Direct Comparison for Modern Teams

Given these limitations in both manual and automated approaches, the choice between them is not binary. Each method excels in different scenarios, and teams gain the most value when they combine both into a single workflow.

| Metric | Manual Review | Automation | Hybrid Recommendation |
|---|---|---|---|
| Speed | Hours to days | Minutes | Auto for routine, manual for complex |
| Accuracy | 60-65% issue detection | 20-32% pass rate | Layered validation |
| Context | Full business understanding | Code-only analysis | Manual for architecture decisions |
| Scalability | Limited by human capacity | Unlimited volume | Auto handles volume, manual for quality |

The data shows that neither approach alone can meet the demands of AI-assisted development. Teams need automation for speed and coverage and human review for judgment and context.

Playbook: When to Use Manual Review vs Automation

Effective hybrid workflows rely on clear rules for when to use each approach. The leading practice is to run automated scans first for speed and uniformity, then follow with manual review for context, mentorship, critical logic, and security.

Automation-First Scenarios:

- Formatting and style violations

- Common security patterns such as SQL injection and XSS

- Test coverage checks and basic logic errors

- Dependency vulnerabilities

- Performance anti-patterns

Manual-Required Scenarios:

- Architectural changes and design decisions

- Business logic validation

- Security-critical authentication flows

- API design and backwards compatibility

- Complex algorithm implementations

Risk-based prioritization keeps this model practical. High-stakes changes that affect security, performance, or core business logic stay under human oversight, while routine maintenance and formatting shift to automation.
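
The playbook above can be expressed as a small routing rule. The following is a minimal sketch, not part of any tool’s API: the category labels, the `PullRequest` class, and the `route_review` function are all hypothetical names that mirror the two lists. It assumes each PR has already been tagged with change categories, and it treats untagged PRs conservatively by sending them to a human.

```python
from dataclasses import dataclass, field

# Hypothetical category labels mirroring the two lists above.
AUTOMATION_FIRST = {
    "formatting", "common_security_pattern", "test_coverage",
    "dependency_vulnerability", "performance_antipattern",
}
MANUAL_REQUIRED = {
    "architecture", "business_logic", "auth_flow",
    "api_design", "complex_algorithm",
}


@dataclass
class PullRequest:
    title: str
    categories: set = field(default_factory=set)


def route_review(pr: PullRequest) -> str:
    """Route a PR to automation alone only when every change is routine."""
    routine_only = bool(pr.categories) and pr.categories <= AUTOMATION_FIRST
    return "automation" if routine_only else "automation+human"


print(route_review(PullRequest("Fix lint warnings", {"formatting"})))
# automation
print(route_review(PullRequest("New login flow", {"formatting", "auth_flow"})))
# automation+human
```

The automated scan still runs on every PR in this sketch; the rule only decides whether a human reviewer must also sign off.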

Why Hybrid Wins and How Gitar Delivers It

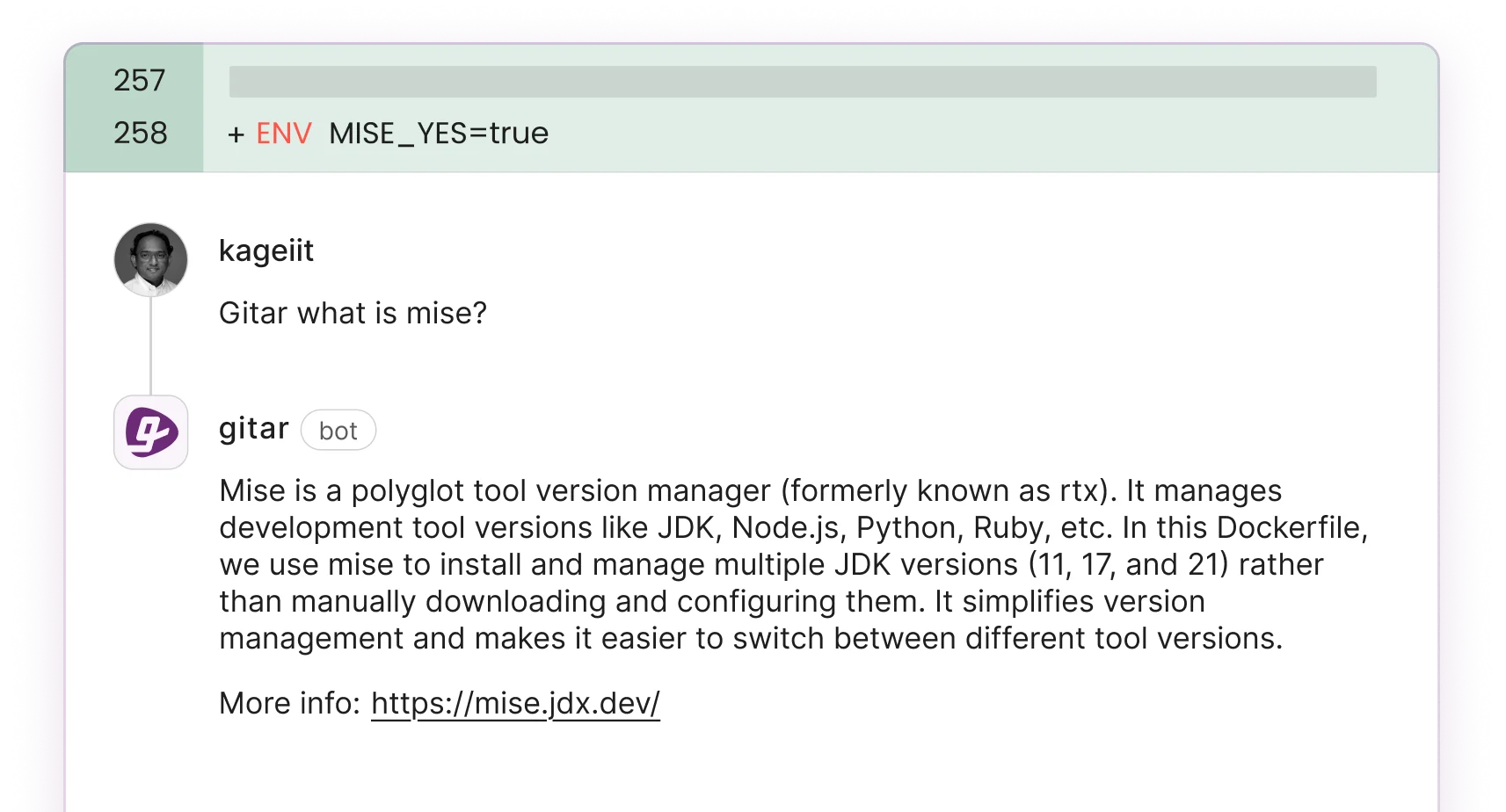

Gitar turns the hybrid model into a practical, daily workflow by fixing code automatically instead of only suggesting changes. When CI fails because of lint errors, test failures, or build breaks, Gitar analyzes the failure logs, generates fixes with full codebase context, validates that the solutions work, and commits them directly to your PR. See the Gitar documentation for a deeper look at the healing engine.
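
The analyze, fix, validate, and commit sequence described above can be sketched as a generic loop. This is an illustration of the general pattern only and does not represent Gitar’s implementation or API: `heal_ci_failure`, `propose_fix`, `validate`, `commit`, and `max_attempts` are hypothetical names, and the three callables stand in for whatever fix generator, CI runner, and version-control client a team actually uses.

```python
from typing import Callable, Optional


def heal_ci_failure(
    failure_log: str,
    propose_fix: Callable[[str], Optional[str]],
    validate: Callable[[str], bool],
    commit: Callable[[str], None],
    max_attempts: int = 3,
) -> str:
    """Commit a patch only if it passes validation; otherwise escalate."""
    for _ in range(max_attempts):
        patch = propose_fix(failure_log)
        if patch is None:
            break  # no candidate fix available
        if validate(patch):
            commit(patch)
            return "committed"
    return "needs human review"


# Stub callables standing in for real fix generation, CI, and git access.
committed = []
status = heal_ci_failure(
    "E501 line too long",
    propose_fix=lambda log: "reformat long line",
    validate=lambda patch: True,
    commit=committed.append,
)
print(status)
# committed
```

The key design choice is that an unvalidated patch never reaches the PR: when validation keeps failing, the loop falls back to a human instead of committing.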

| Capability | CodeRabbit/Greptile | Gitar | Business Impact |
|---|---|---|---|
| Auto-apply fixes | Limited one-click | Yes | Zero manual implementation |
| CI failure analysis | No | Yes | Automatic build healing |
| Fix validation | No | Yes | Guaranteed green builds |
| Single comment interface | No | Yes | Reduced notification noise |

The ROI impact is substantial. For the same 20-developer team facing that $1M productivity drain, Gitar can reduce losses to approximately $250,000, a 75% reduction. Gitar’s platform includes natural language rules, comprehensive integrations, and detailed analytics that reveal development patterns and bottlenecks. The documentation explains how to configure these integrations and analytics features in detail.

See the difference between suggestions and actual fixes and watch your CI failures heal automatically with Gitar.

Implementing Hybrid with Gitar: Four Practical Phases

Teams succeed with hybrid review when they roll it out in stages that build trust and show value quickly.

Phase 1: Installation and Setup

Install the Gitar GitHub App or GitLab integration and start your 14-day Team Plan trial. The setup guide walks through each step. Gitar immediately begins posting consolidated dashboard comments on PRs, replacing scattered inline notifications with a single, updating interface.

Phase 2: Trust Building

Start in suggestion mode so you can review and approve fixes before they apply. During this phase, Gitar detects and resolves lint errors, test failures, and build breaks while you maintain full visibility into every change.

Phase 3: Automation Enablement

Enable auto-commit for trusted fix types such as formatting violations and simple test failures. Add repository rules using natural language to trigger workflows without complex YAML configuration. The documentation covers rule configuration and examples in depth.

Phase 4: Platform Integration

Connect Jira and Slack for cross-platform context, explore the analytics dashboard for CI pattern insights, and use natural language rules for custom workflows tailored to your team’s needs.

Teams currently paying $450-900 per month for suggestion-only tools like CodeRabbit or Greptile gain better ROI with Gitar, because it resolves problems directly instead of only identifying them.

Conclusion: A Clear Framework for Hybrid Code Review

Modern teams do not need to choose between automation and manual review. They need a hybrid approach that matches AI-era code volume while preserving human judgment where it matters most. For teams working with AI-generated code, Gitar stands out by actually fixing problems instead of just flagging them.

The framework is straightforward. Use automation for speed and consistency on routine issues, reserve manual review for complex architectural decisions, and select tools that deliver real fixes instead of suggestions. Ready to move beyond comments and alerts? Install Gitar and start automatically fixing broken builds so you can ship higher-quality software faster.

FAQ

How does hybrid code review handle the increased volume from AI-generated code?

Hybrid code review absorbs AI-driven volume by automating routine checks such as formatting, basic security patterns, and test coverage while reserving human review for complex architectural decisions and business logic. Gitar’s healing engine extends this model by fixing CI failures and implementing review feedback automatically instead of only flagging issues. This approach lets teams maintain code quality while handling the 3-5x increase in code generation from AI tools without a matching increase in review time.

What is the difference between suggestion engines and healing engines in code review automation?

Suggestion engines such as CodeRabbit and Greptile analyze code and leave comments with recommendations. They may offer one-click fixes and IDE integrations, yet they still require developers to apply, validate, and integrate fixes correctly. Healing engines such as Gitar automatically apply fixes, validate them against CI, and guarantee green builds through direct PR commits. Suggestion engines stop at identification and rely on manual oversight, while healing engines complete the entire fix cycle autonomously.

How can teams measure ROI from implementing hybrid code review approaches?

Teams can track hybrid code review ROI through several metrics: reduction in time spent on CI failures and review cycles, decrease in PR cycle time from days to hours, fewer post-deployment bugs and hotfixes, and higher deployment frequency. For a 20-developer team, shifting from purely manual processes to a hybrid approach with tools like Gitar can cut annual productivity losses from $1 million to $250,000, a 75% reduction in those losses.
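
These metrics reduce to before-and-after comparisons. The sketch below shows one way to compute them, using the loss figures from this article and a set of PR cycle times that are purely hypothetical sample values, not measured data. The `percent_reduction` helper is an illustrative name.

```python
from statistics import median


def percent_reduction(before, after):
    """Percentage drop from a baseline value to a new value."""
    return (before - after) / before * 100


# Loss figures from the 20-developer example in this article.
loss_cut = percent_reduction(1_000_000, 250_000)
print(f"Productivity loss reduced by {loss_cut:.0f}%")
# Productivity loss reduced by 75%

# Hypothetical PR cycle times in hours, before and after adopting hybrid review.
cycle_before = [30, 48, 26, 40]
cycle_after = [6, 9, 4, 12]
cycle_cut = percent_reduction(median(cycle_before), median(cycle_after))
print(f"Median PR cycle time reduced by {cycle_cut:.0f}%")
```

Using the median instead of the mean keeps a single long-running PR from distorting the cycle-time comparison.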

What are the security implications of automated code review and auto-fixing?

Automated code review can introduce security risk if tools miss context-dependent vulnerabilities or generate fixes without strong validation. Modern healing engines such as Gitar reduce this risk through layered validation, CI integration that tests fixes in real environments, and configurable trust levels that let teams begin in suggestion mode before enabling auto-commits. The safest approach is to use tools that validate fixes against your actual CI environment instead of suggesting changes in isolation.

How should teams transition from manual-only to hybrid code review workflows?

Teams should transition in stages. Start by installing automation tools in observation or suggestion mode to build trust and measure effectiveness. Next, enable auto-fixing for low-risk issues such as formatting and linting. Then expand automation to more complex scenarios while keeping manual review for architectural decisions and security-critical changes. Finally, refine the balance based on team velocity and quality metrics. Most teams complete this transition in 2-4 weeks and benefit from short training sessions on when to rely on automation versus human judgment.