Key Takeaways

- AI coding tools accelerate code generation 3-5x but create PR review bottlenecks, with 91% longer review times and 30% of developer time lost to CI failures.

- The “suggestion trap” of manual fix implementation wastes time, while automated code remediation directly applies fixes to guarantee green builds.

- Gitar offers free unlimited AI code review with a 14-day auto-fix trial and outperforms paid tools in CI integration and security vulnerability detection.

- Follow 8 best practices, such as CI-aware remediation, full codebase validation, and natural language rules, to increase automation effectiveness.

- Implement Gitar’s healing engine today at https://gitar.ai/ to remove post-AI bottlenecks and create self-healing CI pipelines.

The Gitar Healing Engine: From Suggestions to Guaranteed Fixes

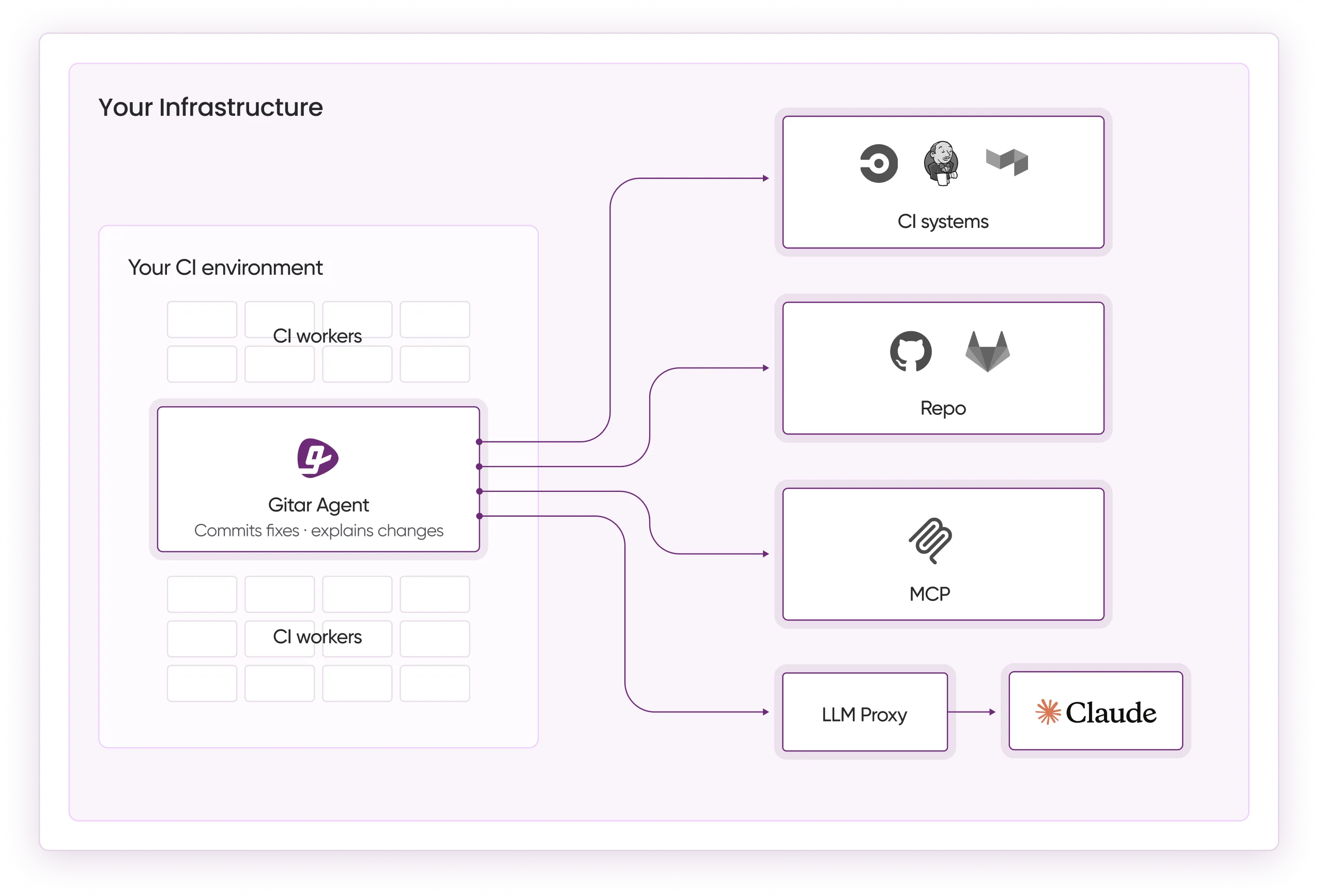

Automated code remediation shifts teams from suggestion engines to healing engines. Instead of leaving comments that require manual work, these AI systems analyze failures, generate fixes, validate them against CI, and commit working solutions that keep builds green.

Gitar positions itself as the free alternative that actually fixes code. Competitors charge premium prices for basic commentary, while Gitar provides comprehensive AI code review at no cost and includes a 14-day free trial of auto-fix capabilities. The platform supports GitHub, GitLab, CircleCI, and Buildkite, and it consolidates all findings into a single updating comment instead of creating notification spam.

| Capability | CodeRabbit/Greptile | Gitar |
|---|---|---|
| Auto-apply fixes | No | Yes (14-day free trial) |
| CI auto-fix | No | Yes (14-day free trial) |
| Free unlimited repos | No | Yes |
| Green build guarantee | No | Yes |

Gitar has proven reliability at Pinterest scale, handling more than 50 million lines of code and thousands of daily PRs. The platform caught high-severity security vulnerabilities in Copilot-generated code that Copilot itself missed, which shows that expensive tools are not always more effective. Teams at Tigris and Collate report that Gitar’s PR summaries are more concise than competitors’, and that its detection of failures unrelated to the PR saves time by separating infrastructure flakiness from real code bugs.

8 Automated Code Remediation Best Practices

1. Make CI-Aware Remediation Your Default

CI-aware remediation delivers fixes that actually pass in your real environment. Traditional code review tools analyze diffs in isolation and miss how changes interact with CI pipelines. Effective automated remediation understands the full build environment, including SDK versions, multi-dependency builds, and third-party scans. Gitar emulates your complete CI environment so fixes work in production, not just in a narrow context.
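A minimal sketch of the acceptance rule behind CI-aware remediation, assuming the CI step can be reproduced as a single command (for example, the same test invocation your pipeline runs): a candidate fix is kept only if that command exits with status 0 and is reverted otherwise. `Candidate`, `passes_ci`, and `first_green_fix` are hypothetical names for illustration, not Gitar's implementation or API. Matching SDK versions and third-party scans would depend on whatever environment actually runs the command.

```python
import subprocess
from dataclasses import dataclass
from typing import Callable, Mapping, Optional, Sequence


@dataclass
class Candidate:
    """A proposed fix with callbacks that apply it to, and remove it from, the working tree."""
    description: str
    apply: Callable[[], None]
    revert: Callable[[], None]


def passes_ci(command: Sequence[str], env: Optional[Mapping[str, str]] = None) -> bool:
    """Run the command the CI pipeline runs; only exit status 0 counts as green."""
    result = subprocess.run(list(command), env=env, capture_output=True, text=True)
    return result.returncode == 0


def first_green_fix(
    candidates: Sequence[Candidate],
    command: Sequence[str],
    env: Optional[Mapping[str, str]] = None,
) -> Optional[Candidate]:
    """Try candidates in order and keep the first one that turns the CI command green."""
    for candidate in candidates:
        candidate.apply()
        if passes_ci(command, env):
            return candidate  # leave this fix applied so it can be committed
        candidate.revert()  # roll back fixes that do not pass
    return None  # nothing passed: report the failure instead of committing
```

The key design choice is that the pass/fail signal comes from the real CI command rather than from the model's own judgment of its fix.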

2. Validate Fixes Across the Entire Codebase

Full codebase validation prevents AI-generated fixes from introducing new issues. Benchmark studies show leading AI tools achieving strong bug-catch rates, but catching a bug is only half the job: a fix that looks correct in one file can still break a caller elsewhere, so it has to be validated against the whole codebase. Gitar’s hierarchical memory system maintains context per line, per PR, per repo, and per organization, and it learns team patterns over time.
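The article does not describe how Gitar's hierarchical memory works internally. As a hypothetical sketch of the general idea, context can be stored per scope and resolved from the most specific scope (line) to the most general (organization), so a repo-level convention overrides an org-wide default. The scope names, `HierarchicalMemory`, and its methods are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Hypothetical scopes, ordered from most specific to most general.
SCOPES = ("line", "pr", "repo", "org")


@dataclass
class HierarchicalMemory:
    """Stores learned conventions per scope and resolves the most specific match."""
    entries: Dict[Tuple[str, str, str], str] = field(default_factory=dict)

    def remember(self, scope: str, scope_id: str, key: str, value: str) -> None:
        """Record a convention (value) for a key at one scope, e.g. a single repo."""
        if scope not in SCOPES:
            raise ValueError(f"unknown scope: {scope}")
        self.entries[(scope, scope_id, key)] = value

    def recall(self, key: str, ids: Dict[str, str]) -> Optional[str]:
        """Walk from line scope to org scope and return the first stored value for key."""
        for scope in SCOPES:
            scope_id = ids.get(scope)
            if scope_id is None:
                continue  # this scope does not apply to the current location
            value = self.entries.get((scope, scope_id, key))
            if value is not None:
                return value
        return None
```

For example, with an org-wide rule "wrap errors with context" and a repo-level rule "return Result types", a lookup inside that repo returns the repo rule, while a lookup in any other repo of the same org falls back to the org rule.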

3. Centralize Feedback to Reduce Noise

Centralized feedback keeps developers focused and reduces cognitive overload. Notification spam from scattered inline comments distracts reviewers and slows decisions. Best practice is to consolidate CI analysis, review feedback, and rule evaluations into one location that updates in place. When fixes are pushed, resolved items should collapse automatically so teams keep a single source of truth without inbox flooding.
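A sketch of the update-in-place pattern, assuming each finding carries a stable key so the same issue can be recognized across pushes. Current findings are listed as open; findings that disappear are marked resolved and collapsed into a `<details>` block, which GitHub renders as an expandable section. `Finding`, `update_summary`, and `render_summary` are hypothetical names, and the API call that edits the existing comment on your code host is deliberately left out.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Finding:
    key: str      # stable identifier, e.g. "lint:src/app.py:12" (hypothetical scheme)
    message: str


def update_summary(state: Dict[str, dict], current: Iterable[Finding]) -> Dict[str, dict]:
    """Merge the latest findings into the stored state of the single summary comment.

    Findings no longer reported are kept but marked resolved, so the comment
    preserves history without posting new notifications.
    """
    current_by_key = {f.key: f for f in current}
    merged: Dict[str, dict] = {}
    for key, entry in state.items():
        if key not in current_by_key:
            merged[key] = {**entry, "resolved": True}
    for key, finding in current_by_key.items():
        merged[key] = {"message": finding.message, "resolved": False}
    return merged


def render_summary(state: Dict[str, dict]) -> str:
    """Render open findings as a list and collapse resolved ones into a <details> block."""
    open_items = [e["message"] for e in state.values() if not e["resolved"]]
    resolved = [e["message"] for e in state.values() if e["resolved"]]
    lines = [f"### Review summary ({len(open_items)} open)"]
    lines += [f"- {message}" for message in open_items]
    if resolved:
        lines.append(f"<details><summary>{len(resolved)} resolved</summary>")
        lines += [f"- ~~{message}~~" for message in resolved]
        lines.append("</details>")
    return "\n".join(lines)
```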

4. Turn Review Comments into Automatic Fixes

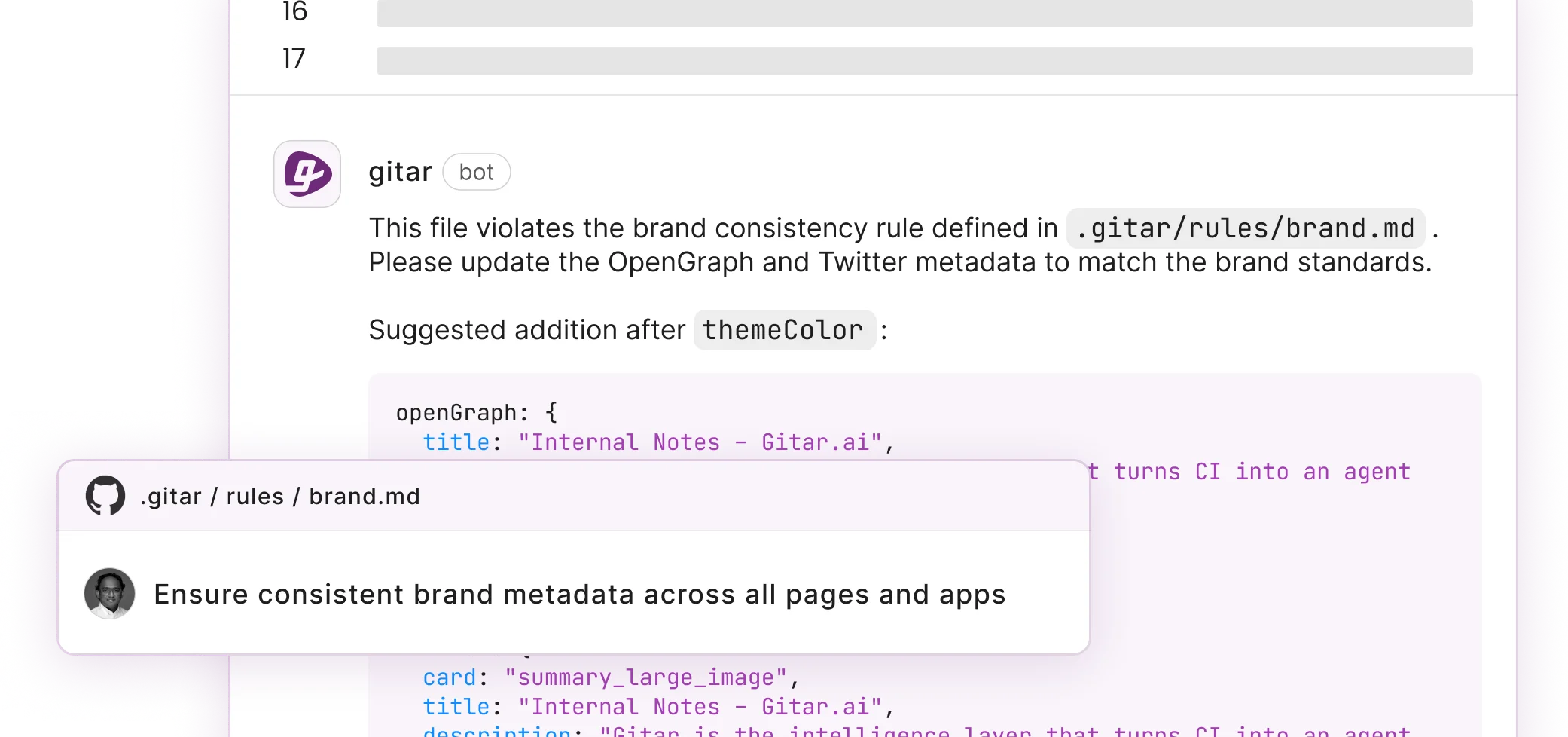

Automatic implementation of review feedback removes a major bottleneck. The most effective remediation systems do not just identify issues; they implement reviewer suggestions directly. When a reviewer comments “@gitar refactor this to use async/await,” the system should apply the change automatically. This approach removes the manual implementation step that slows traditional suggestion-only tools.
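A minimal sketch of the first step, recognizing a comment addressed to the bot and extracting the instruction, assuming the `@gitar` handle from the example above and one instruction per line. `extract_instruction` is a hypothetical helper; turning the instruction into a code change and validating it is out of scope here.

```python
import re
from typing import Optional

# "@gitar" must not be part of a longer word or an email address, and must be
# followed by whitespace and the instruction text on the same line.
MENTION = re.compile(r"(?<![\w@])@gitar\b[:,]?\s+(?P<instruction>.+)", re.IGNORECASE)


def extract_instruction(comment_body: str) -> Optional[str]:
    """Return the first instruction addressed to the bot, or None if it was not mentioned."""
    match = MENTION.search(comment_body)
    if match is None:
        return None
    return match.group("instruction").strip()
```

Because `.` does not match newlines, only the text on the mention's own line is taken as the instruction, so surrounding discussion in the same comment is ignored.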

5. Use Natural Language Rules Instead of Complex YAML

Natural language rules make workflow automation accessible to every engineer. Complex YAML configurations create adoption barriers and concentrate control in a few DevOps experts. Repository rules written in plain language allow anyone to automate workflows. For example, “When PRs modify authentication code, assign security team and add security-review label.” This pattern spreads CI automation beyond YAML specialists.
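One way to picture this, as a hypothetical sketch: the plain-language rule is translated into a small structured form (for example by a language model, not shown), which is then evaluated deterministically against each PR's changed files. The `Rule` schema, the path patterns chosen to mean "authentication code", and `actions_for` are assumptions, not Gitar's rule format.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import List, Sequence, Tuple


@dataclass
class Rule:
    """Structured form of a plain-language rule (hypothetical schema)."""
    source_text: str
    path_patterns: Tuple[str, ...]  # changed files that trigger the rule
    reviewers: Tuple[str, ...]
    labels: Tuple[str, ...]


# One possible interpretation of the example rule; the path patterns are assumptions.
AUTH_RULE = Rule(
    source_text="When PRs modify authentication code, assign security team and add security-review label.",
    path_patterns=("auth/*", "*/auth/*"),
    reviewers=("security-team",),
    labels=("security-review",),
)


def actions_for(rule: Rule, changed_files: Sequence[str]) -> Tuple[List[str], List[str]]:
    """Return (reviewers, labels) to apply if any changed file matches the rule."""
    if any(fnmatch(path, pattern) for path in changed_files for pattern in rule.path_patterns):
        return list(rule.reviewers), list(rule.labels)
    return [], []
```

Note that `fnmatch`'s `*` also matches `/`, so `*/auth/*` catches an `auth` directory at any depth while leaving paths such as `src/oauth_client.py` alone.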

6. Grow Trust with Gradual Auto-Commit Settings

Configurable auto-commit modes help teams build trust in automated fixes. Many developers worry about automated commits, especially in critical systems. A phased rollout works best. Teams start in suggestion mode, approve every fix, and track quality. As confidence grows for specific failure types, they enable auto-commit for those trusted scenarios. Fine-grained control over aggression levels lets each team match automation to its comfort level.
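A sketch of a per-failure-type trust policy, assuming reviewers approve or reject each suggested fix while a failure type is in suggestion mode. Once a type has enough reviewed fixes and a high enough approval rate, it is promoted to auto-commit. Because the mode is recomputed on every call, it falls back to suggestion mode if the rate later drops. `AutoCommitPolicy` and its thresholds are illustrative assumptions, not recommended values or a Gitar setting.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class AutoCommitPolicy:
    """Tracks reviewer decisions per failure type and unlocks auto-commit once trust is earned.

    The default thresholds are illustrative, not recommendations.
    """
    min_reviews: int = 20
    min_approval_rate: float = 0.95
    approved: Dict[str, int] = field(default_factory=lambda: defaultdict(int))
    reviewed: Dict[str, int] = field(default_factory=lambda: defaultdict(int))

    def record(self, failure_type: str, was_approved: bool) -> None:
        """Log one human decision on a suggested fix."""
        self.reviewed[failure_type] += 1
        if was_approved:
            self.approved[failure_type] += 1

    def mode(self, failure_type: str) -> str:
        """Return 'auto-commit' for trusted failure types and 'suggest' otherwise."""
        total = self.reviewed[failure_type]
        if total < self.min_reviews:
            return "suggest"  # not enough evidence yet
        rate = self.approved[failure_type] / total
        return "auto-commit" if rate >= self.min_approval_rate else "suggest"
```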

7. Use Analytics to Spot CI Failure Patterns

Deep analytics reveal whether failures come from infrastructure or code. Platform teams need systematic pattern recognition to improve reliability. Analytics should categorize CI failure types, track resolution times, and highlight recurring problems. These insights guide proactive improvements to development workflows, test suites, and infrastructure stability.
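A minimal sketch of failure categorization using log signatures, assuming you can export each failed run's job name, log text, and time to resolution. The regular expressions are illustrative guesses at common infrastructure and code-failure messages; a real classifier would be tuned to your own runners and toolchain. `CIRun`, `classify`, and `summarize` are hypothetical names.

```python
import re
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Illustrative log signatures only; adapt them to your own CI output.
INFRA_PATTERNS = [
    re.compile(r"connection (reset|refused|timed out)", re.I),
    re.compile(r"no space left on device", re.I),
    re.compile(r"rate limit", re.I),
]
CODE_PATTERNS = [
    re.compile(r"AssertionError|FAILED .*::"),
    re.compile(r"SyntaxError|TypeError|NameError"),
]


@dataclass
class CIRun:
    job: str
    log: str
    minutes_to_fix: float


def classify(log: str) -> str:
    """Label a failed run 'infrastructure', 'code', or 'unknown'.

    Infrastructure signatures are checked first, so a log showing both counts as infrastructure.
    """
    if any(p.search(log) for p in INFRA_PATTERNS):
        return "infrastructure"
    if any(p.search(log) for p in CODE_PATTERNS):
        return "code"
    return "unknown"


def summarize(runs: Iterable[CIRun]) -> Dict[str, dict]:
    """Per category: failure count, mean time to fix, and the most frequently failing jobs."""
    by_category: Dict[str, List[CIRun]] = {}
    for run in runs:
        by_category.setdefault(classify(run.log), []).append(run)
    report = {}
    for category, items in by_category.items():
        report[category] = {
            "count": len(items),
            "avg_minutes_to_fix": sum(r.minutes_to_fix for r in items) / len(items),
            "top_jobs": Counter(r.job for r in items).most_common(3),
        }
    return report
```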

8. Bake Security Checks into Every AI Fix

Security testing of AI-generated code has found that 45% of samples fail security tests and introduce OWASP Top 10 vulnerabilities, with Java showing a 72% security failure rate. Automated remediation must include security scanning and validation so fixes do not add vulnerabilities while solving functional issues. Security-aware remediation checks for known patterns, validates changes, and blocks unsafe commits.
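As a hedged illustration of the "blocks unsafe commits" step, the sketch below scans only the lines a fix adds in a unified diff and refuses the commit if any of them matches a known-unsafe pattern. The four patterns are illustrative examples loosely related to OWASP Top 10 categories such as injection. They are not a substitute for a real static analysis tool, and `scan_added_lines` and `allow_commit` are hypothetical names.

```python
import re
from dataclasses import dataclass
from typing import List

# Illustrative patterns only; a production gate would call a dedicated security scanner.
UNSAFE_PATTERNS = {
    "dynamic code execution": re.compile(r"\beval\(|\bexec\("),
    "shell injection risk": re.compile(r"shell\s*=\s*True"),
    "SQL query built with an f-string": re.compile(r"execute\(\s*f[\"']"),
    "hard-coded credential": re.compile(r"(?i)(password|api_key|secret)\s*=\s*[\"'][^\"']+[\"']"),
}


@dataclass
class SecurityFinding:
    line_number: int  # 1-based position within the diff text
    rule: str
    text: str


def scan_added_lines(diff: str) -> List[SecurityFinding]:
    """Check only lines the fix adds ('+' lines, excluding the '+++' file header)."""
    findings = []
    for number, line in enumerate(diff.splitlines(), start=1):
        if not line.startswith("+") or line.startswith("+++"):
            continue
        added = line[1:]
        for rule, pattern in UNSAFE_PATTERNS.items():
            if pattern.search(added):
                findings.append(SecurityFinding(number, rule, added.strip()))
    return findings


def allow_commit(diff: str) -> bool:
    """Block the automated commit if any added line matches an unsafe pattern."""
    return not scan_added_lines(diff)
```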

Building Trust and Proving ROI with Gitar

Automated remediation succeeds when teams trust the fixes and see clear value. Developer skepticism around automated changes is reasonable because production stability is on the line. Strong validation guarantees and phased rollouts address these concerns. Teams begin with suggestion modes, observe fix quality, and then enable automation for specific, well-understood failure types.

The ROI for automated code remediation is significant for most engineering teams. A 20-developer team in which each developer spends one hour daily on CI and review issues loses 20 developer-hours every working day.

| Metric | Before Gitar | After Gitar |
|---|---|---|
| Time on CI/review per day | 1 hour/dev | 15 min/dev |
| Annual cost | $1M | $250K |
| Tool cost per month | $450-900 | $0 |

Even at 50% effectiveness, automated remediation saves about $375K annually (half of the $750K reduction in the table) while removing tool subscription costs. Reduced context switching, fewer interruptions, and lower notification fatigue create additional productivity gains that are harder to measure but very real for developers.
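The table's figures can be checked with a short calculation. The article does not state the number of working days or the hourly cost, so this sketch assumes about 250 working days per year and derives the blended hourly cost implied by the $1M "before" figure. Both are assumptions, not numbers from the source.

```python
# Assumptions (not stated in the article): ~250 working days per year, and one
# blended hourly cost derived so that the "before" figure equals $1M.
DEVELOPERS = 20
WORKING_DAYS = 250


def annual_hours(hours_per_dev_per_day: float) -> float:
    """Total team hours per year spent on CI and review issues."""
    return DEVELOPERS * hours_per_dev_per_day * WORKING_DAYS


before_hours = annual_hours(1.0)             # 5,000 hours
after_hours = annual_hours(0.25)             # 1,250 hours (15 minutes per developer)
hourly_cost = 1_000_000 / before_hours       # implied blended cost: $200/hour
before_cost = before_hours * hourly_cost     # $1,000,000
after_cost = after_hours * hourly_cost       # $250,000
full_savings = before_cost - after_cost      # $750,000
half_effective_savings = 0.5 * full_savings  # $375,000
```

Under these assumptions, the $1M, $250K, and $375K figures are mutually consistent; a different hourly cost scales all three proportionally.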

Conclusion: Move to Self-Healing CI in 2026

Self-healing CI pipelines solve the post-AI bottleneck that suggestion-only tools leave behind. The AI coding revolution has changed how software is written, yet many teams still pay premium prices for tools that only comment on code and require manual fixes, which keeps the bottleneck in place.

Automated code remediation represents the next stage of this evolution. AI systems now identify problems, apply fixes, validate solutions, and keep builds working. As teams adopt these practices, the constraint moves away from code review and back to innovation and feature delivery.

The future belongs to CI systems that resolve failures automatically, implement feedback, and maintain green builds with minimal human intervention. Teams that embrace automated remediation best practices will ship faster and with higher quality, while competitors remain stuck in manual suggestion cycles.

FAQs: Automated Code Remediation Best Practices

Can AI reliably fix its own bugs and code issues?

AI can reliably fix many of its own bugs when paired with strong validation systems. Modern automated remediation platforms validate every fix before committing it, which confirms that the change resolves the identified issue without breaking the checks it was validated against. Comprehensive testing against the full codebase context, not just isolated snippets, is essential. Benchmark studies show that well-designed AI systems can achieve more than 80% bug-catch rates while maintaining high precision in their fixes.

How does automated remediation compare to human code review efficiency?

Automated remediation handles routine fixes that consume large amounts of reviewer time. Lint errors, formatting issues, simple logic bugs, and many CI failures fall into this category. These systems can automate about 70% of routine fixes, which frees human reviewers to focus on architecture decisions, business logic, and complex edge cases. The combination of automated remediation for repetitive work and human oversight for complex problems creates a faster and more reliable review process.

What are the best free tools for AI code review in 2026?

Gitar stands out as a leading free option for AI code review in 2026. It offers unlimited code review for public and private repositories with no seat limits and no credit card requirement. Competing tools often charge $15-30 per developer each month for suggestion-only features. Gitar instead provides comprehensive PR analysis, security scanning, and bug detection at no cost, along with a 14-day free trial of auto-fix capabilities. This model makes advanced AI review accessible to teams of any size.

How should teams handle CI integration challenges with automated remediation?

Teams handle CI integration best by choosing platforms that support multiple CI systems and emulate the complete build environment. A staged rollout works well. Teams start with read-only analysis to understand findings and build trust, then enable auto-fixes for specific, low-risk failure types. Modern platforms such as Gitar support GitHub Actions, GitLab CI, CircleCI, and Buildkite, and enterprise options can run agents inside your own CI pipeline for maximum security and context access.

What security considerations are important for automated code fixes?

Security validation is critical because AI-generated code can introduce serious vulnerabilities. Effective automated remediation systems include security scanning that checks for OWASP Top 10 vulnerabilities and other common issues. The system must verify that fixes do not create new security problems while resolving functional bugs. Teams should also maintain audit trails of all automated changes and use approval workflows for security-sensitive code paths, especially in authentication, authorization, and data access layers.