Key Takeaways

- AI adoption has reached 91% among developers, and the bottleneck has shifted from code generation to PR validation, where AI-authored code shows 1.7x more issues.

- Traditional AI review tools like CodeRabbit provide suggestions but require manual fixes, so they do not guarantee green builds or faster reviews.

- Gitar’s autonomous healing engine auto-fixes CI failures, implements review feedback, and cuts daily review time by 75% through zero-trust validation.

- Teams can apply seven best practices, including zero-trust policies, context management, architectural guardrails, and natural language rules, to scale AI code review.

- Engineering teams achieve 3x velocity gains with Gitar’s comprehensive capabilities, and you can start your 14-day Team Plan trial today to remove PR bottlenecks.

The 2026 AI Code Review Bottleneck

Senior engineers now face constant context switching when auditing AI-generated code, which drives burnout and erodes their deep understanding of complex systems. Developers generate code 3–5x faster with AI tools, yet sprint velocities stay flat because review capacity has not scaled.

Confirmation bias makes this worse. Veracode studies show 45% of AI-generated code contains vulnerabilities. At the same time, developers accept fewer than 44% of AI-generated suggestions, and 56% of the accepted ones need major changes. Teams that rely on AI reviewers to validate AI-generated code create systematic blind spots that suggestion-only tools cannot close.

For a 20-developer team, this bottleneck can cost about $1 million per year in productivity losses. These losses come from CI failures, repeated review cycles, and constant context switching, even after buying expensive tools that only comment on problems instead of fixing them.

Gitar’s Autonomous Healing Engine

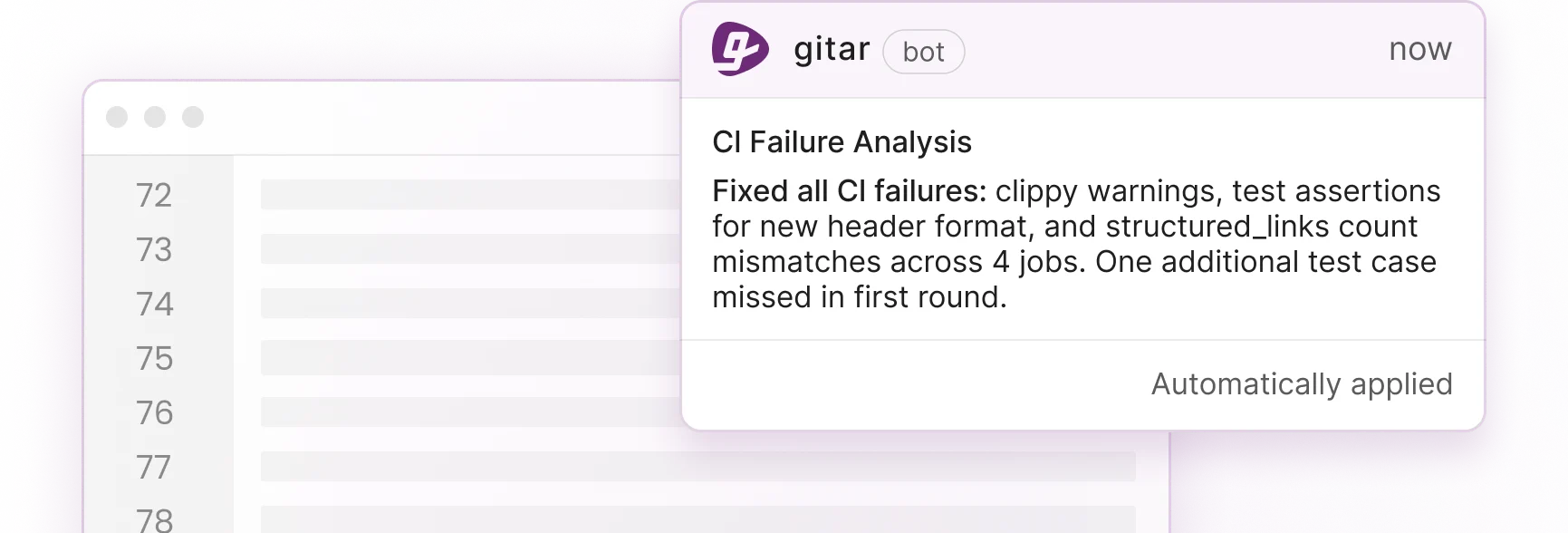

Gitar turns code review from suggestion-based commentary into autonomous problem resolution. Competing tools charge premium prices for inline comments, while Gitar’s healing engine analyzes CI failures, generates validated fixes, and commits working solutions. This closes the manual implementation gap that slows traditional tools.

| Capability | CodeRabbit/Greptile | Gitar |
|---|---|---|
| Inline suggestions | Yes | Yes (Trial/Team) |
| Auto-apply fixes | No | Yes (Trial/Team) |
| Green build guarantee | No | Yes |
| Single comment interface | No | Yes |

The ROI impact is clear. Teams report cutting daily CI and review time from about 1 hour per developer to roughly 15 minutes. That shift represents a 75% reduction in manual toil. Install Gitar now to capture 3x velocity gains through autonomous code healing.
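The arithmetic behind that figure can be checked with a short sketch. The function names are illustrative, and the team size of 20 is borrowed from the earlier cost example rather than from any reported measurement.

```python
def reduction_percent(before_minutes: float, after_minutes: float) -> float:
    """Percentage reduction when time drops from before_minutes to after_minutes."""
    return (before_minutes - after_minutes) / before_minutes * 100


def team_hours_saved_per_day(before_minutes: float, after_minutes: float, team_size: int) -> float:
    """Total hours saved per working day across the whole team."""
    return (before_minutes - after_minutes) * team_size / 60


# 1 hour -> 15 minutes per developer, as stated in the text.
print(reduction_percent(60, 15))             # 75.0
# Assumed team of 20 developers.
print(team_hours_saved_per_day(60, 15, 20))  # 15.0
```

Translating those saved hours into dollars depends on loaded salary and working days per year, which the text does not specify, so the sketch stops at hours.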

Seven Zero-Trust Best Practices for AI Code Review

1. Treat All AI-Generated Code as Untrusted

AI-generated code should be handled as inherently untrusted because it lacks awareness of system behavior, threat surfaces, and compliance rules. Set explicit review gates for privilege scope, data handling, input trust, and authentication flows. Validate behavior through integration tests instead of relying on surface-level syntax checks.
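One way to make those review gates explicit is to map changed file paths to the gates they should trigger. The sketch below is a minimal illustration. The gate names follow the four areas listed above, but the path patterns and the `required_gates` helper are hypothetical and would need to match your own repository layout.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns for each review gate; adjust to your repository.
REVIEW_GATES = {
    "authentication": ["*/auth/*", "*login*"],
    "data_handling": ["*/models/*", "*/migrations/*"],
    "input_trust": ["*/api/*", "*/handlers/*"],
    "privilege_scope": ["*/iam/*", "*permissions*"],
}


def required_gates(changed_files):
    """Return the sorted names of review gates triggered by the changed files."""
    gates = set()
    for path in changed_files:
        for gate, patterns in REVIEW_GATES.items():
            if any(fnmatchcase(path, pattern) for pattern in patterns):
                gates.add(gate)
    return sorted(gates)


print(required_gates(["src/auth/token.py", "src/api/users.py"]))
# ['authentication', 'input_trust']
```

A path-based gate only decides who must look at a change. It does not replace the integration tests that validate behavior.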

Gitar’s zero-trust approach validates fixes against your full CI environment before committing them, so your security patterns stay intact. The Gitar documentation explains how natural language rules let teams encode security requirements that trigger automatic reviews whenever sensitive code changes.

2. Use Context and Memory to Review Code Accurately

AI tools have strict context limits and miss business-specific requirements without extra project files and signals. Traditional reviewers also start from scratch on every PR, which hides architectural patterns and institutional knowledge.

Gitar’s hierarchical memory system tracks context at the line, PR, repository, and organization levels. The Gitar documentation covers integrations with Jira and Linear that reveal the business context behind each change, not just the diff. This deeper context helps prevent architectural drift that appears when AI tools lack historical awareness.

3. Enforce Architectural Guardrails on Every PR

AI often lacks repository history and production nuances, which causes architectural and data governance issues. The “Infinite Intern Problem” appears when AI focuses on syntax and speed while ignoring long-term system health and maintainability.

Set automated checks for architectural violations. Flag overly permissive defaults, detect auto-imported libraries without dependency tracking, and validate behavior against system requirements. Gitar catches many of these AI-specific issues through its comprehensive review capabilities.

4. Connect Multiple Tools and Auto-Fix Failures

Effective AI code review depends on coordinating static analyzers, security scanners, and performance profilers while keeping developers fast. Traditional setups create notification overload and force engineers to manually connect findings across tools.

Gitar gathers all findings into a single dashboard comment that updates in place. When CI checks fail from lint errors, test failures, or build breaks, the platform analyzes logs, generates fixes with full codebase context, validates them, and commits working code. This approach removes the context switching that burns out senior engineers during high-volume AI-assisted reviews.
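The idea of consolidating findings from several tools into one comment can be sketched as follows. This is a hypothetical illustration of the aggregation step only. The `Finding` type and `render_summary` function are assumptions, and updating a comment in place would depend on the code host's API, which is not shown.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str      # e.g. a linter or security scanner name
    path: str
    line: int
    message: str


def render_summary(findings):
    """Render all findings as one comment body, grouped by tool."""
    if not findings:
        return "All checks passed."
    by_tool = defaultdict(list)
    for finding in findings:
        by_tool[finding.tool].append(finding)
    lines = [f"{len(findings)} finding(s) across {len(by_tool)} tool(s)"]
    for tool in sorted(by_tool):
        lines.append(f"\n{tool}:")
        for f in sorted(by_tool[tool], key=lambda f: (f.path, f.line)):
            lines.append(f"- {f.path}:{f.line} {f.message}")
    return "\n".join(lines)


print(render_summary([
    Finding("linter", "app.py", 3, "unused import"),
    Finding("scanner", "db.py", 12, "SQL built by string concatenation"),
]))
```

Regenerating the whole body on each run and overwriting a single comment keeps the PR thread short, at the cost of losing the history of earlier findings.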

5. Expose Hidden Complexity and Performance Risks

AI-generated code often fails at runtime in surprising places, such as migrations that damage environments. Simple syntax checks miss performance regressions, memory leaks, and scalability problems that appear only under real load.

Create checklists for cryptographic settings, error handling patterns, input validation coverage, and resource management. Gitar’s security scanning, bug detection, and performance review highlight insecure and inefficient patterns that frequently show up in AI-generated code.

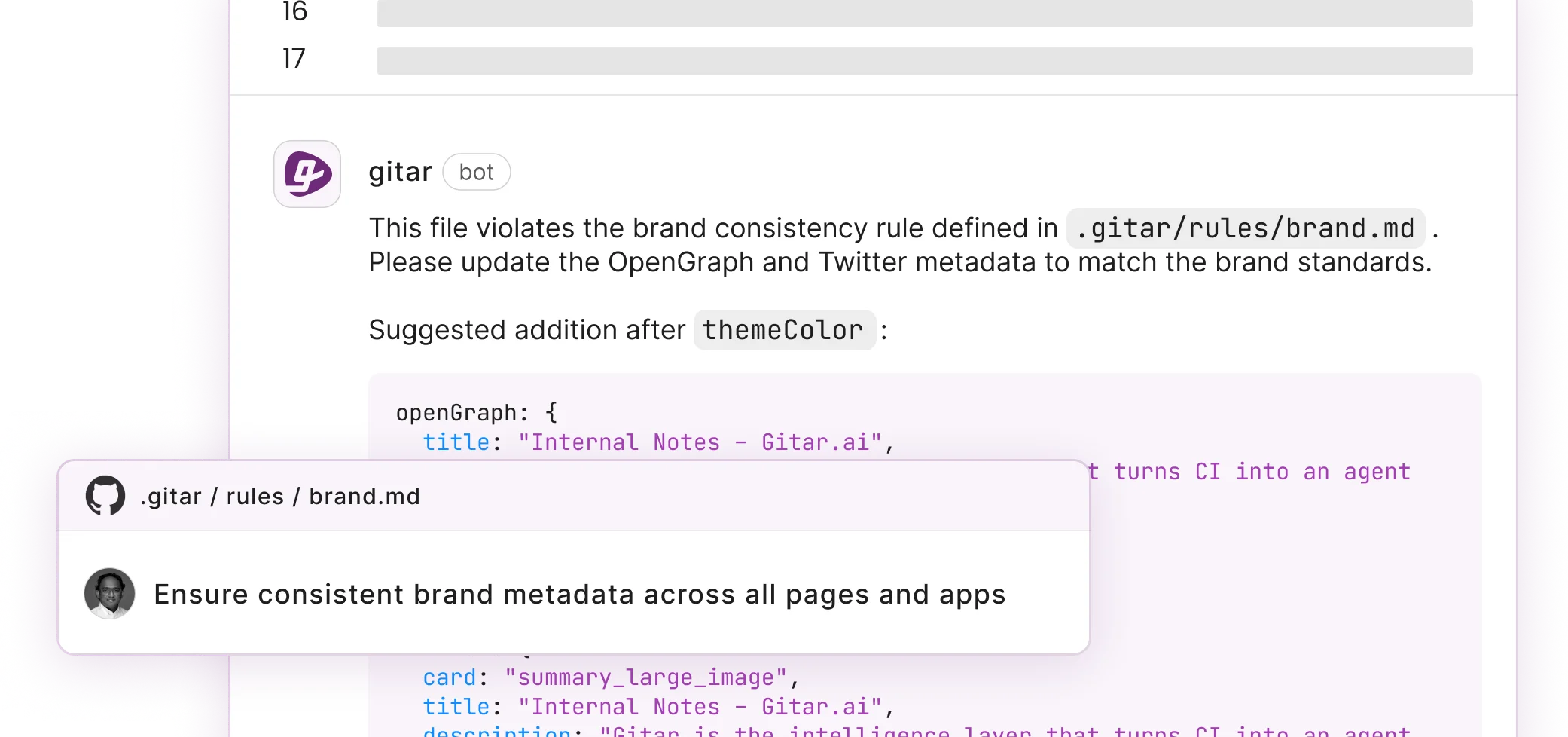

6. Use Natural Language Rules to Scale Reviews

Traditional CI configuration depends on YAML expertise and DevOps support. As AI-generated code volume grows, teams need simple ways to encode review rules without complex scripting.

Gitar’s repository rules use natural language to drive automation. The Gitar documentation includes examples such as this rule for security-sensitive changes:

    ---
    title: "Security Review"
    when: "PRs modifying authentication or encryption code"
    actions: "Assign security team and add label"
    ---

This pattern lets any team member capture institutional knowledge and automate workflows without deep CI tooling skills.

7. Measure Engineering Performance in the AI Era

Modern evaluation frameworks separate leading indicators from lagging indicators. Leading indicators include quality of issues caught and developer responses, while lagging indicators include incident counts and throughput.

Track metrics you can act on. Focus on acceptance rates for AI suggestions, fewer pipeline failures, shorter time from PR creation to merge, and developer satisfaction with review quality. Watch for over-reliance patterns where engineers follow AI suggestions without critical thinking.
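Two of these metrics are simple to compute once the raw data is exported. The sketch below assumes you already have PR creation and merge timestamps and counts of offered and accepted suggestions. The function names are illustrative and not tied to any particular analytics product.

```python
from datetime import datetime
from statistics import median


def median_hours_to_merge(prs):
    """Median time from PR creation to merge, in hours.

    prs is an iterable of (created_at, merged_at) datetime pairs.
    """
    durations = [(merged - created).total_seconds() / 3600 for created, merged in prs]
    return median(durations)


def acceptance_rate(accepted: int, offered: int) -> float:
    """Share of AI suggestions that developers accepted (0.0 when none were offered)."""
    return accepted / offered if offered else 0.0


prs = [
    (datetime(2026, 1, 1, 9), datetime(2026, 1, 1, 11)),  # 2 hours
    (datetime(2026, 1, 2, 9), datetime(2026, 1, 2, 15)),  # 6 hours
    (datetime(2026, 1, 3, 9), datetime(2026, 1, 3, 13)),  # 4 hours
]
print(median_hours_to_merge(prs))  # 4.0
print(acceptance_rate(1, 4))       # 0.25
```

The median is used instead of the mean so that a few long-lived PRs do not dominate the trend.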

Four Priority Areas for Senior Reviewers

Senior engineers can protect quality by focusing on four priority areas when reviewing AI-generated code.

- Data validation completeness: Confirm input sanitization, boundary checks, and error handling for every user-facing interface.

- Silent failure detection: Look for code paths that fail quietly and hide real issues, especially in asynchronous workflows.

- Performance implications: Review algorithmic complexity, memory usage, and database query efficiency.

- Maintainability factors: Check readability, documentation, and alignment with existing architectural patterns.
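Part of the silent failure check can be automated. As a minimal sketch, the hypothetical helper below uses Python's `ast` module to find exception handlers whose body is only `pass`. It covers just that one pattern and will miss other quiet failures, such as handlers that log and continue or unawaited coroutines.

```python
import ast


def silent_handlers(source: str) -> list[int]:
    """Return line numbers of except clauses whose body contains only `pass`."""
    lines = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler):
            if all(isinstance(stmt, ast.Pass) for stmt in node.body):
                lines.append(node.lineno)
    return sorted(lines)


code = (
    "try:\n"
    "    risky()\n"
    "except Exception:\n"
    "    pass\n"
    "try:\n"
    "    risky()\n"
    "except ValueError as err:\n"
    "    log(err)\n"
)
print(silent_handlers(code))  # [3]
```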

Start shipping higher-quality software faster with Gitar’s deep analysis and autonomous healing.

Frequently Asked Questions

How Gitar Differs from CodeRabbit and Other AI Review Tools

CodeRabbit and similar tools add inline comments and suggestions but leave engineers to implement fixes manually. Gitar’s healing engine applies validated fixes, commits working solutions, and guarantees green builds. While competitors charge $15–30 per developer for suggestion-only services, Gitar offers a comprehensive 14-day Team Plan trial that shows measurable velocity gains through autonomous problem resolution.

How Teams Can Trust Automated Commits from AI

Gitar supports configurable trust levels so teams can adopt automation gradually. You can start in suggestion mode where every fix needs approval, then enable auto-commit for specific failure types as confidence grows. The Gitar documentation explains how fixes are validated against your full CI environment, including SDK versions, multi-dependency builds, and third-party scans, so solutions work in production rather than only in isolation.
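The gradual-adoption idea can be pictured as a small policy table. The sketch below is purely illustrative and is not Gitar's configuration format. The `Mode` enum, the failure categories, and the `decide` function are all assumptions made for the example.

```python
from enum import Enum


class Mode(Enum):
    SUGGEST = "suggest"          # fix is proposed and needs human approval
    AUTO_COMMIT = "auto_commit"  # fix is committed without approval


# Hypothetical starting policy: only low-risk lint fixes are auto-committed.
DEFAULT_POLICY = {
    "lint": Mode.AUTO_COMMIT,
    "test": Mode.SUGGEST,
    "build": Mode.SUGGEST,
}


def decide(failure_type: str, fix_passed_ci: bool, policy=DEFAULT_POLICY) -> Mode:
    """Choose how to deliver a fix for a given failure type."""
    # A fix that did not pass validation is never auto-committed.
    if not fix_passed_ci:
        return Mode.SUGGEST
    # Unknown failure types fall back to the safest mode.
    return policy.get(failure_type, Mode.SUGGEST)


print(decide("lint", fix_passed_ci=True))   # Mode.AUTO_COMMIT
print(decide("test", fix_passed_ci=True))   # Mode.SUGGEST
```

Defaulting unknown categories to suggestion mode means that widening automation is always an explicit decision rather than a side effect.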

How Gitar Handles Complex CI Environments

Gitar handles complex CI setups by emulating your full environment during fix validation. The Gitar documentation details the Enterprise tier, which runs agents inside your CI pipeline with access to secrets and caches. This approach ensures fixes respect your infrastructure constraints. The platform supports GitHub Actions, GitLab CI, CircleCI, and Buildkite across many programming languages.

Metrics That Reveal AI Code Review Impact

Teams should track time-to-merge reduction, CI failure rate improvements, and developer satisfaction scores. Gitar provides analytics, CI failure categorization, and pattern recognition for platform teams. You can monitor both leading indicators such as fix acceptance rates and lagging indicators such as incident reduction over time.

Conclusion: Scaling Past the Post-AI Review Bottleneck

The AI coding wave has moved the main bottleneck from writing code to validating it. Teams that adopt zero-trust frameworks, autonomous fixing, and rich context management can reach the 3x velocity that AI promises, while suggestion-only tools fall short.

The seven best practices in this guide give senior engineers a clear framework for handling AI-generated code at scale. From zero-trust security policies to natural language automation, these approaches address the surge in PR review load that comes with 91% AI adoption among developers, while preserving code quality and architecture.

Start your 14-day Team Plan trial at Gitar to experience the shift from suggestion engines to autonomous code healing. Turn your review process from manual grind into intelligent automation and ship higher quality software, faster.