Key Takeaways

- AI code review tools for private repositories introduce risks like data exfiltration, prompt injection, and IP leaks, especially in cloud-based systems.

- By some estimates, AI-generated code contains about 30% more security vulnerabilities than human-written code, including SQL injection, XSS, hardcoded secrets, and path traversal flaws.

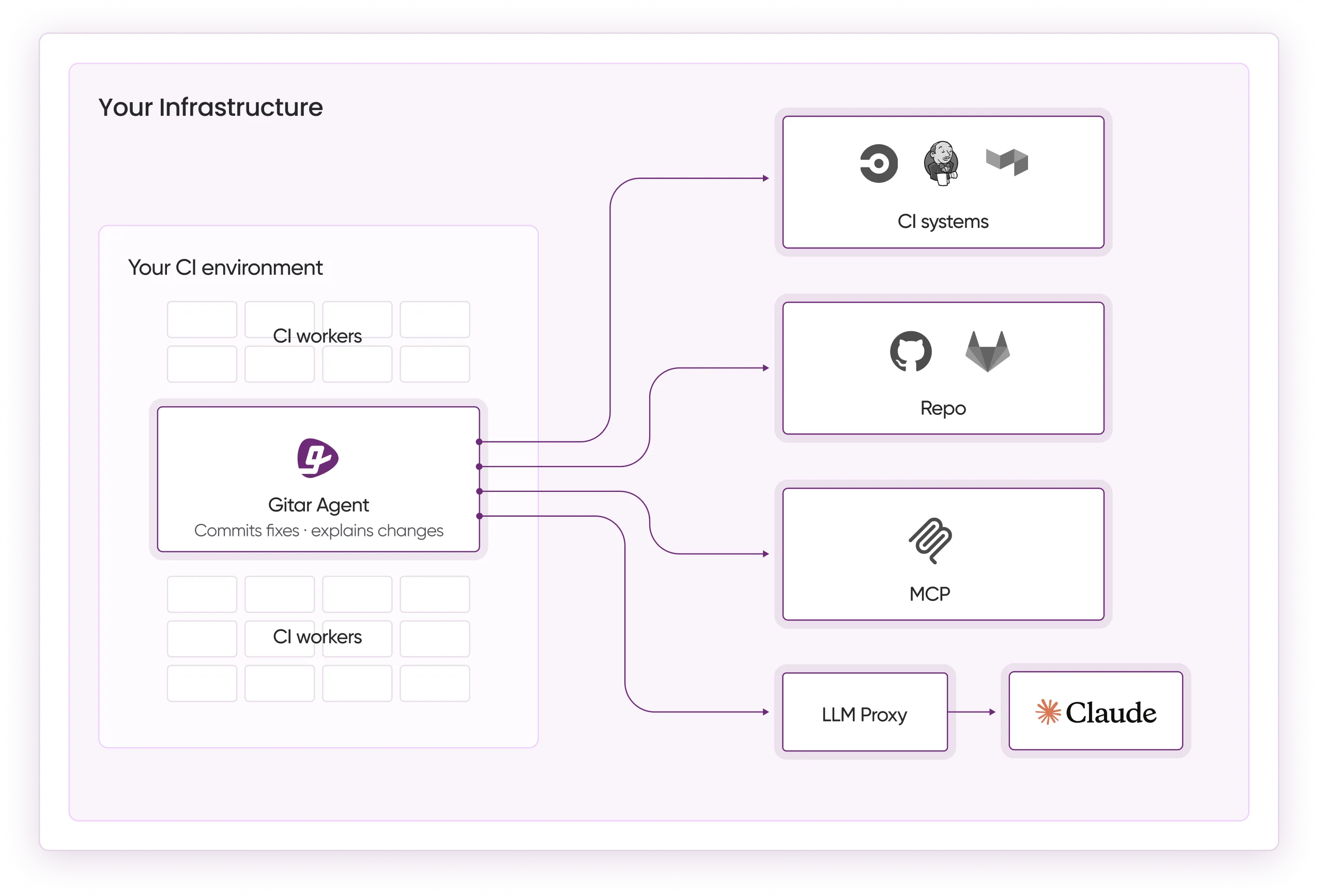

- Self-hosted deployments remove code transmission risks. Gitar’s Enterprise Plan processes everything inside your CI infrastructure.

- Gitar provides validated auto-fixes, CI integration, and no-training policies, outperforming suggestion-only tools like CodeRabbit and Greptile.

- Start your 14-day free Team Plan trial with Gitar at https://gitar.ai/ for secure AI code review on unlimited private repos.

The New Security Problem AI Code Review Introduces

AI code review introduces attack vectors that traditional security models never had to consider. A 2025 analysis identified persistent security vulnerabilities in AI-generated code, including SQL injection attacks where AI tools concatenate user strings directly into queries without parameterization.
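The SQL injection pattern is easier to see in code. Below is a minimal Python sketch using the standard-library `sqlite3` module. The `users` table and both function names are hypothetical. The point is the contrast between building a query by string concatenation and using a parameterized query.

```python
import sqlite3

def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Vulnerable pattern: user input is concatenated into the SQL text,
    # so a value like "x' OR '1'='1" changes the query's logic.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()

def find_user_safe(conn: sqlite3.Connection, username: str):
    # Parameterized query: the driver passes username as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    payload = "x' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
    print(len(find_user_safe(conn, payload)))    # 0: treated as a literal name
```

Whenever user input reaches a query, a reviewer should expect to see placeholders like these. Depending on the driver, that is `?` or `%s`.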

Several critical vulnerability classes now recur in AI-generated code and in the AI tools that review it.

Insecure File Handling: AI-generated code often permits path traversal by trusting user-supplied filenames without validation. Combined with missing file-type checks, this can let attackers upload malicious executables to production systems.
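The missing validation can be sketched in a few lines of Python. The approach resolves the user-supplied name against a fixed upload directory, rejects anything that escapes it, and allows only expected file extensions. `UPLOAD_ROOT`, `ALLOWED_SUFFIXES`, and `safe_upload_path` are hypothetical names. `Path.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import Path

# Hypothetical upload directory used only for this example.
UPLOAD_ROOT = Path("/srv/app/uploads")

# Example allowlist of extensions; adjust to what your service actually accepts.
ALLOWED_SUFFIXES = {".png", ".jpg", ".pdf"}

def safe_upload_path(filename: str, root: Path = UPLOAD_ROOT) -> Path:
    """Resolve a user-supplied filename inside root, or raise ValueError."""
    root = root.resolve()
    # resolve() collapses ".." segments, so the containment check below
    # sees where the path really points.
    candidate = (root / filename).resolve()
    # Reject anything outside the upload directory, e.g. "../../etc/passwd",
    # or an absolute path like "/etc/passwd" (joining it replaces root).
    if not candidate.is_relative_to(root):
        raise ValueError(f"path escapes upload directory: {filename!r}")
    # Reject executable or unexpected file types via the extension allowlist.
    if candidate.suffix.lower() not in ALLOWED_SUFFIXES:
        raise ValueError(f"file type not allowed: {candidate.suffix!r}")
    return candidate
```

An extension allowlist is only a first filter. Production upload handlers typically also inspect file contents. They also store uploads outside any directory the web server executes from.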

Hardcoded Secrets: AI models trained on public repositories can hallucinate hardcoded secrets. They can also reproduce patterns that embed credentials in source code instead of reading them from environment variables.
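The safer pattern reads credentials from the environment at runtime, where a CI system or secret manager injects them, and fails loudly if they are missing. Here is a minimal sketch. `require_secret`, `MissingSecretError`, and the variable names are hypothetical examples.

```python
import os

class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""

def require_secret(name: str) -> str:
    # Read the credential from the environment (populated by your CI secret
    # store or deployment platform) instead of embedding it in source code.
    value = os.environ.get(name)
    if not value:
        # Fail fast rather than falling back to a hardcoded default.
        raise MissingSecretError(f"required secret {name!r} is not set")
    return value

# Anti-pattern often seen in generated code:
#   DB_PASSWORD = "hunter2"
# Preferred: resolve at runtime (the variable name here is only an example).
#   DB_PASSWORD = require_secret("DB_PASSWORD")
```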

Prompt Injection Vulnerabilities: Security research in 2026 identified more than 30 vulnerabilities in AI code review tools that could be exploited through prompt injection.

These vulnerabilities create a “Security Remediation Cycle.” Teams must run extensive penetration testing and remediation on AI-generated code before production. That extra work often cancels out the speed gains from AI-assisted development.

Copilot, Private Repos, and IP Exposure Risks

GitHub Copilot Business uses a no-training policy for private repositories, yet its cloud-based deployment still creates exposure risks. The 2025 CamoLeak vulnerability showed how an attacker could combine prompt injection with a Content Security Policy (CSP) bypass in Copilot Chat to exfiltrate private code, despite stated privacy protections.

Developer communities on Reddit regularly raise concerns about AI code review tools accessing private repositories. Research published by CodeRabbit in December 2025 found that AI-coauthored PRs have roughly 1.7× more issues than human-written code. That gap matters because AI reviewers may share the blind spots of the AI generators whose output they check. The result is a risk of confirmation bias when AI tools review AI-generated code.

The core problem comes from cloud-based architectures that send code to external servers for analysis. Even with encryption in transit and at rest, this model opens attack surfaces that self-hosted solutions avoid entirely.

Security Reality of AI-Generated Code

Multiple analyses report higher vulnerability rates in AI-generated code than in human-written code. Developer trust in the accuracy of AI-generated code dropped to 29% in 2025, reflecting growing awareness of systematic issues in AI outputs.

Security challenges include logic errors that traditional tests miss, race conditions in concurrent code, and poor error handling that exposes sensitive system information. AI models often produce code that looks correct and passes basic checks but hides subtle security flaws that appear only under specific conditions.
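Here is a minimal Python sketch of safer error handling. It logs the full exception server-side with a correlation ID and returns only a generic message to the caller. The unsafe alternative echoes `str(exc)` or a traceback, which may reveal hostnames, file paths, or query text. The response shape and the `handle_request` name are hypothetical.

```python
import logging
import uuid

logger = logging.getLogger("app")

def handle_request(process, payload):
    """Run process(payload); on failure, log details internally and return a generic error."""
    try:
        return {"status": 200, "body": process(payload)}
    except Exception:
        # The correlation ID lets operators find the full traceback in server
        # logs without exposing stack traces, file paths, or SQL to the caller.
        incident_id = uuid.uuid4().hex
        logger.exception("request failed (incident %s)", incident_id)
        return {"status": 500, "body": {"error": "internal error", "incident": incident_id}}
```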

Validation becomes essential when AI tools review AI-generated code. Gitar’s approach uses CI-validated auto-fixes that test proposed changes against your real build environment. This process catches vulnerabilities that Copilot and other generation tools miss during initial code creation. See the Gitar documentation for implementation details.
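Gitar's internals are not described here, so the following is only a generic, hypothetical sketch of validating a fix before it lands. It copies the repository to a scratch directory and applies the proposed change there. It then runs your build or test command and accepts the fix only if that command exits successfully. `fix_passes_ci`, `apply_fix`, and `check_cmd` are illustrative names, not a Gitar API.

```python
import shutil
import subprocess
import tempfile
from pathlib import Path
from typing import Callable, Sequence

def fix_passes_ci(
    repo_dir: Path,
    apply_fix: Callable[[Path], None],
    check_cmd: Sequence[str],
    timeout_s: float = 600,
) -> bool:
    """Apply a candidate fix to a scratch copy of the repo and run the check command.

    Returns True only if the command exits with status 0. The original
    repo_dir is never modified, so a failing fix leaves no trace.
    """
    with tempfile.TemporaryDirectory() as tmp:
        work = Path(tmp) / "repo"
        shutil.copytree(repo_dir, work)
        apply_fix(work)  # hypothetical hook that writes the proposed change
        result = subprocess.run(
            list(check_cmd), cwd=work, capture_output=True, timeout=timeout_s
        )
        return result.returncode == 0
```

In a real pipeline, `check_cmd` would be your existing test entry point, such as `["pytest", "-q"]` or `["make", "test"]`.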

Specific Security Risks for Private Repositories

Forum discussions across Reddit and other developer communities show consistent concern about AI code review security. Some teams report incidents where private repository contents surfaced in AI model outputs, even after vendors promised strict data isolation.

The main risks include:

Data Exfiltration: Cloud-based AI tools must send code to external servers. That requirement creates opportunities for interception or unauthorized access.

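Model Training Contamination: No-training policies are only as strong as their enforcement. If private code does reach a training pipeline through a policy gap or misconfiguration, it could influence model behavior indirectly. In edge cases, it could even be reconstructed from model weights.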

Confirmation Bias at Scale: AI tools that review AI-generated code create blind spots. Both generation and review tools can miss the same vulnerability categories.

Why Self-Hosted Deployment Protects Private Code

Self-hosted deployment offers clear security advantages over cloud-based solutions. Greptile supports on-premises deployment for complete data control in private repositories and is SOC 2 compliant. It integrates with GitHub and GitLab to run automated PR and MR reviews through webhooks, with CLI support for CI/CD pipelines.
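Any self-hosted, webhook-driven reviewer should confirm that incoming events really come from GitHub before it touches repository code. When a webhook secret is configured, GitHub sends an HMAC-SHA256 signature of the raw request body in the `X-Hub-Signature-256` header, formatted as `sha256=<hex digest>`. The minimal Python sketch below checks that signature. It is a generic example, not Greptile's implementation, and `verify_github_signature` is a hypothetical name.

```python
import hashlib
import hmac
from typing import Optional

def verify_github_signature(secret: bytes, body: bytes, signature_header: Optional[str]) -> bool:
    """Check GitHub's X-Hub-Signature-256 header against the raw request body."""
    if not signature_header or not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```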

Self-hosted solutions remove the primary attack vector of sending code to external servers. Tools like PR-Agent support fully local deployments using Ollama with zero external API dependencies. These tools can provide strong security, although they may offer fewer integrated features than platforms that combine review, validation, and automation.
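One way to enforce zero external API dependencies in a local setup is an egress guard that refuses to send code anywhere except the local machine. The sketch below assumes Ollama's default REST endpoint, `http://localhost:11434/api/generate`, which accepts `model`, `prompt`, and `stream` fields. The function names, prompt wording, and model name are placeholders. This is not PR-Agent's own configuration.

```python
import ipaddress
import json
import urllib.request
from urllib.parse import urlparse

# Ollama's default local REST endpoint for text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"

def is_loopback_endpoint(url: str) -> bool:
    """True only if the URL targets this machine (localhost or a loopback IP literal)."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        # Assumes localhost maps to the loopback interface, as on standard systems.
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname could resolve to an external server.
        return False

def make_review_request(diff: str, model: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a review request, refusing non-local endpoints."""
    if not is_loopback_endpoint(url):
        raise ValueError(f"refusing to send code to non-local endpoint: {url}")
    prompt = "Review this diff for security issues:\n\n" + diff
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
```

With Ollama running and a model pulled, `urllib.request.urlopen(make_review_request(diff, "<model>"))` would send the request. A remote URL raises `ValueError` before any code leaves the machine.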

How Gitar Secures AI Code Review for Private Repos

Gitar’s security model addresses the core weaknesses of cloud-based AI code review through Enterprise Plan deployment options, as described in the Gitar documentation. Gitar offers a 14-day free Team Plan trial with unlimited public and private repositories, full PR analysis, security scanning, bug detection, performance review, and auto-fix capabilities for your entire team with no seat limits during the trial.

Most competitors act as suggestion engines that still require manual implementation. Gitar instead provides autonomous fixes that validate against your actual CI environment before changes land in your main branches.

| Capability | Gitar | CodeRabbit | Greptile |
|---|---|---|---|
| Auto-Fix Capability | Yes (Trial/Team) | No | No |
| Private Repo Security (Self-Hosted) | Yes (Enterprise) | No | Partial |
| CI Integration | Yes | No | Yes |
| No-Training Policy | Yes (Enterprise) | Yes | Yes |

Engineering teams at Tigris and Collate report that Gitar delivers concise PR summaries and flags unrelated PR failures, which saves significant development time. Gitar's own ROI calculations estimate about 75% time savings, or roughly $750,000 annually for a 20-developer team.

Install Gitar now at https://gitar.ai/ to automatically fix broken builds and start shipping higher-quality software, faster.

7 Best Practices to Secure AI Code Review

1. Implement No-Training Policies: Require AI code review vendors to commit in writing that they will not use your private repository code for model training or improvement.

2. Deploy Self-Hosted Agents: Use CI-integrated solutions like Gitar’s Enterprise Plan that run inside your infrastructure. Refer to the Gitar documentation for setup steps.

3. Combine Human and SAST Reviews: Layer static application security testing (SAST), manual review, and process controls. That way, no single tool or reviewer is the only safeguard for AI-generated code.

4. Implement Comprehensive Secrets Scanning: Scan for secrets automatically before merging, using detection methods that go beyond simple pattern matching. Maintain a clear workflow for revoking any credential that leaks.

5. Maintain Detailed Audit Logs: Track all AI-generated code, tools used, and review steps. Use these logs to investigate incidents and refine your security practices.

6. Configure Controlled Auto-Commit: Begin with suggestion mode for AI fixes. Build trust, then enable automated commits for specific, low-risk failure types.

7. Use Natural Language Security Rules: Define repository-specific security policies in natural language. Trigger automatic security reviews whenever sensitive code paths change.
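Practice 4 mentions detection that goes beyond pattern matching. A common complement to known-format regexes is Shannon entropy. Randomly generated tokens have high entropy per character, while ordinary identifiers do not. The Python sketch below combines one known pattern, long-term AWS access key IDs (which begin with `AKIA`), with an entropy check on long string literals. The 20-character minimum and the 4.0-bit threshold are assumptions to tune against your own codebase. Production scanners combine far more rules than this.

```python
import math
import re
from collections import Counter

# Known-format pattern: long-term AWS access key IDs are "AKIA" plus 16 characters.
KNOWN_PATTERNS = {"aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b")}

# Quoted string literals long enough to plausibly be credentials.
STRING_LITERAL = re.compile(r"""["']([A-Za-z0-9+/=_\-]{20,})["']""")

def shannon_entropy(s: str) -> float:
    """Bits of entropy per character; random tokens score high, words score low."""
    counts = Counter(s)
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in counts.values())

def scan_line(line: str, entropy_threshold: float = 4.0) -> list[str]:
    """Return the names of any findings on a single source line."""
    findings = []
    for name, pattern in KNOWN_PATTERNS.items():
        if pattern.search(line):
            findings.append(name)
    for match in STRING_LITERAL.finditer(line):
        if shannon_entropy(match.group(1)) >= entropy_threshold:
            findings.append("high_entropy_string")
    return findings
```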

Safest AI Code Review Tools in 2026

Based on security architecture, deployment options, and validation capabilities, Gitar currently leads the market for secure AI code review in private repositories. Its CI-agent approach removes the main attack vectors while delivering automation that many competitors do not match.

Other tools provide partial coverage. Greptile offers on-premises deployment with GitHub and GitLab integrations and CI/CD support through a CLI. CodeRabbit focuses on cloud-based suggestions without fix validation. PR-Agent supports fully self-hosted, air-gapped deployments using local models served through Ollama, with no external API dependencies.

Frequently Asked Questions

Is AI code review safe for private repos?

AI code review can be safe for private repositories when teams apply the right security controls. Key factors include deployment architecture, data handling policies, and validation mechanisms. Gitar supports unlimited public and private repositories during its 14-day Team Plan trial and offers an Enterprise Plan where the agent runs in your CI pipeline with full access to configs, secrets, and caches. No code leaves your infrastructure in the Enterprise deployment. Details appear in the Gitar documentation.

Does Gitar send code to external servers?

Gitar’s Enterprise Plan runs the agent entirely inside your CI pipeline and infrastructure, with full access to your configurations, secrets, and caches. No code leaves your environment. The Team Plan, which includes a 14-day free trial, supports unlimited public and private repositories with full AI code review features. See the Gitar documentation for more information.

How does Gitar handle CI security?

Gitar uses multiple security layers for CI integration across GitHub Actions, GitLab Pipelines, CircleCI, Buildkite, and other systems. The platform validates all fixes against your actual CI environment before applying changes, which helps prevent new failures from automated fixes. A configurable auto-commit feature lets teams control automation levels, starting with suggestion mode and gradually enabling automated fixes for trusted scenarios. Refer to the Gitar documentation.
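The staged rollout described here can be expressed as a simple policy gate. This is a hypothetical Python sketch, not Gitar's configuration format. Three kinds of fixes stay in suggestion mode: those that have not passed CI, those for failure types outside a team-chosen allowlist, and those that touch sensitive paths. Everything else may be committed automatically. The allowlist, path prefixes, and class names are placeholders.

```python
from dataclasses import dataclass
from enum import Enum

class Mode(Enum):
    SUGGEST = "suggest"     # post the fix as a review suggestion only
    AUTO_COMMIT = "commit"  # push the validated fix automatically

# Hypothetical allowlist: failure categories the team has decided are low risk.
AUTO_COMMIT_ALLOWLIST = {"lint", "formatting", "snapshot_update"}

# Hypothetical paths where automated commits are never allowed.
PROTECTED_PREFIXES = ("auth/", "payments/", ".github/workflows/")

@dataclass
class ProposedFix:
    failure_type: str
    files: list[str]
    ci_passed: bool

def choose_mode(fix: ProposedFix) -> Mode:
    # Fixes that have not passed CI are never committed automatically.
    if not fix.ci_passed:
        return Mode.SUGGEST
    if fix.failure_type not in AUTO_COMMIT_ALLOWLIST:
        return Mode.SUGGEST
    # Any change touching sensitive code paths goes back to human review.
    if any(f.startswith(PROTECTED_PREFIXES) for f in fix.files):
        return Mode.SUGGEST
    return Mode.AUTO_COMMIT
```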

What about CamoLeak and similar vulnerabilities?

CamoLeak and similar vulnerabilities mainly affect cloud-based AI tools that send code to external servers for processing. Gitar's Enterprise Plan uses a self-hosted CI-agent architecture that processes all code inside your infrastructure. In Enterprise deployments, the platform never sends your private repository code to external AI services. This design is intended to close off the kind of cloud data exposure that CamoLeak exploited.

Does Copilot read private repos?

GitHub Copilot Business uses a no-training policy for private repositories, so it does not use private code to train its models. However, the cloud-based architecture still sends code to GitHub’s servers for processing, which creates potential exposure risks. GitHub provides security assurances, yet the architecture differs from self-hosted solutions that process code entirely inside your controlled environment.

Conclusion: Secure Private Repos with Proven AI Code Review

Security risks from AI code review for private repositories are real and significant, yet teams can manage them with the right architecture. Cloud-based suggestion engines create exposure risks and require manual implementation of fixes. Gitar’s Enterprise Plan removes these vulnerabilities while delivering comprehensive automation.

Secure AI code review depends on architectures that process code inside your infrastructure, combined with validation that ensures fixes work correctly before they merge. As AI coding adoption accelerates, teams need solutions that scale review capacity without sacrificing security.

Gitar’s CI-agent approach in the Enterprise Plan moves beyond suggestion engines to true healing automation. The 14-day Team Plan trial offers unlimited access to public and private repositories with full features, including security scanning. By processing code with full CI context and providing validated auto-fixes, the platform helps teams scale safely.

Start your 14-day Team Plan trial at https://gitar.ai/ to secure your private repos risk-free. Install Gitar now, automatically fix broken builds, and ship higher-quality software, faster.