Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI coding tools create a “slower before faster” paradox, with PR review times up 91% and developers 19% slower despite feeling faster.

- AI-generated code introduces 75% more logic issues, which widens the trust gap so only 29% of developers fully trust AI output.

- Gitar’s healing engine auto-fixes CI failures and review feedback, while suggestion-only tools still force developers to apply changes manually.

- Adoption typically follows four phases over 1–3 months, and Gitar shortens this curve with zero-setup integration and a proven 45% PR cycle time reduction.

- Teams can save up to $750K annually, and you can start your free trial today to skip the dip and ship faster.

The Problem: Why AI Code Review Feels Slower Before It Gets Faster

The current AI adoption crisis shows up clearly in the numbers. Companies increasing AI adoption from 0% to 100% saw PRs per engineer increase 113%, which overwhelms human reviewers. A “verification tax” then consumes the time saved writing code, because that time goes to auditing and verifying the output.

This volume surge helps explain the 91% increase in PR review time noted earlier. The trust gap compounds the problem. Developers accept fewer than 44% of AI code suggestions, and 75% review every single line of AI output. Only 29% trust AI tools, which is down 11 percentage points from 2024.

This trust deficit stems partly from how traditional AI code review tools operate, because they provide suggestions without fixes. Teams pay $15–30 per developer each month for tools that identify problems but leave implementation to humans. This pattern creates notification spam, constant context switching, and the same manual bottlenecks that existed before AI adoption.

The Solution: Gitar’s Healing Engine That Fixes Code Instead of Just Flagging It

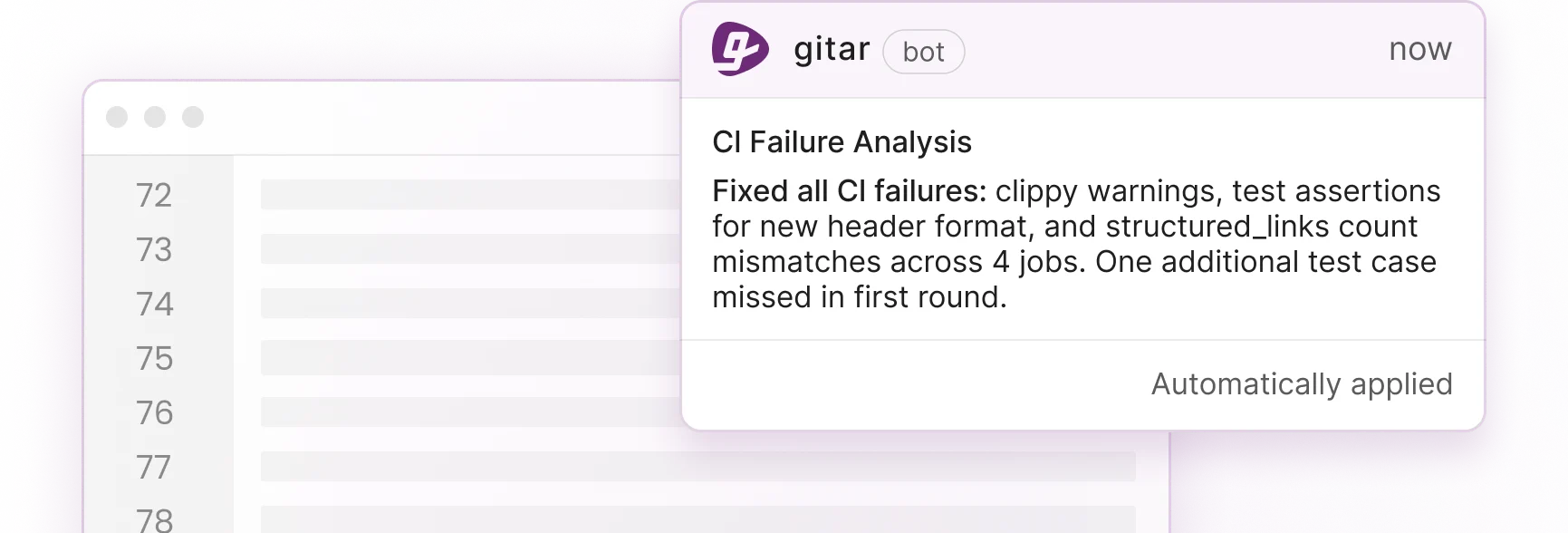

Gitar shifts teams from suggestion engines to a true healing engine that repairs code. While competitors charge premium prices for basic commentary, Gitar automatically resolves CI failures, addresses review feedback, and drives PRs to green builds. The platform includes a comprehensive 14-day free Team Plan trial with no seat limits so teams can experience auto-fixing capabilities before committing. The Gitar documentation explains setup, configuration, and supported workflows in detail.

Key differentiators include:

- Zero-setup GitHub and GitLab integration

- Natural language rules configuration via Gitar’s documentation system

- Native Jira and Slack integrations for seamless workflow automation

- Single-comment UI that consolidates all findings and updates in one place

The fundamental difference becomes clear when you compare core capabilities. Competing tools stop at suggestions, while Gitar automatically applies fixes and analyzes CI failures.

| Capability | CodeRabbit/Greptile | Gitar |
| --- | --- | --- |
| PR Summaries | Yes | Yes (Trial/Team) |
| Inline Suggestions | Yes | Yes (Trial/Team) |
| Auto-Apply Fixes | No | Yes (Trial/Team) |
| CI Failure Analysis | No | Yes |

Teams like Tigris report Gitar’s summaries are “more concise than competitors,” and Collate highlights the “unrelated PR failure detection” as saving “significant time.” Try Gitar’s auto-fixing capabilities yourself.

Key Phases of the AI Code Review Learning Curve

Teams that understand the typical adoption timeline set realistic expectations and move faster through each stage. The table below maps the four phases most teams experience and shows how Gitar’s architecture removes the main bottlenecks at each step.

| Phase | Timeline | Focus | Gitar Flattener |
| --- | --- | --- | --- |
| 1: Installation Dip | 1–2 days | Setup and first PRs | Zero-setup app install |
| 2: Trust-Building | 1–2 weeks | Suggestion mode | Configurable trust levels |
| 3: Automation Fluency | 2–4 weeks | Auto-commits | Healing engine auto-fixes |
| 4: Platform Mastery | 1–3 months | Rules and analytics | Jira and Slack plus analytics |

Developers need about 11 weeks, or more than 50 hours, with a specific AI coding tool to achieve meaningful productivity gains. However, Atlassian achieved a 45% reduction in median PR cycle time shortly after implementing AI code reviews in early 2025, which validates the potential of reaching the later phases in this timeline.

Real Dev Timelines from GitHub and Reddit: Pitfalls and Myths Debunked

Mitchell Hashimoto’s AI adoption journey illustrates a three-phase reality: inefficiency, adequacy, and workflow transformation. His experience mirrors industry patterns where an initial productivity dip gives way to significant gains once teams develop reliable workflows and verification methods.

Common myths persist about the “70% productivity problem.” The reality is more nuanced: the initial slowdown noted earlier coexists with long-term gains, including 24% cycle time improvements for teams that reach full adoption.

The key to reaching that 24% improvement faster lies in removing the manual implementation bottleneck. Gitar’s healing engine bypasses traditional blockers by automatically implementing fixes instead of only suggesting them. The single-comment interface cuts notification noise, and auto-fixes prevent the endless review loops that plague suggestion-based tools. Experience the healing engine approach firsthand.

Flattening the Curve: Strategies That Shorten the AI Review Learning Period

Teams flatten the AI code review learning curve when they roll out automation in deliberate stages. The four strategies below align with the phases described earlier and guide teams from cautious trials to confident automation.

- Start with low-risk suggestion mode to build team confidence before you enable auto-commits.

- Invest in prompting and CI training while trust builds so developers can validate AI output effectively.

- Integrate early with natural language rules via platforms like Gitar once developers understand the tool’s capabilities and want to customize behavior.

- Measure DORA metrics throughout all phases to track progress objectively and decide when to move to the next trust level.

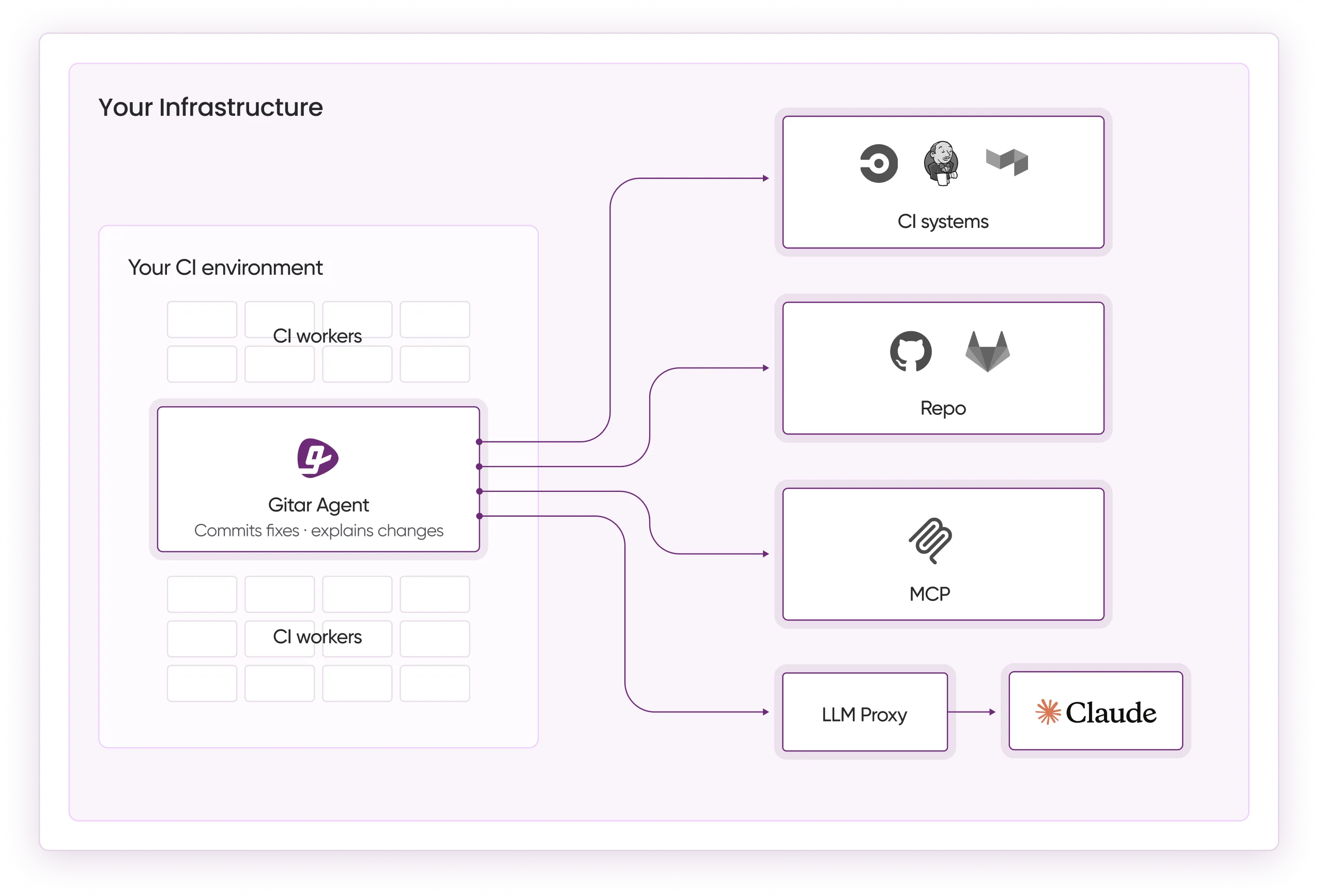

Gitar-specific advantages include CI environment emulation and hierarchical memory context that surpasses competitors. The platform learns team patterns and maintains context across PRs, repositories, and organizations.

These capabilities translate directly into measurable time and cost savings. The table below shows a before and after comparison for a typical 20-developer team.

| Metric | Before Gitar | After Gitar |
| --- | --- | --- |
| CI/Review Time per Dev | 1 hour/day | ~15 min/day |
| Annual Cost (20-dev team) | $1M | $250K |
| PR Cycle Time | 91% spike | Improved |
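The cost figures in the table can be reproduced with simple arithmetic. The sketch below is illustrative only: it assumes 250 working days per year and a fully loaded cost of $200 per developer-hour. Neither value is stated in this article; they are chosen because together they reproduce the $1M baseline for a 20-developer team.

```python
def annual_review_cost(devs, hours_per_day, hourly_rate=200.0, working_days=250):
    """Yearly cost of time spent on CI failures and review feedback.

    hourly_rate and working_days are assumptions, not figures from the article.
    """
    return devs * hours_per_day * working_days * hourly_rate


before = annual_review_cost(devs=20, hours_per_day=1.0)   # 1 hour/day per dev
after = annual_review_cost(devs=20, hours_per_day=0.25)   # ~15 min/day per dev
savings = before - after

print(f"Before:  ${before:,.0f}")
print(f"After:   ${after:,.0f}")
print(f"Savings: ${savings:,.0f}")
```

Under these assumptions the difference is $750K per year. Substituting your own team size and loaded hourly rate gives a more realistic estimate.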

Frequently Asked Questions

How long until ROI from AI code review tools?

Most teams see initial ROI within 1–3 months, which aligns with the four-phase adoption timeline. Gitar’s auto-fixing capabilities can demonstrate value during the 14-day trial period. Atlassian’s results, the 45% improvement noted earlier, show how quickly teams can benefit, and new engineers using AI code review merged their first pull request five days faster than those without AI assistance.

How do you build trust in auto-commits for AI code review?

Trust grows through configurable automation levels and visible results. Start with suggestion mode where you approve every fix, then gradually enable auto-commits for specific failure types such as lint errors or test failures. Gitar validates fixes against your actual CI environment before committing, which helps ensure changes work in production.
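The staged approach described above can be pictured as a simple policy gate. The sketch below is a hypothetical illustration, not Gitar's configuration format or API: the `TrustPolicy` class, its field, and the failure-type labels are all invented names. It only shows the idea of auto-committing a fix when its failure type has been explicitly allowed and the fix has passed CI validation.

```python
from dataclasses import dataclass, field


@dataclass
class TrustPolicy:
    """Hypothetical trust-level policy; not a real Gitar setting."""

    # Failure types the team has approved for auto-commit (empty = suggestion mode).
    auto_commit_types: set[str] = field(default_factory=set)

    def decide(self, failure_type: str, ci_passed: bool) -> str:
        # Auto-commit only for allowed failure types whose fix passed CI.
        if failure_type in self.auto_commit_types and ci_passed:
            return "auto-commit"
        # Everything else stays a suggestion that a human approves.
        return "suggest"


suggestion_only = TrustPolicy()        # starting point: approve every fix
staged = TrustPolicy({"lint"})         # later: allow lint fixes only

print(suggestion_only.decide("lint", ci_passed=True))
print(staged.decide("lint", ci_passed=True))
print(staged.decide("test", ci_passed=True))
```

Widening the allowed set one failure type at a time mirrors the gradual move from suggestion mode to auto-commits.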

Does AI code review work with complex CI environments?

Yes, modern AI code review platforms like Gitar emulate your complete CI environment, including specific SDK versions, multi-dependency builds, and third-party security scans. The platform supports GitHub Actions, CircleCI, GitLab CI, and Buildkite. Enterprise deployments can run agents within your own CI pipeline with full access to secrets and caches, which ensures fixes work with your exact production configuration.

What causes the initial productivity dip in AI code review adoption?

This productivity dip stems from verification overhead, trust gaps, and learning new workflows. Developers spend time reviewing AI suggestions, implementing manual fixes, and adapting to new tools. Gitar minimizes this dip through auto-fixing capabilities that remove manual implementation steps and single-comment interfaces that reduce cognitive load.

How do you measure success during the AI code review learning curve?

Track DORA metrics including deployment frequency, lead time for changes, change failure rate, and time to restore service. Monitor PR cycle times, CI failure rates, and developer satisfaction scores. Successful adoption shows decreasing cycle times, fewer CI reruns, and improved developer velocity after the initial 2–4 week learning period.
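As a rough illustration of tracking two of these metrics, the sketch below computes median lead time for changes and change failure rate from a list of deployment records. The `Deployment` record and the sample data are made up for the example; in practice the timestamps would come from your own version control and deployment systems.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Deployment:
    committed_at: datetime   # when the change was committed
    deployed_at: datetime    # when it reached production
    failed: bool             # whether it caused a production failure


def lead_time_hours(deploys):
    """Median hours from commit to production deploy."""
    return median(
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    )


def change_failure_rate(deploys):
    """Fraction of deployments that caused a failure."""
    return sum(d.failed for d in deploys) / len(deploys)


# Made-up sample data for illustration.
deploys = [
    Deployment(datetime(2025, 1, 6, 9), datetime(2025, 1, 6, 13), failed=False),
    Deployment(datetime(2025, 1, 7, 9), datetime(2025, 1, 7, 19), failed=True),
    Deployment(datetime(2025, 1, 8, 9), datetime(2025, 1, 8, 15), failed=False),
]

print(f"Median lead time: {lead_time_hours(deploys):.1f} h")
print(f"Change failure rate: {change_failure_rate(deploys):.0%}")
```

Comparing these values week over week, starting before the rollout, gives an objective view of whether cycle times are actually decreasing.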

Conclusion: Use Gitar to Master the AI Code Review Learning Curve

The learning curve for adopting AI code review automation tools does not have to trap teams in a “slower before faster” cycle. Industry data shows a 91% spike in PR review time and an early productivity dip, yet the right platform can flatten these curves from day one.

Gitar’s healing approach, which automatically fixes code instead of only flagging issues, removes the manual bottlenecks that slow suggestion-based tools. With zero-setup installation, configurable trust levels, and comprehensive CI integration, teams can reach velocity improvements that make AI code review adoption worthwhile.

The future belongs to teams that embrace autonomous code review while maintaining quality standards. Install Gitar now to eliminate manual review bottlenecks and experience automatic CI fixes that drive green builds.