Why Enterprise Teams Use Gitar To Unblock AI-Driven PRs

- AI tools accelerate code generation 3-5x but increase PR review times by 91%, contributing to roughly $1M in annual CI and review costs for a 20-developer team.

- Traditional AI code review tools like CodeRabbit charge $15-30 per developer per month for suggestion-only reviews. These reviews add notification noise and leave every fix to developers.

- Gitar pairs free AI code review with a healing engine that automatically fixes CI failures, implements review feedback, and guarantees green builds. It supports enterprise codebases of 50M+ lines of code.

- Gitar outperforms competitors with validated auto-commits, unrelated failure detection, and single updating comments, cutting daily CI and review time from 1 hour to 15 minutes per developer.

- Turn your post-AI workflow from a bottleneck into a breakthrough: install Gitar for free code review across unlimited repositories and a 14-day free autofix trial.

The Right-Shift Bottleneck Slowing AI-Accelerated Teams

92% of developers use AI tools in 2025, and Microsoft reports 30% of its code is now AI-written. This acceleration produces more code, more PRs, more tests, more potential failures, and more review cycles. Teams face notification fatigue from suggestion-only tools that scatter inline comments across diffs and flood inboxes with dozens of alerts per push.

The financial impact hits enterprise teams directly. A typical 20-developer team loses about $1M annually on CI retries, context switching, and review delays. In one study, developers were 19% slower with AI assistants even though they felt 20% faster, which shows the gap between perceived and real productivity. The bottleneck has shifted from writing code to validating and merging it, so sprint velocity stalls even as code generation speeds up.

Healing Engines: From AI Suggestions To Automatic Fixes

Most AI code review tools analyze PRs and leave comments. They function as suggestion engines, so developers still have to implement every fix by hand. This approach misreads the problem: the bottleneck is fixing issues, not finding them. Modern teams need healing engines that identify problems and apply working fixes automatically.

Gitar pioneered this healing model with free, comprehensive AI code review that goes beyond suggestions:

- CI failure analysis and automatic fixing of build errors, lint failures, and test failures

- Review feedback implementation through natural language commands

- Single updating comment that consolidates all findings instead of notification spam

- Validation that fixes actually work before committing them

- Enterprise scale supporting 50M+ lines of code with unlimited repositories
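The single updating comment in the list above follows a common bot pattern: find your own earlier comment and edit it instead of posting a new one. The following is a minimal sketch of that pattern, not Gitar's implementation. It assumes a hypothetical hidden marker string that tags the bot's comment and uses an in-memory `PullRequest` stand-in instead of a real Git hosting API.

```python
from dataclasses import dataclass, field

# Hypothetical hidden marker that identifies the bot's own summary comment.
MARKER = "<!-- review-summary -->"


@dataclass
class PullRequest:
    """In-memory stand-in for a PR's comment thread (illustrative only)."""
    comments: list[str] = field(default_factory=list)


def upsert_summary(pr: PullRequest, findings: list[str]) -> int:
    """Create or update the single summary comment and return its index."""
    body = MARKER + "\n" + "\n".join(f"- {item}" for item in findings)
    for i, existing in enumerate(pr.comments):
        if existing.startswith(MARKER):
            pr.comments[i] = body  # edit in place: no new notification
            return i
    pr.comments.append(body)  # first run: post the comment once
    return len(pr.comments) - 1
```

Each push calls `upsert_summary` again. The thread therefore keeps one summary that always reflects the latest findings, and human comments are left untouched.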

When a CI check fails, Gitar analyzes the failure logs, generates validated fixes with full codebase context, and commits them automatically, often before developers notice the failure. Try Gitar’s 14-day free autofix trial to see the difference between suggestions and working solutions.
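Log analysis starts with deciding what kind of failure occurred. The following is a deliberately crude sketch, assuming hypothetical regex patterns for common toolchains. It checks build errors first because a broken build usually masks test and lint results. Gitar's own analysis, which the article describes as using full codebase context, is not shown here.

```python
import re

# Hypothetical patterns, checked in priority order; real CI logs vary widely.
CATEGORY_ORDER = [
    ("build", re.compile(r"error\[E\d+\]|undefined reference|cannot find symbol|SyntaxError")),
    ("test", re.compile(r"^FAILED |AssertionError|\b\d+ failed\b", re.MULTILINE)),
    ("lint", re.compile(r"\b(?:ruff|flake8|eslint)\b|\b[EFW]\d{3}\b")),
]


def classify_failure(log: str) -> str:
    """Return the first category whose pattern appears anywhere in the log."""
    for category, pattern in CATEGORY_ORDER:
        if pattern.search(log):
            return category
    return "unknown"
```

A real triage step would go further, for example by mapping each error back to the changed lines in the diff. The category alone is only enough to pick a fixing strategy.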

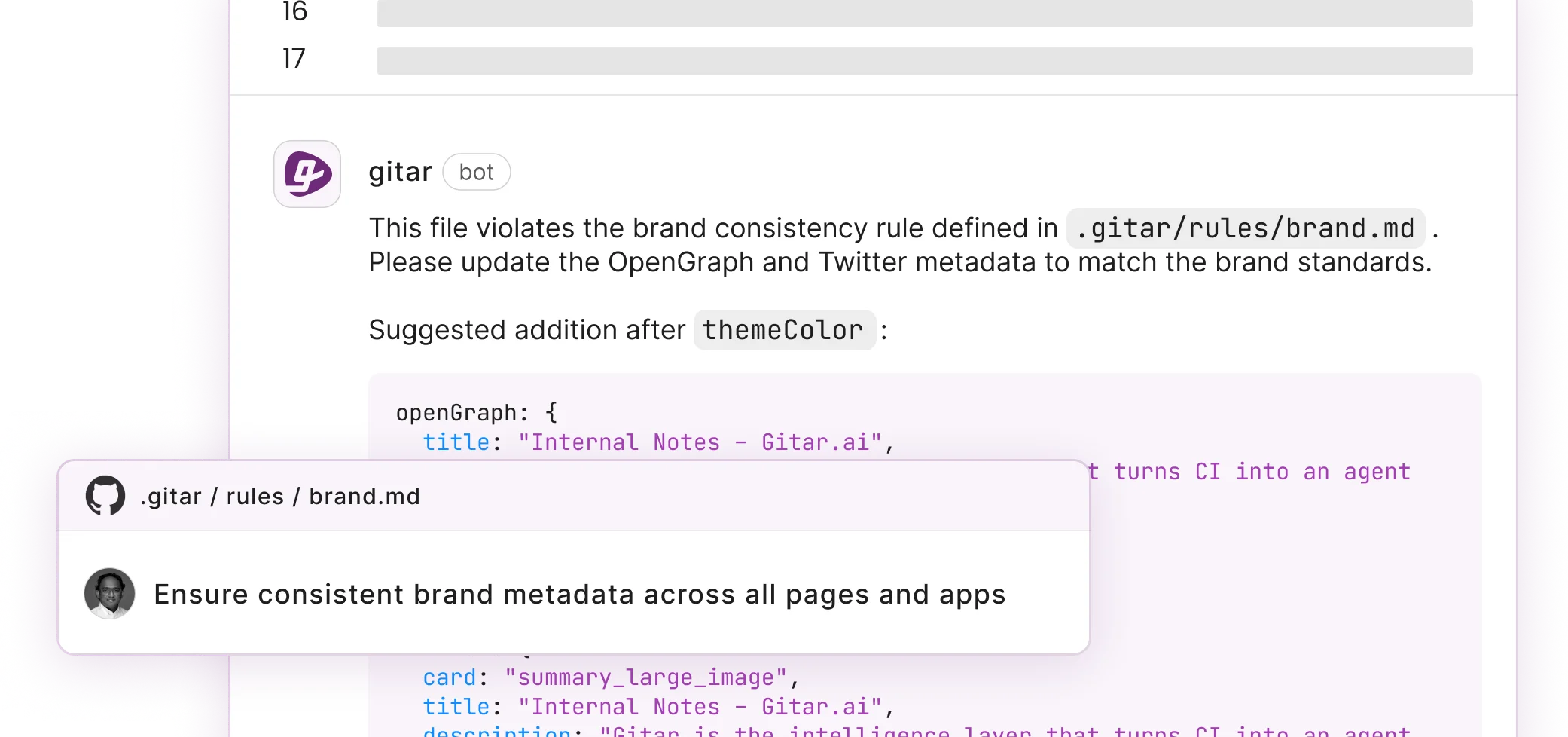

How Gitar Automates Enterprise Workflows With Natural Language Rules

Gitar’s platform extends beyond code review into full development intelligence. Natural language rules in .gitar/rules/*.md files enable workflow automation without YAML complexity. Teams define policies like “assign security team for authentication changes” or “add performance label for database modifications” using plain English.
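To show what the two example policies might reduce to in practice, here is a sketch that encodes them as path-glob rules. The glob patterns, the `security-team` reviewer name, and the `route` helper are hypothetical. This is not the format of `.gitar/rules/*.md` files or Gitar's rule engine.

```python
from fnmatch import fnmatch

# Hypothetical encoding of "assign security team for authentication changes"
# and "add performance label for database modifications".
RULES = [
    {"paths": ["*auth*", "*login*"], "reviewer": "security-team"},
    {"paths": ["*migrations/*", "*.sql"], "label": "performance"},
]


def route(changed_files: list[str]) -> tuple[set[str], set[str]]:
    """Return (reviewers, labels) triggered by the changed file paths."""
    reviewers: set[str] = set()
    labels: set[str] = set()
    for rule in RULES:
        if any(fnmatch(f, p) for f in changed_files for p in rule["paths"]):
            if "reviewer" in rule:
                reviewers.add(rule["reviewer"])
            if "label" in rule:
                labels.add(rule["label"])
    return reviewers, labels
```

Path globs are only a rough proxy. The advantage of a plain-English rule is that an AI engine can judge what counts as an "authentication change" from the code itself, not just from file names.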

The platform provides deep analytics on CI failure patterns, which helps platform teams separate systematic issues from one-off problems. Native integrations with GitHub, GitLab, CircleCI, Slack, and Jira keep context inside the tools teams already use. AI-assisted code reviews cut post-deployment bugs by ~25%, and Gitar’s validation approach confirms that fixes work before deployment.
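One simple way to separate systematic CI issues from one-off problems is to count how often the same failure signature recurs across recent runs. This is a sketch under assumptions: each failure is already normalized to a hypothetical `(job, error_signature)` pair, and three occurrences is an arbitrary threshold.

```python
from collections import Counter


def split_failures(failures, threshold=3):
    """Separate recurring failure signatures from one-off ones.

    failures: iterable of (job_name, error_signature) tuples from CI history.
    threshold: minimum occurrences to treat a signature as systematic (assumed).
    """
    counts = Counter(failures)
    systematic = {sig for sig, n in counts.items() if n >= threshold}
    one_off = {sig for sig, n in counts.items() if n < threshold}
    return systematic, one_off
```

The quality of this split depends on normalization. Stripping timestamps, paths, and line numbers from error messages lets repeated failures collapse into one signature.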

Enterprise customers like Pinterest run Gitar at 50M+ lines of code with thousands of daily PRs. This usage demonstrates production-ready scalability that free alternatives rarely match.

Core Gitar Capabilities: Autofix, Validation, And Noise Reduction

Gitar’s core differentiator is its ability to automatically resolve CI failures instead of only suggesting fixes:

- CI log analysis to understand root causes of build breaks, test failures, and lint errors

- Validated auto-commits that confirm fixes work in your specific environment

- Unrelated failure detection that separates infrastructure flakiness from code bugs

- Single dashboard comment that updates in place and removes notification noise

- Configurable automation levels that range from suggestion mode to full auto-commit
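A widely used heuristic for unrelated-failure detection, though not necessarily the one Gitar uses, is this: if the same test both passes and fails on the same commit, the code did not change between runs, so the failure is more likely flakiness than a bug. Here is a minimal sketch, assuming run results arrive as hypothetical `(commit_sha, test_name, passed)` tuples.

```python
from collections import defaultdict


def find_flaky(runs):
    """Return tests that both passed and failed on the same commit SHA.

    runs: iterable of (commit_sha, test_name, passed) tuples.
    """
    outcomes = defaultdict(set)
    for sha, test, passed in runs:
        outcomes[(sha, test)].add(passed)
    return {test for (sha, test), seen in outcomes.items() if seen == {True, False}}
```

A test that fails consistently on a commit, like `test_parse` in the check below, is treated as a likely real bug and stays eligible for a code fix.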

The ROI calculation is simple. A 20-developer team that spends 1 hour daily on CI and review issues loses about $1M annually in productivity. Gitar reduces this to about 15 minutes per developer per day. That cuts the cost to about $250K and eliminates the per-seat fees competitors charge.

| Metric (20-developer team) | Before Gitar | After Gitar |
|---|---|---|
| Time on CI/review issues | 1 hour/day/dev | ~15 min/day/dev |
| Annual productivity cost | $1M | $250K |
| Tool cost | $450-900/month | $0 |
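The table's productivity figures follow from straightforward arithmetic. The article does not state its working-day count or hourly rate, so this sketch assumes 250 working days a year and a fully loaded cost of $200 per developer-hour. Together, those two assumptions reproduce the $1M baseline. Adjust both for your own team.

```python
# Assumptions (not stated by Gitar): 250 working days per year and a fully
# loaded cost of $200 per developer-hour, which together yield the $1M figure.
WORKING_DAYS = 250
HOURLY_COST = 200


def annual_cost(developers: int, minutes_per_day: float) -> float:
    """Yearly cost of time spent on CI and review issues."""
    hours = developers * minutes_per_day / 60 * WORKING_DAYS
    return hours * HOURLY_COST


before = annual_cost(20, 60)  # 1 hour per developer per day
after = annual_cost(20, 15)   # about 15 minutes per developer per day
```

Under these assumptions, the gap between the two rows is about $750K a year for a 20-developer team.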

Gitar vs. CodeRabbit vs. Greptile: Concrete Feature Comparison

The competitive landscape shows a clear gap between suggestion engines and healing engines. Current AI code review tools focus on workflow automation and suggestions, and their CI failure resolution capabilities differ widely.

| Capability | CodeRabbit ($15-30/seat) | Greptile ($30/seat) | Gitar (Free) |
|---|---|---|---|
| PR Summaries | Yes (noisy) | Yes | Yes (single comment) |
| Inline Suggestions | Yes | Yes | Yes + Autofix |
| CI Auto-Fix/Validation | No | No | Yes (14-day free trial) |
| Enterprise Scale | GitHub-only | Limited | 50M+ lines, cross-platform |

Competitors charge premium prices for basic commentary, while Gitar delivers broader functionality at no cost. The free tier includes unlimited repositories, no seat limits, and comprehensive code review that matches or exceeds paid alternatives. Install Gitar now to automatically fix broken builds and ship higher-quality software faster.

Developer Productivity Metrics: Quantifying AI Code Review Gains

Teams should measure AI code review impact with objective metrics instead of subjective feedback. As AI adoption increases, the key metrics are pull request cycle time and throughput. Clear guidelines that balance human and AI review roles also help, as does capturing developer sentiment alongside the quantitative data.
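Pull request cycle time and throughput can be computed from open and merge timestamps alone. This is a minimal sketch, assuming each PR is a hypothetical `(opened_at, merged_at)` pair with `merged_at` set to `None` for unmerged PRs. It uses the median so that a few long-lived PRs do not dominate the result.

```python
from datetime import datetime
from statistics import median
from typing import Optional

PR = tuple[datetime, Optional[datetime]]  # (opened_at, merged_at)


def cycle_time_hours(prs: list[PR]) -> Optional[float]:
    """Median hours from PR opened to merged; unmerged PRs are skipped."""
    durations = [(merged - opened).total_seconds() / 3600
                 for opened, merged in prs if merged is not None]
    return median(durations) if durations else None


def weekly_throughput(prs: list[PR], weeks: float) -> float:
    """Merged PRs per week over the observation window."""
    return sum(1 for _, merged in prs if merged is not None) / weeks
```

Tracking both numbers together matters. A shorter cycle time with falling throughput can mean that only small PRs are getting merged.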

Teams report 15%+ velocity gains as they adopt AI tools across the software development lifecycle. However, Google’s 2024 DORA report found that a 25% increase in AI adoption was associated with faster code reviews but an estimated 7.2% decrease in delivery stability. This pattern reinforces the need for validation-focused tools like Gitar that confirm fixes before deployment.

Enterprise case studies show strong ROI from AI code review automation. Enterprise dev teams using Claude Code reported 30% faster pull request cycles. At the same time, agentic remediation frameworks improved successful patch rates from 67% to over 90%, saving about 20 engineering hours per week.

FAQ: Pricing, Trust, CI Coverage, Metrics, And DIY Alternatives

How is Gitar free for enterprise AI code review?

Gitar treats code review as table stakes: the entry point rather than the full product. Its business model centers on the platform beyond review, including enterprise features, advanced analytics, and custom workflows at scale.

The team believes review should be commoditized, with real value coming from development intelligence and automation. The free tier includes unlimited repositories, comprehensive code review, and no seat limits. Auto-fix features include a 14-day free trial that showcases healing engine capabilities.

Can we trust auto-commits in automated PR review?

Gitar offers fully configurable automation levels. Teams can begin in suggestion mode, where they approve every fix and build trust gradually. After confidence grows, they can enable auto-commit for specific failure types such as lint errors or test fixes.

The platform validates fixes against your full CI environment, including SDK versions, multi-dependency builds, and third-party scans. This approach ensures fixes work in production contexts, not just isolated sandboxes. You control the automation level based on your team’s risk tolerance and requirements.
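The combination of validation and configurable automation levels can be expressed as a small decision function. This sketch is hypothetical and does not use Gitar's configuration names. It assumes a `run_ci` callback that re-runs the checks with the candidate fix applied, and a set of failure types the team has opted into auto-committing.

```python
from enum import Enum
from typing import Callable


class Mode(Enum):
    SUGGEST = "suggest"   # propose the fix; a human applies it
    AUTO_COMMIT = "auto"  # commit automatically once validated


def handle_fix(failure_type: str, run_ci: Callable[[], bool],
               auto_types: set[str], mode: Mode) -> str:
    """Decide what to do with a candidate fix (hypothetical policy)."""
    if not run_ci():   # re-run checks with the fix applied
        return "discard"  # never surface a fix that fails validation
    if mode is Mode.AUTO_COMMIT and failure_type in auto_types:
        return "commit"
    return "suggest"
```

Keeping `auto_types` narrow, for example just `{"lint"}`, matches the gradual trust-building approach described above. Widening it later is a one-line change.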

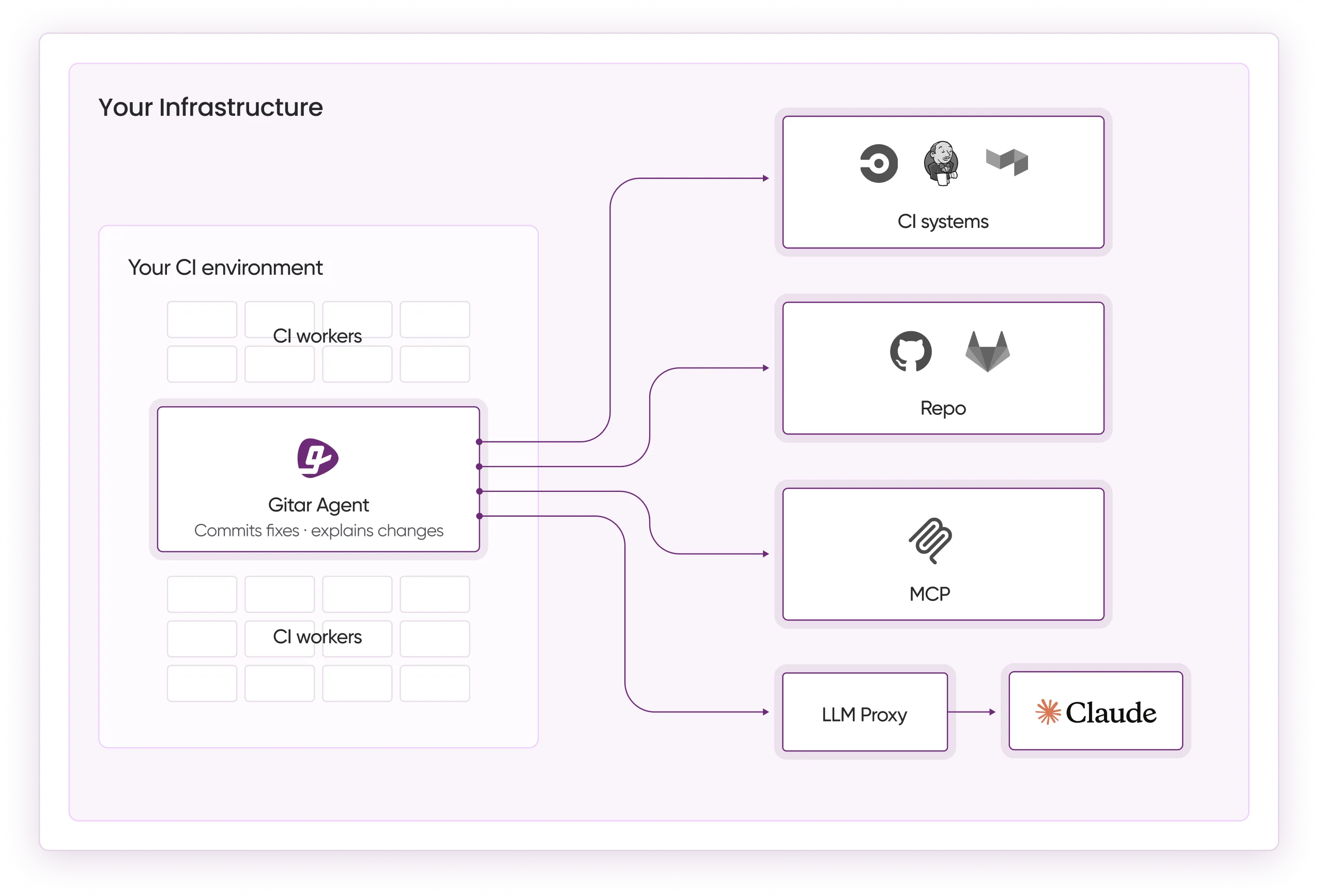

Does Gitar handle complex enterprise CI like CircleCI?

Gitar supports native integrations with GitHub Actions, GitLab CI, CircleCI, and Buildkite. The platform distinguishes infrastructure flakiness from code bugs, which helps teams avoid wasting time on unrelated failures. The enterprise tier runs the agent inside your own CI pipeline with access to your secrets and caches, which preserves maximum context and security. This architecture supports complex enterprise environments while maintaining a zero data retention policy.

How should teams measure AI code review impact?

Teams should focus on objective metrics instead of opinions. Key indicators include pull request cycle time, throughput stability, blocked PRs, review lag, and rework frequency. Leaders should monitor positive impacts such as faster reviews and also track potential downsides such as increased QA bottlenecks or larger change sets.

Baseline measurements before AI adoption provide a reference point, and teams can then track changes over time. Quantitative metrics combined with developer sentiment surveys create a complete view of productivity impact.
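Comparing against a baseline comes down to relative change per metric. The following is a minimal sketch, assuming both snapshots are plain dictionaries keyed by hypothetical metric names such as `cycle_time_h` and `rework_rate`.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from the pre-AI baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


def compare(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Percent change for every metric present in both snapshots."""
    return {name: round(percent_change(baseline[name], current[name]), 1)
            for name in baseline if name in current}
```

Read the signs per metric. A negative change is good for cycle time but a warning sign for throughput, while a rise in rework rate can offset faster reviews.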

What makes Gitar different from DIY LLM integrations?

Custom LLM integrations demand significant engineering effort to assemble CI context, validate fixes, and manage infrastructure. Gitar delivers an end-to-end solution with zero setup, full CI pipeline integration, and an architecture designed for development workflows.

The platform handles complex scenarios such as force pushes mid-run, concurrent operations, and wave-based execution that DIY solutions often miss. Teams gain enterprise-grade reliability without building and maintaining that infrastructure themselves.
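A force push in the middle of a run is a good example of an edge case that DIY bots often miss. A fix computed against the old branch head no longer applies once the head has moved. Here is a minimal guard sketch, assuming hypothetical `get_branch_head` and `push` callbacks supplied by the caller.

```python
from typing import Callable


def commit_fix(get_branch_head: Callable[[], str], start_sha: str,
               push: Callable[[], str]) -> str:
    """Push a fix only if the branch still points at the run's starting SHA."""
    if get_branch_head() != start_sha:
        # The branch moved (e.g. a force push); the fix targets stale code.
        return "skipped: branch moved"
    return push()
```

Checking and pushing are separate steps here, so a small race remains. In git, the push itself can be made conditional with `--force-with-lease=<refname>:<expect>`, which refuses to update the remote ref unless it still points at the expected commit.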

Conclusion: Replace AI Review Bottlenecks With Gitar’s Free Healing Engine

The post-AI coding bottleneck is real, measurable, and expensive. Many vendors charge premium prices for suggestion engines that leave the hardest work to developers, while Gitar takes a different path. The platform offers free code review that fixes problems, validates solutions, and scales to enterprise requirements.

The ROI comparison clearly favors Gitar. A 20-developer team saves about $750K annually in productivity costs ($1M down to about $250K) and also removes $450-900 in monthly tool expenses. Development workflows shift from reactive suggestions to proactive healing. Enterprise teams like Pinterest already trust Gitar with 50M+ lines of production code.

Install Gitar now to automatically fix broken builds and ship higher-quality software faster: https://gitar.ai/