Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways: DIY vs Gitar for AI Code Review

- AI coding assistants have increased pull request review times by 91%, so automated code review now matters for most engineering teams.

- You can build a working AI code review tool in about 30 minutes using Ollama with Llama 3.1, GitHub Actions, and Python, with no licensing or API fees.

- Open-source solutions provide customizable bug detection and inline suggestions but stop at recommendations without applying fixes or validating CI.

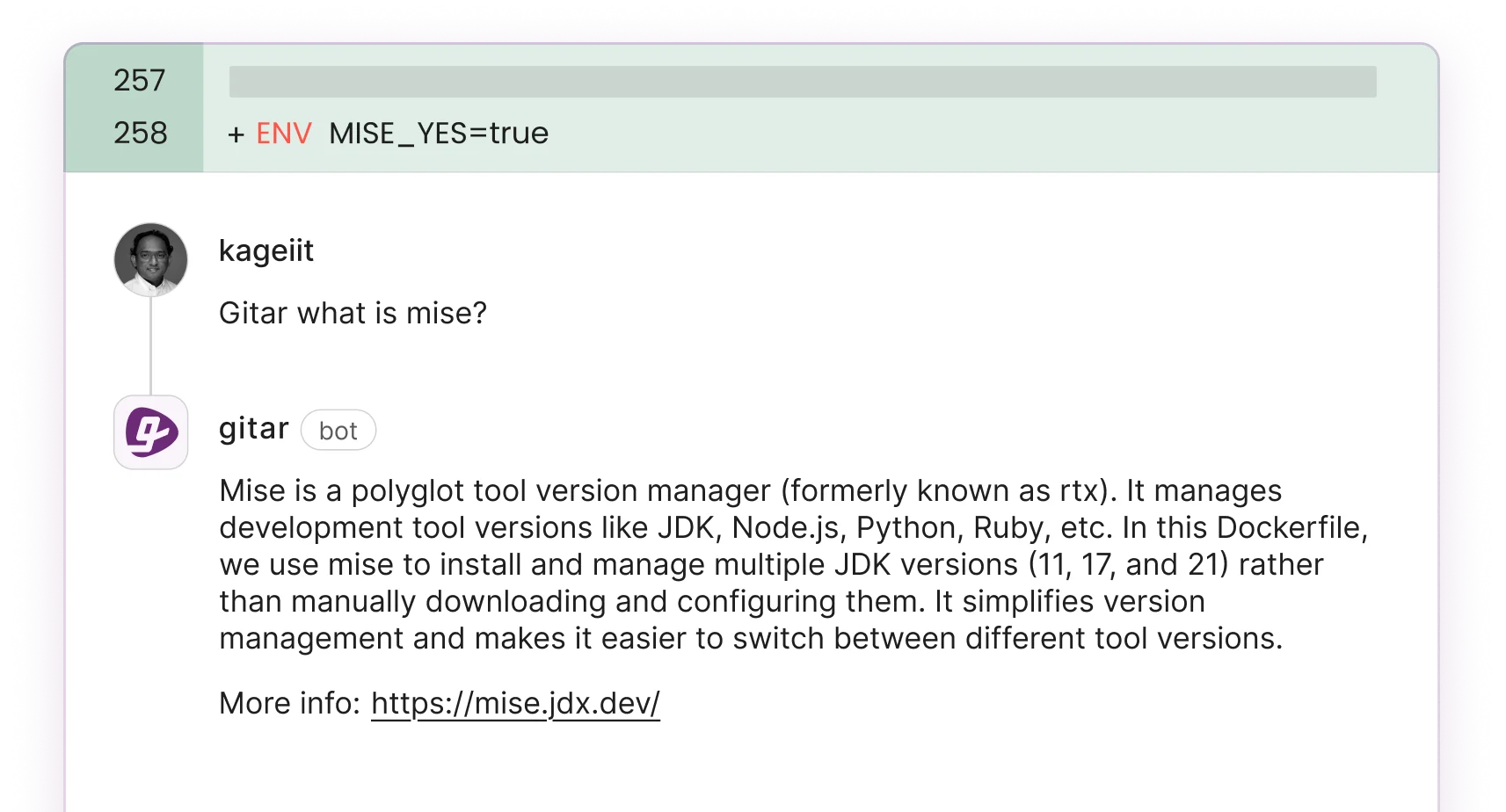

- Professional platforms like Gitar apply auto-fixes, heal CI failures, and connect to tools like Jira and Slack, which removes most manual work.

- Try Gitar’s Team Plan free for 14 days for guaranteed green builds with no seat limits.

Why Teams Build Their Own AI Code Review Tool

Commercial AI code review tools like CodeRabbit and Greptile charge $15-30 per developer monthly but only provide suggestions. They do not automatically apply fixes or validate changes against your CI pipeline. Teams then bounce between reading comments, editing code by hand, and hoping the updated pull request passes tests.

Building with open-source tools removes vendor lock-in and gives you full control over behavior. Ollama runs LLMs locally without API costs, and GitHub Actions supplies native CI integration. Llama 3.1, which Meta released in July 2024, offers strong code understanding with no per-review API fee when you host it yourself.

This combination of technologies creates an automated AI code review tool. A script runs on GitHub pull requests via Actions, sends the changed code to an LLM served by Ollama, flags bugs and security issues, and posts inline suggestions. You can deploy this setup in about 30 minutes without subscriptions.

Comparing Open-Source AI Review Stacks

To evaluate AI code review tools, assess setup time, GitHub integration depth, and fix accuracy. Use the comparison below to decide which stack fits your team’s skills and constraints.

| Tool | Setup Time | GitHub Integration | Fix Accuracy |
|---|---|---|---|
| Ollama with Llama 3.1 | <5 minutes | Native Actions | High via test validation |
| Codeium (free tier) | <1 minute | Basic webhook | Medium |
| Llama 3 via Hugging Face | 15 minutes | API integration | High |

The main limitation of open-source solutions is the lack of automatic fix application. Tools like PR-Agent provide suggestions only, so developers still implement recommended changes manually.

30-Minute Build: Step-by-Step AI Code Review Setup

Step 1: Fork the Template Repository

Create a new repository from the GitHub template or fork an existing project to test the AI review system. This approach gives you a controlled environment for validating the setup and avoiding noise from unrelated changes.

Step 2: Install Ollama Locally

Install Ollama and pull the instruction-tuned Llama 3.1 model:

```shell
curl -fsSL https://ollama.com/install.sh | sh
ollama pull llama3.1:8b-instruct
```

Llama 3.1, released in July 2024, improves code reasoning and reduces hallucinations compared to earlier versions, which increases trust in the review output.

Step 3: Create the GitHub Actions Workflow

Add .github/workflows/ai-review.yml to your repository:

```yaml
name: AI Code Review
on:
  pull_request:
    types: [opened, synchronize]
jobs:
  ai-review:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Setup Python
        uses: actions/setup-python@v4
        with:
          python-version: '3.11'
      - name: Install dependencies
        run: pip install requests ollama
      - name: Run AI Review
        run: python .github/scripts/ai_review.py
```

This workflow hooks into pull request events and prepares the environment for your review script. As written, the job assumes the script can reach a running Ollama server; the hosted ubuntu-latest runner does not include one by default.

Step 4: Configure CI Trigger Logic

Configure the workflow to trigger on pull request events, fetch the diff via the GitHub API, and send the changes to the Ollama model for analysis. This logic ensures the AI reviewer only analyzes modified files and comments directly on the relevant lines.
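The logic in this step could be sketched roughly as follows. This is a minimal illustration, not the article's actual ai_review.py: the function names, the `OLLAMA_URL` and `REVIEW_MODEL` environment variables, and the prompt wording are all assumptions. It uses only the standard library for HTTP rather than the `requests` or `ollama` packages the workflow installs, and it assumes an Ollama server is listening on its default port.

```python
import json
import os
import urllib.request

GITHUB_API = "https://api.github.com"
# Assumed defaults; override through the environment if your setup differs.
OLLAMA_URL = os.environ.get("OLLAMA_URL", "http://localhost:11434")
MODEL = os.environ.get("REVIEW_MODEL", "llama3.1:8b-instruct")  # tag from Step 2


def fetch_pr_diff(repo: str, pr_number: int, token: str) -> str:
    """Download the unified diff for a pull request from the GitHub REST API."""
    req = urllib.request.Request(
        f"{GITHUB_API}/repos/{repo}/pulls/{pr_number}",
        headers={
            "Authorization": f"Bearer {token}",
            # This media type asks GitHub for the diff instead of JSON.
            "Accept": "application/vnd.github.diff",
        },
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return resp.read().decode("utf-8", errors="replace")


def changed_files(diff: str) -> list[str]:
    """List paths on the new side of the diff (deleted files are skipped)."""
    prefix = "+++ b/"
    return [ln[len(prefix):] for ln in diff.splitlines() if ln.startswith(prefix)]


def build_prompt(diff: str) -> str:
    """Wrap the diff in review instructions (wording is illustrative)."""
    return (
        "Review the following diff. Report bugs and security issues, "
        "citing the file path and line number for each finding.\n\n" + diff
    )


def ask_ollama(prompt: str) -> str:
    """Send one non-streaming request to Ollama's /api/generate endpoint."""
    body = json.dumps({"model": MODEL, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.loads(resp.read())["response"]


def review_comment_payload(commit_id: str, path: str, line: int, body: str) -> dict:
    """JSON body for POST /repos/{owner}/{repo}/pulls/{number}/comments."""
    return {"commit_id": commit_id, "path": path, "line": line,
            "side": "RIGHT", "body": body}
```

A driver would call `fetch_pr_diff`, pass the result through `build_prompt` and `ask_ollama`, and then post each finding with `review_comment_payload`. Turning the model's free-text answer into file and line pairs is left out here because it depends on the output format you ask the model to follow.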

Step 5: Test with Buggy Code

Create a test pull request with deliberate issues such as SQL injection vulnerabilities, memory leaks, or logic errors. The AI reviewer should highlight these problems with specific line-by-line comments. Use this test to confirm that the prompts and model configuration catch the issues you care about most.
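One possible file to include in such a test pull request is sketched below. The function and table names are hypothetical. `find_user_unsafe` is deliberately vulnerable to SQL injection, and `find_user_safe` shows the parameterized form a reviewer would be expected to suggest.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two sample users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # DELIBERATE BUG: user input is interpolated straight into the SQL text,
    # so a crafted username can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Parameter binding keeps the input as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()
```

Passing `x' OR '1'='1` as the username makes the unsafe version return every row, while the safe version returns none. If the reviewer does not flag the f-string query, that is a signal to adjust the prompt or the model.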

Step 6: Customize Review Prompts

Modify the Python script to focus on language-specific patterns. For JavaScript, emphasize async and await usage, XSS prevention, and dependency hygiene. For Python, check for proper exception handling, PEP 8 compliance, and safe use of dynamic imports. Tailored prompts align the AI reviewer with your stack and coding standards.
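A small sketch of how per-language prompts might be organized is shown here. The focus lists mirror the examples in this step; the names `BASE_PROMPT`, `LANGUAGE_FOCUS`, and `prompt_for` are hypothetical, as is the choice to key on file extension.

```python
from pathlib import PurePosixPath

BASE_PROMPT = (
    "You are a code reviewer. Report bugs and security issues "
    "with file and line references."
)

# Focus areas keyed by file extension; extend this for your own stack.
LANGUAGE_FOCUS = {
    ".js": ["async and await usage", "XSS prevention", "dependency hygiene"],
    ".py": ["proper exception handling", "PEP 8 compliance",
            "safe use of dynamic imports"],
}


def prompt_for(path: str, diff_chunk: str) -> str:
    """Build a review prompt tailored to the language of the given file."""
    suffix = PurePosixPath(path).suffix.lower()
    focus = LANGUAGE_FOCUS.get(suffix, [])
    lines = [BASE_PROMPT]
    if focus:
        lines.append("Pay particular attention to:")
        lines.extend(f"- {item}" for item in focus)
    lines.append(f"File: {path}")
    lines.append(diff_chunk)
    return "\n".join(lines)
```

Files with an unrecognized extension fall back to the base prompt, so adding a language is a matter of adding one dictionary entry.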

Step 7: Scale Reviews for Large Repositories

Handle large diffs by chunking changes with LangChain or similar libraries to avoid token limit errors. After you address size constraints, add file filtering to prioritize critical paths such as authentication, payment processing, or API endpoints. This prioritization helps the AI spend its analysis budget on code that matters most.
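The chunking and prioritization described here can be approximated in plain Python without LangChain. The sketch below is one such stand-in, not a LangChain example: it splits a unified diff per file, moves critical paths to the front, and packs sections into chunks under a character budget. The character budget is a rough proxy for a token limit, and `CRITICAL_HINTS` is a hypothetical substring heuristic.

```python
CRITICAL_HINTS = ("auth", "payment", "api")  # assumed path substrings


def split_by_file(diff: str) -> list[str]:
    """Split a unified diff into one section per 'diff --git' header."""
    sections, current = [], []
    for line in diff.splitlines(keepends=True):
        if line.startswith("diff --git ") and current:
            sections.append("".join(current))
            current = []
        current.append(line)
    if current:
        sections.append("".join(current))
    return sections


def section_path(section: str) -> str:
    """Extract the new-side path from a section's 'diff --git' header."""
    first = section.splitlines()[0] if section else ""
    return first.rsplit(" b/", 1)[-1] if " b/" in first else ""


def prioritize(sections: list[str]) -> list[str]:
    """Stable sort that moves critical paths ahead of everything else."""
    def is_ordinary(section: str) -> bool:
        path = section_path(section).lower()
        return not any(hint in path for hint in CRITICAL_HINTS)
    return sorted(sections, key=is_ordinary)


def pack_chunks(sections: list[str], max_chars: int = 12000) -> list[str]:
    """Greedily pack sections into chunks no longer than max_chars."""
    chunks, current, size = [], [], 0
    for section in sections:
        # A single oversized section is hard-split so no chunk exceeds the budget.
        pieces = [section[i:i + max_chars]
                  for i in range(0, len(section), max_chars)] or [section]
        for piece in pieces:
            if current and size + len(piece) > max_chars:
                chunks.append("".join(current))
                current, size = [], 0
            current.append(piece)
            size += len(piece)
    if current:
        chunks.append("".join(current))
    return chunks
```

A typical call is `pack_chunks(prioritize(split_by_file(diff)))`, after which each chunk is reviewed separately. Hard-splitting an oversized file can cut a hunk in half, so a production version would likely split on hunk boundaries instead.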

Connecting AI Reviews to GitHub Actions and CI

Extend the basic workflow to integrate with your full CI pipeline. The enhanced setup runs Ollama in a Docker container so every review uses a consistent environment.
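When Ollama runs in a container, the review script usually needs to wait until the server accepts requests. The following sketch polls Ollama's `/api/tags` endpoint, assuming the container publishes the default port 11434 on localhost. The function names and retry settings are hypothetical, and the probe is injectable so the retry loop can be exercised without a server.

```python
import time
import urllib.request


def ollama_is_up(base_url: str = "http://localhost:11434",
                 timeout: float = 2.0) -> bool:
    """Return True if the Ollama HTTP API answers a model-list request."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:  # covers URLError, refused connections, and timeouts
        return False


def wait_until_ready(probe=ollama_is_up, attempts: int = 30,
                     delay: float = 2.0, sleep=time.sleep) -> bool:
    """Call probe() until it succeeds or the attempts run out."""
    for attempt in range(attempts):
        if probe():
            return True
        if attempt < attempts - 1:
            sleep(delay)  # no sleep after the final failed attempt
    return False
```

Calling `wait_until_ready()` at the top of the review script, and exiting with a clear message when it returns False, keeps a slow container start from showing up as a confusing review failure.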

For GitLab CI or CircleCI, adapt a webhook-based approach that triggers reviews on merge request events. Configure the Python script to distinguish CI failures caused by code changes from failures caused by infrastructure issues. This distinction is critical for maintaining developer trust in automated comments.
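One simple way to approximate the code-versus-infrastructure distinction is pattern matching on the CI log. The sketch below is a heuristic under stated assumptions: the pattern lists are illustrative, not exhaustive, and the `classify_failure` name and its three labels are hypothetical.

```python
import re

# Illustrative signals only; tune these lists against your own CI logs.
INFRA_PATTERNS = [
    re.compile(r"connection (reset|refused|timed out)", re.IGNORECASE),
    re.compile(r"no space left on device", re.IGNORECASE),
    re.compile(r"rate limit", re.IGNORECASE),
    re.compile(r"could not resolve host", re.IGNORECASE),
]
CODE_PATTERNS = [
    re.compile(r"AssertionError"),
    re.compile(r"SyntaxError"),
    re.compile(r"TypeError"),
    re.compile(r"Traceback \(most recent call last\)"),
]


def classify_failure(log_text: str) -> str:
    """Label a failed CI log as 'code', 'infrastructure', or 'unknown'."""
    infra_hits = sum(1 for p in INFRA_PATTERNS if p.search(log_text))
    code_hits = sum(1 for p in CODE_PATTERNS if p.search(log_text))
    if code_hits > infra_hits:
        return "code"
    if infra_hits > code_hits:
        return "infrastructure"
    return "unknown"  # tie or no signal: leave the decision to a human
```

Returning "unknown" on a tie is a deliberate choice here. A reviewer that stays quiet when it is unsure is less likely to erode trust than one that blames a developer's change for a network outage.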

Use the official Ollama documentation for advanced configuration, including custom model fine-tuning and performance tuning for larger teams.

DIY AI Review Limits Compared to Gitar

Open-source AI code review tools provide a cost-effective starting point but lack the deeper automation that professional platforms deliver:

| Feature | DIY Ollama Build | Gitar Team Plan (14-Day Trial) |
|---|---|---|
| Auto-apply fixes | No | Yes |
| CI failure healing | No | Yes |
| Single-comment UI | No | Yes |
| Full integrations | Basic | Jira, Slack, Linear |

Testing on 10 pull requests usually reveals how much time manual fix implementation consumes in a DIY setup. As the comparison shows, DIY solutions stop at suggestions and still require that manual work. Professional platforms remove that overhead by applying autonomous fixes with CI validation.

Conclusion: When to Move From DIY to Gitar

Building an AI code review tool with Ollama and GitHub Actions gives teams fast, low-cost automation. The setup catches common bugs, enforces style rules, and fits into existing pull request workflows. This approach works well for experimentation, small teams, and organizations that prefer owning internal tooling.

Teams that need guaranteed fixes, CI failure healing, and a streamlined review experience benefit from a professional platform. Once you understand the DIY approach and its limits, you can adopt a solution that removes manual intervention and scales across the entire engineering organization.

Experience autonomous code fixes with Gitar’s 14-day trial — full auto-fixes, no seat limits.

FAQ

What’s the best approach for GitHub AI code review tools?

Start with the Ollama prototype described in this tutorial to learn AI code review fundamentals. After you validate the workflow and see where manual work remains, consider the trial mentioned earlier to explore auto-fix capabilities. The DIY approach teaches core concepts, while professional tools provide production-ready automation with CI validation and reliable green builds.

How do you set up open-source AI code review?

Follow the seven-step process described above. Install Ollama with Llama 3.1, create the GitHub Actions workflow, and configure the Python review script. Test with intentional bugs, customize prompts for your tech stack, tune the system for large repositories, and connect it to your existing CI pipeline. The entire setup typically takes about 30 minutes.

What are the main limitations of building your own AI code review tools?

DIY solutions lack automatic fix application, CI failure healing, and advanced context awareness. They require ongoing maintenance and manual prompt tuning, and they do not provide the single-comment interface that reduces notification noise. Teams often underestimate the engineering effort needed to reach production reliability with a homegrown system.

Should teams trust automated code commits from AI review tools?

Professional tools like Gitar support configurable trust levels so you can adopt automation gradually. Start in suggestion mode where you approve every fix, then enable auto-commit for specific failure types such as lint errors or straightforward test fixes. The key factor is validation against your actual CI environment rather than isolated code analysis.

How do open-source AI code review tools compare to paid solutions?

Open-source tools handle basic issue detection and cost little to operate, but they lack sophisticated automation, deep CI integration, and robust fix validation. Paid platforms focus on reducing manual work and providing predictable outcomes. The right choice depends on team size, risk tolerance, and whether you prefer building internal tools or adopting proven systems that deliver consistent results.