Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI code generation speeds MVP development 3-5x but also increases PR review time because of bugs and CI failures.
- Gemini 2.5 Flash-Lite leads with generous daily quotas, strong HumanEval performance, and no credit card requirement, which makes it a fit for production MVPs.
- DeepSeek V4 keeps costs low, at roughly $2-5 per month for moderate usage, while still delivering strong SWE-bench performance.
- Mistral Codestral and GitHub Copilot’s free tier work best in focused scenarios: self-hosting and low-friction onboarding, respectively.
- Pair any API with Gitar’s healing engine to automate CI fixes and keep builds consistently green.

How To Rank Free AI Code Generation APIs for Production (2026)

Rank free AI code generation APIs with a weighted score: free quota available without a credit card (40%), benchmarked code quality (30%), production latency under 500ms (20%), and common failure modes (10%). Use benchmarks such as SWE-bench Verified and HumanEval to compare accuracy across models. Pull quota and pricing data from vendor documentation such as Google’s Gemini quota pages, then cross-check it against developer forums and your own tests with real production prompts. Confirm that generated code works with Gitar’s auto-fix capabilities, which pair with any API so CI failures from your chosen generation provider get resolved automatically. Combine these factors, along with integration effort, into a single production viability score.
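As a minimal sketch of that rubric, the Python below turns per-criterion scores into one weighted number. The 0-to-1 normalization, the linear latency mapping against the 500ms budget, and the example candidates (`api-a`, `api-b`) are assumptions for illustration, not measured data; substitute scores from your own benchmarks and load tests.

```python
from dataclasses import dataclass

# Weights from the ranking rubric above; they sum to 1.0.
WEIGHTS = {
    "free_quota": 0.40,
    "code_quality": 0.30,
    "latency": 0.20,
    "failure_modes": 0.10,
}


@dataclass
class ApiScores:
    """Per-criterion scores, each normalized to 0.0-1.0 (higher is better)."""
    name: str
    free_quota: float
    code_quality: float
    latency: float
    failure_modes: float


def latency_score(p95_ms: float, budget_ms: float = 500.0) -> float:
    """Assumed mapping: 1.0 at 0 ms, falling linearly to 0.0 at the latency budget."""
    return max(0.0, 1.0 - p95_ms / budget_ms)


def viability_score(s: ApiScores) -> float:
    """Weighted sum of the normalized criterion scores, in the range 0.0-1.0."""
    total = 0.0
    for criterion, weight in WEIGHTS.items():
        value = getattr(s, criterion)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{s.name}.{criterion} must be in [0, 1], got {value}")
        total += weight * value
    return total


# Hypothetical candidates; replace the numbers with your own measurements.
candidates = [
    ApiScores("api-a", free_quota=0.9, code_quality=0.8,
              latency=latency_score(300), failure_modes=0.6),
    ApiScores("api-b", free_quota=0.5, code_quality=0.9,
              latency=latency_score(450), failure_modes=0.8),
]
for s in sorted(candidates, key=viability_score, reverse=True):
    print(f"{s.name}: {viability_score(s):.2f}")
```

Keeping the weights in one table makes it easy to re-rank when priorities change, for example raising the latency weight for an interactive product.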

Free AI Code Generation APIs Compared for Production (2026) + Gitar For AI Code Review

This comparison highlights how daily quotas, benchmark quality, and production risks differ across providers, and shows where Gitar’s healing engine removes CI pain that other APIs leave behind.

| API | Free Quota | Code Quality | Production Pitfalls |
|---|---|---|---|
| Gitar (AI code review and healing engine) | Unlimited during 14-day trial | Auto-fixes CI failures | None; keeps builds green |
| Gemini 2.5 Flash-Lite | 1,000 requests/day | 85% HumanEval | 15 RPM limit; suited to prototyping |
| DeepSeek V4 | API credits vary | ~80% SWE-bench Verified (claimed) | $2-5/month for moderate use |
| Mistral Codestral | Limited free tier | High HumanEval accuracy | Rate limits under load |
| Amazon Q Developer | Free tier with monthly limits; Pro $19/user/month | 55% SWE-bench Verified, 85% HumanEval | Feature limits, AWS ecosystem lock-in |

1. Gemini 2.5 Flash-Lite: Generous Quotas for MVP Development

Google’s Gemini 2.5 Flash-Lite offers 1,000 requests per day, 15 requests per minute, and 250,000 tokens per minute on its free tier, which makes it one of the most generous options for early production apps. The model supports a 1-million-token context window, enough to hold large codebases or several repositories for analysis. It scores 85% on HumanEval, with strong results on function generation and API integration tasks. For production use, test quota durability under realistic load, confirm latency stays under 500ms, and add retry logic for rate limits. Pairing Gemini with Gitar’s CI auto-fix resolves issues in generated code without manual patching, a setup that suits indie SaaS teams shipping MVPs without a credit card.
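The rate-limit advice above can be sketched as a client-side limiter plus retries with exponential backoff. This is a minimal Python sketch, not Gemini SDK code: `RateLimitError` is a placeholder for whatever your client raises on an HTTP 429, `fn` stands for your actual request, and the 15-per-minute default simply mirrors the free-tier RPM quoted above. The clock and sleep functions are injectable so the logic can be tested without waiting.

```python
import random
import time
from collections import deque
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Placeholder for whatever your client raises on an HTTP 429 response."""


class SlidingWindowLimiter:
    """Blocks until a request fits within max_requests per window_s seconds."""

    def __init__(self, max_requests: int = 15, window_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep):
        self.max_requests = max_requests
        self.window_s = window_s
        self.clock = clock
        self.sleep = sleep
        self.sent = deque()  # timestamps of requests inside the current window

    def acquire(self) -> None:
        while True:
            now = self.clock()
            # Forget requests that have aged out of the window.
            while self.sent and now - self.sent[0] >= self.window_s:
                self.sent.popleft()
            if len(self.sent) < self.max_requests:
                self.sent.append(now)
                return
            # Sleep until the oldest request leaves the window, then re-check.
            self.sleep(self.window_s - (now - self.sent[0]))


def call_with_retry(fn: Callable[[], T], limiter: SlidingWindowLimiter,
                    max_attempts: int = 5, base_delay_s: float = 1.0,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Run fn under the limiter; retry rate-limit errors with jittered exponential backoff."""
    for attempt in range(max_attempts):
        limiter.acquire()
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # "Full jitter": wait a random time between 0 and base * 2**attempt seconds.
            sleep(random.uniform(0, base_delay_s * 2 ** attempt))
    raise ValueError("max_attempts must be at least 1")
```

In practice you would call something like `call_with_retry(lambda: send_prompt(prompt), limiter)`, where `send_prompt` is a hypothetical wrapper around your Gemini client. Throttling locally keeps most requests under the RPM cap, and the jittered backoff handles the 429s that still slip through, for example when several workers share one key.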

2. DeepSeek V4: Cost Control for Moderate Usage

DeepSeek V4 claims ~80% on SWE-bench Verified and 95.5% on HumanEval, priced at $0.14 per million input tokens and $0.28 per million output tokens, so typical moderate usage lands around $2-5 per month. The model handles complex debugging and multi-file reasoning particularly well. For production, verify the claimed benchmarks on your own tasks, track token usage patterns, and test on your hardest real-world prompts. Combine DeepSeek with OpenCode CLI to capture most premium-model capabilities at a fraction of the cost. Integrate DeepSeek output with Gitar so CI validation and automatic fixes keep your pipeline stable.
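The $2-5 estimate is straightforward per-token arithmetic. The sketch below assumes the prices quoted above and a steady workload; the request volume and token counts are hypothetical, and it does not model any discounts or surcharges the vendor may apply.

```python
# Per-million-token prices quoted above for DeepSeek V4, in USD.
# Assumed constants: check the vendor's current pricing page before relying on them.
INPUT_PRICE_PER_M = 0.14
OUTPUT_PRICE_PER_M = 0.28


def monthly_cost_usd(requests_per_day: int, input_tokens_per_request: int,
                     output_tokens_per_request: int, days: int = 30) -> float:
    """Estimate monthly spend for a steady, uniform workload."""
    input_tokens = requests_per_day * input_tokens_per_request * days
    output_tokens = requests_per_day * output_tokens_per_request * days
    return (input_tokens / 1_000_000 * INPUT_PRICE_PER_M
            + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M)


# Hypothetical workload: 200 requests/day, ~2,000 input and ~500 output tokens each.
print(f"${monthly_cost_usd(200, 2_000, 500):.2f}")  # prints $2.52
```

Here 200 requests a day for 30 days gives 12M input tokens ($1.68) and 3M output tokens ($0.84), about $2.52 in total. Feeding in token counts from your own logs is the practical way to track usage patterns before costs drift.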

3. Mistral Codestral: Self-Hosted Control With Open Weights

While DeepSeek focuses on cost at scale, Mistral Codestral focuses on control through open weights and self-hosting. Mistral’s 22B-parameter Codestral model, trained on 80+ programming languages, reaches high HumanEval accuracy and offers strong fill-in-the-middle (FIM) completion for refactoring. Open weights allow self-hosting and fine-tuning for your domain or stack. Production teams avoid external API dependencies, customize behavior for proprietary codebases, and integrate with tools like Continue.dev and Tabnine. The tradeoffs include running and scaling your own inference infrastructure, plus a smaller context window than many cloud APIs. This option fits teams with ML infrastructure that prioritize code privacy and model customization.
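Fill-in-the-middle means the model sees the code both before and after a gap and generates only what belongs in between. A minimal Python sketch of preparing such a request follows; the `prompt`/`suffix`/`max_tokens` fields and the `codestral-latest` model name are modeled on Mistral’s hosted FIM API and are assumptions here, since a self-hosted serving stack may expect a different shape. Sending the request is left out.

```python
import json


def build_fim_request(source: str, cursor: int,
                      model: str = "codestral-latest", max_tokens: int = 128) -> str:
    """Build a JSON body asking the model to fill the gap at `cursor`.

    Field names follow the shape of Mistral's hosted FIM endpoint (an assumption
    here); a self-hosted server may expect a different request format.
    """
    if not 0 <= cursor <= len(source):
        raise ValueError(f"cursor {cursor} is outside 0..{len(source)}")
    return json.dumps({
        "model": model,
        "prompt": source[:cursor],   # code before the gap
        "suffix": source[cursor:],   # code after the gap
        "max_tokens": max_tokens,
    })


def apply_fim(source: str, cursor: int, middle: str) -> str:
    """Splice the model's generated middle back between prefix and suffix."""
    return source[:cursor] + middle + source[cursor:]


src = "def add(a, b):\n    \n"
cursor = src.index("    ") + 4  # just after the indentation on line 2
payload = build_fim_request(src, cursor)
# ...send `payload` to your Codestral deployment; suppose it returns "return a + b".
print(apply_fim(src, cursor, "return a + b"))
```

Because the suffix is part of the request, the model can match code that follows the gap (closing brackets, later uses of a variable), which is what makes FIM useful for refactoring inside existing files.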

4. Amazon Q Developer: Deep AWS Workflow Coverage

Amazon Q Developer Pro ($19 per user per month) delivers extensive chat and code completion tuned for AWS-focused development. It specializes in AWS-optimized code, infrastructure-as-code templates, and built-in vulnerability scanning. Benchmarks show roughly 55% on SWE-bench Verified and 85% on HumanEval, with strengths around Lambda, CDK, CloudFormation, and AWS SDK workflows. Production teams benefit from native AWS integrations and included security scanning, although agent and scan limits still apply. The main drawbacks are AWS lock-in, weaker performance on general coding tasks, and the paid subscription needed for Pro-tier limits. Combine Q Developer with Gitar’s healing engine to ship higher-quality software faster while keeping CI stable.

5. GitHub Copilot Free Tier: Fast Onboarding for New Users

GitHub Copilot’s free tier offers 2,000 code completions and 50 chat messages per month with zero configuration across VS Code, JetBrains, Neovim, and CLI environments. It stands out for the lowest barrier to entry, which suits casual and beginner developers. Copilot behaves like ultra-fast autocomplete tuned to your personal coding style. For production, the monthly quotas rarely cover active development, chat capacity remains limited, and advanced debugging support is minimal. Treat Copilot as a learning and prototyping tool rather than a primary engine for production code.

6. Cloudflare Workers AI: Edge-Optimized Code Generation

Cloudflare Workers AI moves code generation from local IDE helpers to globally distributed edge execution. It provides free-tier access to code generation models integrated with its edge network, which keeps response latency low for users worldwide. The platform supports multiple open-source models, including Llama variants, and runs them on serverless infrastructure. Production teams gain global edge deployment, tight integration with Cloudflare’s developer platform, and pay-per-request scaling. Tradeoffs include a smaller model catalog than dedicated AI providers, dependency on the Cloudflare ecosystem, and quota limits on the free tier. This option fits teams already invested in Cloudflare that want edge-optimized generation.

7. Hugging Face Inference API: Broad Open Source Coverage

Hugging Face Inference API gives teams a gateway to many open-source code generation models. Options include StarCoder2-15B, trained on 600+ programming languages, and multiple Code Llama variants. The platform encourages experimentation with new models and supports custom fine-tuning. Production benefits include access to the latest open-source work, community-driven improvements, and reduced vendor lock-in. Constraints include rate limits on the free tier, variable model availability, and the need for strong ML and DevOps skills to tune performance. This route works best for research teams and developers who want flexibility across model architectures.

Production Checklist: Avoid Traps

Start by load-testing API quotas under realistic usage patterns before any production rollout, so you understand how quickly requests burn down. Use that baseline to decide whether free tiers can handle your expected traffic or only cover early testing. Next, add CI validation for all generated code to catch logic errors, which are reported to be about 75% more common in AI-generated code than in human-written code.

These CI checks also let you catch hallucinations and incorrect implementations through automated tests. As validation data accumulates, plan your scaling path for the moment free quotas fall short of real demand. Gemini CLI with an unpaid API key offers only 250 daily requests to the Flash model, while signing in with a Google account raises that allowance significantly, so always confirm how your authentication method affects quotas. Avoid credit card surprises by reading quota enforcement and overage policies carefully, then combine Gemini’s generous quotas with Gitar’s automatic fixing capabilities for a safer production path.
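To put numbers on the load-testing and scaling advice in this checklist, a small helper can compare measured traffic with a tier’s limits. This is a sketch: the traffic figures are hypothetical load-test results, and it assumes a simple daily request cap plus a per-minute cap, which may not match how every provider counts usage (some also meter tokens).

```python
MINUTES_PER_DAY = 24 * 60


def quota_report(daily_quota: int, rpm_limit: int,
                 avg_rpm: float, peak_rpm: float) -> dict:
    """Summarize whether a free tier survives a measured traffic profile."""
    hours_to_exhaust = (daily_quota / avg_rpm / 60) if avg_rpm > 0 else float("inf")
    return {
        # How long sustained average traffic lasts before the daily quota runs out.
        "hours_to_exhaust": hours_to_exhaust,
        # Highest average rate that still stretches the quota across 24 hours.
        "max_sustainable_rpm": daily_quota / MINUTES_PER_DAY,
        "covers_full_day": avg_rpm * MINUTES_PER_DAY <= daily_quota,
        # Bursts above the per-minute cap will see rate-limit errors.
        "peak_exceeds_rpm": peak_rpm > rpm_limit,
    }


# Hypothetical traffic measured in a load test, against the Gemini
# 2.5 Flash-Lite free-tier limits quoted earlier (1,000/day, 15 RPM).
report = quota_report(daily_quota=1_000, rpm_limit=15, avg_rpm=2, peak_rpm=20)
for key, value in report.items():
    print(f"{key}: {value:.2f}" if isinstance(value, float) else f"{key}: {value}")
```

With those inputs the quota lasts about 8.3 hours, so a sustained 2 requests per minute exhausts the free tier well before the day ends; staying under roughly 0.69 requests per minute would stretch it across 24 hours. That gap is the signal to plan the move to a paid tier.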

Scale Free Generation to Production with Gitar’s Healing Engine

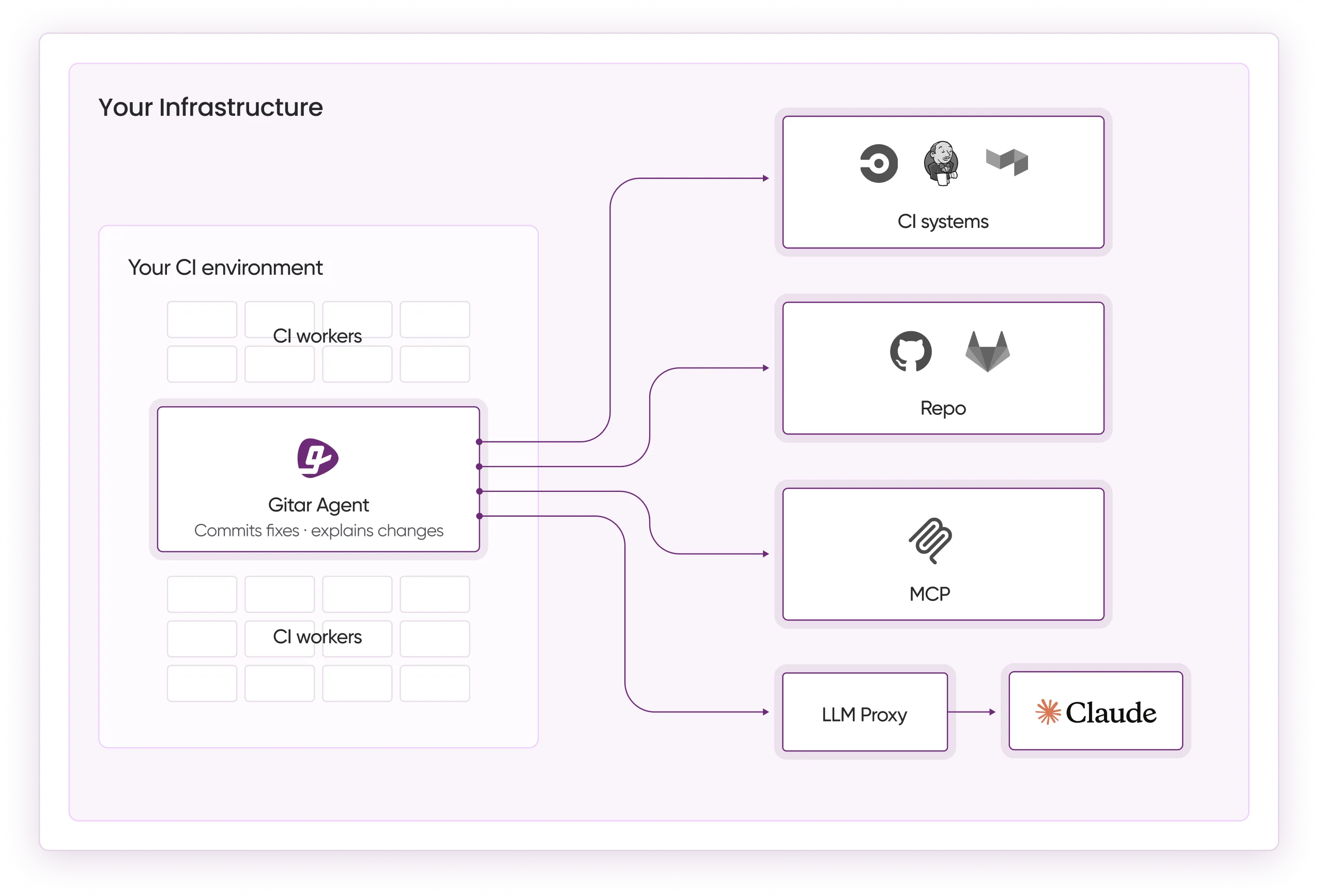

Gitar ranks at the top because it analyzes and automatically fixes AI-generated code issues that break CI, while suggestion-only tools like CodeRabbit still require manual edits. The platform delivers full PR analysis, security scanning, bug detection, and auto-fix capabilities during the 14-day Team Plan trial.

When CI checks fail due to lint errors, test failures, or build breaks, Gitar inspects logs, generates validated fixes, and commits them directly. Setup stays simple: install the GitHub App, enable auto-fix for trusted failure types, and define repository rules using natural language. Teams typically see shorter review cycles, more consistent green builds, and a single clean comment instead of noisy notification threads. Transform AI-generated code into production-ready software with Gitar’s healing engine and keep your pipeline moving.

FAQs

Which AI code generation API works best for production MVPs in 2026?

Gemini 2.5 Flash-Lite offers generous daily quotas and a 1-million-token context window, which makes it a strong fit for production MVP development. Combine it with Gitar’s 14-day Team Plan trial to automatically fix generated code issues and keep CI builds green. This pairing delivers high value for indie developers and small teams that need reliable output without heavy upfront cost.

Do any APIs provide unlimited free quotas for production use?

No provider offers truly unlimited free access that suits production scale. Amazon Q Developer gives broad usage for individual users but focuses on AWS workflows rather than general workloads. Gemini 2.5 Flash-Lite comes closest with its generous daily request allowance. Most production applications eventually move to paid tiers once they outgrow free quotas, which makes cost-conscious options like DeepSeek V4 at roughly $2-5 per month appealing.

How can I connect AI code generation APIs to CI pipelines?

Connect APIs through CI workflow scripts that call generation endpoints, run automated tests on the output, and apply retry logic for rate limits. The missing piece in most setups is automatic fixing of generated code issues when tests fail. Gitar adds that layer through native CI integration that analyzes failures, proposes fixes, and commits them, which removes manual intervention from the loop.
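The generate, test, and retry loop described above can be sketched for a CI script as follows. It is a generic pattern, not Gitar’s implementation: `generate` and `run_tests` are hypothetical callables you would back with your API client and test runner (for example, writing the code to disk and running your test suite). The loop feeds each failure back into the next prompt so a retry is not just a blind resample.

```python
from typing import Callable, Optional, Tuple


def generate_until_green(prompt: str,
                         generate: Callable[[str], str],
                         run_tests: Callable[[str], Optional[str]],
                         max_attempts: int = 3) -> Tuple[str, int]:
    """Regenerate until tests pass, feeding each failure into the next prompt.

    generate:  hypothetical wrapper around your code generation API call
               (ideally with its own rate-limit retries).
    run_tests: returns None on success, or failure output such as a test log.
    Returns (passing_code, attempt_number); raises if every attempt fails.
    """
    current_prompt = prompt
    last_failure: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        code = generate(current_prompt)
        failure = run_tests(code)
        if failure is None:
            return code, attempt
        last_failure = failure
        # Give the model the original task plus the concrete test failure.
        current_prompt = (f"{prompt}\n\nThe previous attempt failed these tests:\n"
                          f"{failure}\nReturn a corrected version.")
    raise RuntimeError(
        f"no passing code after {max_attempts} attempts; last failure:\n{last_failure}")
```

Capping attempts keeps a stubborn failure from burning through a free quota; when the cap is hit, the raised error gives the CI job a clear failing message to surface.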

What differentiates Gitar from other code review tools?

Gitar acts as a healing engine instead of a suggestion engine. Competitors like CodeRabbit and Greptile often charge $15-30 per developer per month for comments that still require manual implementation. Gitar automatically fixes CI failures, applies review feedback, and keeps builds green. The 14-day Team Plan trial unlocks full auto-fix capabilities, workflow automation, and deep analytics without seat limits.

Can I try these APIs without sharing credit card details?

Yes. Gemini 2.5 Flash-Lite, GitHub Copilot’s free tier, Amazon Q Developer’s free tier, and several others allow access without a credit card. Their quotas, however, rarely support sustained production workloads. Gitar’s 14-day Team Plan trial also runs without a credit card and provides full platform access, so you can measure ROI before committing.

Conclusion

Evaluate free AI code generation APIs with a production lens that covers quota durability, benchmark performance, and integration effort. Gemini 2.5 Flash-Lite’s daily request allowance combined with its strong HumanEval benchmark performance gives teams a solid base for MVP development. The real success factor is pairing any generation API with Gitar’s healing engine so CI failures and code quality issues stop slowing down your releases. Start your 14-day Gitar Team Plan trial to turn free code generation into production-ready software with consistently green builds.