Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI code explainers now focus on understanding complex codebases, where single agents often hit context limits on 50k+ LOC repositories.
- Gitar offers a 14-day Team Plan trial with hierarchical memory, CI failure analysis, and automated fixes through its healing engine.
- Tools like CodeRabbit and Sourcegraph (Cody) provide strong repository-level feedback and search but do not validate fixes automatically.
- IDE-based options such as Claude/Continue, Windsurf, and Cursor give individuals deep context but require manual setup or have usage quotas.
- Evaluate tools using full-repo prompts, then start your Gitar trial today to see production-ready codebase explanations with auto-fixes in your own environment.

How To Rank AI Code Explainers for Complex Codebases

Rank AI code explainers using five criteria: full-repo context retention with hierarchical memory, explanation formats such as summaries or line-by-line analysis, trial access limits, setup complexity, and production workflow integration, including CI analysis.

Production workflow integration matters most for teams that rely on CI, because tools that analyze failures and provide actionable insights save hours of manual debugging. For example, Gitar added CI failure analysis in October 2025; it automatically analyzes failed builds and surfaces actionable insights. Test each tool against your largest repository to measure context handling and explanation depth under real conditions.
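One way to turn the five criteria into a ranking is a weighted score per tool. The sketch below is a hypothetical rubric, not a published methodology: the weights, the 0-5 scale, and the `ToolScores` fields are illustrative assumptions, and "Tool A" and "Tool B" are placeholders for scores you would assign after testing on your own repository.

```python
from dataclasses import dataclass

# Hypothetical weights for the five ranking criteria; they sum to 1.0.
# Raise workflow_integration if your team depends heavily on CI.
WEIGHTS = {
    "context_retention": 0.30,
    "explanation_formats": 0.15,
    "trial_access": 0.15,
    "setup_simplicity": 0.10,
    "workflow_integration": 0.30,
}


@dataclass
class ToolScores:
    """Scores on a 0-5 scale that you assign after testing a tool on your repo."""
    name: str
    context_retention: float
    explanation_formats: float
    trial_access: float
    setup_simplicity: float
    workflow_integration: float


def weighted_score(tool: ToolScores) -> float:
    """Combine the five criterion scores into one number on the same 0-5 scale."""
    return sum(weight * getattr(tool, criterion) for criterion, weight in WEIGHTS.items())


def rank(tools: list[ToolScores]) -> list[tuple[str, float]]:
    """Return (name, score) pairs sorted from highest to lowest score."""
    scored = [(t.name, round(weighted_score(t), 2)) for t in tools]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Placeholder scores for two unnamed tools.
tools = [
    ToolScores("Tool A", 4, 3, 5, 4, 5),
    ToolScores("Tool B", 3, 4, 3, 5, 2),
]
print(rank(tools))
```

Keeping the weights in one dictionary makes it easy to rerun the ranking when your priorities change.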

1. Gitar (Top Trial for AI Code Review)

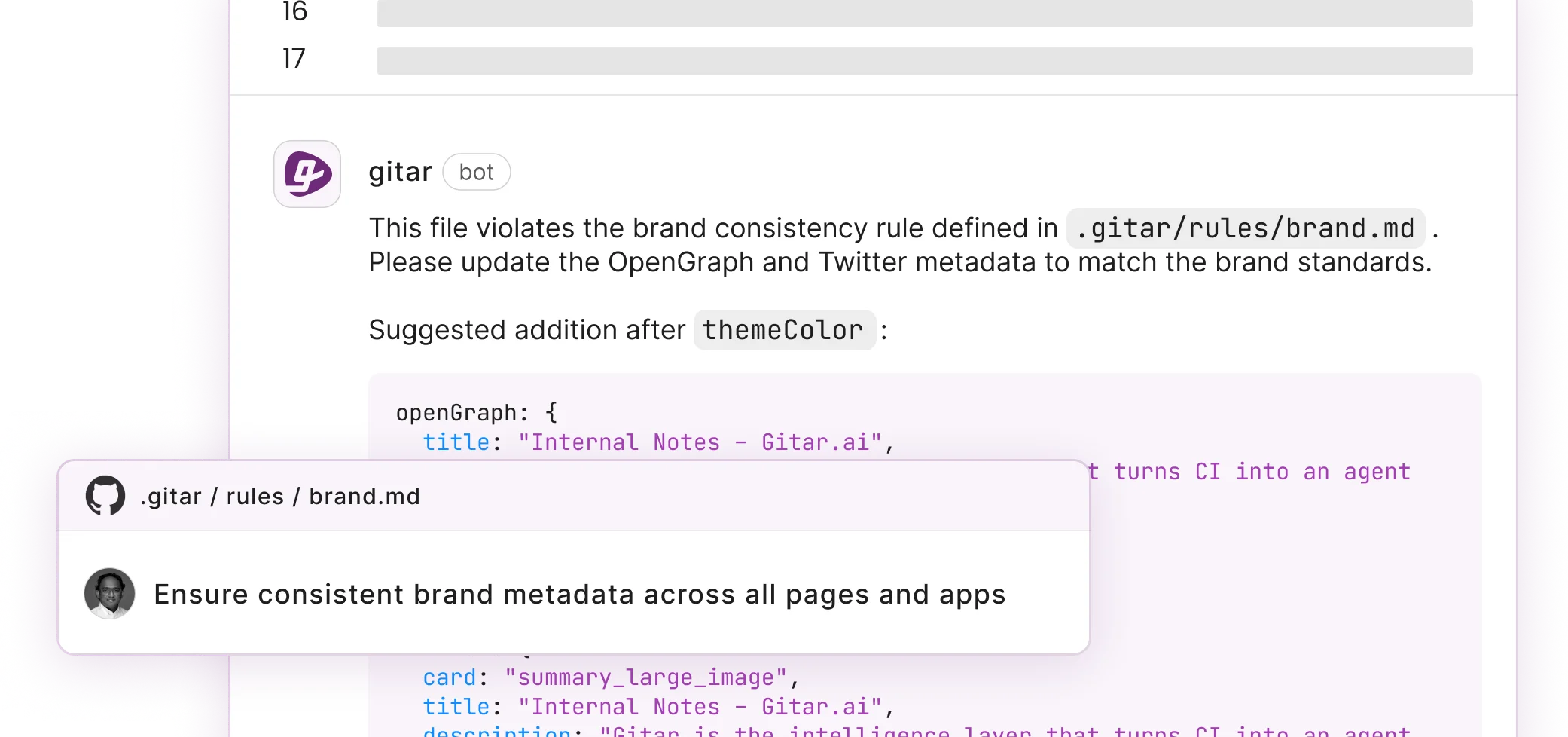

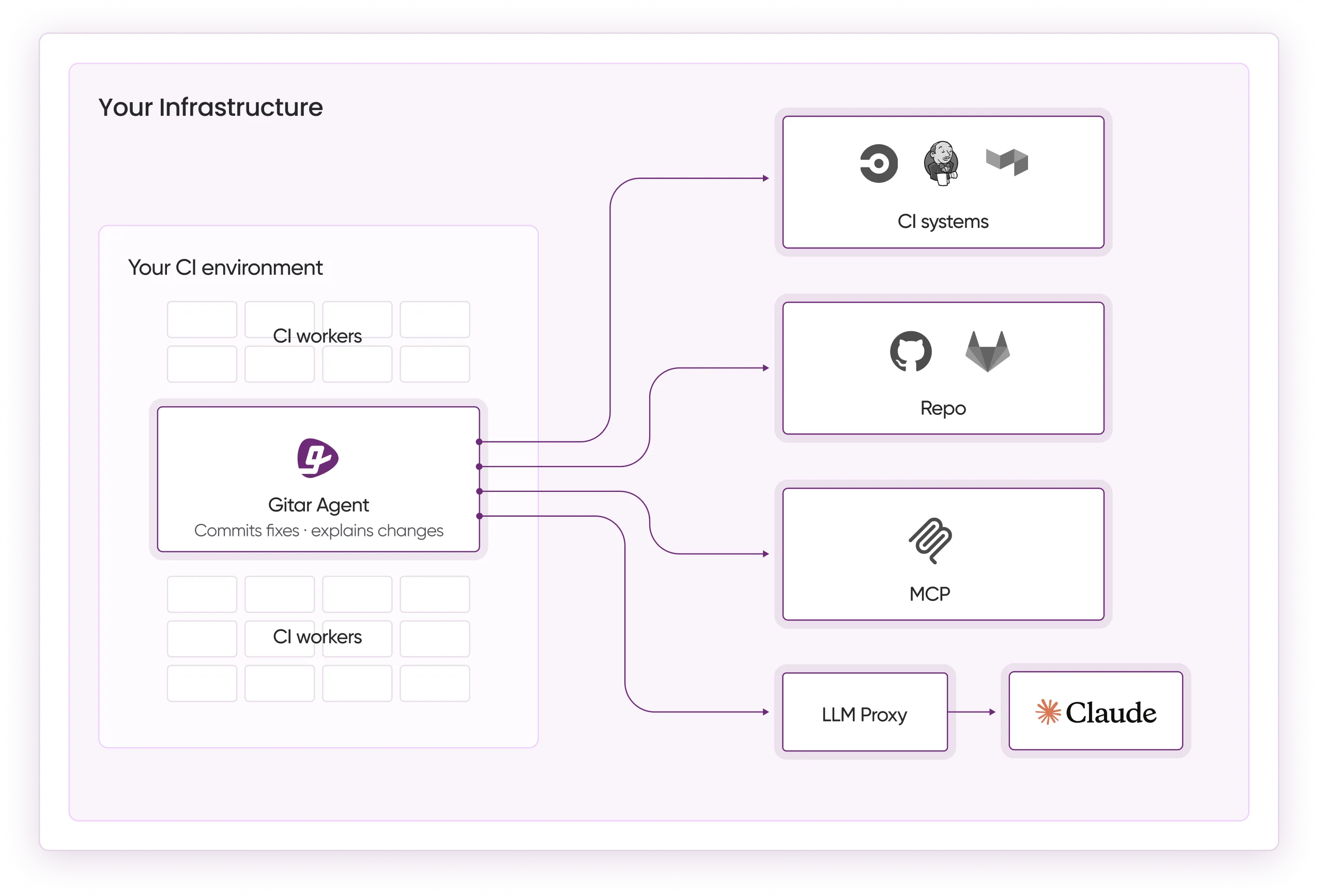

Gitar provides a comprehensive 14-day Team Plan trial for AI code review with automated fixes and CI analysis. The healing engine goes beyond suggestions by resolving CI failures and implementing review feedback directly in your workflow.

Setup: Install the GitHub App, start the 14-day trial, then push a pull request. The dashboard comment appears quickly with a full analysis of the change.

Unique Features: Gitar consolidates all findings into a single dashboard comment, which removes the noise of scattered inline comments. This consolidation relies on hierarchical memory that tracks context per line, per pull request, and per repository, so explanations stay consistent across reviews. That memory enables natural language repository rules that understand your codebase patterns and enforce them automatically. The system validates fixes instead of only suggesting them, and maintains an industry-first living comment that stays updated and moves down the timeline as changes resolve.

Best For: Production teams that need automated CI failure resolution and reliable implementation of review feedback.
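Memory scoped per line, per pull request, and per repository can be pictured as a layered lookup in which the most specific scope wins. The following is a minimal, hypothetical sketch of that general pattern using Python's `collections.ChainMap`; it is not Gitar's implementation, and the `HierarchicalMemory` class, its method names, and the precedence order are assumptions made for illustration.

```python
from collections import ChainMap


class HierarchicalMemory:
    """Hypothetical three-level memory: repository, pull request, and line.

    Lookups consult the most specific scope first, so a note attached to a
    line overrides a pull-request note, which overrides a repository rule.
    """

    def __init__(self) -> None:
        self.repo: dict[str, str] = {}
        self.pull_requests: dict[int, dict[str, str]] = {}
        self.lines: dict[tuple[int, str, int], dict[str, str]] = {}

    def set_repo_rule(self, key: str, value: str) -> None:
        self.repo[key] = value

    def set_pr_note(self, pr: int, key: str, value: str) -> None:
        self.pull_requests.setdefault(pr, {})[key] = value

    def set_line_note(self, pr: int, path: str, line: int, key: str, value: str) -> None:
        self.lines.setdefault((pr, path, line), {})[key] = value

    def context_for(self, pr: int, path: str, line: int) -> ChainMap:
        """Merged view of every scope that applies to one line; intended for reads."""
        return ChainMap(
            self.lines.get((pr, path, line), {}),
            self.pull_requests.get(pr, {}),
            self.repo,
        )


# Hypothetical usage: a PR-level note overrides the repository-wide rule.
memory = HierarchicalMemory()
memory.set_repo_rule("error_handling", "Wrap I/O errors in AppError")
memory.set_pr_note(42, "error_handling", "This PR migrates to Result types")
print(memory.context_for(42, "src/db.py", 10)["error_handling"])  # This PR migrates to Result types
```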

2. Claude/Continue

Continue is an open-source AI coding assistant that connects Claude models to your IDE for everyday development tasks. It offers in-IDE autocomplete and a sidebar chat for code questions, refactoring, and debugging, using either local or cloud LLMs.

Setup: Install the VS Code extension, configure API keys, then select a Claude model for your workspace.

Test Results: Claude models offer a 200K-token context window, yet limits still appear on codebases above 50k LOC, where a single agent can fill 80-90% of the available tokens. Continue still works well for focused subsystem analysis and targeted questions.

Best For: Developers comfortable with API configuration who want flexible model selection for specific sections of a codebase.
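A back-of-the-envelope estimate shows why a 200K-token window still runs short on a large repository. The sketch assumes roughly 10 tokens per line of code and 20K tokens reserved for instructions and the model's reply; both numbers are assumptions that vary by language, tokenizer, and coding style, so measure your own repository before relying on them.

```python
def lines_that_fit(context_window: int, tokens_per_line: float, reserved_tokens: int) -> int:
    """Estimate how many lines of code fit in the window after reserving space
    for instructions, conversation history, and the model's reply."""
    usable = max(context_window - reserved_tokens, 0)
    return int(usable // tokens_per_line)


def coverage(repo_loc: int, context_window: int = 200_000,
             tokens_per_line: float = 10.0, reserved_tokens: int = 20_000) -> float:
    """Fraction of the repository a single agent could hold at once (capped at 1.0)."""
    return min(lines_that_fit(context_window, tokens_per_line, reserved_tokens) / repo_loc, 1.0)


# Assumed figures: ~10 tokens per line, 20K tokens reserved for prompt and answer.
print(lines_that_fit(200_000, 10.0, 20_000))  # 18000
print(coverage(50_000))                       # 0.36
```

Under these assumptions, a single agent sees roughly a third of a 50k-LOC repository at once, which is why focused subsystem questions work better than whole-repository ones.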

3. CodeRabbit

CodeRabbit is the most-installed AI app on GitHub and GitLab, offering structured feedback on complex production repositories with 46% accuracy in detecting runtime bugs. CodeRabbit offers unlimited reviews for public repositories, providing context-aware line-by-line feedback with strong repository-level pattern recognition.

Setup: Use a 2-click GitHub integration by installing the app and granting repository access.

Test Results: CodeRabbit performs well at identifying dead code and logical issues across large repositories. It remains limited to suggestion-only feedback and does not validate or apply fixes.

Best For: Open-source projects that need broad review coverage and are comfortable applying fixes manually.

4. Windsurf (Codeium)

Setup: Install the Windsurf extension for your IDE. Extensions are available for nearly every major IDE and provide low-latency autocomplete.

Test Results: LocalAimaster’s 2026 benchmarks show a 35-40% autocomplete acceptance rate with a 32% average productivity improvement. Windsurf feels fast and maintains deep repository memory through semantic indexing and AST parsing.

Best For: Individual developers who want rapid code completion with strong codebase context.


5. Sourcegraph (Cody)

Setup: Install the VS Code extension, then connect to Sourcegraph Cloud or a self-hosted instance.

Test Results: Cody excels at project search and architectural navigation across large repositories. Its healing capabilities remain limited compared with fix-first approaches that validate and apply changes.

Best For: Teams with existing Sourcegraph infrastructure that want enhanced code search paired with AI explanations.

6. Aider

Aider is an open-source, terminal-based AI pair programmer with repository-wide context, built for developers who prefer the command line. Paired with Claude’s 200K-token context window mentioned earlier, Aider achieves a 65-75% success rate on autonomous feature completion for backend development.

Setup: Install via pip, configure API keys, then run Aider in the terminal.

Test Results: Aider works well for command-line workflows and automated refactoring across multiple files. It requires comfort with terminal usage and manual management of context.

Best For: Terminal power users who want command-line AI interactions for systematic code changes.

7. Cursor

Cursor’s Hobby plan includes a limited number of Agent requests and Tab completions. Cursor builds high context awareness through local codebase indexing of thousands of files, which powers its Composer agent for multi-file edits and its Tab feature for instant refactoring.

Setup: Download the Cursor editor, then import existing VS Code settings for a familiar environment.

Test Results: Cursor handles multi-file understanding and architectural changes effectively. Request quotas on the Hobby tier limit continuous heavy use.

Best For: Developers willing to switch editors to gain deep codebase integration and strong multi-file refactoring capabilities.

Comparison Table

The following table highlights the critical differences in trial depth, repository context handling, and CI integration across four leading tools. Use it to quickly match each option to your workflow needs before testing them on your own codebase.

| Tool | Trial Depth | Repo Context | CI Integration |
|---|---|---|---|
| Gitar | Full 14-day Team Plan | Hierarchical memory | Auto-fix validation |
| Claude/Continue | API costs only | 200K tokens | Manual setup |
| CodeRabbit | Unlimited OSS | Repository-level | Suggestions only |
| Windsurf | Unlimited individual | Fast and deep | IDE-based |

Tools like Sourcegraph, Aider, and Cursor also perform well, yet they focus more on search, terminal workflows, or custom editors than on trial depth and CI integration, so they do not appear in this specific comparison.

Key Considerations and Evaluation Prompts

Test each tool with prompts such as “Map this repository’s authentication flow” or “Explain the dependency chain causing this build failure.” These prompts target full-codebase context awareness, which 63% of developers rank as the most critical feature in AI coding tools. On legacy systems, however, context alone is not enough: understanding complex dependencies pays off only when it leads to working fixes. For legacy codebases, prioritize tools that validate fixes over suggestion-only approaches.
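To keep comparisons fair, send the same prompts to every tool and grade each answer against what you already know about the system. The harness below is a hypothetical sketch: each tool is wrapped in an `ask` function you would write yourself (for example, by calling a vendor API or pasting answers from a chat panel), and the stub tools and keyword-based grader exist only to make the example runnable.

```python
from typing import Callable

# The two evaluation prompts suggested in this section.
PROMPTS = [
    "Map this repository's authentication flow",
    "Explain the dependency chain causing this build failure",
]

Ask = Callable[[str], str]         # hypothetical: prompt -> answer text
Grade = Callable[[str, str], int]  # hypothetical: (prompt, answer) -> score


def evaluate(tools: dict[str, Ask], grade: Grade, prompts: list[str] = PROMPTS) -> dict[str, int]:
    """Send every prompt to every tool and total the grades per tool."""
    return {
        name: sum(grade(prompt, ask(prompt)) for prompt in prompts)
        for name, ask in tools.items()
    }


def mentions_file(prompt: str, answer: str) -> int:
    """Toy grader: 2 points if the answer cites a concrete .py file, else 0."""
    return 2 if ".py" in answer else 0


# Stub tools standing in for real integrations.
tools = {
    "tool_a": lambda prompt: "Login flows through auth/middleware.py into session.py",
    "tool_b": lambda prompt: "I could not find that.",
}
print(evaluate(tools, mentions_file))  # {'tool_a': 4, 'tool_b': 0}
```

In practice, replace the toy grader with a manual review or a checklist of facts you already know about the system.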

Test Gitar’s fix validation against your actual build failures.

Frequently Asked Questions

Which AI tool works best for understanding entire complex codebases?

Gitar’s 14-day Team Plan trial provides comprehensive AI code review with automated fixes through the healing engine described earlier. This approach gives deep codebase context while also resolving issues in your real CI environment.

What’s the difference between AI code analyzers and explainers?

Code analyzers focus on detecting issues such as security vulnerabilities or style violations. Code explainers help developers understand how systems work by mapping dependencies, data flows, and architectural patterns. The most effective tools combine both approaches, analyzing problems while explaining the system design that produced them.

Can AI tools handle repositories with 100k+ lines of code?

Most single-agent tools struggle with context limits on large codebases. Gitar’s hierarchical memory system and CI-based analysis handle massive repositories by focusing on the most relevant subsystems. Tools like Claude Code Agent Teams split workloads across multiple agents, while others rely on manual context management for large-scale analysis.
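Splitting a large repository across several agents, or across several passes of one agent, is essentially a packing problem: group subsystems so each batch fits a token budget. The sketch below uses a first-fit-decreasing heuristic as a stand-in; the subsystem names, token counts, and 150K budget are hypothetical, and real tools may partition along dependency boundaries rather than by size alone.

```python
def pack_subsystems(sizes: dict[str, int], budget: int) -> list[list[str]]:
    """Group subsystems into batches whose total token count stays within budget.

    First-fit decreasing: sort by size, place each subsystem into the first batch
    with room, and open a new batch when none fits. A subsystem larger than the
    budget gets a batch of its own and would need further splitting.
    """
    batches: list[list[str]] = []
    totals: list[int] = []
    for name, size in sorted(sizes.items(), key=lambda item: item[1], reverse=True):
        for i, total in enumerate(totals):
            if total + size <= budget:
                batches[i].append(name)
                totals[i] += size
                break
        else:
            # No existing batch has room, so start a new one.
            batches.append([name])
            totals.append(size)
    return batches


# Hypothetical token counts per top-level directory.
sizes = {"auth": 90_000, "billing": 70_000, "api": 60_000, "ui": 40_000, "utils": 20_000}
print(pack_subsystems(sizes, budget=150_000))  # [['auth', 'api'], ['billing', 'ui', 'utils']]
```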

How do these tools integrate with existing CI and development workflows?

Integration patterns vary across tools. Gitar provides native CI analysis and automated fix commits that plug directly into existing pipelines. CodeRabbit connects through GitHub or GitLab apps to add review comments on pull requests. IDE-based tools such as Cursor and Cody operate inside development environments but need separate CI configuration. Choose a tool based on whether you want passive analysis or active workflow automation.

Are these AI explainer tools accurate for production codebases?

Accuracy depends on how each tool validates its output. Tools that only suggest changes cannot confirm their understanding against real builds. Gitar’s healing engine validates fixes by testing them in actual build environments. CodeRabbit reaches 46% accuracy in detecting real runtime bugs. Always compare AI explanations with known system behavior before relying on architectural insights.

Conclusion

Complex codebase understanding requires validated solutions, not just suggestions. Research shows a 13x speedup for software development tasks when AI completes the work rather than only suggesting approaches. Start with Gitar’s comprehensive trial to experience AI code review with automated fixes, then compare alternatives against your specific workflow and validation requirements.

Experience the difference between AI suggestions and validated fixes with Gitar’s Team Plan.