Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for 2026 Open Source AI Code Review

- 84% of developers now use AI coding tools, and PR review time has increased by 91%, so open source agents help manage the code flood without commercial licensing costs.

- Top tools like PR-Agent (10,500 stars), Aider, Cline, Tabby, and OpenCode (95,000 stars) deliver 15–31% bug detection with GitHub, VS Code, and CLI support plus Ollama-powered local models.

- Open source agents excel at suggestions and privacy but lack auto-fixing, CI validation, and guaranteed builds, which leaves teams with manual implementation work.

- Hands-on testing exposed complex setups, 8GB+ VRAM requirements, and limits in large repositories and production workflows compared with autonomous platforms.

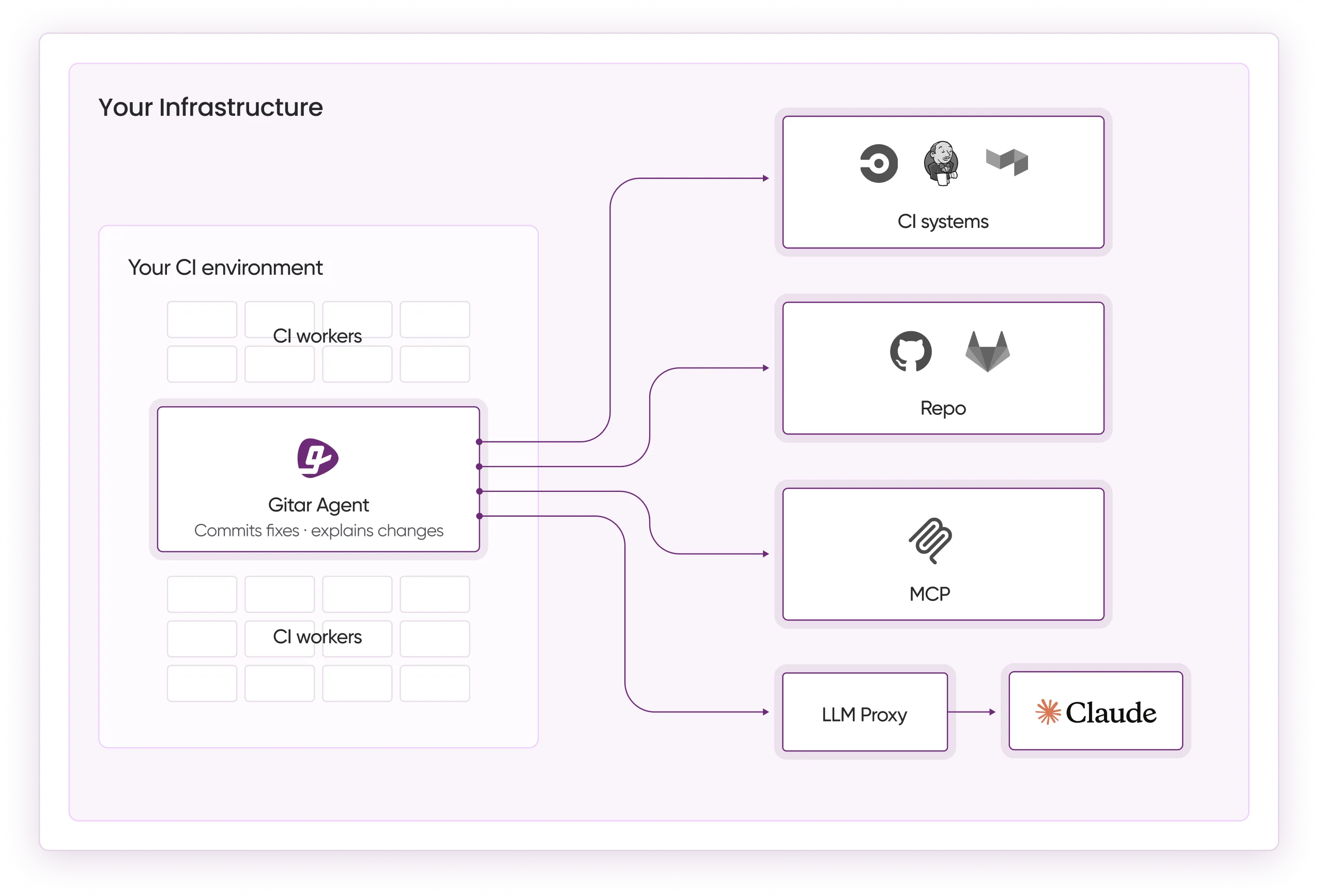

- Teams can scale beyond suggestions with Gitar’s Healing Engine for auto-fixing CI failures, validated commits, and green builds, using a 14-day Team Plan trial.

Testing Methodology and What the Benchmarks Reveal

Our 2026 evaluation tested five leading open source AI coding agents across Python and JavaScript repositories, measuring bug detection accuracy, setup complexity, privacy features, and GitHub integration quality. The tools span a wide maturity range, from PR-Agent’s established 10,500 GitHub stars to OpenCode’s explosive growth past 95,000 stars. Testing revealed detection rates consistent with the 15–31% range noted above, yet every tool still required manual implementation of suggested fixes. This manual implementation gap represents the critical limitation where Gitar’s Healing Engine differentiates itself through automated CI validation and commits.

Top 5 Open Source AI Coding Agents for Code Review

1. PR-Agent (Qodo) – Most Comprehensive GitHub Integration

PR-Agent stands as a leading open source AI code review solution, with comprehensive GitHub and GitLab integration and support for local models through Ollama. With 10,500 GitHub stars and 200 contributors, it provides PR summaries, inline suggestions, and security scanning across multiple programming languages.

Pros: Self-hosted deployment, extensive documentation, active community, multi-platform support

Cons: Unresolved configuration issues blocking local LLM deployments (GitHub Issues #2098 and #2083 open 4+ months), requires 8GB VRAM minimum, suggestions only without auto-apply

Installation: pip install pr-agent && ollama run llama3.2

Best for: Teams requiring comprehensive GitHub integration with self-hosted privacy controls

2. Aider – Superior Git Integration and Terminal Workflow

Aider, ranked #7 in NxCode Team’s March 2026 AI coding tools ranking, excels in git-native workflows with automatic commit generation for every AI edit. This open source terminal-based AI pair programmer has written 72% of its own code and supports model-agnostic operation including Claude, GPT-5, and local models.

Pros: Best-in-class git integration, automatic descriptive commits, architect mode for cost control, multi-model support

Cons: Terminal-only interface, steeper learning curve, struggles with very large codebases, single-agent workflows only

Installation: pip install aider-chat && ollama run llama3.2

Best for: Terminal power users seeking seamless git integration and commit automation

While Aider automates commits, teams needing validated fixes that guarantee green builds can upgrade to Gitar’s autonomous fixing engine with a 14-day trial.

3. Cline (formerly Claude Dev) – VS Code Native Experience

Cline provides a VS Code extension experience with transparent LLM consumption tracking and intuitive chat-based code review. Listed in PackmindHub’s coding-agents-matrix as supporting VS Code extensions, it offers a user-friendly alternative to terminal-based tools while maintaining open source flexibility. It also supports VS Code forks, JetBrains IDEs, and a CLI.

Pros: Native VS Code integration, transparent usage tracking, intuitive interface, active development

Cons: Requires more setup than some alternatives, smaller community than Aider, requires API keys for strongest performance

Installation: Install via VS Code marketplace, then configure with a local Ollama endpoint.

Best for: VS Code users who prefer GUI-based code review workflows

4. Tabby – Self-Hosted Code Intelligence Platform

Tabby, with 33,000 GitHub stars and 249 releases, offers comprehensive self-hosted code intelligence beyond basic review. While primarily focused on code completion, its review capabilities support local model deployment with strong privacy controls for regulated environments.

Pros: Comprehensive self-hosting, strong privacy controls, high release velocity, broad language support

Cons: Primarily completion-focused, shares the same VRAM requirements as PR-Agent, complex initial setup

Installation: docker run -p 8080:8080 tabbyml/tabby serve --model TabbyML/CodeLlama-7B

Best for: Teams requiring comprehensive self-hosted code intelligence with privacy sovereignty

5. OpenCode – Multi-Model Terminal Agent

OpenCode, ranked #5 in NxCode Team’s March 2026 ranking with over 95,000 GitHub stars, provides provider-agnostic code review supporting 75+ LLM providers across CLI, desktop apps, and IDE extensions. Its dual-agent architecture with “build” and “plan” agents supports fully local models via Ollama for complete offline operation.

Pros: Provider-agnostic, 75+ LLM support, dual-agent architecture, complete offline capability

Cons: Requires technical configuration, quality depends on the chosen model

Installation: curl -fsSL https://opencode.ai/install | bash && ollama launch opencode --model qwen3.5

Best for: Budget-conscious developers seeking maximum model flexibility

Feature Comparison: Open Source AI Code Review Agents

This comparison table highlights the tradeoff between autonomy and setup effort across the five tools. All agents support some form of local privacy, yet their bug detection rates cluster in a similar band while autonomy ranges from suggestions only to dual-agent execution. Use these patterns to match your tolerance for configuration work with your need for hands-off operation.

| Tool | Bug Detection | Local Privacy | Setup Ease | Autonomy Level |
| --- | --- | --- | --- | --- |
| PR-Agent | 25-30% | Yes (Ollama) | Complex | Suggestions Only |
| Aider | 20-25% | Yes (Multi-model) | Moderate | Auto-commit |
| Cline | 15-20% | Yes (Local models) | Moderate | Plan & Act Edits |
| Tabby | 15-25% | Yes (Self-hosted) | Complex | Completion Focus |
| OpenCode | 20-28% | Yes (Offline) | Moderate | Dual-Agent Execution |

Ollama Local Setup Guide for Privacy-First Code Review

Ollama provides the easiest path to self-hosting open source coding LLMs locally. Follow these steps:

1. Install Ollama: curl -fsSL https://ollama.com/install.sh | sh

2. Download Model: ollama run llama3.2 or ollama run qwen2.5-coder:32b

3. Configure Agent: Set the local endpoint in your chosen tool, for example export OLLAMA_BASE_URL=http://localhost:11434

4. Verify Setup: Test with a simple code review task
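For step 4, a small script can confirm that the local endpoint responds before you wire it into an agent. The sketch below is one possible check, not part of any tool covered here. It assumes Ollama's standard REST endpoints (`/api/tags` and `/api/generate`) on the default port; the function names and the review prompt are illustrative choices.

```python
import json
import os
import urllib.request

# Base URL follows step 3; the fallback is Ollama's default local port.
BASE_URL = os.environ.get("OLLAMA_BASE_URL", "http://localhost:11434")


def build_review_request(diff: str, model: str = "llama3.2") -> dict:
    """Build a non-streaming payload for Ollama's /api/generate endpoint."""
    # The prompt wording is an assumption; adjust it to your review style.
    prompt = (
        "Review the following diff and list likely bugs, one per line:\n\n"
        + diff
    )
    return {"model": model, "prompt": prompt, "stream": False}


def list_local_models(base_url: str = BASE_URL) -> list[str]:
    """Return the names of models already downloaded, via GET /api/tags."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=10) as resp:
        data = json.load(resp)
    return [m["name"] for m in data.get("models", [])]


def review_diff(diff: str, model: str = "llama3.2",
                base_url: str = BASE_URL) -> str:
    """Send the diff to the local model and return its text response."""
    body = json.dumps(build_review_request(diff, model)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)["response"]
```

With Ollama running, `list_local_models()` should include the model downloaded in step 2, and `review_diff("+    return total / (count - 1)\n")` should return the model's review text. Both calls stay on localhost, so no code leaves the machine.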

This configuration enables complete offline operation while maintaining code privacy, which is essential for proprietary codebases and regulated environments. However, privacy and local control represent only part of the code review equation.

Open Source Limitations and When to Upgrade to Gitar

Open source AI coding agents provide valuable starting points for automated code review, yet they share critical limitations that affect production workflows. Industry analysis reveals that 30% of AI-suggested code gets rejected, which highlights the gap between suggestions and validated fixes.

Open source tools excel at identifying potential issues but require manual implementation of suggested changes. This manual step becomes particularly problematic because these tools lack CI context awareness, so they cannot validate whether their suggestions will work in your specific build environment. The validation gap compounds at team scale, where coordination challenges mean multiple developers may waste time implementing suggestions that fail in CI due to unaccounted dependencies, environment variables, or integration requirements.
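To make the manual step concrete, here is a minimal sketch of what validating a single suggestion by hand can look like: apply the patch, run the test suite, and revert if the tests fail. It assumes the suggestion has been saved as a patch file and that the project has a local test command. The `validate_patch` helper and the injectable `runner` parameter are hypothetical names used for illustration, and a local test run only approximates what CI would do.

```python
import subprocess
from typing import Callable, Sequence

# A runner takes a command and returns its exit code (0 means success).
Runner = Callable[[Sequence[str]], int]


def run(cmd: Sequence[str]) -> int:
    """Run a command in the current directory and return its exit code."""
    return subprocess.run(list(cmd)).returncode


def validate_patch(patch_path: str, test_cmd: Sequence[str],
                   runner: Runner = run) -> bool:
    """Apply a suggested patch, run the tests, and revert if they fail."""
    # `git apply --check` confirms the patch applies cleanly without
    # modifying any files.
    if runner(["git", "apply", "--check", patch_path]) != 0:
        return False
    if runner(["git", "apply", patch_path]) != 0:
        return False
    if runner(list(test_cmd)) == 0:
        return True
    # Tests failed: reverse the patch so the working tree is restored.
    runner(["git", "apply", "-R", patch_path])
    return False
```

A call such as `validate_patch("suggestion.patch", ["pytest", "-q"])` keeps the change only when the local tests pass. It still cannot see CI-only dependencies or environment variables, which is the gap described above.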

The table below highlights four critical capabilities where open source agents consistently fall short. These capabilities determine whether a tool can autonomously resolve issues or only flag them for manual intervention.

| Capability | Open Source Agents | Gitar |
| --- | --- | --- |
| Auto-apply Fixes | No | Yes |
| CI Failure Analysis | No | Yes |
| Validate Against Build | No | Yes |
| Guarantee Green Builds | No | Yes |

Gitar’s Healing Engine addresses these limitations by automatically analyzing CI failures, generating contextually appropriate fixes, validating them against your build environment, and committing working solutions. Experience the difference with Gitar’s 14-day Team Plan trial and move beyond suggestions to guaranteed fixes.

Frequently Asked Questions

Best Open Source AI Coding Agent for VS Code

Cline (formerly Claude Dev) provides the most polished VS Code experience with native extension support, transparent LLM usage tracking, and intuitive chat-based workflows. For users who prefer local model integration, Cline can be configured with Ollama endpoints for complete privacy. Continue also offers strong VS Code integration as an open source alternative to Cursor, supporting tab autocomplete and context-aware chat.

Handling Large Repositories with Open Source Agents

Most open source AI coding agents struggle with large codebases because of context window limitations. Tabby performs best for large repositories through its self-hosted architecture and dedicated indexing, while Aider may encounter performance issues compared to commercial solutions with larger context windows. For comprehensive large-repo analysis with memory and context management, Gitar’s hierarchical memory system maintains per-line, per-PR, and per-repo context that improves over time.

Cost Considerations for Open Source AI Coding Agents

Open source tools themselves are free to use, but teams should factor in operational expenses. These include local compute resources (such as the 8GB+ VRAM requirement mentioned for tools like PR-Agent and Tabby), API costs when using cloud models, and engineering time for setup and maintenance. The total cost of ownership often exceeds commercial solutions once you include the manual work required to implement suggested fixes and maintain self-hosted infrastructure.
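A rough way to compare options is to add infrastructure spend to the cost of engineering time. The sketch below shows that arithmetic only. Every input is a value you would supply from your own records, and the function name and parameters are illustrative assumptions rather than figures from this evaluation.

```python
def monthly_tco(hardware_monthly: float, api_monthly: float,
                maintenance_hours: float, manual_fix_hours: float,
                hourly_rate: float) -> float:
    """Sum monthly infrastructure spend and the cost of engineering time."""
    # Labor covers both keeping the self-hosted stack running and
    # implementing suggested fixes by hand.
    labor = (maintenance_hours + manual_fix_hours) * hourly_rate
    return hardware_monthly + api_monthly + labor
```

Running the same calculation with a commercial tool's subscription price in place of the hardware and API terms, and its own labor estimate, gives a like-for-like comparison.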

Integrating AI Code Review Agents with GitHub Workflows

PR-Agent offers the most comprehensive GitHub integration through GitHub Actions and webhook support, while tools like Aider excel at git-native workflows with automatic commit generation. Most tools require GitHub App installation or personal access tokens for repository access. For production GitHub workflows with automated fixing and CI integration, Gitar provides native GitHub Actions support with zero configuration required.

Measuring the Impact of AI Code Review Tools

Teams can track metrics including PR review time reduction, bug detection rates, false positive percentages, and developer satisfaction scores. Open source tools typically provide basic usage statistics, while platforms like Gitar offer comprehensive analytics dashboards that show CI failure patterns, fix success rates, and team productivity improvements with detailed ROI calculations.
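The first three metrics reduce to simple ratios. The sketch below shows one way to compute them from counts a team collects itself. The `ReviewStats` fields and the definitions used here (for example, treating the false positive percentage as the share of findings that matched no known bug) are assumptions for illustration, not a standard.

```python
from dataclasses import dataclass


@dataclass
class ReviewStats:
    known_bugs: int      # bugs seeded or later confirmed in the reviewed PRs
    flagged: int         # total findings the tool reported
    true_positives: int  # findings that matched a known bug


def detection_rate(stats: ReviewStats) -> float:
    """Share of known bugs that the tool flagged."""
    if stats.known_bugs == 0:
        return 0.0
    return stats.true_positives / stats.known_bugs


def false_positive_share(stats: ReviewStats) -> float:
    """Share of the tool's findings that matched no known bug."""
    if stats.flagged == 0:
        return 0.0
    return (stats.flagged - stats.true_positives) / stats.flagged


def review_time_reduction(before_hours: float, after_hours: float) -> float:
    """Fractional drop in average PR review time after adopting the tool."""
    if before_hours == 0:
        return 0.0
    return (before_hours - after_hours) / before_hours
```

For example, a tool that reports 20 findings, 10 of which match 40 known bugs, has a detection rate of 0.25 and a false positive share of 0.5 under these definitions.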

Conclusion: Start with Open Source, Scale with Gitar

Open source AI coding agents like PR-Agent, Aider, and Cline provide strong entry points for automated code review, delivering privacy-focused solutions without monthly per-developer licensing. However, the manual implementation gap discussed earlier becomes the critical bottleneck at scale.

For teams ready to move beyond suggestions to guaranteed working solutions, Gitar’s autonomous Healing Engine represents the next evolution in AI code review. Start your 14-day Team Plan trial to experience auto-fixing CI failures and guaranteed green builds.