Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI coding tools boost PR volume by 98% but increase review times by 91%, costing teams about $1M annually per 20 developers due to review bottlenecks.

- Common pitfalls include alert fatigue from noisy notifications, false positives and negatives, and missing codebase context that causes irrelevant feedback and missed bugs.

- Many tools struggle with CI integration, security scanning for AI-generated code, and separating real issues from infrastructure flakiness, which amplifies technical debt.

- Gitar addresses these gaps with auto-fixes for CI failures, a unified dashboard comment, hierarchical context memory, and natural language rules for repeatable workflows.

- Teams using Gitar cut CI and review time from 1 hour to 15 minutes per developer. Start your free trial to achieve similar time savings.

AI coding assistants accelerate development but strain traditional review workflows. Pull request volume grows faster than teams can safely review changes, which increases costs, risk, and frustration. This article walks through 12 common pitfalls in automated code review and shows how Gitar’s healing engine avoids them.

The 12 Common Pitfalls of Automating Code Review Workflows

1. Alert Fatigue from Notification Overload

The Problem: Traditional AI review tools scatter dozens of inline comments across pull request diffs, which floods developer inboxes with notifications. Current AI-powered code review tools are “noisy” and “disconnected from real-world developer workflows”, creating cognitive overload instead of clarity.

The Impact: Developers ignore important feedback buried in notification spam, so critical issues slip through and skills erode. Constant context switching between many comment threads destroys focus and productivity.

Gitar’s Solution: The single Dashboard comment consolidates all findings in one living document that updates in real time. This approach removes notification fatigue while preserving signal quality.
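To make the idea of one consolidated comment concrete, here is a minimal sketch of grouping review findings into a single comment body instead of posting one notification per finding. The `Finding` type, the severity levels, and `render_dashboard` are hypothetical names chosen for illustration; this is not Gitar's implementation or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    severity: str  # hypothetical levels: "critical", "warning", "info"
    message: str


# Most severe first, so important items are never buried.
SEVERITY_ORDER = ["critical", "warning", "info"]


def render_dashboard(findings: list[Finding]) -> str:
    """Render all findings as one comment body, grouped by severity."""
    grouped: dict[str, list[Finding]] = defaultdict(list)
    for f in findings:
        grouped[f.severity].append(f)

    lines = [f"Review summary: {len(findings)} finding(s)"]
    for severity in SEVERITY_ORDER:
        items = sorted(grouped.get(severity, []), key=lambda f: (f.path, f.line))
        if not items:
            continue
        lines.append(f"{severity.upper()} ({len(items)})")
        lines.extend(f"- {f.path}:{f.line} {f.message}" for f in items)
    return "\n".join(lines)


findings = [
    Finding("b.py", 3, "info", "unused import"),
    Finding("a.py", 10, "critical", "possible null dereference"),
]
body = render_dashboard(findings)
print(body)
```

Re-rendering the same body and editing the existing comment in place, rather than posting a new one, is what would keep the notification count at one per pull request.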

2. False Positives and Negatives

The Problem: Many automated tools flag minor style issues while missing critical logic bugs. AI-generated code introduces 75% more logic errors compared to human-written code, yet review tools often focus on superficial formatting instead of substantive issues.

The Impact: Developers lose trust in automation when irrelevant suggestions overwhelm them while real security vulnerabilities pass undetected.

Gitar’s Solution: The Judge guardrail system filters irrelevant inputs and collapses duplicate outputs. Only high-value feedback reaches developers.
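As a rough illustration of filtering and de-duplicating review output, the sketch below drops low-confidence suggestions and keeps only the strongest entry per location and rule. The `Suggestion` type, the `confidence` score, and the 0.8 threshold are assumptions made for this example and do not describe how the Judge system works internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    path: str
    line: int
    rule: str
    confidence: float  # hypothetical score between 0.0 and 1.0


def filter_suggestions(suggestions, min_confidence=0.8):
    """Drop low-confidence items and collapse duplicates on (path, line, rule)."""
    best = {}
    for s in suggestions:
        if s.confidence < min_confidence:
            continue  # treated as noise under this assumed threshold
        key = (s.path, s.line, s.rule)
        # Keep the highest-confidence suggestion for each duplicate group.
        if key not in best or s.confidence > best[key].confidence:
            best[key] = s
    return list(best.values())


raw = [
    Suggestion("api.py", 42, "sql-injection", 0.90),
    Suggestion("api.py", 42, "sql-injection", 0.95),  # duplicate of the above
    Suggestion("api.py", 7, "line-too-long", 0.30),   # low-confidence style nit
]
kept = filter_suggestions(raw)
```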

3. Lacking Codebase Context

The Problem: Without adequate context, AI code reviewers “hallucinate advice that ignores how the system actually works”. Generic models do not understand business logic, architectural patterns, or organizational standards.

The Impact: AI tools suggest solutions that are “locally valid but architecturally incoherent”. Teams then refactor heavily and accumulate technical debt.

Gitar’s Solution: A hierarchical memory system maintains context per line, pull request, repository, and organization. It also pulls product context from Jira and Linear to understand the “why” behind changes.
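One simple way to picture layered context is a lookup that checks the narrowest scope first and falls back to broader ones. The sketch below uses Python's standard `collections.ChainMap` for that; the scope contents (including the ticket key `PROJ-123`) are invented placeholders, and the approach is an illustration rather than a description of Gitar's memory system.

```python
from collections import ChainMap

# Hypothetical context at each scope, broadest to narrowest.
org_ctx = {"style": "pep8", "min_coverage": 80}
repo_ctx = {"min_coverage": 90}    # repository overrides the organization default
pr_ctx = {"ticket": "PROJ-123"}    # placeholder ticket linked to this pull request
line_ctx = {}                      # nothing specific recorded for this line

# ChainMap searches its maps left to right, so narrower scopes win.
context = ChainMap(line_ctx, pr_ctx, repo_ctx, org_ctx)

coverage_target = context["min_coverage"]  # resolved from repo_ctx
style = context["style"]                   # falls through to org_ctx
```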

4. Over-Reliance Eroding Developer Skills

The Problem: Junior developers start depending on automated suggestions instead of learning underlying principles. Senior engineers are affected too: experienced developers were 19% slower when using AI tools because they spent extra time validating and correcting AI-generated code.

The Impact: Teams lose institutional knowledge and debugging strength as developers rely on tools they do not fully understand. Long-term technical debt grows quietly.

Gitar’s Solution: Configurable suggestion modes let teams build trust gradually. Teams start with human approval for all fixes, then enable full automation only for clearly defined, trusted scenarios.

5. Automating Broken Processes

The Problem: Teams automate inefficient workflows without addressing root causes. Poor coding standards, unclear requirements, and inconsistent practices get amplified instead of corrected.

The Impact: Automation magnifies chaos, which increases technical debt and process friction.

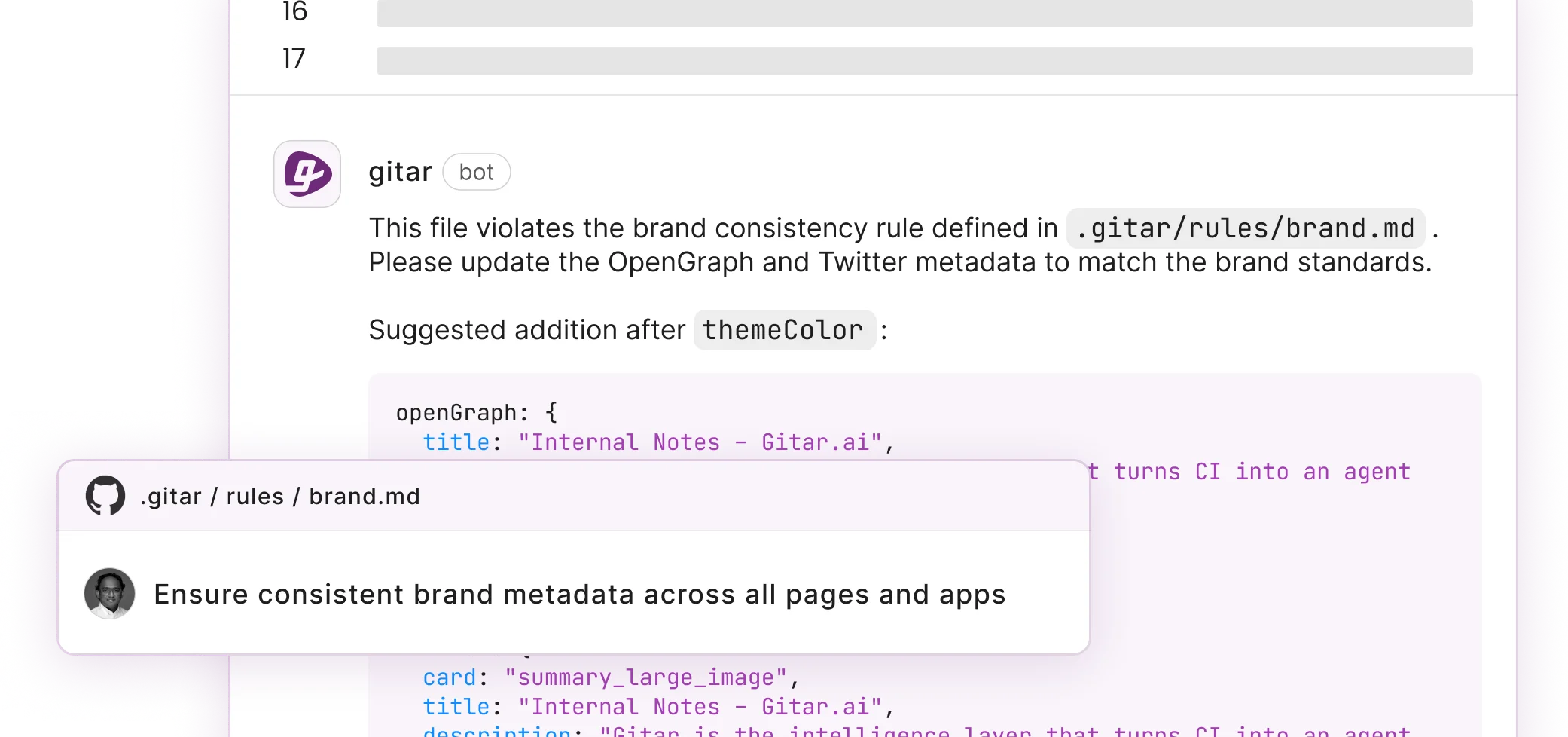

Gitar’s Solution: Natural language rules in repository configuration files (see Gitar documentation) let teams codify best practices without complex YAML or scripting.

6. CI Integration Failures

The Problem: Many review tools operate separately from continuous integration pipelines and miss build failures, test results, and deployment requirements.

The Impact: Developers spend time reviewing code that does not compile while CI failures remain unresolved until manual intervention.

Gitar’s Solution: Native integration with GitHub Actions, GitLab CI, CircleCI, and Buildkite enables automatic analysis and fixing of CI failures before developers even notice them.

7. PR Volume Overload from AI Code Generation

The Problem: As mentioned earlier, the 98% increase in merged pull requests from AI-assisted coding overwhelms human review capacity.

The Impact: Review bottlenecks slow deployment velocity even though development feels faster, which frustrates teams and accelerates technical debt.

Gitar’s Solution: Auto-fix capabilities scale with PR volume. Gitar automatically resolves common issues like lint errors, test failures, and build breaks without human intervention.

8. Security Blindspots in AI-Generated Code

The Problem: Security researchers identified exploitable weaknesses in major coding agents, including data exfiltration and remote code execution vulnerabilities. AI-generated code shows 2.74x higher security vulnerability rates than human-written code.

The Impact: Traditional review processes miss sophisticated security issues embedded in AI-generated code, which creates production vulnerabilities.

Gitar’s Solution: Gitar performs comprehensive security scanning, bug detection, and performance review, then applies auto-fixes for identified issues when safe.

9. Merge Conflicts and Unrelated Failures

The Problem: Distinguishing code-related failures from infrastructure flakiness becomes critical as PR volumes grow, yet most tools cannot make this distinction.

The Impact: Developers waste time investigating unrelated failures while real code issues remain unresolved, which slows overall development velocity.

Gitar’s Solution: Advanced failure analysis separates code bugs from infrastructure issues. Engineering teams report significant time savings from this “unrelated PR failure detection.”
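A simplified heuristic for this kind of triage might look like the sketch below. It assumes three signals are available for each failure: a log excerpt, whether the same test also fails on the base branch, and whether a retry on the same commit passed. The `CiFailure` type, the log patterns, and the category names are all assumptions for illustration, not Gitar's actual analysis.

```python
import re
from dataclasses import dataclass

# Hypothetical log signatures that usually point at infrastructure, not code.
INFRA_PATTERNS = [
    re.compile(r"connection (reset|refused|timed out)", re.IGNORECASE),
    re.compile(r"no space left on device", re.IGNORECASE),
    re.compile(r"rate limit", re.IGNORECASE),
]


@dataclass
class CiFailure:
    test_name: str
    log_excerpt: str
    fails_on_base_branch: bool  # same test fails without this PR's changes
    passed_on_retry: bool       # same commit passed when re-run


def classify(failure: CiFailure) -> str:
    """Return a coarse label for why a CI job failed."""
    if any(p.search(failure.log_excerpt) for p in INFRA_PATTERNS):
        return "infrastructure"
    if failure.passed_on_retry:
        return "flaky"
    if failure.fails_on_base_branch:
        return "pre-existing"
    return "code"  # most likely caused by the PR itself


network = CiFailure("test_upload", "Connection reset by peer", False, False)
regression = CiFailure("test_totals", "AssertionError: 3 != 4", False, False)
```

Only failures labeled `code` would need the author's attention; the other labels could be routed to retries or to whoever owns the pipeline.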

10. Hybrid Model Coordination Gaps

The Problem: The decision layer in AI code review, including which PRs need review, reviewer assignments, and auto-merge policies, remains unsolved beyond basic bug detection.

The Impact: Misalignment between human reviewers and AI tools creates confusion, duplicated effort, and inconsistent quality standards.

Gitar’s Solution: Configurable automation levels define exactly when human review is required and when auto-fixes can proceed independently.
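The decision of when a fix may proceed without a human can be expressed as a small policy table. The sketch below is one possible shape, assuming fix types such as formatting and lint are trusted for auto-commit and everything else defaults to suggestion mode. The `Mode` enum, `POLICY` table, and `decide` function are hypothetical and not part of Gitar's configuration format.

```python
from enum import Enum


class Mode(Enum):
    SUGGEST = "suggest"          # human must approve the fix
    AUTO_COMMIT = "auto_commit"  # fix may be committed automatically


# Hypothetical policy: only low-risk fix types are trusted for auto-commit.
POLICY = {
    "formatting": Mode.AUTO_COMMIT,
    "lint": Mode.AUTO_COMMIT,
}


def decide(fix_type: str, touches_protected_path: bool = False) -> Mode:
    """Pick the automation level for a proposed fix."""
    if touches_protected_path:
        return Mode.SUGGEST  # sensitive code always gets human review
    # Unknown fix types fall back to the safest mode.
    return POLICY.get(fix_type, Mode.SUGGEST)
```

Defaulting unknown cases to `SUGGEST` mirrors the gradual rollout described in this article: automation expands only for fix types a team has explicitly trusted.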

11. Cross-Platform Integration Complexity

The Problem: Teams using multiple platforms such as GitHub, GitLab, and several CI systems face fragmented tooling without unified workflows or consistent experiences.

The Impact: Context switching between tools and interfaces reduces productivity and creates gaps in review coverage.

Gitar’s Solution: A unified platform supports GitHub, GitLab, and major CI systems with consistent interfaces and shared configuration across environments.

12. Missing Workflow Automation Depth

The Problem: Most review tools lack feedback loops and provide no signal on whether suggestions are helpful, accepted, or ignored over time. This gap blocks continuous improvement.

The Impact: Teams cannot refine review processes or measure the impact of automation investments, so workflows stagnate.

Gitar’s Solution: Deep analytics categorize CI failures, identify infrastructure issues, and surface systematic patterns while tracking team velocity improvements.
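The feedback loop this pitfall describes can start with something as simple as an acceptance rate per rule. The sketch below assumes each suggestion outcome is logged as a `(rule, outcome)` pair; the event format and rule names are invented for illustration.

```python
from collections import Counter


def acceptance_rates(events):
    """Compute the share of accepted suggestions per rule.

    events: iterable of (rule, outcome) pairs, where outcome is
    "accepted" or "ignored" (a hypothetical logging format).
    """
    accepted = Counter()
    total = Counter()
    for rule, outcome in events:
        total[rule] += 1
        if outcome == "accepted":
            accepted[rule] += 1
    return {rule: accepted[rule] / total[rule] for rule in total}


events = [
    ("unused-import", "accepted"),
    ("unused-import", "accepted"),
    ("naming", "ignored"),
    ("naming", "accepted"),
]
rates = acceptance_rates(events)
```

Rules with persistently low acceptance are candidates for tuning or removal, which gives a team a concrete signal for refining its review automation.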

Why Gitar Eliminates These Pitfalls: The Healing Engine Beyond Suggestions

Competitors like CodeRabbit and Greptile charge $15–30 per developer for suggestion-only tools. Gitar instead provides a comprehensive healing engine that fixes code rather than only commenting on it. This approach addresses a core limitation of existing tools, which identify problems but leave the work to developers.

Gitar’s architecture tackles the pitfalls above through interconnected capabilities. First, auto-fix functionality resolves CI failures and implements review feedback automatically, which removes the manual work that creates bottlenecks. The results surface in the single dashboard comment described earlier, which prevents notification fatigue while keeping feedback organized. Natural language rules then let teams configure these workflows in plain English, so the system adapts to specific standards without complex YAML. Finally, cross-platform support and the Jira and Slack integrations keep context consistent across the entire development lifecycle.

See these differentiators in action with a free Team Plan trial.

The table below highlights the core capabilities that distinguish Gitar’s healing engine from suggestion-only competitors.

| Capability | CodeRabbit/Greptile | Gitar |
| --- | --- | --- |
| Auto-apply fixes | No | Yes |
| CI auto-fix | No | Yes |
| Green build guarantee | No | Yes |

Engineering teams report reducing daily CI and review time from 1 hour to 15 minutes per developer, which translates to $750K annual savings for a 20-person team. To reach similar outcomes, teams need a structured rollout that builds trust while avoiding the pitfalls described above.
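The savings figure follows from the numbers quoted in this article, under one assumption: that the roughly $1M annual cost per 20 developers cited in the Key Takeaways corresponds to the one hour per developer per day spent on CI and review. A short worked calculation:

```python
from fractions import Fraction

# Figures quoted in the article.
baseline_cost = 1_000_000  # dollars per year for a 20-developer team
minutes_before = 60        # daily CI and review time per developer
minutes_after = 15

# Assumption: cost scales linearly with time spent on CI and review.
saved_fraction = Fraction(minutes_before - minutes_after, minutes_before)
annual_savings = baseline_cost * saved_fraction

print(f"Time saved: {float(saved_fraction):.0%}")
print(f"Annual savings: ${int(annual_savings):,}")
```

Cutting 60 minutes to 15 removes three quarters of the time, and three quarters of $1M is the $750K quoted above.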

Implementation Roadmap to Avoid Pitfalls

Phase 1: Installation and Trial

Install the Gitar GitHub or GitLab app and start your 14-day Team Plan trial. Gitar immediately begins posting dashboard comments on new pull requests, so teams can see the impact on day one.

Phase 2: Trust Building

Run in suggestion mode and review all proposed fixes. This phase lets developers watch Gitar resolve lint errors, test failures, and build breaks while they maintain full control over changes, which prepares them for selective automation.

Phase 3: Automation Expansion

Enable auto-commit for trusted fix types such as formatting and simple CI failures. Add repository rules for workflow automation using natural language configuration, building on the confidence gained in Phase 2.

Phase 4: Platform Integration

Connect Jira and Slack for cross-platform context and explore analytics dashboards for CI patterns. Use custom workflows to address team-specific requirements and deepen automation coverage.

Frequently Asked Questions

Common Mistakes in Code Review Automation

The biggest mistakes include automating broken processes without fixing underlying issues and relying on tools that only suggest fixes instead of applying them. Teams also struggle when they ignore contextual awareness across the codebase or adopt noisy tools that create alert fatigue. Another frequent issue is choosing platforms that do not integrate with existing CI/CD pipelines.

Disadvantages of Code Review Automation

The main disadvantages include false positives that waste developer time and a weak understanding of business logic that leads to poor suggestions. Skill erosion appears when junior developers become over-dependent on automated feedback. Many tools also create notification spam and integrate poorly with existing workflows, which slows teams instead of speeding them up.

How a Hybrid Code Review Model Works

An effective hybrid model combines automated fixing for routine issues with human oversight for complex work. Automation handles formatting, simple CI failures, and other low-risk tasks. Configurable automation levels then define when human review is required, which allows teams to start in suggestion mode and move toward full automation gradually.

Building Trust in Automated Commits

Teams build trust through transparency and control. They begin with suggestion-only modes where humans approve every change, then enable auto-commits for low-risk scenarios such as lint fixes or formatting. Effective tools explain what changed and why, maintain audit trails for all automated actions, and provide simple rollback options.

Preventing Missed Business Logic Issues

Preventing business logic gaps requires tools that integrate with project management systems like Jira and Linear to understand the intent behind code changes. Strong solutions maintain hierarchical memory across the entire codebase instead of analyzing each pull request in isolation. They also support configurable rules that encode team-specific standards and architectural patterns.

Conclusion

The 12 pitfalls of automating code review workflows, from alert fatigue to security blindspots, reflect the strain that AI-accelerated development places on review processes. The 91% increase in PR review time can erase productivity gains from AI coding tools, yet the answer lies in better automation, not less automation.

Gitar’s healing engine addresses these pitfalls with auto-fixes, contextual awareness, and integrated workflows that scale with AI-generated code volume. The shift from suggestion engines to healing engines changes code review from a bottleneck into a force multiplier.

Install Gitar to automatically fix broken builds and start shipping higher quality software faster.