Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI coding tools have driven PR volume up by 60%, overwhelming traditional review processes and costing mid-sized teams $1M a year.

- Small PR limits, layered pipelines, and policy-as-code with natural language rules create scalable, reliable review quality.

- Gitar’s healing engine auto-fixes CI failures and reviewer feedback with full test validation, unlike suggestion-only tools.

- Consolidated feedback dashboards, clear prioritization, and review metrics support continuous improvement across teams.

- Start Gitar’s 14-day trial to automate fixes and scale reviews safely in the 2026 AI era.

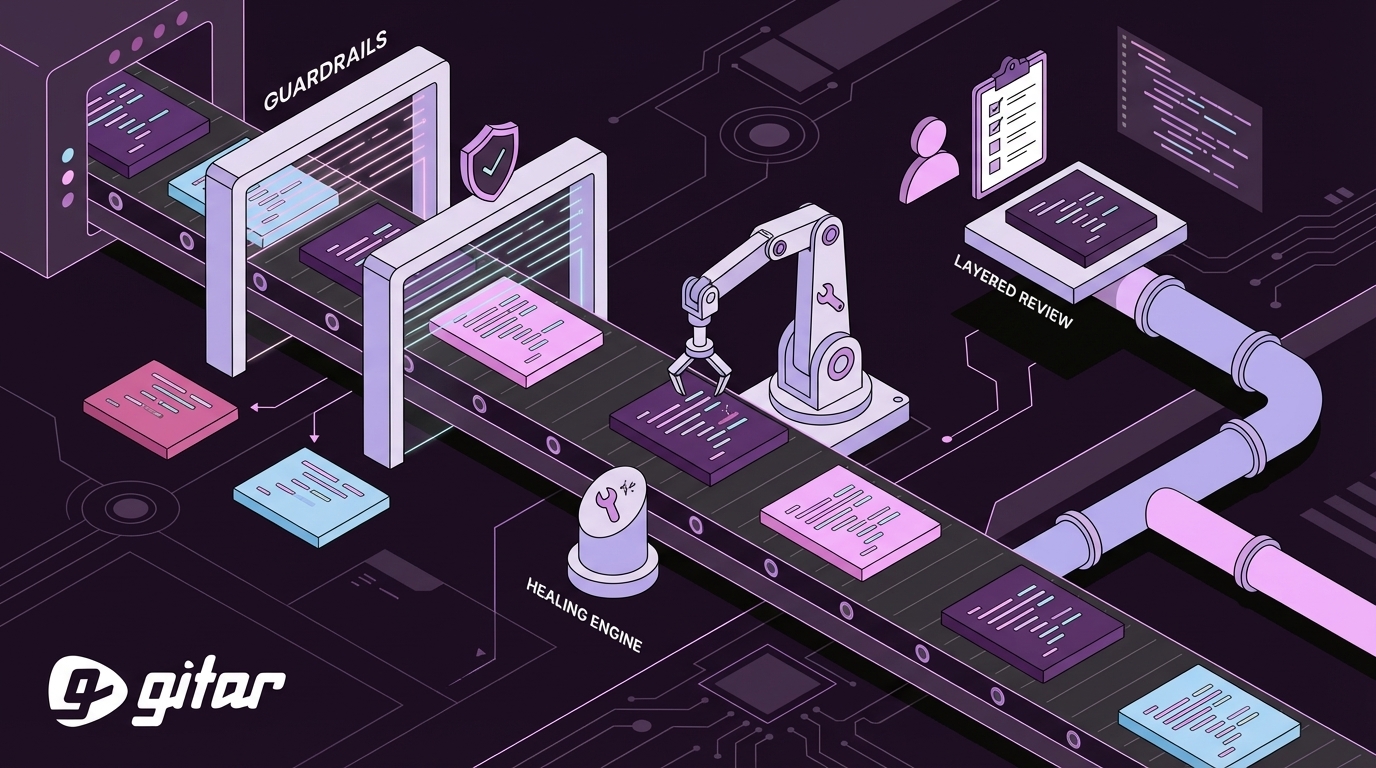

The Problem: Why Guardrails & Policies Matter in 2026

The post-AI development landscape has created a perfect storm of review bottlenecks. Greptile’s State of AI Coding 2025 shows median pull request size increased 33% from March to November 2025, rising from 57 to 76 lines changed per PR. Teams now face a flood of suggestion-only tools that charge $15-30 per seat yet still require manual implementation of fixes. A confirmation bias trap has also emerged: AI reviewers built on the same models that generated the code miss critical logic issues, creating a false sense of security while actual quality degrades.

The Solution: How Gitar’s Healing Engine Closes the Gaps

Gitar’s healing engine fixes code automatically instead of only suggesting changes. When CI fails or reviewers leave feedback, Gitar analyzes the issue, generates validated fixes, and commits them directly to your PR. Key capabilities include auto-applying fixes with CI validation, single-comment dashboard consolidation, and natural language rules in .gitar/rules/*.md files for policy-as-code automation, all detailed in the Gitar documentation. The platform integrates natively with GitHub, GitLab, CircleCI, and Buildkite, so teams can add healing capabilities without changing their core workflow.

The table below highlights the automation capabilities that separate true healing engines from suggestion-only tools and helps teams evaluate which approach fits their needs.

| Capability | CodeRabbit/Greptile | Gitar |
| --- | --- | --- |
| Auto-apply fixes | No | Yes |
| CI failure analysis | No | Yes |
| Single-comment dashboard | No | Yes |
| Natural language rules | Yes | Yes |

The platform’s native integrations and healing features make it simple to see real impact quickly. Start your 14-day Team Plan trial to experience the difference between suggestions and actual fixes.

1. Enforce Small PR Size Limits for Better Reviews

The industry standard for code reviews is 200 to 400 lines of code per reviewer to prevent fatigue and missed errors. Add a CI status check, made required through GitHub branch protection rules, that flags PRs exceeding 400 lines. To keep developers from hitting this hard limit unexpectedly, configure the pipeline to warn them as they approach the threshold and suggest splitting the work into smaller, focused PRs. These size constraints reduce cognitive load and significantly improve defect detection rates.
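The size gate described above can be sketched as a small function that a CI job might call. This is a minimal illustration, not a GitHub or Gitar feature: the 400-line cap comes from the text, while the 300-line warning level and the names `check_pr_size` and `SizeVerdict` are assumptions chosen for the example.

```python
from dataclasses import dataclass

# The 400-line cap comes from the article; the 300-line warning level is an
# illustrative assumption, not an industry standard.
WARN_LINES = 300
MAX_LINES = 400


@dataclass(frozen=True)
class SizeVerdict:
    status: str   # "ok", "warn", or "fail"
    message: str


def check_pr_size(lines_added: int, lines_deleted: int) -> SizeVerdict:
    """Classify a PR by its total changed lines (added plus deleted)."""
    changed = lines_added + lines_deleted
    if changed > MAX_LINES:
        return SizeVerdict(
            "fail",
            f"{changed} lines changed exceeds the {MAX_LINES}-line limit; "
            "split this PR into smaller, focused changes.",
        )
    if changed >= WARN_LINES:
        return SizeVerdict(
            "warn",
            f"{changed} lines changed is approaching the {MAX_LINES}-line limit.",
        )
    return SizeVerdict("ok", f"{changed} lines changed.")
```

A CI step could exit non-zero only on `"fail"` and post the message as a warning otherwise, so the hard limit never arrives as a surprise.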

2. Build a Layered Review Pipeline That Matches Risk

An incremental and tiered code review strategy involves multiple distinct stages: Tier 1 fully automated with linting, unit tests, and vulnerability scanners in CI/CD; Tier 2 peer review for logic and readability; Tier 3 senior or security team review for vulnerabilities, performance, and architectural impact. Each tier should have specific SLAs, such as a few hours for peer review and a defined window for senior review, with clear escalation rules when reviews stall. This layered structure creates predictable flow while reserving expert attention for the highest-risk changes.
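One way to picture the tiering is a routing function that decides which stages a change must clear. The sketch below is an assumption-heavy illustration: the path patterns, stage names, and the `review_stages` function are hypothetical and would need to reflect your own repository layout.

```python
from fnmatch import fnmatchcase

# Hypothetical high-risk path patterns; adjust to your repository layout.
HIGH_RISK_PATTERNS = ("auth/*", "*/auth/*", "migrations/*", "*/security/*")


def review_stages(changed_files: list[str]) -> list[str]:
    """Return the review stages a change must pass, in order.

    Every change goes through automated checks (Tier 1) and peer review
    (Tier 2). Changes touching a high-risk path also need senior or
    security review (Tier 3).
    """
    stages = ["tier1-automated", "tier2-peer"]
    touches_high_risk = any(
        fnmatchcase(path, pattern)
        for path in changed_files
        for pattern in HIGH_RISK_PATTERNS
    )
    if touches_high_risk:
        stages.append("tier3-senior")
    return stages
```

Path matching is only one possible risk signal; teams may also want to route on labels, authorship, or diff size.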

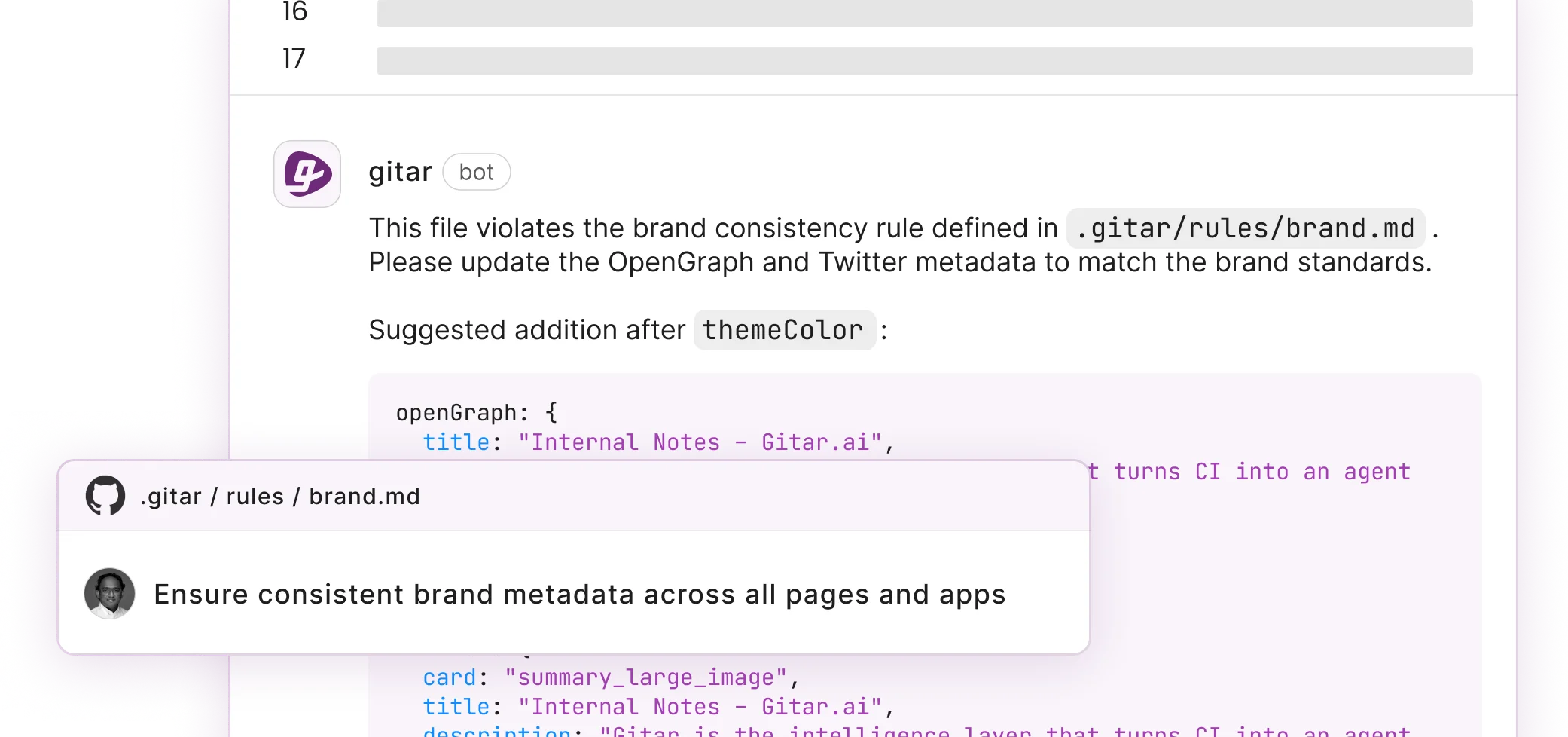

3. Turn Policies into Code with Natural Language Rules

Gitar’s repository rules let teams express complex workflows and policies as prompts, so the agent can reason about context, apply fixes, and automate workflows. Rules are written as natural language Markdown files in .gitar/rules and can trigger automated actions such as adding comments or labels on package upgrades. This approach eliminates complex YAML configurations while maintaining audit trails through Git version control. Teams gain consistent enforcement without sacrificing readability or flexibility.

4. Balance AI Automation with Human Oversight

High-velocity development teams determine code review scope based on risk, fast-tracking low-risk changes while applying deeper scrutiny to high-risk changes involving APIs, authentication, sensitive data, or AI-generated code. Reserve automated fixes for non-critical issues such as linting and formatting. Require human approval for architectural changes, security-sensitive modifications, and complex logic updates. This balance keeps velocity high while protecting critical paths.

5. Enable CI Auto-Healing to Cut Context Switching

Traditional CI failures create expensive context-switching cycles that pull developers away from feature work. Gitar’s healing engine eliminates this disruption by automatically analyzing CI failures, generating validated fixes, and committing them without human intervention. Because the system validates every fix against your full test suite before applying changes, developers can trust that red builds will become green ones without their involvement. Teams spend more time shipping features and less time chasing flaky or broken builds.

6. Consolidate Feedback with a Single-Comment Dashboard

Gitar reserves inline comments for the most critical or actionable lines of code, caps them at a threshold to prevent excess, and lets teams turn them off entirely while still getting value from the Dashboard. This approach eliminates notification spam while maintaining comprehensive review coverage. All findings, including CI analysis, review feedback, and rule evaluations, update in a single location that reviewers and authors can scan quickly.

7. Use SLA Checklists to Keep Reviews Moving

Set tier-specific SLAs for your layered pipeline, such as 4 hours for Tier 2 peer review and 24 hours for Tier 3 senior review. Back them with explicit checklists covering functionality verification, security assessment, performance impact, and test coverage adequacy. Time-boxed code review sessions of 60 to 90 minutes sustain focus and minimize the likelihood of overlooking errors. These practices keep work flowing while preserving review depth.
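The SLA and checklist ideas can be combined in a small helper that a bot or dashboard might run. This is a sketch under stated assumptions: the 4-hour and 24-hour windows and the four checklist items come from the text, while the function names and data shapes are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# SLA windows from the article: 4 hours for Tier 2, 24 hours for Tier 3.
SLA_BY_TIER = {2: timedelta(hours=4), 3: timedelta(hours=24)}

# Checklist items named in the article.
CHECKLIST = ("functionality", "security", "performance", "test coverage")


def sla_breached(tier: int, requested_at: datetime, now: datetime) -> bool:
    """Return True when a review has waited longer than its tier allows."""
    return now - requested_at > SLA_BY_TIER[tier]


def unchecked_items(completed: set[str]) -> list[str]:
    """Return checklist items the reviewer has not yet ticked off."""
    return [item for item in CHECKLIST if item not in completed]


requested = datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc)
print(sla_breached(2, requested, requested + timedelta(hours=5)))  # True
print(unchecked_items({"functionality", "security"}))
```

A breach would typically trigger the escalation rule for that tier rather than block the PR outright.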

8. Focus on Critical Issues Before Style Debates

Apiiro recommends prioritizing issues related to security, correctness, and scalability over style in AI-generated code review findings. Gitar’s Judge guardrail filters incoming messages: it collapses duplicate outputs, strips internal references, and drops replies that add nothing. Configure tools to fail builds only on critical issues to prevent alert fatigue while maintaining quality standards. Style and minor nits can remain suggestions instead of blockers.
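Failing builds only on critical issues amounts to a severity gate. The sketch below assumes a hypothetical four-level severity scale and a simple list of `(description, severity)` findings; neither reflects any particular tool's output format.

```python
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical scale for illustration; map your tool's levels onto it.
    STYLE = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4


def should_fail_build(
    findings: list[tuple[str, Severity]],
    threshold: Severity = Severity.CRITICAL,
) -> bool:
    """Fail the build only if some finding is at or above the threshold."""
    return any(severity >= threshold for _, severity in findings)


def suggestions_only(
    findings: list[tuple[str, Severity]],
    threshold: Severity = Severity.CRITICAL,
) -> list[str]:
    """Findings below the threshold stay as non-blocking suggestions."""
    return [text for text, severity in findings if severity < threshold]
```

Keeping the threshold as a parameter lets a team start strict on critical issues only and tighten later without rewriting the gate.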

9. Track Review Metrics with Clear Dashboards

Teams should track key performance indicators including review turnaround time, pull request cycle time, post-merge defects, and reviewer participation rates. Policy-as-code provides scalability by automating enforcement for distributed teams and large-scale pipelines, creating a single source of truth that scales with modern cloud-native architectures. Monitor ROI through reduced manual toil, faster delivery cycles, and a rising share of issues resolved automatically.
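As a rough illustration of how these indicators could be computed, the sketch below derives median turnaround, median cycle time, and the share of issues resolved automatically. The record fields (`opened_at`, `first_review_at`, `merged_at`, `issues_total`, `issues_auto_resolved`) are assumed names, not an export format from any specific tool.

```python
from datetime import datetime
from statistics import median


def hours_between(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in hours."""
    return (end - start).total_seconds() / 3600


def review_metrics(prs: list[dict]) -> dict[str, float]:
    """Summarize review health for a non-empty list of merged PR records."""
    if not prs:
        raise ValueError("need at least one merged PR")
    turnaround = [hours_between(pr["opened_at"], pr["first_review_at"]) for pr in prs]
    cycle = [hours_between(pr["opened_at"], pr["merged_at"]) for pr in prs]
    total_issues = sum(pr["issues_total"] for pr in prs)
    auto_resolved = sum(pr["issues_auto_resolved"] for pr in prs)
    return {
        "median_turnaround_hours": median(turnaround),
        "median_cycle_hours": median(cycle),
        # Guard against division by zero when no issues were raised.
        "auto_resolved_share": auto_resolved / total_issues if total_issues else 0.0,
    }
```

Medians are used here because a few long-running PRs can distort averages; that is a design choice, not a requirement.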

10. Set Trust Levels and Auto-Commit Policies Gradually

Gitar enabled configurable PR merge blocking based on code review verdict severity on January 29, 2026, with thresholds from Approved to Blocked in Code Review Settings. Start with suggestion mode for new implementations so teams can review proposed changes without risk. Gradually enable auto-commit for trusted fix types such as linting errors and dependency updates as confidence grows. This progressive rollout builds trust while still capturing the benefits of automation.
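The progressive rollout can be modeled as a series of phases, each widening the set of fix types trusted for auto-commit. This is a conceptual sketch only: the phase list, fix-type labels, and `fix_mode` function are hypothetical and do not correspond to Gitar's actual settings.

```python
# Hypothetical rollout phases. Each phase lists the fix types trusted for
# auto-commit; everything else stays in suggestion mode.
ROLLOUT_PHASES: tuple[frozenset[str], ...] = (
    frozenset(),                               # phase 0: suggestions only
    frozenset({"lint"}),                       # phase 1: trust lint fixes
    frozenset({"lint", "dependency-update"}),  # phase 2: add dependency updates
)


def fix_mode(fix_type: str, phase: int) -> str:
    """Return "auto-commit" for trusted fix types, otherwise "suggest"."""
    trusted = ROLLOUT_PHASES[phase]
    return "auto-commit" if fix_type in trusted else "suggest"


for phase in range(len(ROLLOUT_PHASES)):
    print(phase, fix_mode("lint", phase), fix_mode("logic-change", phase))
```

Note that higher-risk fix types never appear in any phase here, which keeps human approval in place for them regardless of how far the rollout has progressed.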

Common Pitfalls & How Gitar Avoids Them

Teams frequently struggle with noisy suggestion tools that flood PRs with minor warnings. Gitar’s Judge follows a fail-open principle, allowing comments to pass unfiltered if it errors or times out, while maintaining quality through intelligent filtering. Unlike competitors that provide unvalidated suggestions, Gitar’s healing engine validates every fix against CI before applying changes, which guarantees green builds and removes the manual verification cycle.
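The fail-open principle mentioned above is a general pattern: if the filter itself breaks, the comment is delivered unfiltered instead of being lost. The sketch below illustrates that pattern with Python's standard library. It is not Gitar's implementation, and the `judge` callable and `filter_fail_open` name are assumptions for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional


def filter_fail_open(
    comment: str,
    judge: Callable[[str], Optional[str]],
    timeout_s: float = 2.0,
) -> Optional[str]:
    """Run a comment through a judge, failing open on error or timeout.

    The judge returns a (possibly rewritten) comment, or None to drop it.
    If the judge raises or exceeds the timeout, the original comment is
    returned unfiltered.
    """
    pool = ThreadPoolExecutor(max_workers=1)
    try:
        return pool.submit(judge, comment).result(timeout=timeout_s)
    except Exception:
        # Covers both judge errors and the timeout raised by result().
        return comment
    finally:
        # Do not wait for a slow judge; let its thread finish in the background.
        pool.shutdown(wait=False)
```

Failing open trades a little extra noise during outages for the assurance that a real finding is never silently swallowed by the filter.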

Frequently Asked Questions

How long should a code review take?

Industry standards recommend 4-6 hours for comprehensive reviews with proper automation in place. This timeframe allows thorough evaluation of logic, security, and architectural concerns while maintaining development velocity. Teams using layered automation can achieve faster turnaround by delegating routine checks to CI pipelines.

What are the best code review guardrails for GitHub?

Essential GitHub guardrails include branch protection rules requiring passing CI checks, pull request size limits under 400 lines, CODEOWNERS files for automatic reviewer assignment, and required approvals from domain experts. Policy-as-code implementations using natural language rules provide additional flexibility for custom workflows.

How should teams resolve code review disagreements?

Teams should establish clear escalation paths with designated decision-makers such as tech leads or architecture review boards. Document resolution rationale directly in PR comments to maintain context. Keep discussions focused on documented principles, test results, and established coding standards rather than personal preferences.

What is policy as code for reviews?

Policy as code transforms manual review guidelines into automated, version-controlled rules stored alongside your codebase. Teams define policies using natural language descriptions in repository files, enabling consistent enforcement across all pull requests while maintaining audit trails through Git history.

How can teams measure code review automation success?

Key metrics include reduced review cycle time, decreased post-merge defects, improved developer satisfaction scores, and quantified time savings from automated fixes. Track the percentage of issues resolved automatically versus those requiring human intervention to refine your automation strategy.

Conclusion: Scale Code Reviews Safely with Healing Automation

The 2026 AI PR explosion demands sophisticated automation guardrails and policies to maintain development velocity without compromising quality. This 10-practice playbook offers a practical framework for scaling code reviews safely while using healing engines that actually fix issues instead of only suggesting improvements. Teams that adopt these practices report significant productivity gains and reduced manual toil. Install Gitar to automatically heal broken builds, raise software quality, and turn your review process into a competitive advantage.