Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI code review delivers speed, consistency, and scale for mechanical tasks like linting and security scans, cutting review time by 62%.

- Human reviewers add essential context, architectural judgment, and mentoring for complex business logic and long-term system health.

- Hybrid review wins in practice: AI handles 70–80% of routine PRs, humans focus on high-impact work, and teams cut cycle times by 24%.

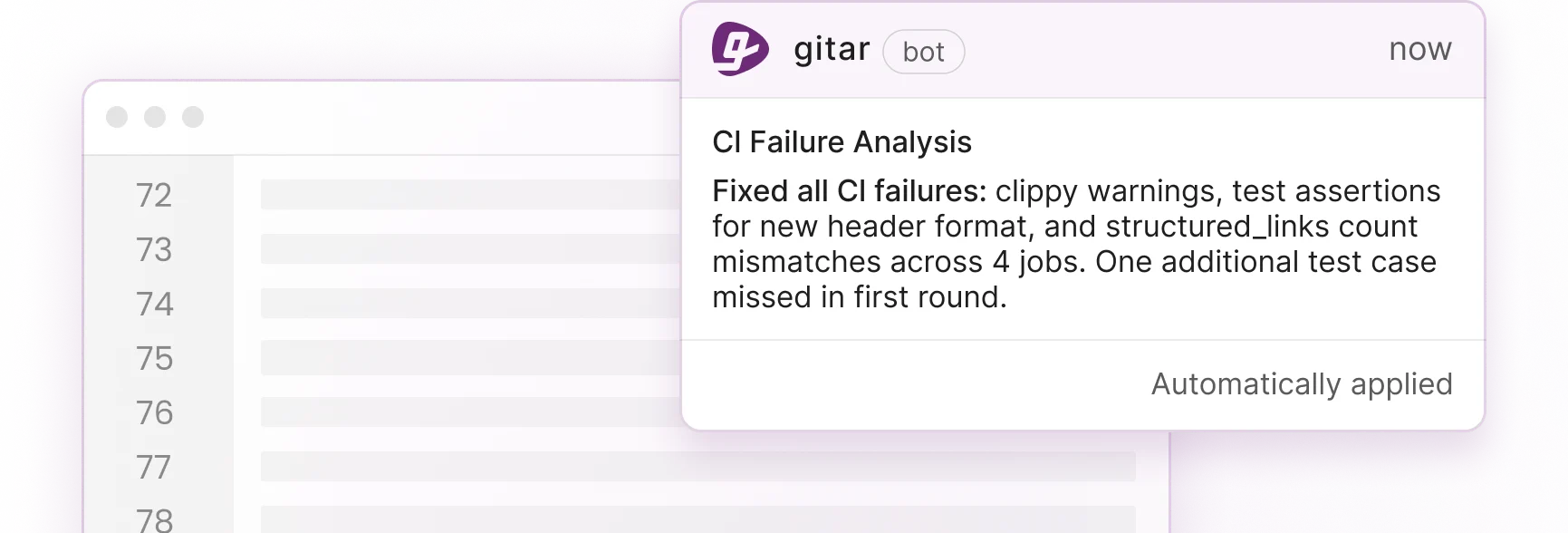

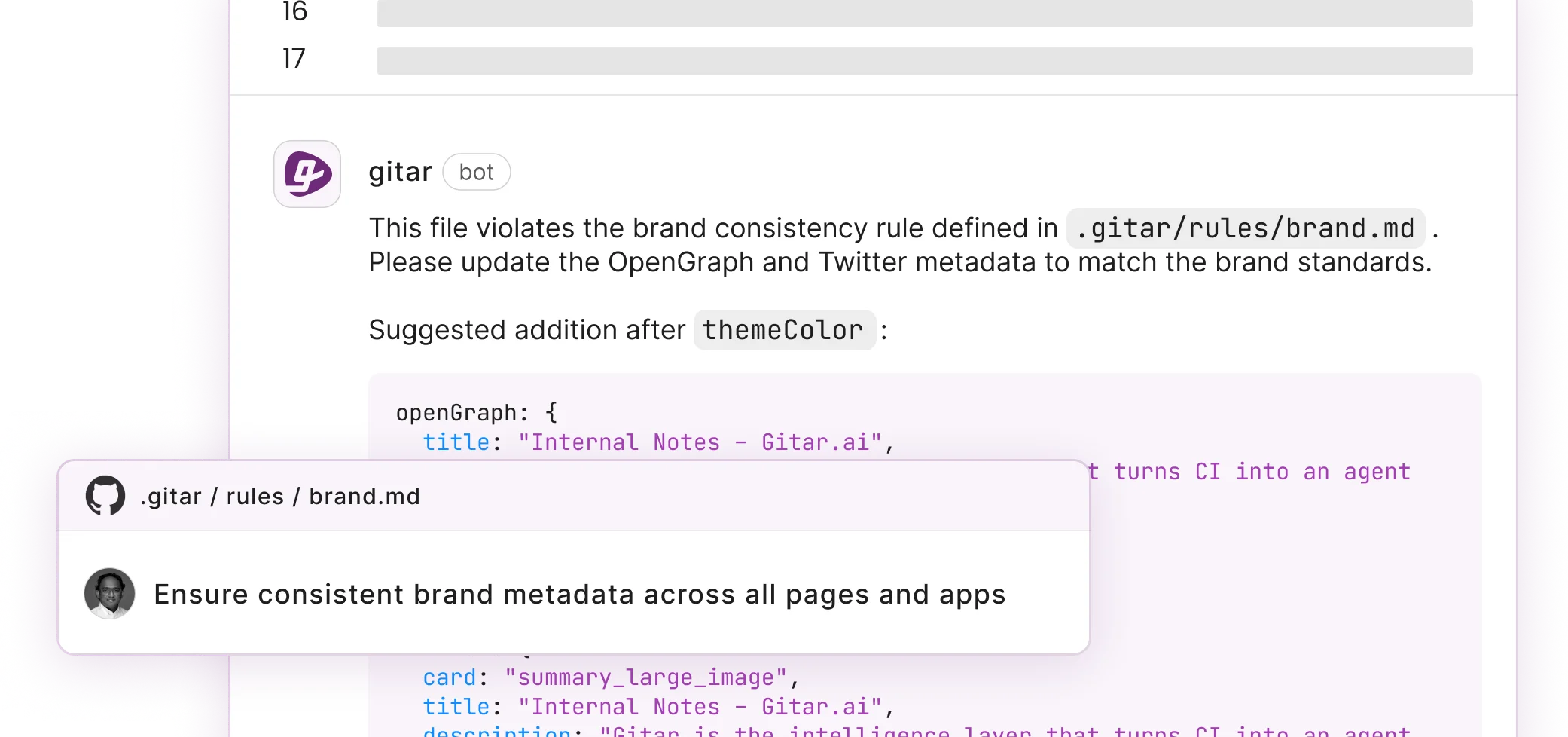

- Gitar’s Healing Engine auto-fixes issues with CI validation and outperforms suggestion-only tools like CodeRabbit, which only leave comments.

- For a 20-developer engineering group, Gitar estimates annual savings of around $750K, and teams can evaluate it with a 14-day Team Plan trial.

AI Code Review Automation in Practice: Where It Shines and Fails

AI code review automation excels at repetitive, mechanical checks and struggles with deeper reasoning. The strongest results appear in routine tasks such as syntax validation, security scanning, and style enforcement. AI-assisted systems reduce review time by 62% for these checks. AI tools run continuously without fatigue, process thousands of lines in seconds, and apply the same rules across every repository.

Significant gaps remain for higher-level work. Independent benchmarks show AI tools miss architectural problems and cross-file dependencies. At the same time, AI-generated code contains 1.7x more issues than human-written code. When AI reviewers inspect AI-generated code using similar models, confirmation bias appears and logic or business-context errors slip through.

| Capability | AI Performance | Best Use Cases |
|---|---|---|
| Speed | 10x faster than humans | Lint errors, formatting |
| Consistency | Perfect adherence to rules | Style guides, security patterns |
| Scale | Unlimited concurrent reviews | High-volume PR processing |
| Context Understanding | Limited to diff analysis | Surface-level issues only |
| Business Logic | Poor comprehension | Not recommended |

AI code review tools fit best in automated CI pipelines, security vulnerability detection, and enforcement of coding standards. They fall short on architectural decisions, business logic validation, and mentoring for junior developers.

Human Code Reviewers: Where People Still Outperform AI

Human reviewers provide depth that AI cannot match. They understand business context, architectural tradeoffs, and team norms. Google’s analysis of nine million code reviews found that knowledge sharing and deeper comprehension create most of the ROI, not defect detection alone.

People excel at nuanced judgment for complex logic, thoughtful mentoring through comments, and alignment with product requirements. They grasp the reason behind a change and judge whether a solution fits the long-term system design.

Human limits still create bottlenecks in AI-accelerated development. Developers lose 5.8 hours each week to inefficient workflows, and PR volume has surged by 91%. Timezone gaps, reviewer fatigue, and cognitive overload slow teams down.

Anthropic’s 2026 research speaks directly to concerns that AI will displace QA roles. Humans remain essential for strategic decisions and business logic validation. AI focuses on routine verification while people handle high-impact architectural and product choices.

Seeing these strengths and limits side-by-side clarifies where each approach delivers the most value.

AI vs Human Code Review: Key Differences (2026 Table)

The following comparison highlights a clear pattern. AI dominates speed, availability, and consistency, while humans lead on context, architecture, and mentoring. This complementary split forms the basis of an effective hybrid review strategy.

| Dimension | AI Code Review | Human Code Review | Winner |
|---|---|---|---|
| Speed | Seconds per PR | Hours to days | AI (10x advantage) |
| Availability | 24/7 continuous | Limited by timezones | AI |
| Consistency | Perfect rule adherence | Variable based on reviewer | AI |
| Context Understanding | Surface-level analysis | Deep business logic | Human |
| Architectural Insight | Pattern matching only | System-wide implications | Human |
| Mentoring Value | Generic suggestions | Personalized guidance | Human |
| Cost at Scale | Fixed platform cost | Linear with team size | AI |
| False Positives | 10–30% depending on tool | Near zero | Human |

The data shows complementary strengths. AI handles mechanical work that benefits from speed and consistency, while humans focus on judgment-heavy tasks that require business context and architectural thinking.

Hybrid Code Review Blueprint for 2026

A hybrid strategy that blends AI automation with human oversight delivers both speed and quality. This three-phase blueprint gives teams a clear rollout path.

Phase 1: AI Automates Mechanical Reviews – Use AI for lint errors, security scanning, formatting issues, and CI failures. Install Gitar to automatically fix broken builds instead of only flagging them. This shift removes 70–80% of routine review work. For setup details, follow the integration guides in Gitar’s documentation.

Phase 2: Intelligent Routing – Send complex PRs that touch architecture, business rules, or cross-team dependencies to human reviewers. Keep simple bug fixes and small feature changes in the automated path.
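A routing rule like the one in Phase 2 can be expressed as a small function. The sketch below is illustrative only: the path prefixes, labels, 200-line cutoff, and the assumption that each top-level directory belongs to one team are all hypothetical placeholders a team would replace with its own ownership data.

```python
from dataclasses import dataclass, field

# Hypothetical path prefixes for code that encodes architecture or business
# rules; each team would maintain its own list.
HUMAN_REVIEW_PREFIXES = ("src/billing/", "src/auth/", "schema/", "api/")
HUMAN_REVIEW_LABELS = {"architecture", "business-logic"}
MAX_AUTOMATED_LINES = 200  # assumed cutoff for "small" changes


@dataclass
class PullRequest:
    changed_files: list[str]
    lines_changed: int
    labels: set[str] = field(default_factory=set)


def route(pr: PullRequest) -> str:
    """Return "human" or "automated" for a pull request."""
    # Authors can opt into human review explicitly.
    if pr.labels & HUMAN_REVIEW_LABELS:
        return "human"
    # Any file under a sensitive prefix needs a human reviewer.
    if any(path.startswith(HUMAN_REVIEW_PREFIXES) for path in pr.changed_files):
        return "human"
    # Assumption: each top-level directory belongs to one team, so touching
    # several of them signals a cross-team dependency.
    top_level_dirs = {path.split("/", 1)[0] for path in pr.changed_files}
    if len(top_level_dirs) > 1:
        return "human"
    # Large diffs are harder to verify mechanically.
    if pr.lines_changed > MAX_AUTOMATED_LINES:
        return "human"
    return "automated"
```

For example, a 12-line documentation fix stays on the automated path, while any change under `src/billing/` goes to a person. Checking explicit signals (labels, sensitive paths) before size keeps the rule easy to audit.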

Phase 3: Continuous ROI Measurement – Track review cycle time, defect escape rates, and developer satisfaction. Jellyfish reports $7,500 in annual savings per developer when teams implement AI review effectively.
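Two of the Phase 3 metrics reduce to simple arithmetic over PR and incident data. This is a minimal sketch that assumes you can export PR open and merge timestamps, and it uses one common definition of defect escape rate (defects found in production divided by all known defects); your tracking tool may define it differently.

```python
from datetime import datetime, timedelta
from statistics import median


def median_cycle_time(prs: list[tuple[datetime, datetime]]) -> timedelta:
    """Median time from PR opened to PR merged; each item is (opened, merged)."""
    durations = [merged - opened for opened, merged in prs]
    return median(durations)


def defect_escape_rate(caught_before_release: int, found_in_production: int) -> float:
    """Share of all known defects that reached production."""
    total = caught_before_release + found_in_production
    return found_in_production / total if total else 0.0
```

Computing both numbers for the same period before and after rollout gives a baseline comparison; the median is used for cycle time because a few long-lived PRs can skew an average.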

This hybrid model reduces notification noise from chatty AI tools, cuts context switching, and closes the trust gap between suggestions and validated fixes. Organizations with strong AI adoption achieved 24% faster PR cycle times while keeping quality steady.

Why Gitar Outperforms Other AI Code Review Tools

Gitar stands out through its Healing Engine, which fixes code and validates changes in CI before committing. Competing tools such as CodeRabbit and Greptile charge $15–30 per developer each month for comments only. Gitar instead delivers working fixes that pass the pipeline. To see the details, review how the Healing Engine validates and applies changes.
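The difference between suggesting a fix and validating it can be shown with a generic fix-then-validate loop. This is not Gitar's implementation; `propose_fix` and `run_ci` are hypothetical hooks standing in for a model call and a real pipeline run, and the three-attempt limit is an assumption.

```python
from typing import Callable, Optional

# Hypothetical hooks: in a real system these would call a model,
# apply a patch to a branch, and run the CI pipeline.
ProposeFix = Callable[[str, int], str]  # (failure log, attempt number) -> patch
RunCI = Callable[[str], bool]           # patch -> did the pipeline pass?


def heal(failure_log: str, propose_fix: ProposeFix, run_ci: RunCI,
         max_attempts: int = 3) -> Optional[str]:
    """Return a patch only after CI has passed with it; otherwise None."""
    for attempt in range(max_attempts):
        patch = propose_fix(failure_log, attempt)
        if run_ci(patch):
            return patch  # validated: the pipeline passed with this patch applied
    return None  # no candidate passed CI, so hand the failure to a human
```

The key design point is that nothing is returned for commit unless `run_ci` succeeded, so a suggestion that would break the build never lands.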

| Capability | Gitar | CodeRabbit | Greptile |
|---|---|---|---|
| Auto-fix Implementation | Yes, with CI validation (Trial/Team) | No, suggestions only | No, suggestions only |
| CI Integration | Full pipeline analysis | Limited | Basic |
| Comment Management | Single updating dashboard | Multiple inline comments | Scattered notifications |
| Trial Access | 14-day full Team Plan | Limited free tier | Basic trial only |

Real-world usage backs this up. Gitar has caught high-severity security issues in Copilot-generated code that Copilot missed. Teams also save time through “unrelated PR failure detection,” where Gitar identifies infrastructure or configuration problems instead of blaming the latest commit.
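The idea behind unrelated failure detection can be approximated with a simple heuristic. This sketch is an illustration, not Gitar's method: it assumes you already know which tests fail on the base branch, and the infrastructure error patterns are example signatures chosen for the sketch.

```python
import re

# Assumed examples of infrastructure-level error signatures.
INFRA_PATTERNS = [
    re.compile(r"connection (refused|reset)", re.IGNORECASE),
    re.compile(r"no space left on device", re.IGNORECASE),
    re.compile(r"timed? ?out", re.IGNORECASE),
]


def classify_failure(test_name: str, log: str, failing_on_base: set[str]) -> str:
    """Label a CI failure as "pre-existing", "infrastructure", or "pr-related"."""
    # The same test already fails on the base branch, so this PR did not cause it.
    if test_name in failing_on_base:
        return "pre-existing"
    # Error text that points at the runner or network rather than the code.
    if any(pattern.search(log) for pattern in INFRA_PATTERNS):
        return "infrastructure"
    return "pr-related"
```

Only failures labeled `pr-related` would be attributed to the latest commit; the other two labels point the team at the base branch or the CI environment instead.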

Gitar supports GitHub, GitLab, CircleCI, and Buildkite, and it uses natural language rules instead of complex YAML. For rollout patterns and platform coverage, explore the deployment and configuration guides.

Proven ROI for 20-Developer Teams

Hybrid code review automation delivers a strong financial case for mid-sized teams.

| Metric | Before Automation | After Gitar Implementation | Annual Savings |
|---|---|---|---|
| Time on CI/Review Issues | 1 hour/day/developer | ~15 min/day/developer | $750K for 20-dev team |
| Context Switching | Multiple interruptions daily | Near-zero disruption | Improved focus time |
| Failed Deployment Rate | 15–20% due to missed issues | 5–8% with automated validation | Reduced incident costs |
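The table does not state the hourly cost behind the $750K figure. The sketch below assumes 250 working days per year and shows how to reproduce the estimate with your own loaded hourly cost; under that assumption, the table's numbers imply roughly $200 per developer-hour.

```python
def annual_hours_saved(devs: int, minutes_before: float, minutes_after: float,
                       working_days: int = 250) -> float:
    """Developer-hours saved per year; 250 working days is an assumption."""
    return devs * (minutes_before - minutes_after) / 60 * working_days


def annual_savings(devs: int, minutes_before: float, minutes_after: float,
                   hourly_cost: float, working_days: int = 250) -> float:
    """Dollar value of the saved hours at a given fully loaded hourly cost."""
    return annual_hours_saved(devs, minutes_before, minutes_after, working_days) * hourly_cost
```

For 20 developers going from 60 to 15 minutes a day, `annual_hours_saved` gives 3,750 hours, so $750K corresponds to $200/hour; at $100/hour the same time savings is worth $375K.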

Concerns about automated commits are handled through configurable trust levels and gradual rollout. Teams begin in suggestion mode, build confidence, and then enable auto-commit for specific failure categories.
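A per-category trust policy can be modeled as a lookup with a safe default. This is a hypothetical sketch, not Gitar's configuration format: the category names are assumptions, and the policy shown is for a team that has already promoted formatting and lint fixes out of suggestion mode.

```python
from enum import Enum


class Mode(Enum):
    SUGGEST = "suggest"   # post the fix for a developer to approve
    AUTO_COMMIT = "auto"  # commit the CI-validated fix directly


# Hypothetical policy after formatting and lint fixes earned trust;
# everything else still requires approval.
POLICY = {
    "formatting": Mode.AUTO_COMMIT,
    "lint": Mode.AUTO_COMMIT,
    "test_failure": Mode.SUGGEST,
    "dependency_update": Mode.SUGGEST,
    "security_patch": Mode.SUGGEST,
}


def mode_for(category: str, ci_passed: bool) -> Mode:
    """Decide how a fix is delivered; unvalidated or unknown fixes never auto-commit."""
    if not ci_passed:
        return Mode.SUGGEST
    return POLICY.get(category, Mode.SUGGEST)
```

Defaulting to `SUGGEST` means a new or misspelled category can only ever fail safe, and the CI check gates auto-commit even for trusted categories.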

Step-by-Step Implementation Playbook

Successful hybrid adoption follows a clear four-phase rollout.

Phase 1: Installation and Setup – Install the Gitar GitHub App or GitLab integration. Start your 14-day Team Plan trial to access auto-fix features and custom rules. For platform-specific steps, use the installation walkthrough.

Phase 2: Trust Building – Run in suggestion mode so developers approve fixes before they land. Track Gitar’s performance on lint errors, test failures, and build breaks while the team reviews fix quality.

Phase 3: Selective Automation – Turn on auto-commit for trusted fix types such as formatting and simple lint issues. Expand to security patches and dependency updates as confidence grows.

Phase 4: Full Platform Integration – Connect Jira and Slack, define natural language workflow rules, and use analytics to refine automation policies.

This staged approach keeps humans in control while removing mechanical toil that slows delivery.
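Moving from Phase 2 to Phase 3 works best when promotion is based on the suggestion-mode track record rather than intuition. The sketch below assumes you record, per fix category, how many fixes were proposed and how many developers accepted unchanged; the 50-sample minimum and 95% acceptance threshold are illustrative values, not recommendations from the source.

```python
from dataclasses import dataclass


@dataclass
class FixStats:
    proposed: int
    accepted: int  # approved by a developer without changes


def promotion_candidates(stats: dict[str, FixStats], min_samples: int = 50,
                         min_acceptance: float = 0.95) -> list[str]:
    """Fix categories whose suggestion-mode record may justify auto-commit."""
    candidates = []
    for category, s in sorted(stats.items()):
        # Require enough history before trusting the acceptance rate.
        if s.proposed >= min_samples and s.accepted / s.proposed >= min_acceptance:
            candidates.append(category)
    return candidates
```

With 119 of 120 formatting fixes accepted, 70 of 80 lint fixes accepted, and only 10 security patches proposed, only formatting clears both thresholds; the sample minimum keeps a short perfect streak from promoting a high-risk category.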

Conclusion: Hybrid Review with Gitar Matches 2026 Reality

Modern teams need hybrid code review that blends AI speed with human insight. Pure AI review misses critical context, and human-only review cannot keep up with AI-driven code volume. Gitar’s Healing Engine moves beyond suggestion-only tools by delivering validated fixes that keep builds green. Start your 14-day Team Plan trial and ship higher-quality software faster.

FAQ

How do AI code review tools handle false positives compared to human reviewers?

AI code review tools often generate 10–30% false positives, depending on the platform and configuration. Human reviewers usually produce near-zero false positives because they understand context. Gitar reduces this issue by validating fixes in CI, so changes run against the real build environment before they land (Trial and Team plans). Teams get the best results when AI handles mechanical tasks such as formatting or lint errors and humans review complex logic. Modern AI platforms also learn from team feedback and steadily lower false positive rates.

What is the difference between suggestion-only AI tools and auto-fix platforms like Gitar?

Suggestion-only tools such as CodeRabbit and Greptile analyze code and leave comments about potential issues. Developers then implement fixes manually and hope they pass CI. Auto-fix platforms like Gitar generate code changes, validate them in CI pipelines, and commit working solutions automatically. This approach removes the loop of reading comments, writing patches, pushing commits, and waiting for CI. Instead of paying $15–30 per developer for comments that still require manual work, teams get automated resolution of mechanical issues while humans retain control over strategic decisions.

How should engineering teams measure ROI from hybrid AI-human code review?

Teams should measure ROI through time savings, quality improvements, and developer satisfaction. Track review cycle time, CI failure resolution speed, and hours saved from automated fixes. Monitor defect escape rates, security vulnerability detection, and post-deployment incidents to gauge quality. Use surveys to capture reduced context switching and frustration with repetitive work. Financial models typically compare about $3,000 in annual tool cost per developer against roughly $7,500 in time savings. Keep an eye on the review time ratio, which compares time spent reviewing AI fixes to time spent writing code from scratch, and aim to keep it below 1.5x.
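The financial comparison and the review time ratio in this answer are straightforward to compute. This minimal sketch plugs in the per-developer figures quoted above; the team size and review minutes are example inputs.

```python
def roi_summary(tool_cost_per_dev: float, savings_per_dev: float, devs: int) -> dict:
    """Net annual value for the team and the return multiple on tool spend."""
    return {
        "net_annual_value": (savings_per_dev - tool_cost_per_dev) * devs,
        "roi_multiple": savings_per_dev / tool_cost_per_dev,
    }


def review_time_ratio(minutes_reviewing_ai_fix: float,
                      minutes_writing_from_scratch: float) -> float:
    """Time spent reviewing an AI fix relative to writing the change by hand."""
    return minutes_reviewing_ai_fix / minutes_writing_from_scratch
```

With $3,000 in tool cost and $7,500 in savings per developer, a 20-developer team nets $90,000 a year at a 2.5x return. A fix that takes 30 minutes to review versus 40 minutes to write gives a ratio of 0.75, well under the 1.5x target.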

Can AI code review tools integrate with existing CI/CD pipelines and development workflows?

Modern AI code review platforms integrate across the full development toolchain. Gitar connects with GitHub, GitLab, CircleCI, Buildkite, Jira, Slack, and other common tools. This integration gives AI access to build logs, test results, and dependency data that it needs for accurate diagnosis and fix validation. Natural language rules replace complex YAML, so teams can define automation policies without deep DevOps skills. Cross-platform support prevents lock-in to a single vendor. For supported platforms and configuration options, review the integration and workflow guides.

What security and compliance considerations apply to AI-powered code review automation?

Security-focused organizations need AI tools that protect code and meet compliance standards. Enterprise-grade platforms offer on-premises deployment so AI agents run inside existing CI infrastructure and code stays within company boundaries. SOC 2 Type II and ISO 27001 certifications help regulated industries validate compliance. Key requirements include data residency controls, detailed audit logs for automated changes, and configurable approval workflows for sensitive repositories. Teams should confirm that AI tools can access secrets and configuration files required for accurate CI analysis while still respecting security boundaries. Starting in suggestion-only mode allows gradual trust building before enabling automated commits.
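Detailed audit logs for automated changes usually come down to one structured record per change. The sketch below is a hypothetical schema, not a format any particular platform emits: it captures which pipeline run validated the change and who approved it, written as JSON lines for an append-only log.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AutomatedChangeAudit:
    """One record per automated change; all field names are illustrative."""
    repository: str
    commit_sha: str
    fix_category: str
    mode: str                   # "suggest" or "auto"
    ci_run_id: str              # the pipeline run that validated the change
    approved_by: Optional[str]  # None when the change was auto-committed
    timestamp: str = field(default_factory=_utc_now)


def to_log_line(entry: AutomatedChangeAudit) -> str:
    """Serialize a record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(entry), sort_keys=True)
```

Recording `approved_by` as `None` for auto-commits makes it easy for auditors to filter changes that landed without a human approval, which matters most for sensitive repositories.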