Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways

- AI code review tools flood developers with notifications, increasing PR review time by 91% even as coding gets 3-5x faster.

- DIY options like GitHub API scripts and LLM summarization partially consolidate comments but lack CI validation and automatic fixes.

- Gitar gathers all automated feedback into a single updating dashboard comment, which removes noisy inline suggestion clutter.

- Gitar’s healing engine fixes CI failures, validates changes, and connects with Jira, Slack, and Linear, cutting review time by roughly 50–60%.

- A 20-developer team can recover about $750K in annual productivity; start a 14-day Gitar Team Plan trial to clear PR bottlenecks quickly.

The Problem: AI Code Review Noise Is Killing Your Velocity

The AI coding surge created a new bottleneck in software delivery. Eighty-four percent of developers now use AI tools that write 41% of all code, which drives a huge spike in pull requests. GitHub reports 82 million pushes per month and a 91% increase in PR review time.

Teams using GitHub Copilot and Cursor code 3-5x faster, yet sprint velocities stay flat. Comment spam from multiple automated review tools causes the slowdown. CodeRabbit, Greptile, and similar platforms scatter dozens of inline suggestions across each diff. Every push triggers a new wave of notifications. AI-coauthored PRs have 1.7x more issues than human PRs, which multiplies the noise.

For a 20-developer team, this pattern creates about $1 million in annual productivity loss from context switching and review delays. Tools built to speed development now bury teams in unactionable feedback.

Before jumping to solutions, you need to understand which consolidation approach fits your specific situation and toolchain.

Assess Your Setup: Decision Tree for Comment Consolidation

Start by mapping your current pain points. Teams facing notification fatigue from several AI review tools need aggregation as soon as possible. Teams with simpler pipelines may get short-term relief from DIY GitHub API scripts, while complex CI environments usually require a managed platform.

Consider your team size, tool stack, and tolerance for manual maintenance. These factors determine whether you can sustain a DIY approach or should move to a platform. Given the higher issue rate from AI-generated code mentioned earlier, consolidation becomes critical for maintaining review quality without overwhelming developers.
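One way to read the guidance above is as a small decision function. This is only an illustrative sketch: the function name, its three boolean inputs, and the returned labels are assumptions introduced here to make the tradeoffs explicit, not part of any tool.

```python
def recommend_consolidation(multiple_ai_reviewers, complex_ci, can_maintain_scripts):
    """Map the assessment questions to a suggested approach.

    All three inputs are hypothetical yes/no answers a team gives about itself.
    Returns (approach, urgent), where `urgent` reflects notification fatigue
    from several AI review tools.
    """
    urgent = bool(multiple_ai_reviewers)
    # Complex CI environments usually call for a managed platform,
    # as do teams that cannot absorb ongoing script maintenance.
    if complex_ci or not can_maintain_scripts:
        return ("managed platform", urgent)
    # Simpler pipelines may get short-term relief from DIY scripts.
    return ("DIY GitHub API scripts", urgent)


print(recommend_consolidation(True, False, True))
```

A team with several AI reviewers, a simple pipeline, and capacity to maintain scripts would land on the DIY option here, flagged as urgent.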

Step-by-Step DIY Methods and Their Limits

Here are five DIY approaches that consolidate automated code review comments, along with their tradeoffs.

1. GitHub API Python Aggregation

    import requests

    def aggregate_pr_comments(repo, pr_number, token):
        headers = {'Authorization': f'token {token}'}
        # Fetch review comments (first page only; large PRs need pagination)
        comments_url = f'https://api.github.com/repos/{repo}/pulls/{pr_number}/comments'
        response = requests.get(comments_url, headers=headers)
        response.raise_for_status()
        comments = response.json()
        # Group comment bodies by file path
        grouped = {}
        for comment in comments:
            file_path = comment['path']
            if file_path not in grouped:
                grouped[file_path] = []
            grouped[file_path].append(comment['body'])
        return grouped

2. LLM-Powered Comment Summarization

Use ChatGPT or Claude to process grouped comments with prompts such as: “Summarize these code review comments into actionable categories: security, performance, style, bugs.”
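The prompt itself can be assembled from the grouped comments before it is sent to whichever model you use. The sketch below assumes the input is a dictionary mapping file paths to lists of comment bodies, as produced by the aggregation script in method 1. The function name `build_summary_prompt` is hypothetical, and the actual model call is left out because it depends on your provider.

```python
def build_summary_prompt(grouped):
    """Render comments grouped by file path into one summarization prompt."""
    instruction = (
        "Summarize these code review comments into actionable categories: "
        "security, performance, style, bugs."
    )
    lines = [instruction, ""]
    for file_path in sorted(grouped):
        lines.append(f"File: {file_path}")
        for body in grouped[file_path]:
            # Collapse whitespace so a multi-line comment stays on one bullet
            lines.append(f"- {' '.join(body.split())}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


example = {"auth.py": ["Validate the token\nbefore use"], "api.py": ["Rename x"]}
print(build_summary_prompt(example))
```

Sorting by file path keeps the prompt stable between runs, which makes summaries easier to compare across pushes.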

3. GitHub Actions YAML Workflow

    name: Consolidate Review Comments
    on:
      pull_request_review:
        types: [submitted]
    jobs:
      consolidate:
        runs-on: ubuntu-latest
        steps:
          - name: Aggregate Comments
            uses: actions/github-script@v6
            with:
              script: |
                const comments = await github.rest.pulls.listReviewComments({
                  owner: context.repo.owner,
                  repo: context.repo.repo,
                  pull_number: context.issue.number
                })
                // Process and post summary
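The "process and post summary" step is where a DIY workflow can avoid adding yet another comment on every run. A common pattern is to tag the summary with a hidden HTML marker, then update the tagged comment if it exists and create it otherwise. The sketch below shows only that decision logic in Python. The marker string and function name are assumptions chosen for illustration; the comment list is assumed to have the shape returned by GitHub's `GET /repos/{owner}/{repo}/issues/{issue_number}/comments` endpoint.

```python
MARKER = "<!-- review-summary -->"  # hypothetical hidden marker for our comment


def plan_summary_upsert(existing_comments, summary_markdown, marker=MARKER):
    """Decide whether to update an existing summary comment or create one.

    existing_comments: list of dicts with "id" and "body" keys.
    Returns (action, comment_id, body), where action is "update" or "create".
    """
    body = f"{marker}\n{summary_markdown}"
    for comment in existing_comments:
        # A comment body may be None, so fall back to an empty string
        if marker in (comment.get("body") or ""):
            return ("update", comment["id"], body)
    return ("create", None, body)


action, comment_id, body = plan_summary_upsert(
    [{"id": 7, "body": MARKER + "\nold summary"}], "2 style issues, 1 bug"
)
print(action, comment_id)
```

An "update" result maps to `PATCH /repos/{owner}/{repo}/issues/comments/{comment_id}`, and a "create" result maps to `POST /repos/{owner}/{repo}/issues/{issue_number}/comments`.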

4. Reddit-Style Comment Filtering

Create browser extensions or userscripts that collapse repetitive suggestions and highlight unique feedback, which reduces visual clutter in the PR view.

5. Basic ML Comment Clustering

Apply similarity algorithms to group related suggestions and remove duplicates across different tools, so reviewers see fewer repeated comments.
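As a rough illustration of grouping near-duplicate suggestions, the sketch below uses plain string similarity from Python's standard library rather than a trained model. The 0.8 threshold and the greedy strategy of comparing each comment to a cluster's first member are assumptions, and a real setup might swap in embeddings or another similarity measure.

```python
from difflib import SequenceMatcher


def cluster_comments(comments, threshold=0.8):
    """Greedily group comments whose text is similar to a cluster's first member."""
    clusters = []
    for text in comments:
        # Normalize case and whitespace before comparing
        normalized = " ".join(text.lower().split())
        for cluster in clusters:
            ratio = SequenceMatcher(None, normalized, cluster["key"]).ratio()
            if ratio >= threshold:
                cluster["members"].append(text)
                break
        else:
            # No existing cluster was close enough, so start a new one
            clusters.append({"key": normalized, "members": [text]})
    return [cluster["members"] for cluster in clusters]


groups = cluster_comments([
    "Consider using a constant here.",
    "consider using a constant here",
    "Missing null check on user input.",
])
print(len(groups))
```

Reviewers would then see one representative per group, with the count of merged duplicates shown alongside it.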

Limitations of DIY Approaches

These approaches require constant maintenance, lack deep CI integration, and provide no guarantee that suggested fixes actually work. See the Gitar documentation for examples of validated automation patterns that avoid these pitfalls.

Why Teams Move to Platforms

Manual consolidation scripts eventually turn into technical debt. Teams outgrow ad hoc solutions and need platforms that aggregate feedback, validate fixes, and apply changes automatically.

Move beyond manual scripts with Gitar. Install in about 30 seconds and let the healing engine handle consolidation and fixes.

The Solution: Gitar’s Single Updating Comment and Healing Engine

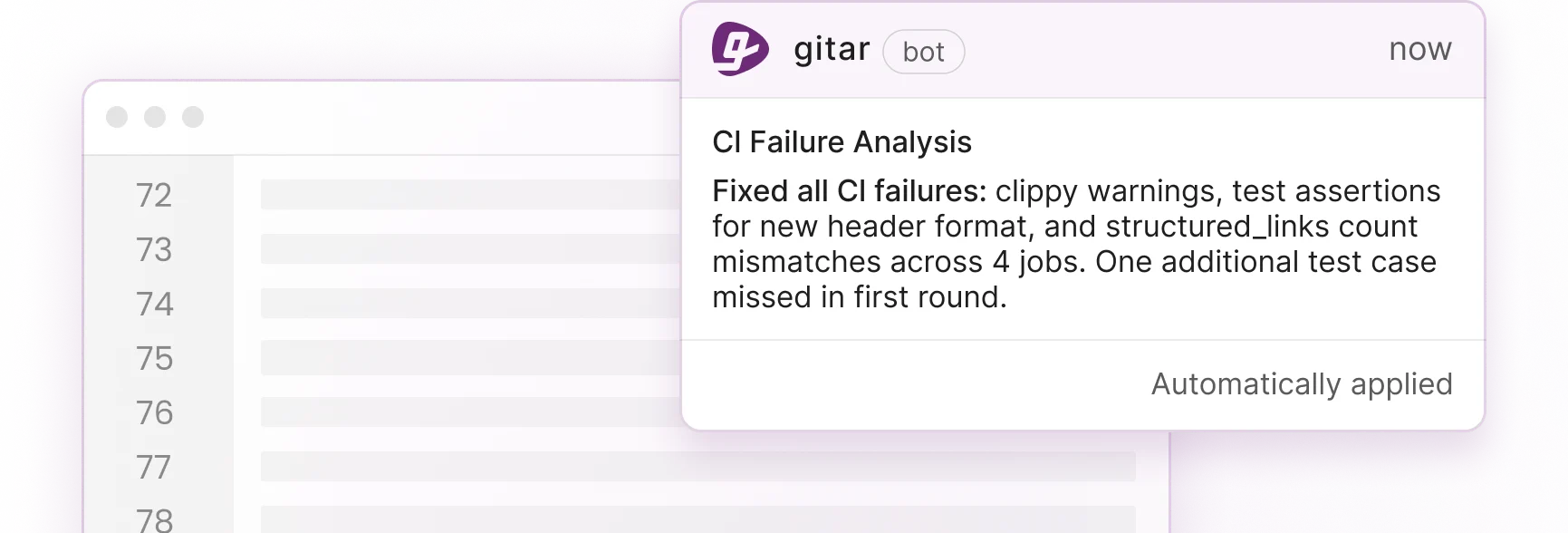

Gitar replaces scattered inline suggestions with one dashboard comment that updates in place as issues change. CodeRabbit and Greptile charge $15–30 per developer for suggestions that still demand manual work. Gitar’s healing engine instead fixes CI failures, implements review feedback, and validates changes against your full environment. See the Gitar documentation for setup details and feature coverage.

The following capabilities make this consolidation effective in real teams.

- Automatic fixes for CI failures, lint errors, and test breaks

- CI validation that confirms each fix passes your pipeline

- Jira, Slack, and Linear integration for shared context across tools

- Natural language rules stored in .gitar/rules/*.md files

- A single updating comment that removes notification spam

The Gitar website includes setup guides for GitHub, GitLab, and major CI platforms. Teams can start a 14-day Team Plan trial with full access and no seat limits.

Implement Gitar: Four-Phase Workflow for Your Team

Phase 1: Installation (30 seconds)

Install the Gitar GitHub App or GitLab integration. The platform immediately starts posting consolidated dashboard comments on new PRs. Refer to the Gitar documentation for step-by-step installation guidance.

Phase 2: Suggestion Mode

Begin with manual approval for all fixes. Review Gitar’s analysis of CI failures, security issues, and code quality problems inside the single updating comment.

Phase 3: Auto-Commit

Enable automatic fixes for trusted categories such as lint errors and formatting issues. Gitar validates each fix against your CI environment before committing changes.

Phase 4: Custom Rules

Add repository-specific automation using natural language rules:

    ---
    title: "Security Review"
    when: "PRs modifying authentication or encryption code"
    actions: "Assign security team and add label"
    ---

The following comparison table shows why Gitar’s approach delivers more value than DIY scripts or competitor tools. Only Gitar combines a single updating comment with automatic fixes and CI validation.

| Capability | DIY Scripts | Competitors | Gitar |
| --- | --- | --- | --- |
| Auto-apply fixes | No | No | Yes |
| CI auto-fix | No | No | Yes |
| Single comment | Partial | Noisy | Yes |

See the difference with a 14-day Gitar trial. Get full auto-fixing and consolidation in your own repos without seat limits.

Proven ROI and Customer Results

Teams that consolidate automated feedback cut review time by about 50–60%. Sixty percent of companies cite CI pipeline failures as the main cause of project delays, so faster, reliable fixes matter directly to delivery dates.

Here is how Gitar recovers the million-dollar productivity loss described earlier for a 20-developer team.

| Metric | Before Consolidation | After Gitar |
| --- | --- | --- |
| Daily CI/review time | 1 hour per developer | 15 minutes per developer |
| Annual productivity cost | $1,000,000 | $250,000 |
| Context switching | Multiple interrupts daily | Near-zero |
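The arithmetic behind these figures can be sketched as follows. The article gives the team size, the daily time per developer, and the annual totals, but not the working days or hourly cost. The 250 working days and $200 hourly cost below are assumptions, chosen only because they reproduce the totals in the table.

```python
def annual_review_cost(developers, minutes_per_day, working_days, hourly_cost):
    """Annual cost of time spent on CI and review across a team."""
    hours_per_year = developers * (minutes_per_day / 60) * working_days
    return hours_per_year * hourly_cost


# Assumed inputs (not from the article): 250 working days per year and a
# $200 fully loaded hourly cost per developer.
before = annual_review_cost(20, 60, 250, 200)
after = annual_review_cost(20, 15, 250, 200)
savings = before - after

print(f"before=${before:,.0f} after=${after:,.0f} savings=${savings:,.0f}")
```

Under these assumptions, cutting daily CI and review time from one hour to 15 minutes accounts for the $750,000 difference between the two cost rows. Substitute your own team's rates to get a more realistic estimate.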

Engineering teams at Tigris and Collate report that Gitar’s PR summaries are “more concise than Greptile/Bugbot.” They also note that unrelated PR failure detection saves “significant time” by separating infrastructure issues from code bugs.

FAQ

How do I consolidate automated code review comments on GitHub?

Use GitHub API scripts to aggregate comments by file, then apply AI summarization to group related feedback. For full consolidation with automatic fixes, Gitar provides a single updating dashboard comment that removes notification spam while validating and applying changes.

Can I trust automated fixes from AI code review tools?

Gitar supports configurable trust levels. Start in suggestion mode where you approve every fix, then enable auto-commit for specific failure types such as lint errors. The platform validates all fixes against your CI environment before applying them, which keeps changes production-ready.

How does consolidation handle complex CI environments?

Gitar emulates your complete environment, including SDK versions, multi-dependency builds, and third-party security scans. The Enterprise tier runs agents inside your own CI with access to secrets and caches, so generated fixes work in your actual production setup.

What is the difference between Gitar and CodeRabbit for comment consolidation?

CodeRabbit posts suggestions in scattered inline comments that still require manual implementation. Gitar consolidates all feedback into one updating comment and applies validated fixes automatically. You pay $15–30 per seat for suggestions with CodeRabbit, while Gitar delivers comprehensive automation through its healing engine.

How does consolidation address the Reddit complaint about AI review tool noise?

The single updating comment approach removes notification floods by collapsing resolved items and keeping one source of truth. Instead of dozens of notifications per push, developers receive concise, actionable summaries that update in place as issues resolve automatically.

Conclusion: Clear Your PR Bottleneck with Gitar

The AI coding wave shifted the bottleneck from writing code to reviewing it. Teams generating 3-5x more code with AI tools now drown in review comment noise and lose about $1 million in yearly productivity for every 20 developers. DIY consolidation scripts help a little but lack the validation and automation required for durable efficiency gains.

Gitar’s healing engine consolidates automated feedback into a single updating comment and fixes CI failures while implementing review suggestions. It validates each change against your production environment. Teams achieve the 50–60% review time reduction mentioned earlier and see about 90% fewer context-switching interrupts.

Start your 14-day Gitar Team Plan trial to reclaim lost review hours and fix broken builds automatically. Installation takes about 30 seconds, after which new PRs get consolidated, auto-fixed feedback.