Key Takeaways

- AI code generation speeds up delivery 3-5x but shifts the bottleneck to PR reviews, with review times up 91% because of issues in AI-generated code.

- AI delivers speed, consistency, and scale for mechanical checks, while humans excel at contextual judgment, business logic, and complex bug detection.

- Most AI review tools only suggest changes that developers must implement by hand, miss most logic issues, and struggle with domain context and CI validation.

- Hybrid workflows use AI for routine fixes and humans for architecture. With Gitar, each developer's daily CI and review time drops from 1 hour to 15 minutes.

- Gitar’s Healing Engine automatically implements fixes and validates them against your CI to guarantee green builds. Start your free Gitar trial today to accelerate shipping.

AI and Human Code Review Performance Snapshot

| Capability | AI Review | Human Review | Winner |
|---|---|---|---|
| Speed | 10x faster analysis | Hours to days | AI |
| Consistency | Never fatigued | Variable quality | AI |
| Context Understanding | Limited business logic | Deep domain knowledge | Human |
| Error Detection | Misses 75% of logic issues | Catches complex bugs | Human |
| Scalability | Unlimited capacity | Team size bottleneck | AI |

The data shows a clear division of strengths: AI handles mechanical checks and speed, while humans own contextual judgment. AI-generated code contains 75% more logic errors than human-written code, especially around edge cases and business rules.

AI Code Review Strengths and Gaps

Pros:

- Runs reviews about 10x faster than human cycles

- Delivers consistent checks across every PR

- Handles style enforcement and syntax checking reliably

- Scales with your repositories without extra headcount

- Flags many obvious security patterns

Cons:

- Misses business context and domain-specific logic

- Introduces confirmation bias when reviewing AI-generated code

- Suggestion-only output still needs manual implementation

- Cannot confirm that fixes actually pass your CI

- Struggles with architecture and system-level tradeoffs

Developer forums repeatedly call out AI’s context blindness. AI often hallucinates logic when it reviews complex business rules or integration patterns that depend on system behavior, not just syntax.
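A hypothetical illustration of that gap follows. The business rule ("orders of $50.00 or more ship free") and the function names are invented for this sketch. Both versions are typed, idiomatic, and lint-clean, so a check focused on syntax and style would pass either one; only a reviewer who knows the rule can see that the first uses the wrong comparison at the boundary.

```python
from decimal import Decimal

# Hypothetical business rule for this sketch: orders of $50.00 OR MORE ship free.
FREE_SHIPPING_THRESHOLD = Decimal("50.00")


def qualifies_for_free_shipping_buggy(order_total: Decimal) -> bool:
    # Plausible-looking but wrong: strict ">" excludes an order of exactly $50.00.
    return order_total > FREE_SHIPPING_THRESHOLD


def qualifies_for_free_shipping(order_total: Decimal) -> bool:
    # Matches the stated rule: ">=" includes the $50.00 boundary.
    return order_total >= FREE_SHIPPING_THRESHOLD


if __name__ == "__main__":
    boundary = Decimal("50.00")
    print(qualifies_for_free_shipping_buggy(boundary))  # False
    print(qualifies_for_free_shipping(boundary))        # True
```

The two functions differ by a single character, which is exactly the kind of edge-case logic error that requires knowing the requirement rather than the syntax.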

Human Code Review Strengths and Limits

Pros:

- Understands business requirements and real-world constraints

- Provides architectural vision and system design expertise

- Finds subtle logic flaws and tricky edge cases

- Applies team conventions and coding standards correctly

Cons:

- Review fatigue reduces quality over time

- Cognitive bias and inconsistent standards across reviewers

- Timezone gaps slow reviews in distributed teams

- Cannot keep up with AI-accelerated code generation volume

- Consumes expensive senior developer time on routine checks

The scalability crunch is already visible. Teams report 91% increases in review time as AI tools flood repositories with code that still needs human validation. GitHub and GitLab threads describe teams “drowning in PRs” as human review capacity becomes the main brake on delivery speed.

Real-World AI vs Human Review Pain Points from Reddit

Developer communities describe the same struggle with hybrid workflows again and again. AI-generated PRs contain 1.7x more issues overall and 4x more code duplication, so human reviewers often spend more time debugging AI output than the AI saved during initial generation.

Common Reddit complaints include:

- “AI generates plausible but broken logic that passes initial review”

- “Spending more time fixing AI code than writing it myself”

- “Review tools suggest fixes that don’t actually work in our CI”

- “Notification spam from AI tools commenting on every line”

The verification tax keeps growing. Verifying AI’s plausible-but-incorrect code often takes longer than writing the code correctly from scratch, and experienced developers slow down because they must validate every suggestion.

Why Gitar’s Hybrid Model Outperforms Suggestion Tools

Gitar delivers a true hybrid solution that combines AI speed with guaranteed fixes. Competing tools like CodeRabbit and Greptile charge $15-30 per developer for suggestion-only workflows, while Gitar’s Healing Engine automatically implements and validates fixes against your CI pipeline. You can review the details in the Gitar documentation.

|

Capability |

CodeRabbit/Greptile |

Gitar |

Winner |

|

Auto-apply fixes |

No |

Yes (Trial/Team) |

Gitar |

|

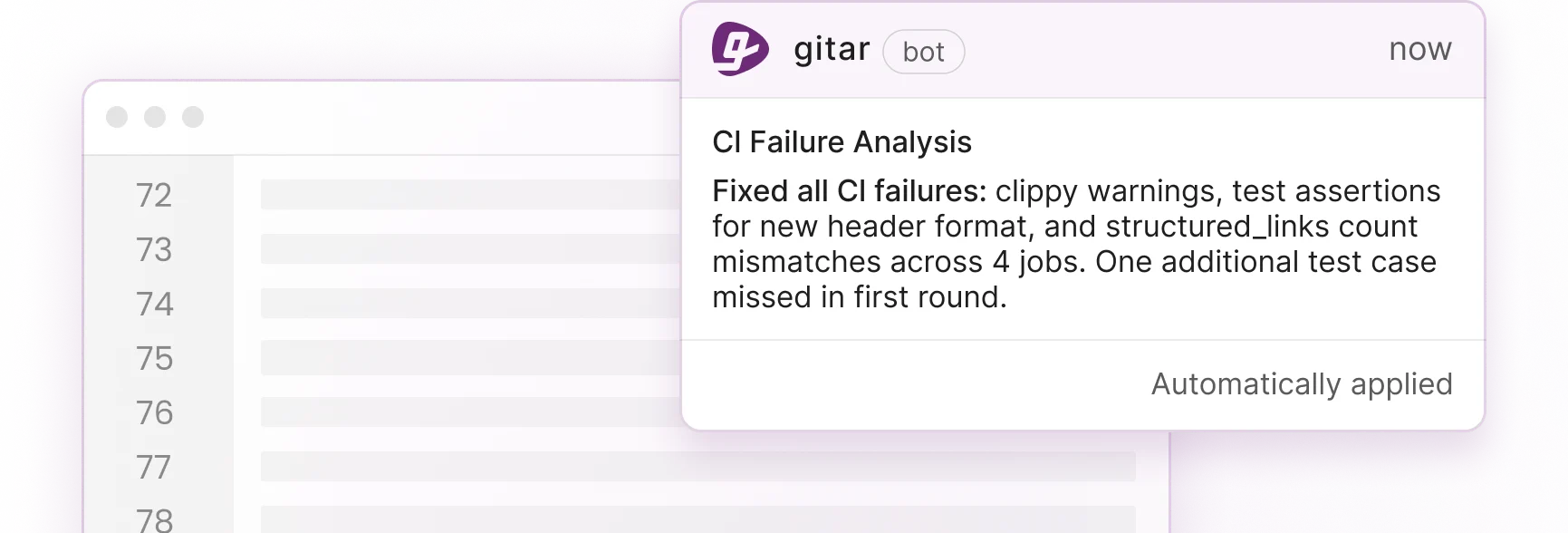

CI failure analysis |

No |

Yes |

Gitar |

|

Green build guarantee |

No |

Yes |

Gitar |

|

Single comment interface |

No |

Yes |

Gitar |

Teams see strong ROI from this approach. A 20-developer team can save about $750K per year by cutting each developer’s daily time on CI and review issues from 1 hour to 15 minutes with Gitar. Gitar includes a full-featured, free 14-day Team Plan trial so teams can measure velocity gains before paying.

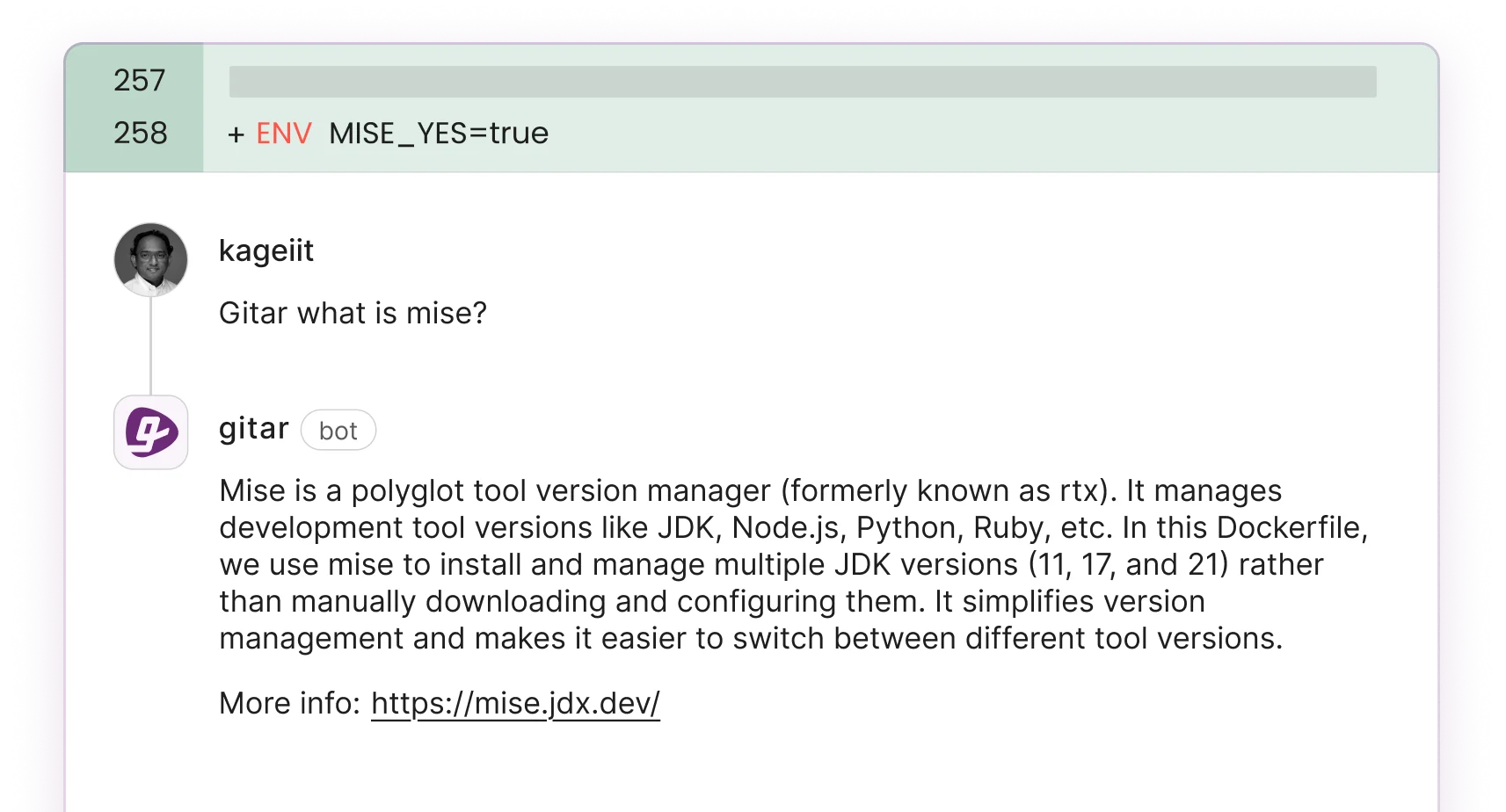

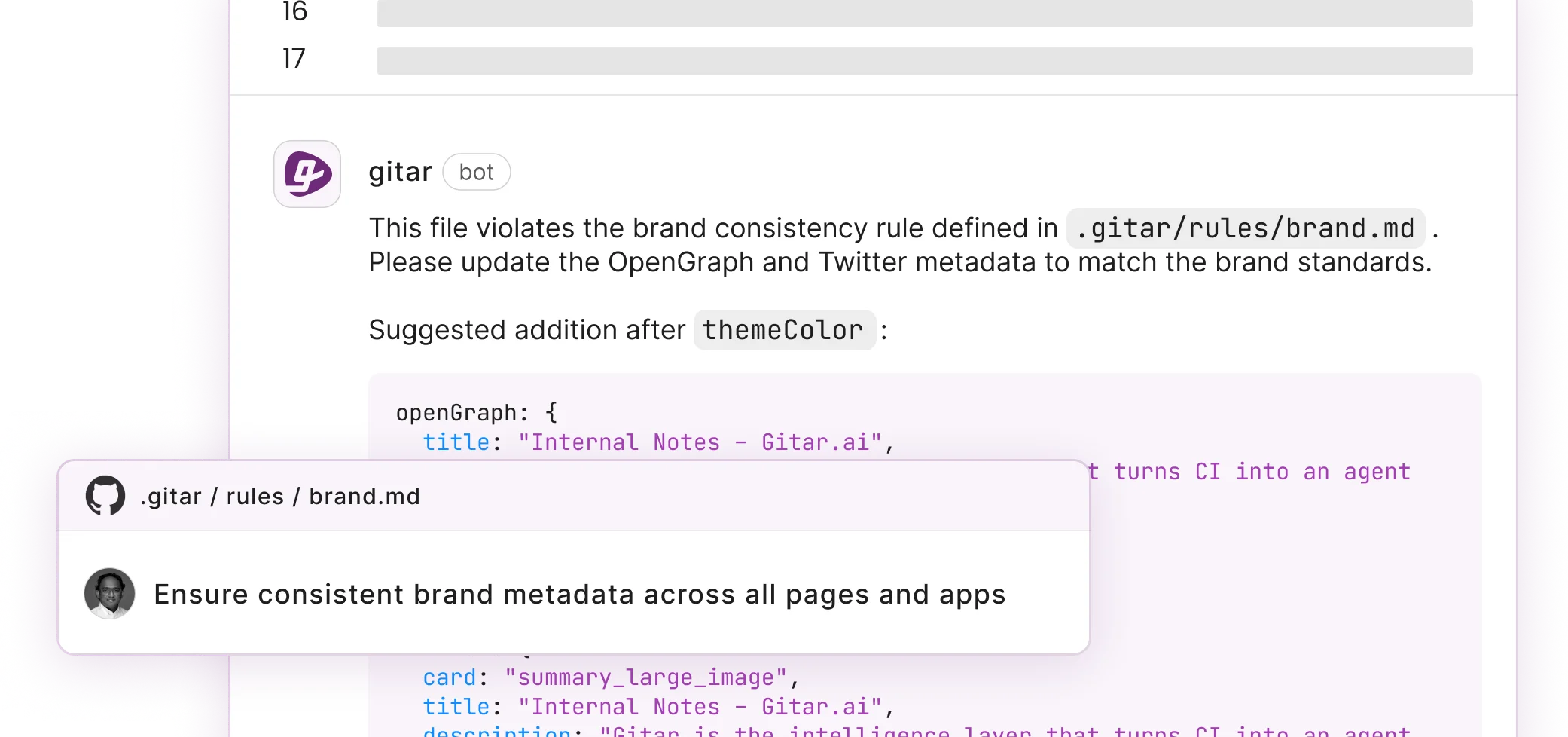

Gitar behaves very differently from suggestion engines: it validates every fix against your real CI environment. When a test fails or a lint error appears, Gitar reads the failure logs, generates a contextual fix, validates the change, and commits the solution automatically. Natural language rules let teams shape workflows without complex YAML files.
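The read-logs, fix, validate, commit cycle can be sketched as a bounded loop. This is a minimal illustration, not Gitar’s implementation: `run_ci`, `generate_fix`, and `commit` are hypothetical callables standing in for a CI runner, a model call, and a git commit, and the three-attempt limit is an assumption. The key property is that a patch is committed only after CI has passed with it applied.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CIResult:
    passed: bool
    log: str


def heal(
    run_ci: Callable[[Optional[str]], CIResult],  # hypothetical: runs CI, optionally with a patch
    generate_fix: Callable[[str], str],           # hypothetical: turns a failure log into a patch
    commit: Callable[[str], None],                # hypothetical: commits a validated patch
    max_attempts: int = 3,                        # assumed retry budget
) -> bool:
    """Return True once CI is green; commit only patches that CI has validated."""
    result = run_ci(None)  # baseline run on the unmodified branch
    attempts = 0
    while not result.passed and attempts < max_attempts:
        patch = generate_fix(result.log)  # read the failure log and propose a patch
        result = run_ci(patch)            # validate this candidate patch against CI
        attempts += 1
        if result.passed:
            commit(patch)                 # only a green patch is ever committed
    return result.passed


if __name__ == "__main__":
    committed = []

    def fake_ci(patch):
        # Simulated CI: fails with a lint error until the fix is applied.
        if patch == "fix: remove unused import":
            return CIResult(True, "")
        return CIResult(False, "lint: F401 'os' imported but unused")

    def fake_fix(log):
        return "fix: remove unused import" if "F401" in log else "noop"

    print(heal(fake_ci, fake_fix, committed.append), committed)
    # True ['fix: remove unused import']
```

Each candidate patch is validated on its own against the original branch; a real system would also need to decide whether failed patches are discarded or stacked.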

Rolling Out a Gitar-Powered Hybrid Workflow

Teams see the best results when they adopt Gitar in phases.

Phase 1: Installation

Install the Gitar GitHub or GitLab app and start your 14-day Team Plan trial. Gitar immediately posts consolidated dashboard comments on new PRs. Follow the steps in the Gitar documentation for installation.

Phase 2: Trust Building

Start in suggestion mode and approve each fix. Watch Gitar clear lint errors, test failures, and build breaks while you keep full control.

Phase 3: Automation

Turn on auto-commit for trusted fix types such as formatting and simple test updates. Add repository rules for workflow automation using natural language descriptions.
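The phased rollout boils down to a simple gating policy. The sketch below is hypothetical: Gitar configures this through natural-language rules, and the `FixType` names, phase numbers, and `decide` function here are invented to show the logic, not to mirror any real configuration. Everything stays a suggestion during Phases 1 and 2; from Phase 3 on, only fix types the team has explicitly trusted are auto-committed.

```python
from enum import Enum


class FixType(Enum):
    FORMATTING = "formatting"
    LINT = "lint"
    SIMPLE_TEST_UPDATE = "simple_test_update"
    BUILD_CONFIG = "build_config"
    LOGIC_CHANGE = "logic_change"


class Action(Enum):
    AUTO_COMMIT = "auto_commit"
    SUGGEST = "suggest"  # posted for a human to approve


def decide(fix_type: FixType, phase: int, trusted: frozenset) -> Action:
    # Phases 1-2 (installation, trust building): every fix needs approval.
    if phase < 3:
        return Action.SUGGEST
    # Phase 3+ (automation): auto-commit only explicitly trusted fix types.
    return Action.AUTO_COMMIT if fix_type in trusted else Action.SUGGEST


# Example trust list matching the fix types named in Phase 3.
TRUSTED = frozenset({FixType.FORMATTING, FixType.SIMPLE_TEST_UPDATE})

if __name__ == "__main__":
    print(decide(FixType.FORMATTING, 2, TRUSTED).name)    # SUGGEST
    print(decide(FixType.FORMATTING, 3, TRUSTED).name)    # AUTO_COMMIT
    print(decide(FixType.LOGIC_CHANGE, 3, TRUSTED).name)  # SUGGEST
```

Keeping the trust list explicit means widening automation is a deliberate, reviewable change rather than a default.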

Phase 4: Platform Integration

Connect Jira and Slack for richer context across tools. Use analytics to spot CI patterns and improve your development pipeline. Start your hybrid AI code review trial today.

This hybrid workflow assigns mechanical fixes to AI and reserves architectural decisions for humans. Teams report less context switching and fewer noisy notifications compared with traditional suggestion-based tools.

The future of code review relies on AI and humans working together through platforms that fix code instead of only commenting on it. Install Gitar now, automatically fix broken builds, and ship higher quality software faster. The 14-day trial includes full Team Plan features with no seat limits, so you can prove the value of autonomous code review before investing.

Hybrid Code Review FAQ

Hybrid AI-Human Review vs Pure AI or Pure Human

Hybrid review combines AI speed with human judgment and reduces each side’s weaknesses. AI handles fast, consistent checks and catches mechanical issues such as syntax errors, style violations, and common security patterns. AI still struggles with business context, architecture, and complex logic validation. Human reviewers bring domain knowledge and contextual judgment but face scalability limits and fatigue. Hybrid systems assign AI to first-pass checks and automatic fixes, while humans focus on architecture, business logic, and strategic decisions. This split keeps quality high while improving throughput.
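That division of labor amounts to a triage step on each review finding. The sketch below is illustrative only: the category names, the `confidence` field, and the 0.8 threshold are assumptions made for this example. Mechanical findings that the model is confident about go to the AI first pass; business logic, architecture, and anything uncertain escalate to a human.

```python
from dataclasses import dataclass

# Assumed set of mechanical categories handled by the AI first pass.
MECHANICAL = {"syntax", "style", "lint", "known_security_pattern"}


@dataclass
class Finding:
    category: str
    confidence: float  # assumed model self-reported confidence in [0, 1]


def route(finding: Finding, min_confidence: float = 0.8) -> str:
    """Return 'ai' for confident mechanical findings, otherwise 'human'."""
    if finding.category in MECHANICAL and finding.confidence >= min_confidence:
        return "ai"
    # Business logic, architecture, and low-confidence findings escalate.
    return "human"


if __name__ == "__main__":
    findings = [
        Finding("style", 0.95),
        Finding("business_logic", 0.99),
        Finding("lint", 0.40),
    ]
    print([route(f) for f in findings])  # ['ai', 'human', 'human']
```

Note that a high-confidence business-logic finding still goes to a human: confidence alone does not make a contextual judgment mechanical.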

Common AI Code Review Failure Modes and Mitigations

AI code review tools often fail on context loss, where they miss business requirements or integration patterns, and on logic errors in edge cases and recursive flows. They also suffer from confirmation bias when reviewing AI-generated code and cannot confirm that suggested fixes work in production-like environments. Teams can reduce these risks with staged rollouts that begin in suggestion-only mode, human oversight for complex business logic, tools that validate fixes against real CI pipelines, and clear escalation paths when AI is uncertain. AI works best as a strong assistant, not a replacement for human judgment.

Expected ROI from Hybrid AI-Human Workflows

Engineering teams usually see large productivity gains from hybrid workflows. Most savings come from cutting time spent on routine CI failures and review loops. A 20-developer team that spends 1 hour per day per developer on CI and review issues burns roughly $1M in annual productivity. Hybrid systems can reduce this to about 15 minutes per developer per day, saving around $750K annually with Gitar. Teams also benefit from faster PR merges, less context switching, fewer production bugs from stronger automated validation, and higher developer satisfaction as repetitive work drops.
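The arithmetic behind those figures is straightforward. The team size and hours come from the text above; the working-day count and hourly rate do not, so this sketch assumes 250 working days per year and a fully loaded cost of $200 per developer-hour, values chosen because they reproduce the roughly $1M baseline. Going from 1 hour to 15 minutes removes 75% of the cost.

```python
def annual_cost(devs: int, hours_per_day: float, working_days: int, hourly_cost: float) -> float:
    # Developer-hours lost per year, priced at a fully loaded hourly rate.
    return devs * hours_per_day * working_days * hourly_cost


# Assumptions (not from the source): 250 working days, $200/hour loaded cost.
DEVS, DAYS, RATE = 20, 250, 200.0

before = annual_cost(DEVS, 1.0, DAYS, RATE)  # 1 hour/day on CI and review issues
after = annual_cost(DEVS, 0.25, DAYS, RATE)  # 15 minutes/day
print(f"before=${before:,.0f} after=${after:,.0f} saved=${before - after:,.0f}")
# before=$1,000,000 after=$250,000 saved=$750,000
```

Plugging in your own team size, calendar, and loaded rate gives a quick sanity check before running a trial.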

Comparing Leading AI Code Review Tools

Recent benchmarks show wide performance differences between AI code review tools. Vendors are usually measured on precision, recall, and overall F-score. Leading tools reach about 50-65% precision and 40-60% recall, with tradeoffs between false positives and missed issues. Critical bug detection rates vary, with top tools catching 50-60% of critical issues while weaker tools detect under 20%. The strongest options pair solid precision with reasonable recall, highlight actionable findings instead of noisy style comments, and include ways to validate that fixes truly resolve the problem.
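To see how precision, recall, and F-score relate, here is a worked example. It assumes the common F1 variant (the harmonic mean, weighting precision and recall equally); a given benchmark may weight them differently. The counts are hypothetical and were picked to land inside the precision and recall ranges quoted above.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    # Precision: share of flagged issues that are real.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: share of real issues that were flagged.
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical run: 30 real issues flagged, 20 false alarms, 30 real issues missed.
p, r, f = precision_recall_f1(tp=30, fp=20, fn=30)
print(f"precision={p:.2f} recall={r:.2f} f1={f:.3f}")
# precision=0.60 recall=0.50 f1=0.545
```

Because F1 is a harmonic mean, a tool cannot buy a high score by flagging everything (high recall, low precision) or by flagging almost nothing (the reverse).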

Choosing Between Suggestion-Only and Auto-Fix Platforms

Teams should weigh risk tolerance, CI complexity, and desired automation when choosing tools. Suggestion-only tools feel safer because humans approve every change, but they still require manual implementation and cap productivity gains. Auto-fix platforms unlock more efficiency but need strong validation to ensure fixes work. Key factors include CI integration depth, the ability to validate fixes in your real build environment, fine-grained controls for automation levels, rollback options for problematic changes, and total cost given that suggestion tools still consume developer time. Many teams begin in suggestion mode and then increase automation as trust grows.