Written by: Ali-Reza Adl-Tabatabai, Founder and CEO, Gitar

Key Takeaways for Engineering Leaders

- Free AI code review tools analyze only PR diffs, not full codebases, and this shallow context lets more logic bugs slip through.

- High false positive rates create notification fatigue and contribute to the roughly 1 hour per developer lost every day.

- Missing CI/CD integration drives project delays because teams must debug build failures manually without automated fixes.

- Scalability breaks down under usage caps, monthly GPU costs, and slower performance for active teams.

- Gitar delivers autonomous fixes, full CI integration, and about $750K in annual savings for a 20-person team. Start your 14-day Team Plan trial today.

The Problem: Free AI Code Review Tools Break at Team Scale

AI-powered code review promised faster pull request checks, fewer bugs, and better code quality. Reality looks different for most teams: AI now generates code faster than humans can review it, and free AI code review tools amplify that pressure with shallow analysis and no real support for team-scale workflows.

For a 20-developer team, lost productivity can reach $1 million per year when engineers spend an hour each day wrestling with CI failures, long review cycles, and irrelevant AI comments. Free tools promise efficiency but add complexity, widening the gap between AI code generation speed and human review capacity.

10 Critical Limitations of Free AI Code Review Tools for Teams

1. Shallow Codebase Context Causes More Logic Bugs

Free AI code review tools usually inspect only pull request diffs and ignore the broader architecture. This gap becomes severe when AI-generated code duplicates logic or breaks existing patterns.

Teams often discover logic errors weeks after deployment because free tools miss cross-service dependencies and architectural violations. Without hierarchical memory, every review starts from zero context and cannot learn from earlier decisions or maintain consistency across related changes.
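To see why diff-only review misses cross-service breakage, consider a minimal sketch that walks a hypothetical call graph to find every caller affected by a change. It is an illustration of the idea, not how Gitar or any specific tool works; `CALL_GRAPH`, `callers_of`, and `impact_outside_diff` are made-up names, and a real tool would build the graph by parsing the whole repository.

```python
# Hypothetical call graph: function -> functions it calls.
# Hand-written here; a real tool would derive it from the full codebase.
CALL_GRAPH = {
    "billing.charge": {"payments.authorize"},
    "orders.checkout": {"billing.charge", "inventory.reserve"},
    "reports.monthly": {"billing.charge"},
    "payments.authorize": set(),
    "inventory.reserve": set(),
}

def callers_of(target, graph):
    """Return every function that calls `target` directly or transitively."""
    # Invert the graph once: callee -> set of callers.
    reverse = {}
    for caller, callees in graph.items():
        for callee in callees:
            reverse.setdefault(callee, set()).add(caller)
    seen, stack = set(), [target]
    while stack:
        for caller in reverse.get(stack.pop(), ()):
            if caller not in seen:
                seen.add(caller)
                stack.append(caller)
    return seen

def impact_outside_diff(changed, graph):
    """Functions affected by the `changed` set that the diff itself never touches."""
    affected = set()
    for fn in changed:
        affected |= callers_of(fn, graph)
    return affected - set(changed)

# A PR that edits only payments.authorize: a diff-only reviewer sees one
# function, but three callers elsewhere depend on its behavior.
print(sorted(impact_outside_diff({"payments.authorize"}, CALL_GRAPH)))
# ['billing.charge', 'orders.checkout', 'reports.monthly']
```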

2. False Positives Flood Inboxes and Slow Reviews

Free tools often post dozens of inline comments on a single pull request, cluttering GitHub timelines with low-value feedback.

Across free tools, teams accept only a fraction of AI suggestions, so most recommendations waste developer time. This poor signal-to-noise ratio pushes teams into aggressive filtering and custom rules, which still leave engineers fatigued and skeptical and can cause real issues to be missed.
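One way to make the signal-to-noise problem measurable is to record whether each AI comment was accepted and compute acceptance per rule category. The sketch below assumes a hypothetical `Suggestion` record exported from your own review history; the 20% threshold and minimum sample size of 5 are arbitrary illustrative defaults, not figures from any tool.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class Suggestion:
    """Hypothetical record of one AI review comment and the team's response."""
    rule: str       # category the tool assigned, e.g. "style" or "null-check"
    accepted: bool  # True if a developer applied the suggestion or marked it valid

def acceptance_by_rule(suggestions):
    """Fraction of suggestions accepted, per rule category."""
    total = Counter(s.rule for s in suggestions)
    accepted = Counter(s.rule for s in suggestions if s.accepted)
    return {rule: accepted[rule] / total[rule] for rule in total}

def noisy_rules(suggestions, threshold=0.2, min_count=5):
    """Rules with at least `min_count` samples whose acceptance rate is below `threshold`."""
    total = Counter(s.rule for s in suggestions)
    rates = acceptance_by_rule(suggestions)
    return sorted(r for r, rate in rates.items()
                  if total[r] >= min_count and rate < threshold)

# Ten style comments (one accepted) and four null-check comments (all accepted).
history = ([Suggestion("style", i == 0) for i in range(10)]
           + [Suggestion("null-check", True) for _ in range(4)])
print(noisy_rules(history))  # ['style']
```

Rules flagged this way are candidates for muting, which trades some recall for less notification fatigue.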

3. Missing CI/CD Integration Triggers Project Delays

Most free AI code review tools operate separately from CI pipelines and cannot read build logs, test results, or deployment errors. When CI checks fail, these tools rarely help explain root causes or propose fixes that match the actual build environment.

Developers must jump between AI comments, CI dashboards, and local debugging, which multiplies resolution time. Failed builds then stack up, and teams face cascading delays because every fix requires manual investigation and trial-and-error patches.

4. Security Gaps and Data Exposure Risks

Security researchers have identified multiple vulnerabilities in AI tools that can be exploited through prompt-injection attacks, which creates real risk for teams using free open-source options. Even self-hosted tools often send proprietary code to external APIs because they still depend on OpenAI-style API keys.

Free tools rarely ship with deep security scanning or dedicated security models, so they miss patterns that paid platforms catch. They also lack compliance features such as audit trails and data residency controls, which makes them a poor fit for regulated industries.

5. Hard Usage Limits at 50+ PRs per Week

Free and low-tier plans impose strict usage limits that cannot keep up with teams handling more than 50 pull requests per week. As repositories grow and reviews become more complex, performance degrades, and teams hit rate limits at the worst possible moments.

6. Inconsistent Outputs Undermine Trust

Stochastic language models often return different feedback for identical code changes. Developers report that varied LLM outputs require cross-checking, which highlights reliability issues in free AI reviews.

This inconsistency erodes confidence and forces manual validation of every suggestion. Teams struggle to enforce stable coding standards when the review tool gives conflicting advice on similar patterns.
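To quantify inconsistency rather than rely on anecdotes, a team could rerun a review several times on an unchanged pull request and compare the findings. This is a minimal sketch assuming each run's findings can be reduced to a set of hypothetical `(file, line, rule)` keys; mean pairwise Jaccard similarity is one reasonable choice of metric, not a standard defined by any review tool.

```python
from itertools import combinations

def jaccard(a, b):
    """Overlap between two sets of findings: 1.0 = identical, 0.0 = disjoint."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def review_consistency(runs):
    """Mean pairwise Jaccard similarity across repeated reviews of the same diff."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        return 1.0  # a single run is trivially consistent with itself
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

# Findings from three hypothetical runs on one unchanged PR.
runs = [
    {("api.py", 12, "null-check"), ("api.py", 40, "naming")},
    {("api.py", 12, "null-check")},
    {("api.py", 12, "null-check"), ("db.py", 7, "sql-injection")},
]
print(round(review_consistency(runs), 2))  # 0.44
```

A score well below 1.0 on identical input means developers cannot treat any single run as authoritative.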

7. Missing Collaboration and Workflow Features

Free AI code review tools rarely integrate with Slack, Jira, or workflow automation systems. Feedback stays locked inside GitHub or GitLab, which prevents shared visibility across engineering, product, and QA teams.

Developers must coordinate manually between AI comments, human reviews, and project requirements. This fragmented view increases coordination overhead, slows decisions, and makes it harder to see which issues truly block a release.

8. Blind Spots Around AI-Generated Code Patterns

Many free tools rely on the same models that generate the code they review, which creates confirmation bias. Code duplication increased with AI generation, yet review tools trained on similar data often miss these systemic problems.

Logic and correctness issues appear more often in AI-generated pull requests, but free tools lack detectors for AI-specific artifacts. Repetitive structures, partial error handling, and context-mismatched solutions slip through reviews and surface later as production bugs.
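Near-duplicate logic is one AI-specific artifact that can be checked mechanically. The sketch below uses a classic approach, hashing sliding windows of normalized lines across files; it is an illustration only, with a hypothetical `duplicate_windows` helper and a naive comment stripper, and production clone detectors typically work on token streams or syntax trees instead.

```python
import hashlib
from collections import defaultdict

def normalize(line):
    """Drop `#` comments (naively, ignoring string literals) and surrounding whitespace."""
    return line.split("#", 1)[0].strip()

def duplicate_windows(files, window=4):
    """Map each repeated run of `window` normalized lines to where it occurs.

    `files` is {path: source_text}. Returns {digest: [(path, start_line), ...]}
    containing only windows that appear more than once.
    """
    seen = defaultdict(list)
    for path, text in files.items():
        lines = [normalize(l) for l in text.splitlines()]
        for i in range(len(lines) - window + 1):
            chunk = lines[i:i + window]
            if not all(chunk):  # skip windows that include blank or comment-only lines
                continue
            digest = hashlib.sha1("\n".join(chunk).encode()).hexdigest()
            seen[digest].append((path, i + 1))  # 1-based line number
    return {d: locs for d, locs in seen.items() if len(locs) > 1}

ORIGINAL = "def total(xs):\n    s = 0\n    for x in xs:\n        s += x\n    return s\n"
COPY = "def grand_total(xs):  # AI-generated\n    s = 0\n    for x in xs:\n        s += x\n    return s\n"

for locations in duplicate_windows({"billing.py": ORIGINAL, "invoices.py": COPY}).values():
    print(locations)  # [('billing.py', 2), ('invoices.py', 2)]
```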

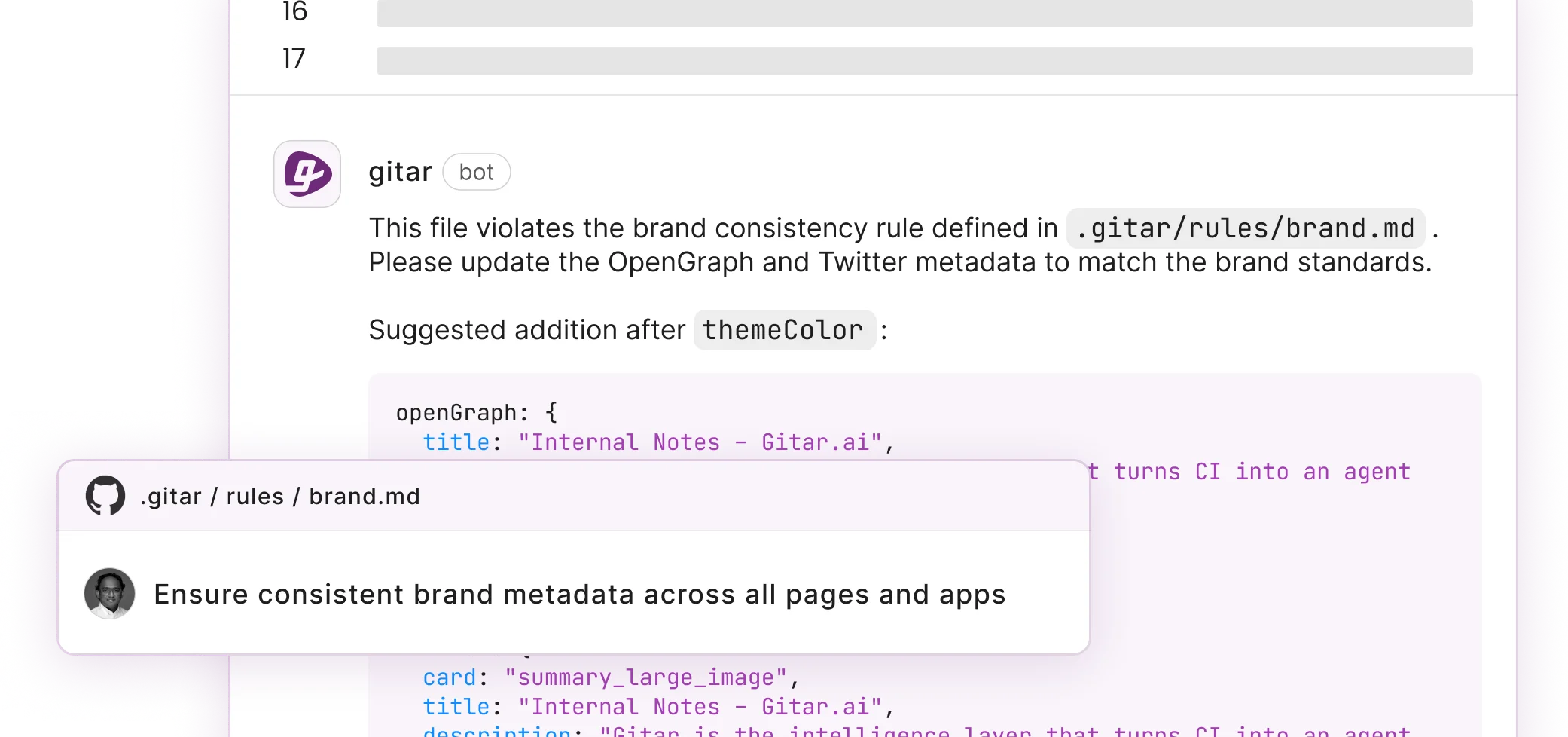

9. No Real Support for Team Rules and Standards

Free AI code review tools usually ship with generic rules that ignore your team’s architecture, domain, and business logic. Custom rules often require complex YAML files or extra scripts that few teams maintain over time.

Institutional knowledge stays in senior engineers’ heads instead of in the review system. New hires receive inconsistent guidance, and teams waste time re-arguing style and architecture choices that a smarter platform could enforce automatically.

10. Negative ROI Compared to Manual Review

When you combine false positives, missed issues, setup friction, and infrastructure costs, free AI code review tools often cost more than manual review. Teams spend extra hours validating AI output, tuning configuration, and fixing problems that automation should prevent.

Teams can avoid this drag by moving to a platform that actually fixes code. Start your Gitar trial today and use autonomous code repair that improves throughput instead of slowing it.

Gitar’s Healing Engine: From Comments to Commits

Gitar shifts code review from suggestions to autonomous fixing. The 14-day Team Plan trial includes full pull request analysis, security scanning, bug detection, performance review, and auto-fix for your entire team with no seat limits.

You can define natural language rules instead of wrestling with YAML, as described in the Gitar documentation. Gitar connects with GitHub, GitLab, CircleCI, and Buildkite and supports unlimited repositories during the trial.

Teams report faster reviews from summaries that are “more concise than Greptile” and from automatic detection of unrelated PR failures that previously consumed hours of debugging. Gitar’s hierarchical memory learns your patterns and keeps context across pull requests, repositories, and organizational decisions.

| Capability | Free Tools | Gitar | Business Impact |
|---|---|---|---|
| Auto-Apply Fixes | No | Yes (Trial/Team) | 45 min → 15 min per PR |
| CI Integration | No | Yes | 60% fewer delays |
| Team ROI | Negative | $750K annual savings | Measurable productivity |

AI Coding at Scale: The Review Gap for Teams

AI code generation now outpaces human review capacity: output keeps rising while review capacity stays flat, which creates a persistent “review gap.” AI-generated changes also keep getting larger and more complex, demanding deeper reviews than free tools can support at scale.

Teams feel this as notification overload, constant context switching, and declining trust in automated feedback. They need platforms that automate the entire outer loop from generation through review, testing, and deployment instead of tools that bolt on another manual step.

Frequently Asked Questions

Why Should Teams Upgrade from Free AI Code Review Tools?

Free AI code review tools often increase work instead of reducing it. PR review times have already increased 91% due to AI-driven code volume, so teams now need systems that fix issues, not just flag them.

Paid platforms like Gitar deliver clear ROI through automated fixes, fewer context switches, and higher rates of green builds. A 14-day trial usually reveals enough velocity improvement to justify the investment through faster delivery and lower frustration.

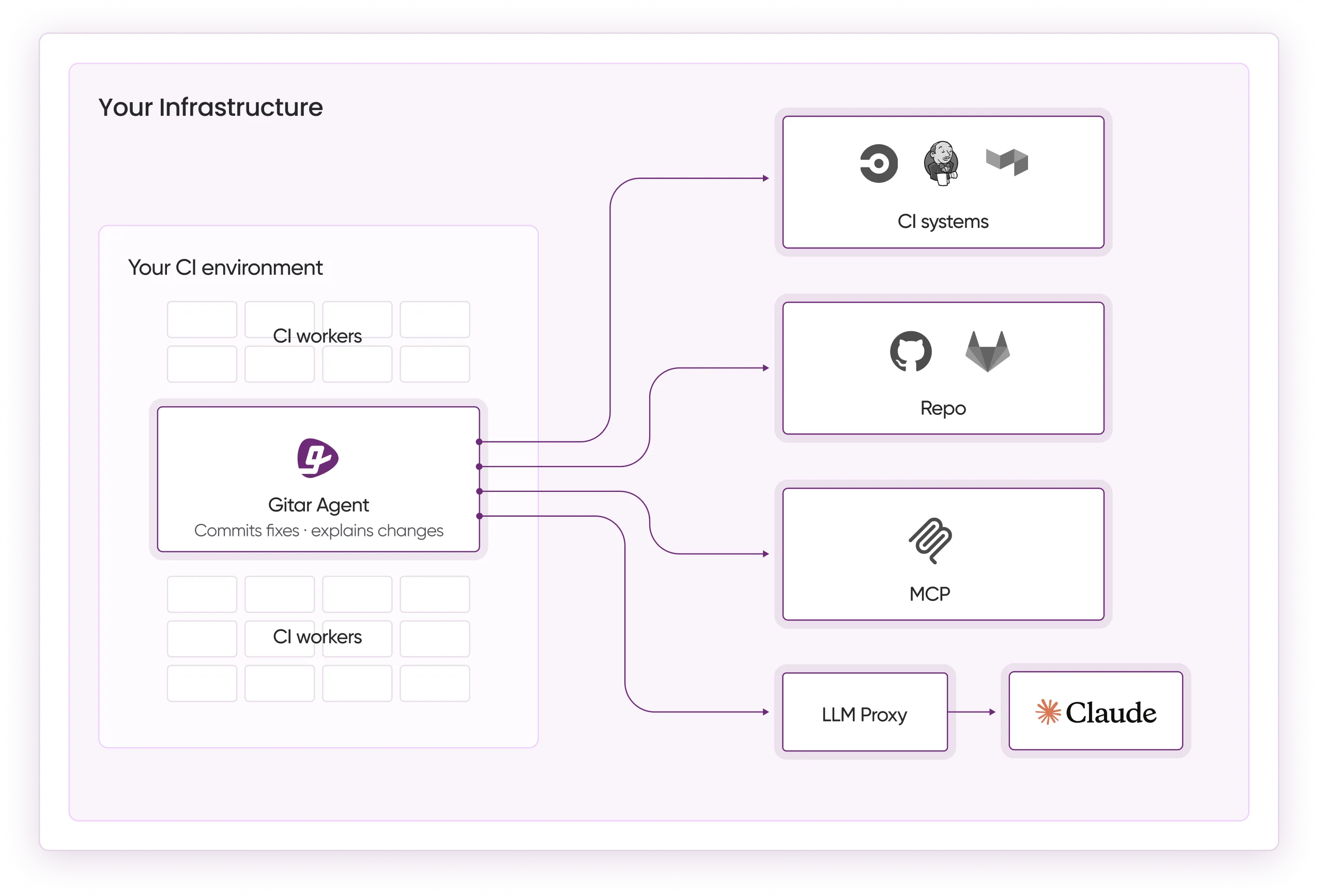

How Does Gitar Handle Complex CI Environments?

Gitar’s Healing Engine works directly with complex CI setups. When CI checks fail from lint errors, test failures, or build breaks, Gitar reads the logs, identifies root causes, generates fixes with full codebase context, validates those fixes, and commits them back to the pull request.
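The first step of that loop, turning raw CI logs into structured failures, can be sketched as below. This is a hypothetical illustration, not Gitar's implementation: the regexes assume flake8-style lint output, pytest's `FAILED path::test` summary lines, and GCC/Clang-style `file:line:col: error:` diagnostics, and the names `PATTERNS` and `classify_failures` are made up for the example.

```python
import re

# Illustrative patterns only; real CI output varies by toolchain and configuration.
PATTERNS = [
    # flake8-style lint: path:line:col: CODE message
    ("lint", re.compile(r"^(?P<file>[\w./-]+):\d+:\d+: (?P<msg>[A-Z]\d+ .*)$")),
    # pytest short summary: FAILED path::test_name - reason
    ("test", re.compile(r"^FAILED (?P<file>[\w./-]+)::(?P<msg>\S+)")),
    # GCC/Clang-style compiler error: path:line:col: error: message
    ("build", re.compile(r"^(?P<file>[\w./-]+):\d+:\d+: error: (?P<msg>.*)$")),
]

def classify_failures(log_text):
    """Return (category, file, detail) for each log line matching a known failure shape."""
    failures = []
    for raw in log_text.splitlines():
        line = raw.strip()
        for category, pattern in PATTERNS:
            match = pattern.match(line)
            if match:
                failures.append((category, match.group("file"), match.group("msg")))
                break  # first matching category wins
    return failures

SAMPLE_LOG = """\
app/views.py:10:80: E501 line too long (88 > 79 characters)
FAILED tests/test_api.py::test_login - AssertionError
src/main.c:5:3: error: expected ';' before 'return'
collected 12 items
"""

for failure in classify_failures(SAMPLE_LOG):
    print(failure)
```

Grouping failures by category like this lets later stages route lint, test, and build problems to different fix strategies before validating and committing a change.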

On the Enterprise Plan, the agent runs inside your CI pipeline with access to configs, secrets, and caches, so no code leaves your infrastructure. Gitar holds SOC 2 Type II and ISO 27001 certifications and focuses on fixes that succeed in production environments.

What Are the Main Limitations of Free AI Code Review Tools for Large Teams?

Free tools usually collapse at more than 50 pull requests per week because of usage caps, performance slowdowns, and rising infrastructure costs. GPU needs and API rate limits make them hard to justify for active teams, and missing collaboration features add coordination overhead.

Without CI integration, free tools cannot assist with the most time-consuming work, which is debugging build failures and validating production readiness. Teams then pay hidden costs through extra developer hours on manual fixes and status updates.

Why Do Free AI Code Review Tools Struggle with Security?

Free tools often lack deep security scanning and can introduce new risks through prompt injection and third-party APIs. Code analysis usually sends proprietary information to external services, which creates data sovereignty and compliance concerns.

They also miss features such as audit logs and security-focused models, so they overlook vulnerabilities that dedicated platforms detect. Regulated organizations typically need stronger security integration than free tools can provide without heavy extra investment.

How Can Teams Measure ROI from an AI Code Review Upgrade?

Teams can track time saved from fewer context switches, less false positive triage, and automated fix application. A 20-developer team often saves about $750,000 per year by cutting CI-related time from one hour to 15 minutes per developer per day.
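The figures in this article follow from simple arithmetic: a 20-developer team losing one hour per person per day costs about $1 million a year, and cutting that to 15 minutes recovers about three quarters of it. The sketch below makes the inputs explicit. The $200 fully loaded hourly cost and 250 working days per year are assumptions chosen because they reproduce the article's numbers, not figures stated in the source, so substitute your own.

```python
def annual_savings(developers, hours_before, hours_after, hourly_cost, workdays=250):
    """Yearly cost recovered when each developer's daily lost time drops
    from hours_before to hours_after. hourly_cost and workdays are assumptions."""
    hours_recovered = developers * (hours_before - hours_after) * workdays
    return hours_recovered * hourly_cost

# Assumed inputs: $200 fully loaded cost per hour, 250 working days per year.
full_cost = annual_savings(20, 1.0, 0.0, hourly_cost=200)  # the whole lost hour
savings = annual_savings(20, 1.0, 0.25, hourly_cost=200)   # 1 hour -> 15 minutes
print(f"{full_cost:,.0f} {savings:,.0f}")  # 1,000,000 750,000
```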

Additional metrics include shorter PR cycle times, lower build failure rates, and higher developer satisfaction. The 14-day trial gives enough data to calculate productivity gains and support a clear business case.

Conclusion: Remove the Bottleneck with Real Fixes

Free AI code review tools promise speed but often create friction that slows teams despite faster code generation. The 10 limitations described here, from shallow context and noisy feedback to security and scale issues, make them a poor fit for serious engineering organizations.

Teams need platforms that deliver autonomous fixes and integrate deeply with CI so issues resolve automatically instead of piling up as manual tasks. Healing engines that guarantee green builds provide real leverage, while suggestion-only tools add overhead.

Move from comments to commits and from suggestions to solutions. Install Gitar, automatically fix broken builds, and start shipping higher quality software faster during your 14-day Team Plan trial, then measure the productivity lift for your team.