Last updated: March 10, 2026

Key Takeaways

- AI-assisted code now accounts for a large share of commits, yet many teams still cite slow PR reviews as their main bottleneck.

- Amazon Q Developer works best in AWS environments with strong security scanning, but lacks autonomous auto-fix and broad platform support.

- Traditional tools like CodeRabbit and Greptile suggest fixes but leave the changes to developers, so teams pay $15-30 per developer monthly plus the labor to apply them.

- Gitar delivers platform-agnostic auto-fixes, CI validation, and measurable ROI, cutting daily CI and review time from 1 hour to 15 minutes per developer.

- Multi-platform teams that want working fixes instead of suggestions should choose Gitar and start a 14-day Team Plan trial to remove PR bottlenecks.

How to Evaluate AI Code Review Tools in 2026

Teams should evaluate AI code review tools against six concrete capabilities that separate real automation from basic assistance.

- Platform Integration: AWS-only coverage versus support for GitHub, GitLab, CircleCI, and Buildkite

- Fix Implementation: Suggestion-only comments versus autonomous auto-fix with CI validation

- Pricing Model: Per-seat fees ($15-30 per developer) versus full-featured trials without seat limits

- Notification Management: Noisy inline comment floods versus concise, single-comment updates

- Scope Coverage: Simple PR checks versus full CI healing, natural language rules, and workflow automation

- Trust Configuration: Manual approvals only versus configurable auto-commit and safety controls

These criteria separate tools that only flag issues from platforms that actually fix them. In 2026, the most effective tools ship autonomous fixes instead of leaving teams with manual cleanup work.
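
One way to make the six criteria above comparable across vendors is a simple checklist score. The sketch below is illustrative only: the `ToolProfile` fields and the equal weighting are assumptions made for this example, and the sample profile is a placeholder rather than a rating of any real product.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ToolProfile:
    """One flag per criterion; True means the stronger option in the list above."""
    multi_platform: bool             # Platform Integration
    auto_fix_with_ci: bool           # Fix Implementation
    trial_without_seat_limits: bool  # Pricing Model
    single_comment_updates: bool     # Notification Management
    full_ci_scope: bool              # Scope Coverage
    configurable_trust: bool         # Trust Configuration


def score(profile: ToolProfile) -> int:
    """Count how many of the six criteria a tool satisfies (equal weights assumed)."""
    return sum(bool(getattr(profile, f.name)) for f in fields(profile))


# Placeholder profile for a hypothetical tool under evaluation.
candidate = ToolProfile(
    multi_platform=True,
    auto_fix_with_ci=True,
    trial_without_seat_limits=False,
    single_comment_updates=True,
    full_ci_scope=False,
    configurable_trust=True,
)
print(f"{score(candidate)} of 6 criteria met")
```

Teams that care more about one criterion, such as fix implementation, could swap the equal weights for their own.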

Amazon Q Developer: Strong in AWS, Limited Beyond It

Amazon Q Developer delivers robust code assistance for teams building on AWS. The platform supports code review-related features in IDEs, including chat about code, inline suggestions, transformations, security scanning, and code optimizations across VSCode, JetBrains, Eclipse, and Visual Studio.

Its main strengths include deep integration with AWS services such as Lambda, DynamoDB, and CloudFormation. Security scan benchmarks show high precision (84.7%-88.2%) and recall (83.3%-100%) that outperform competitors on OWASP and CredData. Reported productivity gains include a 40% increase in development speed for teams like Deloitte.

Limitations appear once teams need flexibility beyond AWS. Amazon Q Developer remains primarily AWS-focused and is less effective in non-AWS or multi-cloud environments. The platform does not provide autonomous auto-fix or CI healing, which many teams now expect. It offers security scanning with suggested fixes and agent capabilities, but no explicit auto-fix that goes beyond them.

AWS-centric teams gain clear value from Amazon Q Developer. Organizations that need autonomous fixes across multiple platforms see better results from specialized healing platforms.

Leading AI Code Review Tools in 2026

Most leading AI code review tools in 2026 still focus on analysis and suggestions instead of full automation. CodeRabbit provides detailed PR comments, walkthrough summaries, combined LLM and linter analysis, and a chat interface. It uses LLM-based analysis to find bugs, security issues, and performance problems in PRs, with GitHub and GitLab integration.

Greptile and Qodo offer similar capabilities with rich codebase context and quality improvements. Tests on real PRs, including security vulnerabilities, show Qodo excels in correctness and security when configured properly. Ellipsis can auto-generate fixes from reviewer comments and verify them with tests, with multi-language support on GitHub.

A critical gap remains across these tools. They suggest fixes but rarely implement and validate them end to end. Snyk Code offers hybrid symbolic AI with autofix capabilities, yet many findings still require manual validation. Teams often pay $15-30 per developer each month for tools that still demand manual work to apply suggested changes.

Feature Comparison: Amazon Q, Suggestion Tools, and Gitar

| Feature | Amazon Q Developer | CodeRabbit/Greptile/Qodo | Gitar |
| --- | --- | --- | --- |
| PR Summaries | Yes | Yes | Yes |
| Auto-Apply Fixes | No | No | Yes |
| CI Auto-Fix/Validation | No | No | Yes |
| Platform Support | AWS-optimized | GitHub-focused | GitHub/GitLab/CircleCI/Buildkite |
| Green Build Guarantee | No | No | Yes |

This comparison highlights the gap between suggestion engines and healing platforms. Amazon Q Developer and traditional tools identify issues, while Gitar resolves them autonomously and validates the results in CI.

Why Gitar Delivers Higher Engineering Velocity

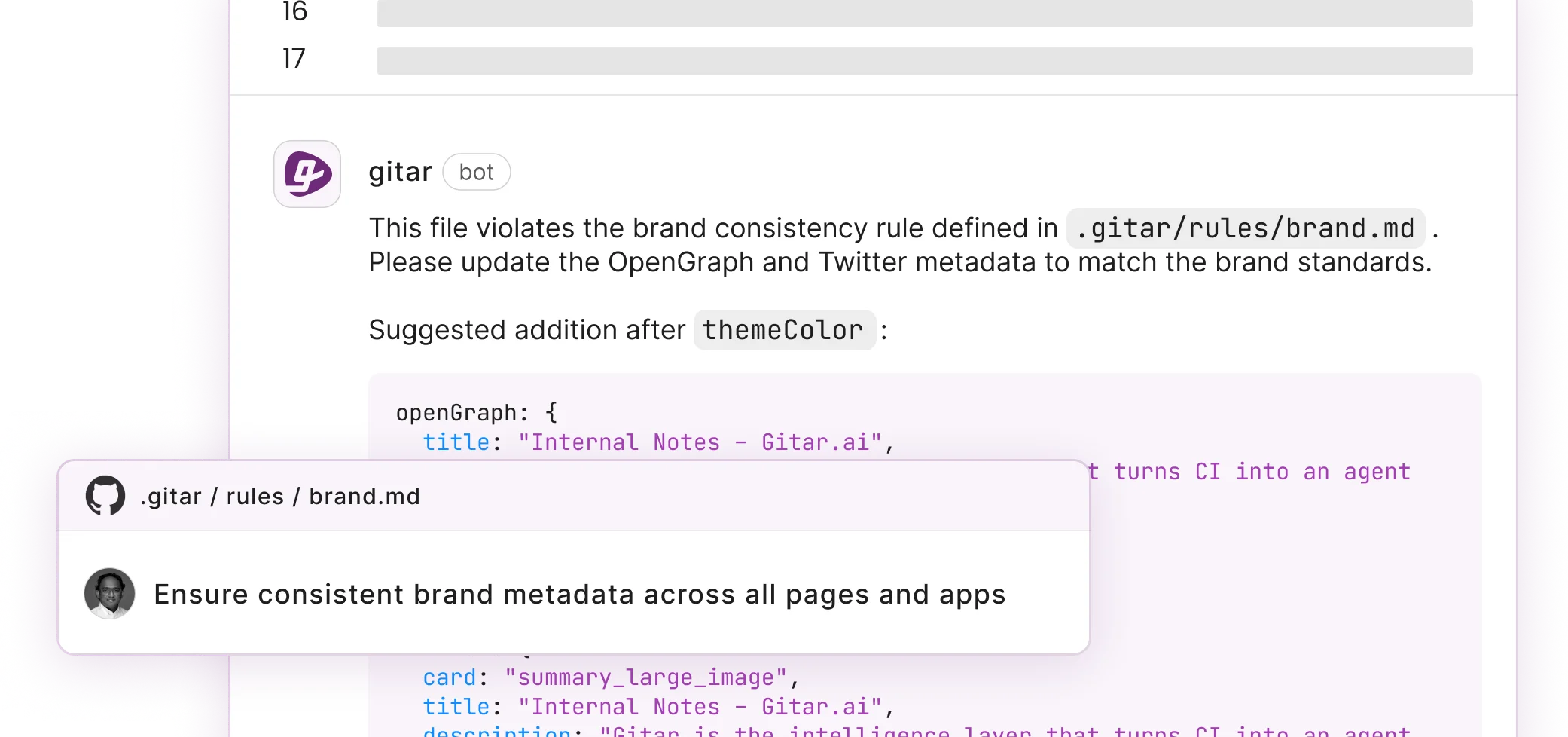

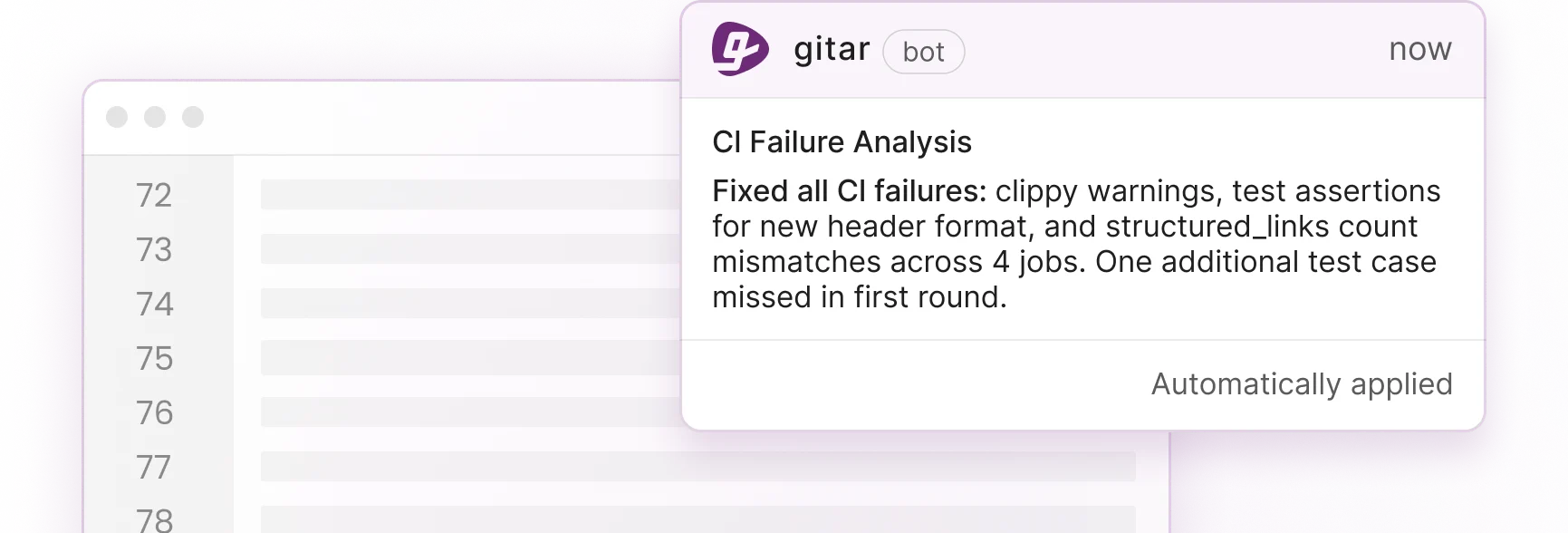

Gitar moves beyond suggestion-based code review and focuses on shipping working fixes. Its healing engine analyzes CI failures, generates validated fixes, and commits them automatically. When lint errors, test failures, or broken builds appear, the platform identifies and resolves them instead of only commenting on them. For a deeper walkthrough of this process, review the Gitar documentation.

The autonomous fix flow includes several clear steps. The engine analyzes failure logs to understand root cause, generates code changes with full codebase context, validates that fixes pass CI, and then commits them to PRs with a single consolidated update. This approach removes the notification spam that comes from tools that scatter dozens of inline comments across diffs.
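
The four steps above can be sketched as a small control loop. This is a minimal illustration, not Gitar's implementation: the `heal` function, the `FixResult` type, the callables passed in, and the retry limit are all hypothetical names and assumptions chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FixResult:
    committed: bool
    attempts: int
    summary: str


def heal(
    failure_log: str,
    diagnose: Callable[[str], str],       # failure log -> root cause
    propose_patch: Callable[[str], str],  # root cause -> candidate patch
    passes_ci: Callable[[str], bool],     # candidate patch -> CI verdict
    commit: Callable[[str, str], None],   # (patch, summary) -> commit to the PR
    max_attempts: int = 3,                # assumption: bounded retries
) -> FixResult:
    """Analyze, generate, validate, then commit with one consolidated summary."""
    root_cause = diagnose(failure_log)
    for attempt in range(1, max_attempts + 1):
        patch = propose_patch(root_cause)
        if passes_ci(patch):
            # Only validated patches are committed, with a single update message.
            summary = f"Fixed: {root_cause} (attempt {attempt})"
            commit(patch, summary)
            return FixResult(True, attempt, summary)
    # Nothing passed CI, so nothing is committed.
    return FixResult(False, max_attempts, f"No validated fix for: {root_cause}")
```

The key design point is the ordering: validation happens before the commit, so an unvalidated patch never reaches the PR.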

ROI metrics show the impact clearly.

| Metric | Before Gitar | After Gitar |
| --- | --- | --- |
| Daily CI/Review Time | 1 hour/developer | 15 minutes/developer |
| Annual Productivity Cost (20 devs) | $1M | $250K |
| Context Switching | Multiple/day | Near-zero |
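
The figures in the table are internally consistent if productivity cost scales linearly with time spent. The short calculation below checks that arithmetic; the linear-scaling assumption and the function names are this example's own, and the inputs are taken directly from the table.

```python
def time_reduction(before_min: float, after_min: float) -> float:
    """Fraction of daily CI/review time removed."""
    return (before_min - after_min) / before_min


def cost_after(cost_before: float, before_min: float, after_min: float) -> float:
    """Projected annual cost, assuming cost is proportional to time spent."""
    return cost_before * after_min / before_min


DEVELOPERS = 20
COST_BEFORE = 1_000_000  # annual productivity cost for 20 developers

reduction = time_reduction(60, 15)
projected = cost_after(COST_BEFORE, 60, 15)
savings = COST_BEFORE - projected

print(f"Time reduction: {reduction:.0%}")                          # 75%
print(f"Projected annual cost: ${projected:,.0f}")                 # $250,000
print(f"Annual savings: ${savings:,.0f}")                          # $750,000
print(f"Savings per developer: ${savings / DEVELOPERS:,.0f}")      # $37,500
```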

Gitar also supports natural language workflow rules, Jira context integration, and detailed analytics. Teams can define rules, such as a “Security Review” requirement for PRs that modify authentication code, without writing complex YAML. The platform learns team patterns and maintains hierarchical memory at the line, PR, and repository levels. Configuration details appear in the Gitar docs.

Real-world usage includes catching high-severity security vulnerabilities that Copilot missed and providing “unrelated PR failure detection” that separates infrastructure flakiness from genuine code bugs.

Start Gitar’s 14-day Team Plan trial to test full auto-fix capabilities with no seat limits during evaluation.

What Developers Say About Amazon Q and AI Review Tools

Developer conversations on forums highlight recurring pain points with current AI code review tools. Teams describe PR floods that overwhelm review capacity, AWS lock-in that restricts platform choice, and AI-generated bugs that require more review effort than human-written code. AI-generated PRs contained about 1.7 times as many issues overall, with 10.83 issues per PR versus 6.45 for human-only PRs.

Many teams feel frustrated paying premium prices for suggestion engines that cannot guarantee working fixes. Gitar addresses these concerns with unrelated failure detection, duplication analytics, and validated auto-fixes that remove the manual implementation burden common with traditional tools.

Best AI Code Review Automation Choices for 2026

Rankings for 2026 favor tools that deliver autonomous fixes and flexible platform support. Gitar leads for teams that need non-AWS flexibility and measurable ROI from actual code changes instead of comments. The platform offers zero-setup deployment, SOC2 compliance, and configurable trust levels so teams can begin in suggestion mode and gradually enable auto-commit for specific failure types.

Amazon Q Developer remains a strong option for AWS-focused teams but lacks the autonomous capabilities and platform-agnostic coverage that many modern workflows demand. Suggestion engines like CodeRabbit and Greptile still provide analysis value, yet their per-seat pricing becomes harder to justify without automated fix implementation.

Decision Framework for Selecting Your Tool

| Use Case | Recommended Tool | Rationale |
| --- | --- | --- |
| AWS-only environment | Amazon Q Developer | Deep AWS integration and strong security scanning |
| Multi-platform with auto-fixes | Gitar | Platform-agnostic healing engine with clear ROI |
| Basic PR analysis only | CodeRabbit/Greptile | Suggestion-focused capabilities with lower complexity |

Engineering leaders should remember that suggestion engines always require manual work to apply fixes. Healing platforms such as Gitar remove that overhead by implementing and validating changes. Teams that feel cautious about automated commits can begin in suggestion mode and expand automation as trust grows.

The key decision centers on whether your team needs suggestions or working solutions. In 2026, the main bottleneck has shifted from spotting problems to fixing them at scale. Install Gitar, automatically fix broken builds, and ship higher quality software faster with automation that goes far beyond suggestion-only tools.

Frequently Asked Questions

How does Amazon Q Developer compare to Gitar for multi-CI teams?

Amazon Q Developer performs best inside AWS ecosystems but does not fully support non-AWS CI platforms such as CircleCI, Buildkite, or GitLab CI. It offers valuable code assistance and security scanning for AWS-centric teams, yet it lacks autonomous fix capabilities and platform-agnostic coverage. Gitar supports GitHub, GitLab, CircleCI, and Buildkite with full CI healing. Amazon Q Developer suggests fixes, while Gitar implements and validates them automatically across supported platforms.

What is the ROI gap between suggestion tools and autonomous fix platforms?

Suggestion-based tools such as CodeRabbit and Greptile cost $15-30 per developer each month and still require manual implementation of suggested fixes. A 20-developer team spends $300-600 monthly on licenses plus ongoing labor to apply changes. Autonomous fix platforms remove that manual implementation cost. Teams report cutting daily CI and review time from 1 hour per developer to 15 minutes, which represents about $750K in annual productivity savings for a 20-developer team ($1M before versus $250K after). Tools that only suggest fixes cannot match that return.

Can AI code review tools handle CI failures that depend on environment context?

Most AI code review tools lack the CI context needed to diagnose and repair complex failures. They focus on code diffs and ignore build environments, dependency conflicts, and infrastructure issues. Gitar’s healing engine analyzes real CI failure logs, understands the full build context including SDK versions and dependencies, and generates fixes that work in production. It can distinguish between code bugs and infrastructure flakiness, which traditional review tools cannot do. This approach ensures that fixes resolve root causes instead of masking surface symptoms. Learn more in the Gitar documentation.

How do teams move from manual review to automated fixes?

Teams should build trust in automation step by step. Start in suggestion mode and review every proposed fix while tracking how the platform handles different failure types. After confidence grows, enable auto-commit for low-risk issues such as lint errors or formatting problems. Expand automation to more complex fixes as the team becomes comfortable with accuracy levels. Gitar offers configurable trust levels so teams can control how aggressive automation becomes while keeping oversight. Setup guidance appears in the Gitar documentation.
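
The staged rollout described above can be expressed as a small policy table. This is a sketch of the idea only: the stage numbers, failure-type labels, and `decide` function are hypothetical and do not represent Gitar's actual configuration format.

```python
from enum import Enum


class Action(Enum):
    SUGGEST = "suggest"          # a human reviews and applies the fix
    AUTO_COMMIT = "auto_commit"  # the fix is committed automatically


# Assumed stages: each maps to the failure types eligible for auto-commit.
ROLLOUT_STAGES = {
    0: frozenset(),                        # suggestion mode only
    1: frozenset({"lint", "formatting"}),  # low-risk fixes
    2: frozenset({"lint", "formatting", "test_failure", "build_failure"}),
}


def decide(failure_type: str, stage: int, touches_sensitive_path: bool = False) -> Action:
    """Pick an action for a proposed fix under the current rollout stage."""
    if touches_sensitive_path:
        # Assumption: security-sensitive changes always get human review.
        return Action.SUGGEST
    if failure_type in ROLLOUT_STAGES[stage]:
        return Action.AUTO_COMMIT
    return Action.SUGGEST


print(decide("lint", stage=0))          # Action.SUGGEST
print(decide("lint", stage=1))          # Action.AUTO_COMMIT
print(decide("test_failure", stage=1))  # Action.SUGGEST
```

Keeping the policy in one table makes it easy to audit which failure types are automated at each stage.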

What security practices matter for automated code fixes?

Automated fixes require strong security controls, especially in production environments. Teams should look for SOC2 Type II certification, configurable approval workflows, and the option to run agents inside their own infrastructure. Gitar’s Enterprise plan runs the agent inside your CI pipeline with full access to configs and secrets while keeping code inside your environment. The platform records audit trails for all automated changes and supports rollbacks. Teams should define clear policies for which fixes can be auto-committed and which require human review, especially for security-sensitive paths. Review these controls in the Gitar documentation.