Key Takeaways for CI Automation ROI

- PR volumes surged 29% YoY while review capacity stayed flat, shifting bottlenecks from coding to CI validation amid 84% AI tool adoption.

- Manual review caps each reviewer at about 50 test cases per day and introduces fatigue, while suggestion-only AI tools like CodeRabbit and Greptile leave every fix to developers, and roughly 70% of AI suggestions still need manual review and modification.

- Gitar delivers free unlimited code review, plus autofix for CI failures through a 14-day free trial. Cutting CI fix time from 1 hour per developer per day to 15 minutes saves about $750K a year for a 20-developer team.

- Comprehensive autofix outperforms suggestions by automatically implementing and validating fixes in CI environments, supporting GitHub, GitLab, and CircleCI at enterprise scale.

- Teams report 40–50% velocity gains with Gitar’s hybrid automation model, and you can install Gitar free today for green builds and faster shipping.

The New CI Bottleneck Created by AI-Accelerated Coding

AI-assisted coding increased output so quickly that CI review capacity became the new bottleneck. GitHub processed 43.2 million pull requests monthly in 2025, a 23% increase, while GitHub Copilot reached 15 million users with 51% faster coding speeds. Sprint velocities stayed flat because review and CI validation could not keep up with the new volume.

Manual review processes slow teams and introduce inconsistency. Human reviewers are limited to approximately 50 test cases per day and experience fatigue, distraction, and bias, which all reduce reliability. When CI failures occur, manual debugging and fixing can consume hours of developer time for each incident.

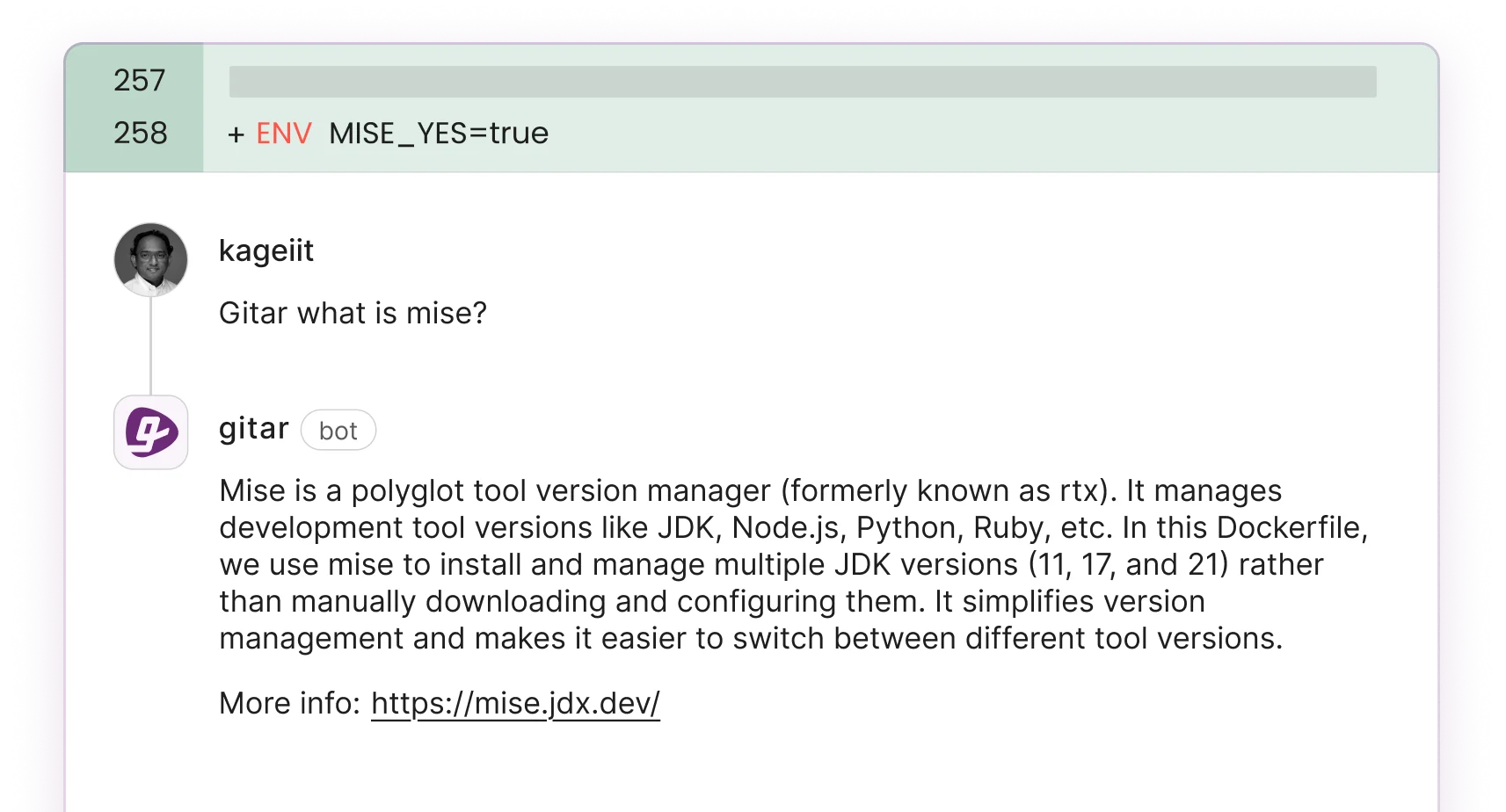

Suggestion-only AI tools try to relieve this pressure but add their own overhead. Only 30% of AI-suggested code gets accepted by developers, so 70% of suggestions still need manual review and modification. These tools generate streams of notifications and inline comments without applying fixes, which creates suggestion noise instead of resolved builds.

A hybrid approach that blends automation with targeted human review delivers better results. Gitar provides free comprehensive AI code review, with autofix features available through a 14-day free trial, and handles routine CI failures and review feedback automatically. Teams keep peer review focused on high-value architectural decisions and complex business logic while Gitar manages repetitive CI maintenance work.

ROI Breakdown: Manual Review, Suggestion AI, and Gitar

Capabilities differ sharply across manual review, suggestion-only tools, and Gitar:

| Feature | Manual | CodeRabbit/Greptile | Gitar |
| --- | --- | --- | --- |
| Inline Suggestions | N/A | Yes ($15-30/seat) | Yes (Free) |
| Autofix CI Failures | No | No | Yes (14-day free trial) |
| CI Validation/Guarantee | No | No | Yes |
| Seat Cost | Dev salary overhead | $15-30/seat/month | $0 unlimited |

ROI metrics highlight the real cost gap between these approaches:

| Metric | Manual | Suggestion AI | Gitar |
| --- | --- | --- | --- |
| CI fix time per dev (20-dev team) | 1 hr/day ($1M/yr) | 45 min/day | 15 min/day ($750K/yr savings) |
| Merge Speed | Days | Hours (manual implementation) | Minutes (autofix) |
| Tool Cost (20 devs) | $0 | $300-600/month | $0 |
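The savings figures in the table follow from proportional arithmetic. The sketch below assumes, as the table implies, that 1 hour per developer per day of CI fixing costs a 20-developer team about $1M a year and that cost scales linearly with time spent; the function name is illustrative and not part of any Gitar tooling.

```python
def annual_ci_savings(baseline_cost: float,
                      baseline_minutes: float,
                      new_minutes: float) -> float:
    """Yearly savings when daily CI-fix time drops from baseline_minutes
    to new_minutes, assuming cost scales linearly with time spent.

    baseline_cost is the team's yearly cost at baseline_minutes/day/dev
    (the table uses ~$1M/yr for 1 hr/day across 20 developers)."""
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    remaining_fraction = new_minutes / baseline_minutes
    return baseline_cost * (1 - remaining_fraction)


BASELINE_COST = 1_000_000  # assumption taken from the table: 20 devs x 1 hr/day
for label, minutes in [("Suggestion AI", 45), ("Gitar", 15)]:
    saved = annual_ci_savings(BASELINE_COST, 60, minutes)
    print(f"{label}: ${saved:,.0f}/yr saved")
```

The same formula puts the 45-minute suggestion-AI row at about $250K a year. If you assume roughly 250 working days, the $1M baseline implies about $200 per developer-hour; substitute your own loaded rate and baseline.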

Manual processes provide deep contextual understanding but scale poorly and create persistent bottlenecks. Suggestion-only AI tools like CodeRabbit and Greptile analyze code faster but still depend on developers to implement every fix. Greptile caught 82% of test issues versus CodeRabbit’s 44%, yet both still leave implementation work to the team.

Gitar’s healing engine automatically fixes CI failures, validates solutions in the actual CI environment, and commits working code. This closes the gap between suggestions and implementation that slows other tools, while also delivering full code review capabilities at no cost.
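The fix, validate, commit sequence can be illustrated as a gated loop. This is a minimal sketch of the general pattern, not Gitar's implementation: `heal`, `Patch`, and the three callbacks are hypothetical stand-ins for a fix generator, a CI run, and a git commit. The property it demonstrates is that nothing is committed until CI reports green.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Patch:
    """A candidate fix (hypothetical structure)."""
    description: str
    diff: str


def heal(
    failure_log: str,
    propose_fix: Callable[[str, int], Optional[Patch]],
    run_ci: Callable[[Patch], bool],
    commit: Callable[[Patch], None],
    max_attempts: int = 3,
) -> Optional[Patch]:
    """Commit a proposed patch only after CI reports it green.

    Retries up to max_attempts; returns None when no candidate passes,
    leaving the failure for a human to handle."""
    for attempt in range(1, max_attempts + 1):
        patch = propose_fix(failure_log, attempt)
        if patch is None:
            break  # no candidate left to try: escalate to a human
        if run_ci(patch):  # validate in CI before committing anything
            commit(patch)
            return patch
    return None


# Usage with stub callbacks (hypothetical): the second candidate passes CI.
committed: List[Patch] = []
result = heal(
    failure_log="FAILED tests/test_api.py::test_login",
    propose_fix=lambda log, n: Patch(f"candidate {n}", "<diff>"),
    run_ci=lambda patch: patch.description == "candidate 2",
    commit=committed.append,
)
print(result.description if result else "escalate to human")
```

Passing the callbacks in keeps the control flow testable without a real CI system; one natural extension is to feed each failed attempt's CI output back into the next proposal.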

Install Gitar now to automatically fix broken builds and ship higher-quality software faster

Why Manual Review and Suggestion-Only Tools Fall Short

Manual code review hits hard scalability limits as PR volume grows. Human reviewers are limited to approximately 50 cases per day, and their thoroughness drops as fatigue and cognitive load increase. Manual regression testing scales linearly in cost without matching value, which makes it unsustainable for teams facing AI-driven growth in pull requests.
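Why flat review capacity breaks down under rising volume can be seen in a simple queue model. The sketch assumes each reviewer clears a fixed number of items per day (the article cites about 50 test cases) and that any excess carries into the next day; the team size and daily volumes in the example are hypothetical.

```python
from typing import List


def review_backlog(daily_arrivals: List[int], reviewers: int,
                   per_reviewer_capacity: int) -> List[int]:
    """End-of-day backlog of unreviewed items when review capacity is fixed.

    Each day adds new items and clears at most reviewers * capacity;
    anything left over carries into the next day."""
    capacity = reviewers * per_reviewer_capacity
    backlog = 0
    history = []
    for arrivals in daily_arrivals:
        backlog = max(0, backlog + arrivals - capacity)
        history.append(backlog)
    return history


# 4 reviewers x 50 items/day = 200/day of capacity. Volume jumps ~23%
# (180 -> 221 items/day) while headcount stays flat.
print(review_backlog([180, 221, 221, 221, 221], reviewers=4, per_reviewer_capacity=50))
# [0, 21, 42, 63, 84]
```

Once arrivals exceed capacity, the backlog grows every day by the difference; only added capacity or automation that removes items from the queue reverses it.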

Suggestion-only AI tools introduce a different friction point. With only about 30% of AI-generated code accepted, these tools create heavy notification overhead while still demanding manual implementation. Developers must read suggestions, apply them by hand, push new commits, and wait to see whether fixes pass CI, which adds steps instead of removing them.

The absence of CI context in suggestion-only tools prevents them from validating whether proposed fixes actually work in the target environment. Developers then need to double-check every suggestion, which erodes trust and reduces the real efficiency gains.

Hybrid models show the highest ROI by pairing automated fixes for routine issues with human oversight for complex decisions. This structure improves both speed and code quality while avoiding the notification fatigue that suggestion-only tools often create.

Choosing the Right CI Automation Strategy for Your Team

Team profile and constraints should guide your CI automation choice:

| Team Profile | Recommended | Why Gitar Wins |
| --- | --- | --- |
| Small teams (<20 devs), budget-conscious | Gitar | Free unlimited usage, 50%+ velocity gains |
| Enterprise, complex CI environments | Gitar | Scalable autofix, multi-CI platform support |
| High-security environments | Gitar Enterprise | Agent runs in your CI pipeline, SOC 2 Type II, ISO 27001 certified |

Total cost of ownership analysis shows large gaps between suggestion-only tools and full automation. At $15-30 per seat, competitors charge $300-600 monthly for a 20-developer team while offering only suggestions. Organizations using automation report 40% faster deployment cycles and up to 50% lower development costs from reduced manual intervention.

Gitar removes both direct tool costs and productivity losses from manual CI maintenance. The platform delivers compound ROI through free access and automated fixes that consistently drive green builds.

Conclusion: Gitar Delivers Higher CI Automation ROI

Evidence across cost, time, and quality favors comprehensive automation over manual processes and suggestion-only tools. Gitar provides free code review capabilities that match or exceed paid alternatives, then adds autofix functionality that resolves CI failures instead of just flagging them. For teams overwhelmed by AI-driven PR volume, Gitar turns incremental efficiency gains into a step-change improvement in delivery speed.


FAQ: CI Pipeline Automation ROI and Tool Comparisons

What is the difference between manual and automated code review?

Manual code review relies on human reviewers who examine code line by line, which provides deep context but creates bottlenecks as PR volumes grow. Automated code review uses AI to scan for code patterns, security risks, and compliance issues at scale.

The main difference appears during implementation, because manual review requires human action for every fix, while automation like Gitar’s healing engine applies and validates solutions automatically. Manual review works best for architectural decisions and business logic, while automated systems handle routine issues such as lint errors, test failures, and dependency conflicts more efficiently.
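The division of labor described above amounts to a routing rule. A minimal sketch, assuming issues arrive already labeled by category: the category strings and the `touches_architecture` flag are hypothetical, chosen to mirror the routine issues named above (lint errors, test failures, dependency conflicts).

```python
from enum import Enum


class Route(Enum):
    AUTOFIX = "autofix"
    HUMAN_REVIEW = "human_review"


# Hypothetical labels for routine, mechanical CI issues.
ROUTINE_CATEGORIES = frozenset({"lint_error", "test_failure", "dependency_conflict"})


def route(category: str, touches_architecture: bool = False) -> Route:
    """Send routine issues to autofix; anything else, or anything that
    touches architecture, goes to a human reviewer."""
    if touches_architecture or category not in ROUTINE_CATEGORIES:
        return Route.HUMAN_REVIEW
    return Route.AUTOFIX


for category in ["lint_error", "dependency_conflict", "business_logic"]:
    print(category, "->", route(category).value)
```

Defaulting unknown categories to human review keeps the automation conservative: a new kind of failure reaches a person until someone deliberately adds it to the routine set.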

Will ROI be higher with automation or manual testing?

Automation delivers higher ROI for routine CI pipeline tasks. Organizations using automated CI/CD pipelines report 40% faster deployment cycles and up to 50% reductions in development costs. The advantage comes from removing repetitive manual work, reducing human error, and enabling continuous operation.

Hybrid approaches that automate routine fixes while keeping humans involved for complex decisions usually deliver the strongest ROI. For a 20-developer team spending about 1 hour per developer per day on CI fixes, manual handling costs roughly $1 million per year in developer time; automating that down to 15 minutes a day saves about $750,000 annually.

How do AI code review and manual peer review compare on cost?

AI code review tools range from free, as with Gitar, to $15-30 per developer monthly for tools like CodeRabbit and Greptile. Manual peer review costs appear in developer salaries and lost productivity rather than direct invoices.

The real cost difference shows up in implementation speed, because AI autofix tools resolve issues in minutes, while manual processes can take hours or days. Manual peer review offers stronger context for architectural choices but becomes expensive for routine maintenance. Teams reach better cost efficiency by using free AI tools for automated fixes and reserving manual review for high-impact decisions.

How does Gitar ROI compare to CodeRabbit?

Gitar delivers stronger ROI by offering free unlimited code review plus autofix capabilities through a 14-day free trial, while CodeRabbit charges $15-30 per seat for suggestion-only features. Gitar’s autofix model removes the manual implementation step that CodeRabbit still requires, which cuts time-to-resolution from hours to minutes.

CodeRabbit users continue to spend time reading suggestions, applying changes manually, and validating fixes, while Gitar automatically implements and validates solutions. For a 20-developer team, this difference can save $300-600 monthly on tool costs and unlock much larger productivity gains from automated fix implementation.

How do I measure CI pipeline automation ROI?

Teams can measure CI automation ROI with a focused metric set. Track developer time spent on CI failures, build retry costs, merge-to-deployment cycle time, and the number of manual interventions. Capture baseline values such as average CI issue time per developer per day, then compare after automation.

Successful rollouts often show 60–75% reductions in CI-related developer time, 40% faster deployment cycles, and fewer production incidents. Calculate total cost, including tool fees, setup time, and ongoing maintenance, then compare those costs against productivity gains and lower operational overhead to determine true ROI.
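The before-and-after comparison can be reduced to a small calculation. This sketch assumes you have measured average CI-fix minutes per developer per day before and after rollout; the hourly rate, working days, and cost inputs in the example are hypothetical placeholders, not benchmark data.

```python
from typing import Tuple


def ci_automation_roi(
    baseline_minutes: float,   # CI-fix minutes per developer per day, before
    after_minutes: float,      # same metric after automation
    devs: int,
    working_days: int,
    hourly_rate: float,        # loaded cost per developer-hour
    tool_fees: float,          # yearly tool fees
    setup_cost: float,         # one-time rollout effort, in dollars
    maintenance_cost: float,   # yearly upkeep, in dollars
) -> Tuple[float, float, float]:
    """Return (time_reduction, net_gain, roi) for one year.

    roi is net gain divided by total cost; it is infinite when total
    cost is zero."""
    time_reduction = 1 - after_minutes / baseline_minutes
    hours_saved = (baseline_minutes - after_minutes) * devs * working_days / 60
    gain = hours_saved * hourly_rate
    total_cost = tool_fees + setup_cost + maintenance_cost
    net_gain = gain - total_cost
    roi = net_gain / total_cost if total_cost else float("inf")
    return time_reduction, net_gain, roi


# Hypothetical inputs: 60 -> 15 min/day, 20 devs, 250 days, $200/hr,
# no tool fees, $5,000 setup and $5,000 maintenance.
reduction, net, roi = ci_automation_roi(60, 15, 20, 250, 200, 0, 5_000, 5_000)
print(f"time cut {reduction:.0%}, net gain ${net:,.0f}, ROI {roi:.0f}x")
# time cut 75%, net gain $740,000, ROI 74x
```

A 60-to-15-minute drop is a 75% reduction, the top of the range cited above. Setup and maintenance dominate the cost side here because the tool fee is set to zero; with a paid tool, add its yearly seat cost to `tool_fees`.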